Mira Network begins with a simple but stubborn question: what if information had to prove itself before anyone trusted it?

The idea sounds obvious at first. Of course information should be checked. Of course facts should be verified. But the reality of the internet tells a different story. Most things move too quickly to be questioned. A confident sentence appears, people repeat it, and suddenly it becomes part of the background noise of the digital world.

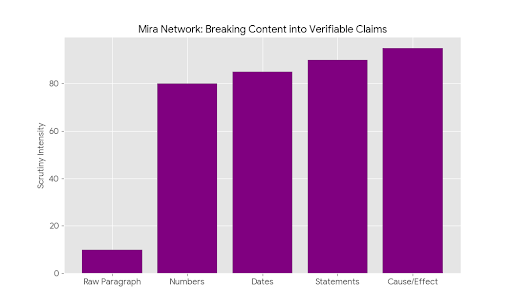

Mira approaches that problem in an unusual way. Instead of treating a piece of content as a single answer, it breaks it apart. A paragraph might look like one idea, but hidden inside it are several smaller claims. A number here. A statement about cause and effect there. A reference to a date or an event somewhere in the middle. Each of those claims becomes something that can be checked on its own.

Those small pieces are then sent across a distributed network where different participants examine them independently. No single authority decides what is correct. Instead, many validators review the same claim and reach their own conclusions. When enough of them agree, the claim is accepted. When they don’t, the uncertainty becomes visible rather than hidden behind confident language.
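To make the flow above more concrete, here is a minimal Python sketch of "split into claims, check each one independently, accept only on agreement." Everything in it is an assumption for illustration, not Mira's actual protocol or API. The helpers `split_into_claims`, `verify_claim` and `verify_text`, the verdict labels, and the two-thirds `threshold` are hypothetical. Claim extraction is reduced to naive sentence splitting, and each validator is just a Python function.

```python
import re
from collections import Counter
from dataclasses import dataclass
from typing import Callable, List, Sequence

# Hypothetical verdict labels chosen for this sketch.
VALID, INVALID, UNCERTAIN = "valid", "invalid", "uncertain"

# A validator is modeled as any function that judges a single claim.
Validator = Callable[[str], str]


@dataclass
class ClaimResult:
    claim: str
    verdict: str      # VALID, INVALID, or UNCERTAIN
    agreement: float  # share of validators that backed the top verdict


def split_into_claims(text: str) -> List[str]:
    """Crude stand-in for claim extraction: one sentence = one claim."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def verify_claim(claim: str, validators: Sequence[Validator],
                 threshold: float = 2 / 3) -> ClaimResult:
    """Ask every validator independently, then accept only a supermajority."""
    if not validators:
        raise ValueError("need at least one validator")
    votes = Counter(judge(claim) for judge in validators)
    verdict, count = votes.most_common(1)[0]
    agreement = count / len(validators)
    if agreement >= threshold:
        return ClaimResult(claim, verdict, agreement)
    # No supermajority: surface the disagreement instead of hiding it.
    return ClaimResult(claim, UNCERTAIN, agreement)


def verify_text(text: str, validators: Sequence[Validator]) -> List[ClaimResult]:
    """Break a passage into claims and verify each claim on its own."""
    return [verify_claim(c, validators) for c in split_into_claims(text)]


# Toy validators for the usage example (hypothetical).
KNOWN = {"Water boils at 100 C at sea level."}


def careful(claim: str) -> str:
    return VALID if claim in KNOWN else INVALID


def careless(claim: str) -> str:
    return VALID  # approves everything without checking


text = "Water boils at 100 C at sea level. The Moon is made of cheese."
for r in verify_text(text, [careful, careful, careless]):
    print(r.verdict, round(r.agreement, 2), r.claim)
```

With two careful validators and one careless one, the false claim is still rejected two votes to one. With only `[careful, careless]`, the same claim comes back `uncertain`, which is the "visible uncertainty" described above rather than a confident wrong answer.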

What makes the system interesting is that accuracy isn’t just encouraged—it’s economically rewarded. Validators have incentives to review claims carefully because careless verification carries consequences. In a strange way, honesty becomes part of the structure rather than something we simply hope people practice.
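One common way to make careless verification costly is to have validators lock up a stake that can be partly taken away. The sketch below assumes that model. The `Node` type, the `settle` function, and the `reward` and `slash_rate` values are hypothetical illustrations, not Mira's published economics. Validators whose vote matched the consensus verdict earn a fixed reward, and those that deviated lose a fraction of their stake.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Node:
    name: str
    stake: float  # tokens the validator has locked up (hypothetical model)


def settle(nodes: List[Node], votes: Dict[str, str], consensus: Optional[str],
           reward: float = 1.0, slash_rate: float = 0.10) -> None:
    """Reward validators that matched consensus; slash stake from those that did not.

    reward and slash_rate are illustrative numbers, not real protocol parameters.
    """
    if consensus is None:
        return  # no settled verdict, so nobody is paid or penalized
    for node in nodes:
        if votes[node.name] == consensus:
            node.stake += reward
        else:
            # Lose a proportional share, so larger stakes have more at risk.
            node.stake -= node.stake * slash_rate


nodes = [Node("a", 100.0), Node("b", 100.0), Node("c", 100.0)]
settle(nodes, {"a": "valid", "b": "valid", "c": "invalid"}, consensus="valid")
print([(n.name, n.stake) for n in nodes])
```

Here `a` and `b` each gain one unit while `c` loses 10% of its stake. One trade-off worth keeping in mind with any scheme like this: tying rewards to agreement with the majority can also penalize an honest minority, so the threshold and penalty sizes matter as much as the idea itself.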

The need for something like this becomes obvious once you notice how easily information spreads when it merely sounds believable.

A few months ago, a friend of mine was helping his younger brother prepare for a school presentation. The kid had found a detailed explanation online about a historical event. It looked impressive—statistics, timelines, quotes. The sort of page that feels authoritative the moment you read it.

But something about it felt slightly off.

They dug a little deeper and eventually traced the claims back through several sources. Somewhere along the chain, a small error had slipped in. One number had been copied incorrectly. Another site repeated it. Then another. Within a few years the incorrect version had quietly become the most common version.

The strange thing about misinformation isn’t always deception. Sometimes it’s just repetition.

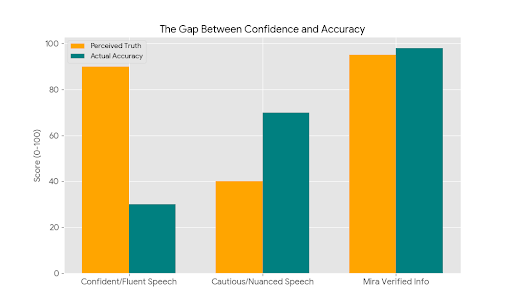

Humans are surprisingly vulnerable to confident language. When something reads smoothly, when the explanation flows easily, our brains tend to interpret that fluency as truth. Psychologists have long observed this pattern: clarity often feels like evidence even when it isn’t.

You can see it happen in everyday conversations. Someone explains something with total certainty and people nod along. Another person speaks more cautiously, mentioning uncertainty or nuance, and suddenly they sound less convincing even if they’re closer to the truth.

Confidence performs well. Verification works quietly.

That imbalance is part of what Mira tries to address. Instead of allowing a polished answer to move forward untouched, the system slows things down just enough for claims to pass through multiple independent checks. Each validator examines a fragment of the information rather than the entire narrative. The result is something closer to collective scrutiny than a single judgment.

It reminds me of a small moment from years ago at a café where a group of us used to meet regularly. Two friends once got into a long argument about a famous chess match. One insisted a particular move had happened in the fifth game of a tournament. The other swore it happened in the sixth.

Neither would give in.

Eventually someone found an old tournament record online. We went through the games one by one until we located the move. It turned out the move existed, but not in either game they had argued about. It appeared in a completely different match from the same event.

The argument dissolved immediately.

What ended the debate wasn’t authority or ego. It was the simple act of multiple people checking the same claim from different angles.

In a way, Mira tries to recreate that moment at scale. Instead of a room full of friends searching through records, there’s a distributed network examining claims piece by piece, building agreement slowly rather than accepting a statement at face value.

What’s interesting is how invisible this kind of infrastructure becomes when it works well. People rarely think about the systems that make reliable information possible. Power grids, water systems, internet routing protocols: most of them operate quietly in the background.

Verification may eventually become the same kind of invisible layer.

We’ve spent decades building systems that produce information faster than ever before. Now the harder challenge is building systems that help us trust what we read. Not by relying on a single authority, but by creating a process where claims survive scrutiny from many independent observers.

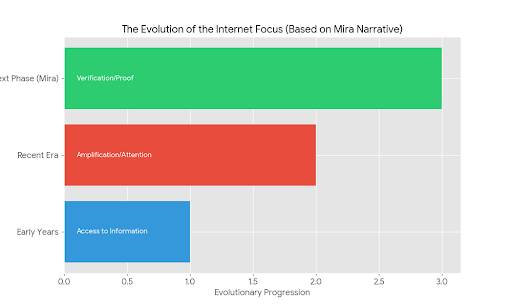

It’s a subtle shift, but an important one. The internet’s early years were about access to information. The phase that followed was about amplification, where whatever spread fastest won attention. The next phase may be something quieter: information that carries proof of how it was examined.

And maybe that’s the real significance of projects like Mira. Not just the technology itself, but the small cultural change it suggests.

In a world filled with confident answers, the most valuable thing might simply be a system that pauses long enough to ask, very carefully:

Are we actually sure about this?