I've been spending time lately looking at how automation works in crypto: not the flashy end of it, but the plumbing. The bots, the scripts, the on-chain logic that runs quietly in the background of most DeFi activity.

The dominant pattern is pretty straightforward: a trigger appears, a bot reacts, a transaction executes. Price hits a threshold, liquidity shifts. A reward unlocks, a script claims it. The chain records the result. There's an elegance to it: clean cause and effect, minimal moving parts.
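To make that pattern concrete, here is a minimal Python sketch of a trigger-and-execute bot. Everything in it is hypothetical: `Transaction`, `run_direct_bot`, and the in-memory `ledger` standing in for the chain are names I made up for illustration, not any real bot framework or protocol API.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List


@dataclass
class Transaction:
    """Hypothetical stand-in for an on-chain transaction."""
    action: str
    trigger_price: float


def run_direct_bot(
    prices: Iterable[float],
    threshold: float,
    submit: Callable[[Transaction], None],
) -> None:
    """Submit a transaction the moment a price reaches the threshold.

    There is no stage between the trigger and the submission:
    the condition fires and the action goes out.
    """
    for price in prices:
        if price >= threshold:
            submit(Transaction(action="rebalance", trigger_price=price))


# A plain list plays the role of the chain (an assumption for illustration).
ledger: List[Transaction] = []
run_direct_bot([98.0, 101.5, 99.0, 103.2], threshold=100.0, submit=ledger.append)
print(len(ledger))
```

The point of the sketch is how little there is: one condition, one action, and nothing in between that could second-guess the decision.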

Most of the time, that's probably fine. For simple, repeatable tasks, immediate execution makes sense. You don't need layers of oversight when the operation is deterministic and the stakes are bounded.

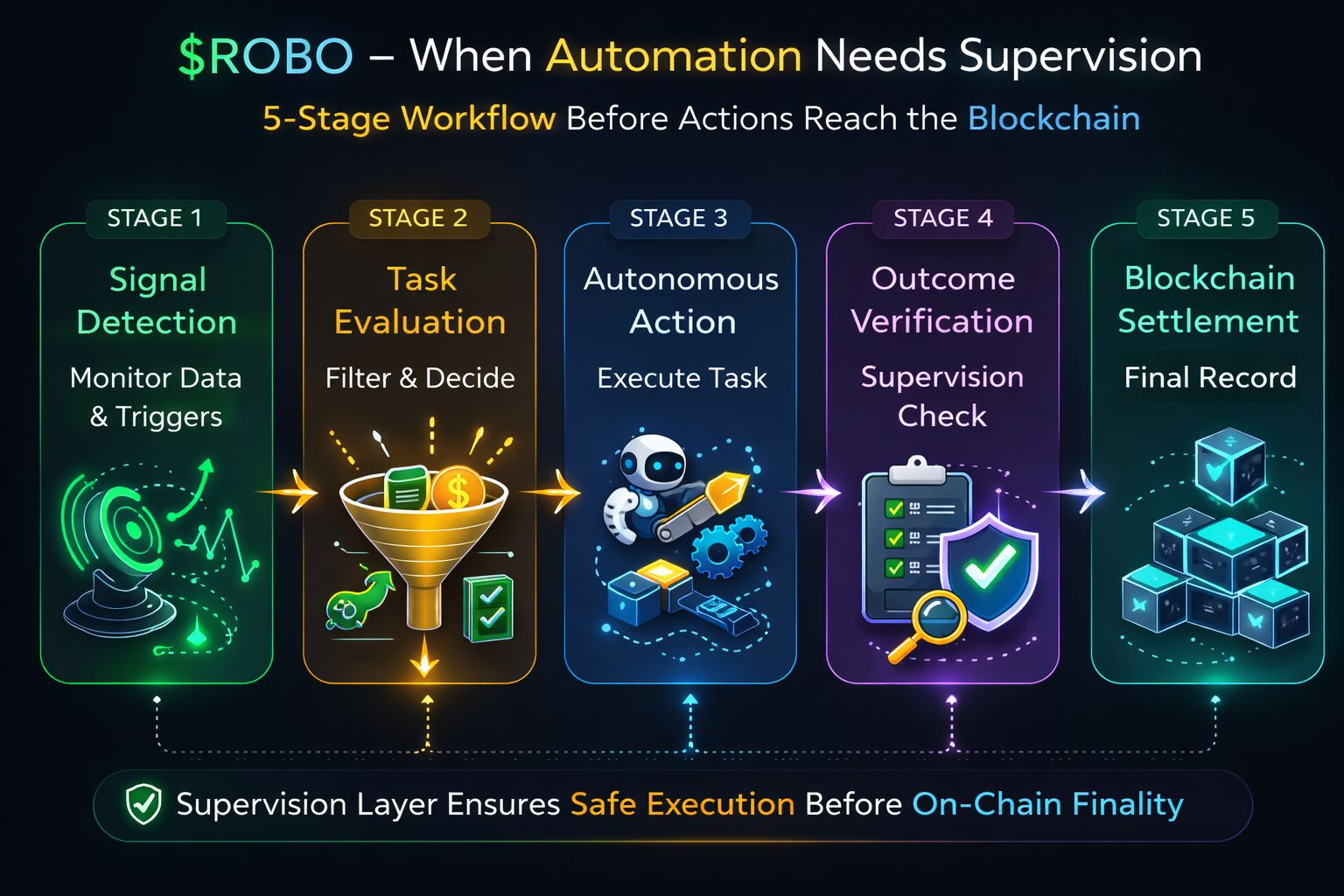

But I've been looking at some system diagrams related to something called Fabric Protocol, specifically the architecture they refer to as ROBO, and the structure there feels noticeably different. I want to be careful about how I describe it, because I'm working from diagrams and publicly available material rather than direct knowledge of the implementation. Still, what I think I'm seeing is worth thinking through.

Rather than a direct path from signal to transaction, the workflow appears to move through distinct stages: signal detection, task evaluation, execution, verification, and only then settlement. On paper that might look like any other software pipeline; most systems have internal logic before they act. But the distinction that caught my attention is where each stage sits relative to the blockchain itself.
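Here is how I picture those five stages, as a rough Python sketch. To be clear, this is my own reading of the diagrams and not Fabric's implementation: every name (`detect`, `evaluate`, `execute`, `verify`, `run_pipeline`), the 10% sizing rule, and the `max_amount` limit are assumptions I invented to show the shape of the flow.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Signal:
    value: float


@dataclass
class Task:
    action: str
    amount: float


@dataclass
class Result:
    task: Task
    output: float


def detect(value: float, threshold: float) -> Optional[Signal]:
    """Stage 1: signal detection."""
    return Signal(value) if value >= threshold else None


def evaluate(signal: Signal) -> Task:
    """Stage 2: task evaluation (the 10% sizing rule is made up)."""
    return Task(action="rebalance", amount=signal.value * 0.1)


def execute(task: Task) -> Result:
    """Stage 3: execution, producing a result that is not yet committed."""
    return Result(task=task, output=task.amount)


def verify(result: Result, max_amount: float) -> bool:
    """Stage 4: verification against a hypothetical size limit."""
    return result.output <= max_amount


def run_pipeline(
    value: float, threshold: float, max_amount: float, ledger: List[Result]
) -> str:
    signal = detect(value, threshold)
    if signal is None:
        return "no_signal"
    result = execute(evaluate(signal))
    if not verify(result, max_amount):
        return "held"  # nothing reaches the ledger
    ledger.append(result)  # Stage 5: settlement
    return "settled"


ledger: List[Result] = []
outcomes = [run_pipeline(v, 100.0, 12.0, ledger) for v in (95.0, 105.0, 150.0)]
print(outcomes, len(ledger))
```

What matters in the sketch is the ordering: the only line that touches the ledger sits after `verify`, so a held action never becomes part of the record.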

In a typical automation setup, the chain is where the outcome first becomes real. The decision happens off-chain, the transaction fires, and the network confirms it. Verification, in that model, means the protocol checking that the transaction is valid, not that it was appropriate.

What the ROBO architecture seems to propose is a layer that sits before settlement and asks a different kind of question. Not "is this transaction valid?" but something closer to "does this transaction still reflect what the system actually intended?"
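The two questions can be written as two separate checks. The Python sketch below is only my guess at what the difference might look like; I don't know how ROBO's verification works, and `PlannedAction`, `is_valid`, `reflects_intent`, and the price-drift tolerance are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class PlannedAction:
    amount: float
    price_at_decision: float  # what the system saw when it decided
    max_drift: float          # tolerated relative price change, e.g. 0.02 = 2%


def is_valid(action: PlannedAction, balance: float) -> bool:
    """Validity: the action is well-formed and affordable.

    This is roughly the kind of question a chain answers on its own.
    """
    return 0 < action.amount <= balance


def reflects_intent(action: PlannedAction, current_price: float) -> bool:
    """Intent: conditions are still close to those the decision assumed."""
    drift = abs(current_price - action.price_at_decision) / action.price_at_decision
    return drift <= action.max_drift


action = PlannedAction(amount=50.0, price_at_decision=100.0, max_drift=0.02)

print(is_valid(action, balance=200.0))               # affordable and well-formed
print(reflects_intent(action, current_price=101.0))  # 1% drift, within tolerance
print(reflects_intent(action, current_price=110.0))  # 10% drift, outside tolerance
```

In the last call the action would still pass `is_valid`, which is the whole point: a transaction can be perfectly valid and no longer be what the system meant to do.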

I find that distinction genuinely interesting, though I hold it loosely. I don't know exactly how those verification checks work in practice, or how much real discretion they have. It's possible the layer is more procedural than substantive. But even the structural commitment to having a checkpoint there, before the chain commits the result, suggests a different philosophy about what automation should look like as it gets more complex.

The reason I keep thinking about this is that simple automation and complex automation feel like qualitatively different problems. A script that monitors a single price feed and executes one type of transaction is fairly easy to reason about. When something goes wrong, the failure mode is usually visible. But autonomous systems increasingly operate across multiple protocols, respond to layered conditions, and make sequences of decisions where each step depends on assumptions that might not hold by the time execution happens.

In that environment, immediate execution starts to feel fragile in a way it didn't before. A corrupted data feed. Network congestion that shifts conditions between decision and settlement. An edge case the original logic didn't anticipate. When there's no pause between trigger and transaction, there's also no moment for the system to notice that something has changed.

That's the argument for what I'd loosely call supervisory friction: not delays for their own sake, but checkpoints that give the system a chance to confirm that the action still makes sense before it becomes irreversible.

There are real trade-offs here, and I don't want to paper over them. Every additional stage adds latency and complexity. In strategies where speed is the primary variable (arbitrage being the obvious case), any meaningful pause is probably unacceptable. There's also a harder question about governance: if a verification layer has the authority to hold or cancel an action, who defined the rules it's using? Centralizing that kind of logic in a supervisory component could quietly undermine the decentralized properties that make blockchain interaction meaningful in the first place. That tension doesn't have an obvious resolution.

So I'm not reading this architecture as a solution to anything, exactly. More as an experiment with a particular set of priorities, one that trades some execution speed for something resembling operational oversight.

Whether that trade makes sense probably depends on what the system is being asked to do. For complex, high-stakes, multi-step agent behavior, the case for supervision seems reasonable. For simpler tasks where speed matters, it probably isn't worth the overhead.

What lingers for me is the broader question the design is implicitly responding to. If decentralized systems eventually host large numbers of autonomous agents running simultaneously (agents that interact with each other, adapt to changing conditions, and manage meaningful financial decisions), the limiting factor probably won't be execution speed. It'll be the capacity to catch mistakes before they compound.

I don't know if Fabric's approach is the right answer to that problem. I'm not sure anyone knows yet what the right answer looks like. But the question feels worth sitting with, and this architecture at least seems to be taking it seriously.