It’s 5:00 AM, I’m on my third coffee, and I’m staring at a node that looks perfect on paper but just got flagged by the Fabric verifier for replay signals. There’s something strangely satisfying about that—like finally seeing the crack in a piece of polished marble. It confirms you weren't just being cynical; the red flags were real.

After living through enough of these cycles, you realize fraud in robot networks usually isn't happening inside the robot itself; it’s in the proof layer. When logs are tied to rewards, those logs become a commodity. And anywhere there’s a commodity, someone is going to try to counterfeit it.

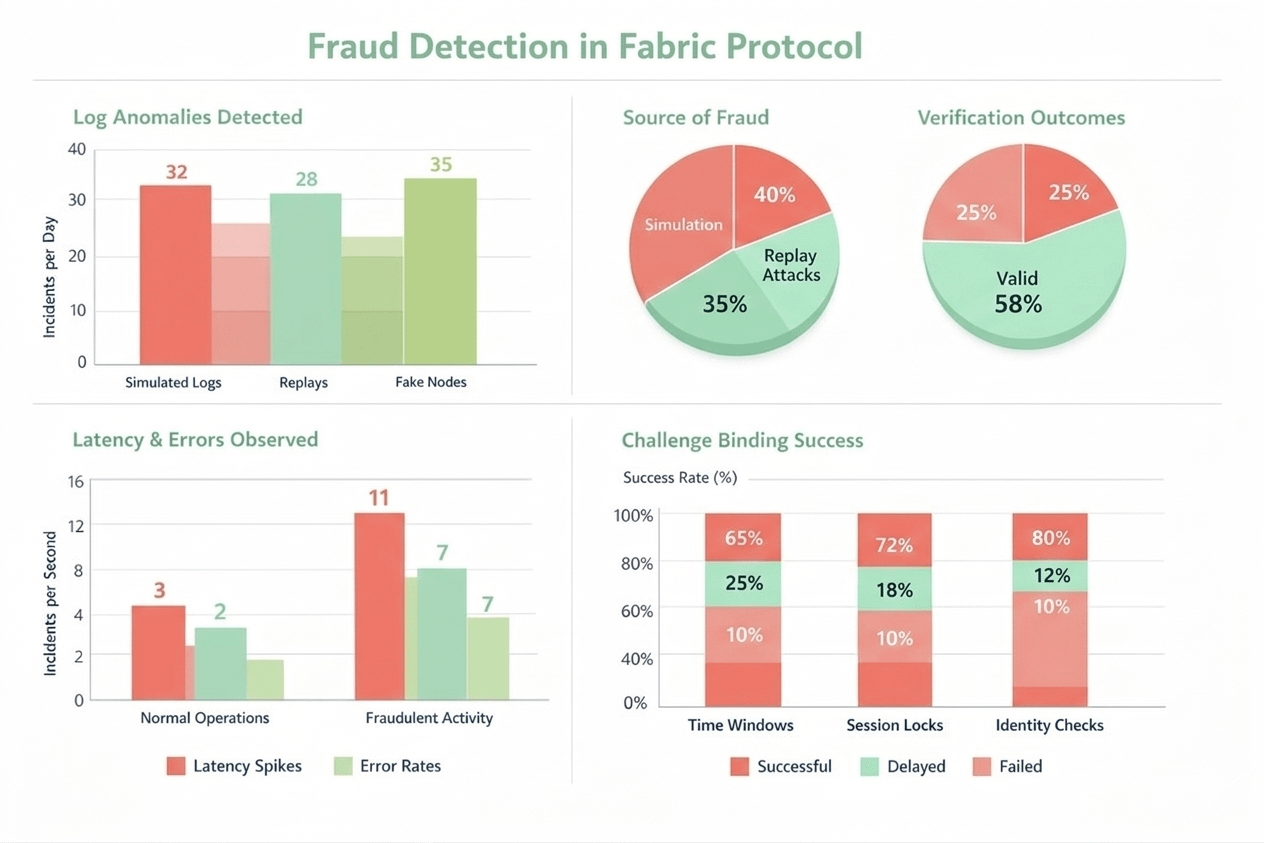

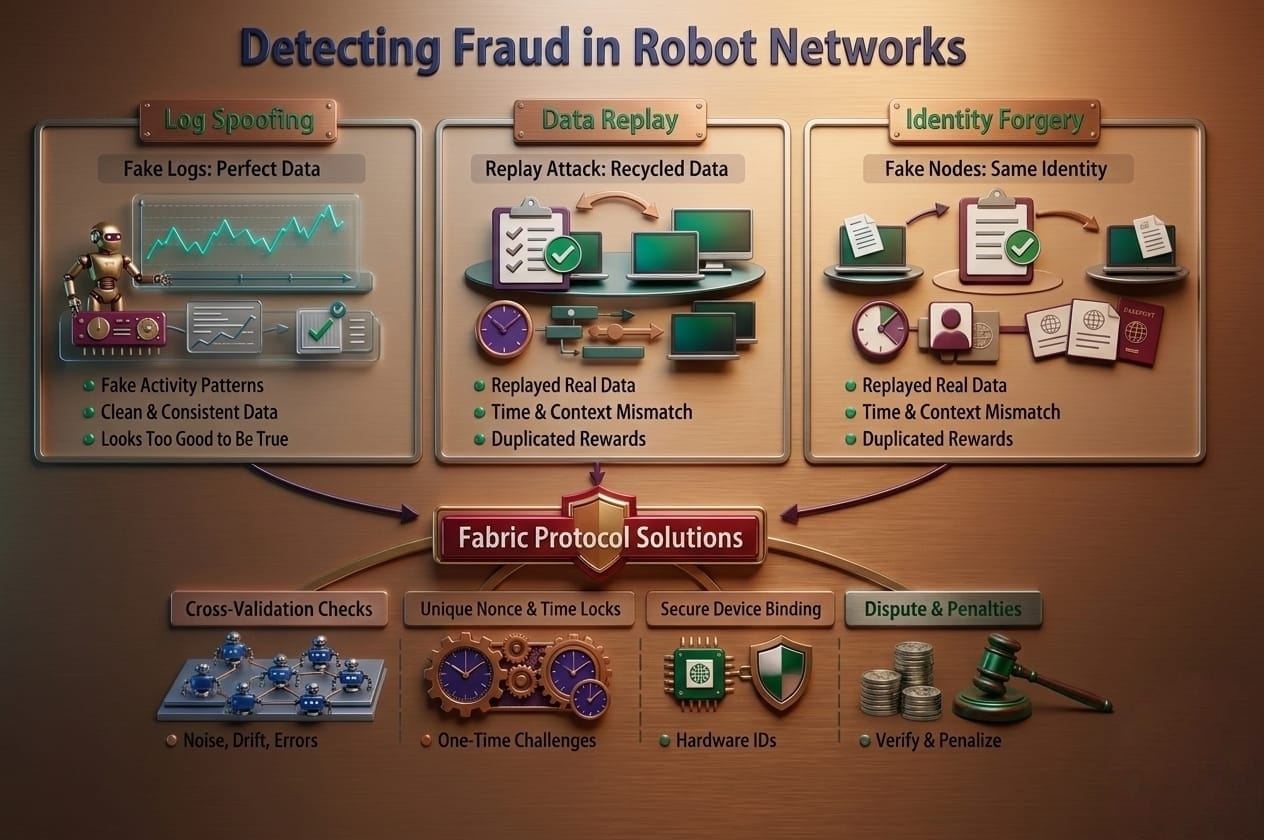

Log simulation is the oldest move in the book. Cheaters script a sequence that looks exactly how a human thinks a robot should act: steady cadence, "natural" minor errors, and beautiful latency.

But real-world operations are messy. They have packet loss, clock drift, and weird gaps that don't make sense on a spreadsheet. I think Fabric is on the right track because it leans into that messiness. It cross-checks task logs against execution traces and physical constraints, so that data "pre-baked" in a script has to stay consistent with all three at once.
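To make the "physical constraints" part concrete, here is a minimal sketch of one such cross-check. It is my own illustration, not Fabric's verifier: the `Waypoint` type, the `implausible_segments` helper, and the speed limit are all hypothetical. The idea is simply that a logged path should never imply a speed the hardware can't reach, or timestamps that run backwards.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Waypoint:
    """One logged position sample (hypothetical log format)."""
    t: float  # timestamp in seconds
    x: float  # position in metres
    y: float


def implausible_segments(waypoints, max_speed_mps):
    """Return indices i where the hop from waypoint i to i+1 is physically
    implausible: time does not advance, or the implied speed exceeds the limit."""
    flagged = []
    for i in range(len(waypoints) - 1):
        a, b = waypoints[i], waypoints[i + 1]
        dt = b.t - a.t
        dist = math.hypot(b.x - a.x, b.y - a.y)
        if dt <= 0 or dist / dt > max_speed_mps:
            flagged.append(i)
    return flagged


log = [Waypoint(0.0, 0.0, 0.0), Waypoint(1.0, 1.0, 0.0), Waypoint(2.0, 50.0, 0.0)]
print(implausible_segments(log, max_speed_mps=2.0))
```

A check like this doesn't prove a log is honest. It only raises the cost of faking one, because the script now has to respect the robot's real limits.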

Replay attacks are even more annoying because the data is technically "real." It’s a valid trace from yesterday, just played back today under a new ID to double-dip on rewards. If a proof isn't locked to a specific context, nothing stops the same trace from being paid out twice.

Fabric handles this by forcing every proof to bind to a one-time challenge. That binding needs a strict time window and session constraints on top of it; without them, the door stays wide open for replay.
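Here is a rough sketch of what challenge binding can look like, assuming a shared device key and an HMAC over the session, nonce, and trace. `ChallengeVerifier` and `sign_proof` are names I made up for illustration; Fabric's actual protocol may look nothing like this.

```python
import hashlib
import hmac
import secrets
import time


def sign_proof(device_key: bytes, session_id: str, nonce: str, trace: bytes) -> str:
    """Bind a trace to one session and one challenge (hypothetical scheme)."""
    mac = hmac.new(device_key, digestmod=hashlib.sha256)
    for part in (session_id.encode(), nonce.encode(), trace):
        # Length-prefix each field so field boundaries cannot be shifted.
        mac.update(len(part).to_bytes(4, "big"))
        mac.update(part)
    return mac.hexdigest()


class ChallengeVerifier:
    """Issues one-time challenges and accepts each once, inside a time window."""

    def __init__(self, window_seconds=30.0, clock=time.monotonic):
        self.window_seconds = window_seconds
        self.clock = clock
        self._pending = {}  # nonce -> (session_id, issued_at)

    def issue(self, session_id: str) -> str:
        nonce = secrets.token_hex(16)
        self._pending[nonce] = (session_id, self.clock())
        return nonce

    def verify(self, device_key, session_id, nonce, trace, tag) -> bool:
        # pop() makes the challenge single-use: a replayed proof finds nothing.
        entry = self._pending.pop(nonce, None)
        if entry is None:
            return False
        issued_session, issued_at = entry
        if issued_session != session_id:
            return False
        if self.clock() - issued_at > self.window_seconds:
            return False
        expected = sign_proof(device_key, session_id, nonce, trace)
        return hmac.compare_digest(expected, tag)
```

Yesterday's trace is still a valid trace, but it was signed against yesterday's nonce. Replayed today, it either references a challenge that has already been consumed or fails the signature check against the new one.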

None of this matters if device identity is "soft." If you can spin up a thousand virtual nodes and feed them the same stream, the network collapses. This is why the hardware-level attestation Fabric hints at—using secure elements or monotonic counters—is so vital. You have to make cloning an identity more expensive than the reward itself.
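The monotonic counter idea is easy to sketch. The version below is a simplification with one big assumption: the counter value has already been authenticated, for example signed inside a secure element, before it reaches this check. `CounterRegistry` is a hypothetical name, not part of any Fabric API.

```python
class CounterRegistry:
    """Tracks the highest counter seen per device and rejects anything
    that does not strictly increase (hypothetical verifier-side state)."""

    def __init__(self):
        self._last_seen = {}  # device_id -> highest accepted counter

    def accept(self, device_id: str, counter: int) -> bool:
        last = self._last_seen.get(device_id, -1)
        if counter <= last:
            # A repeated or rewound counter suggests a clone or a replay.
            return False
        self._last_seen[device_id] = counter
        return True


registry = CounterRegistry()
print(registry.accept("robot-a", 1))  # first submission from this identity
print(registry.accept("robot-a", 1))  # same counter again, e.g. from a clone
```

Two copies of the same identity can't both stay ahead of the counter for long. Sooner or later one of them submits a value the network has already seen.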

The most painful lesson I’ve learned? Never reward "clean" logs. Real robots survive by managing failure, not by avoiding it. If a system pays for smoothness, operators will spend all their time polishing the mask of fraud. We should be rewarding "honest noise."
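One crude way to stop paying for smoothness is to treat suspiciously regular timing as a flag rather than a virtue. The sketch below uses the coefficient of variation of latency. The `looks_too_clean` name and the 5% threshold are arbitrary assumptions of mine, and a careful cheater can inject fake jitter, so this is only one weak signal among many.

```python
import statistics


def looks_too_clean(latencies_ms, min_cv=0.05):
    """Return True when latency varies less than min_cv relative to its mean.

    min_cv is an illustrative threshold, not a calibrated value.
    """
    if len(latencies_ms) < 2:
        raise ValueError("need at least two latency samples")
    mean = statistics.fmean(latencies_ms)
    if mean <= 0:
        raise ValueError("latencies must have a positive mean")
    cv = statistics.stdev(latencies_ms) / mean
    return cv < min_cv


print(looks_too_clean([100.0, 100.0, 100.0, 100.0]))
print(looks_too_clean([80.0, 140.0, 95.0, 210.0, 60.0]))
```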

Trust shouldn't come from a project’s claims; it should come from the constraints the project builds. When proof is locked to time, identity, and randomized audits, the market is finally forced to pay for real work.

The question is: when the next gold rush happens, will we have the patience to stick to these constraints, or will we start believing the "perfect" curves again?

@Fabric Foundation #ROBO $ROBO