I was watching a friend argue with an AI chatbot about a historical fact not long ago. The AI chatbot answered quickly. With confidence. It even cited a source. My friend paused, checked the source and then frowned. The reference did not actually say what the AI chatbot claimed it did. The answer looked polished. The truth behind it was uncertain.

That moment stayed in my head for a while. It reminded me that the real problem with AI may not be generating answers. It may be verifying them, which is the problem Mira Network and systems like it are built around. We are entering a period where AI systems can produce an enormous amount of information almost instantly. Reports, summaries, explanations, code, analysis. The speed is impressive. Sometimes unsettling.

Yet the strange part is that most conversations about AI still revolve around how powerful the models are becoming. Larger datasets, bigger models, faster training. The spotlight stays on generation. But once machines begin generating things at this scale, another question quietly appears. Who checks the results of AI systems?

Mira Network becomes interesting because it looks at the layer that comes after the answer is produced. Its focus is the verification of AI-generated claims. In simple terms, verification means checking whether an AI-generated claim actually holds up under scrutiny. Did the AI model reason correctly? Did it use accurate information? Or did it just produce something that sounds convincing?

The difference matters more than people realize. Writing an answer is easy for a machine like an AI chatbot. Confirming that the answer is reliable takes work. Anyone who has spent time researching something knows this instinctively. You can write a paragraph quickly. Verifying every statement inside it takes patience. Sometimes it takes longer than writing the paragraph itself.

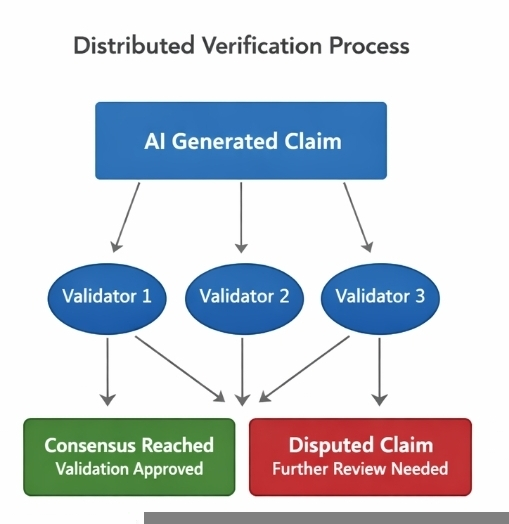

AI systems follow the same pattern. Generation is cheap. Verification of AI-generated claims is expensive. The idea behind Mira Network is that verification should not rely on a single authority. Instead it spreads the task across a network of participants. These participants are often called validators. Their role is to examine AI claims and evaluate whether they appear correct.

They may use their own models, reasoning systems, or data checks to do this. If several validators independently reach the same conclusion, the system gains confidence in the AI-generated claim. If the validators disagree, the claim becomes questionable and may need further evaluation. At first glance this sounds like a technical mechanism. In reality it is also a coordination system.
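To make the agreement idea concrete, here is a minimal sketch in Python. It is not Mira Network's actual protocol. The function name `aggregate_verdicts`, the boolean verdicts, and the two-thirds threshold are all assumptions chosen for illustration.

```python
from collections import Counter

def aggregate_verdicts(verdicts, threshold=2 / 3):
    """Combine independent validator verdicts on one claim.

    verdicts: list of booleans (True = "claim looks correct").
    threshold: hypothetical share of validators that must agree.
    Returns (label, agreement) where label is "accepted",
    "rejected", or "disputed".
    """
    if not verdicts:
        # No validators responded, so nothing can be concluded.
        return "disputed", 0.0
    counts = Counter(verdicts)
    top_verdict, top_count = counts.most_common(1)[0]
    agreement = top_count / len(verdicts)
    if agreement < threshold:
        # Validators disagree too much; the claim needs further evaluation.
        return "disputed", agreement
    return ("accepted" if top_verdict else "rejected"), agreement

print(aggregate_verdicts([True, True, True, False]))   # three of four agree
print(aggregate_verdicts([True, False, True, False]))  # evenly split
```

A higher threshold makes the network more cautious, at the price of sending more claims back for another round.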

Many independent actors are trying to assess the reliability of information at the same time. They are not simply running software. They are participating in a shared decision process. This is where things become complicated. Imagine thousands of AI agents producing claims every minute. Each claim might require several validators to review it.
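A rough back-of-the-envelope calculation shows how quickly this adds up. The numbers below are invented for illustration, not measurements from any real network, and `verification_load` is a hypothetical helper.

```python
def verification_load(claims_per_minute, validators_per_claim,
                      disputed_claims=0, recheck_validators=0):
    """Estimate verification checks per minute.

    Every claim is reviewed by `validators_per_claim` validators.
    Each disputed claim triggers `recheck_validators` extra checks.
    All inputs are illustrative assumptions.
    """
    base_checks = claims_per_minute * validators_per_claim
    dispute_checks = disputed_claims * recheck_validators
    return base_checks + dispute_checks

# 5,000 claims a minute, each reviewed by 5 validators
print(verification_load(5000, 5))  # 25000

# Same load, but 500 claims are disputed and re-checked by 5 more validators
print(verification_load(5000, 5, disputed_claims=500, recheck_validators=5))  # 27500
```

Even in this toy setup, one generated claim turns into five or more verification tasks.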

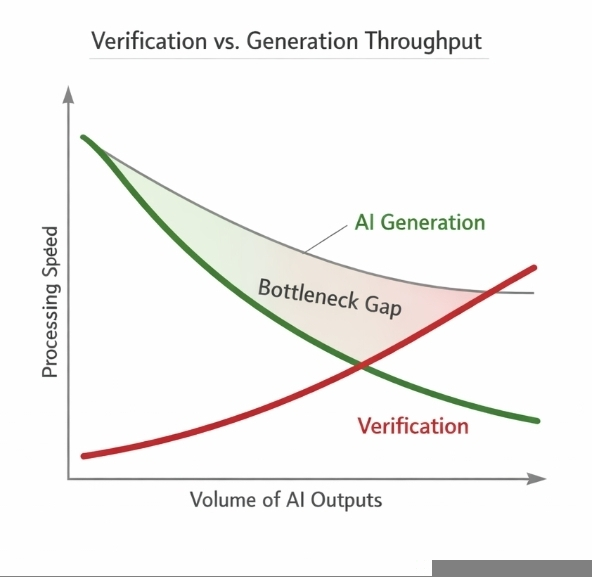

That means verification work multiplies quickly. The network may need to process more checks than the original generation tasks. In other words, the bottleneck shifts. Instead of waiting for AI to produce answers, systems may begin waiting for the answers to be verified. I sometimes think of it like publishing.

Writing an article can take an afternoon. Fact-checking it properly can take days. Editors call sources, confirm dates, check quotations. The process slows everything down. It also protects credibility. Without verification the speed of publishing would increase, but trust would collapse. Something similar could happen with AI systems.

As AI agents begin interacting with systems, markets and automated services the cost of mistakes rises. A wrong answer in a chat conversation might be harmless. A wrong decision in an automated system could be expensive. So verification starts to look less like a feature and more like infrastructure.

Mira Network tries to organize that infrastructure through incentives. Validators are rewarded for participating in the verification process. In simple terms, the network pays people or systems to check whether AI outputs are reliable. Economic incentives are not a new idea in crypto networks. Blockchains rely on them heavily.

Participants maintain the system because they receive rewards for doing so. Mira seems to apply the same thinking to information reliability. Still, this approach brings its own challenges. One question that often crosses my mind is how verification scales when the majority of participants are also machines.

A validator might not be a human carefully reading each claim. It might be another AI model running evaluation tests. So now you have AI generating claims, AI systems verifying those claims, and a network aggregating the results. It works in theory. But it also creates layers of automation that depend heavily on statistical agreement rather than certainty.

Consensus, after all, is not the same as truth. History offers plenty of examples where groups confidently agreed on something that later turned out to be wrong. Distributed verification reduces the risk of single points of failure, but it does not magically produce perfect knowledge. Reputation systems may attempt to manage that risk.

Validators that consistently provide accurate assessments gain credibility within the network. Over time their evaluations carry more influence. Validators that perform poorly lose reputation or economic rewards. This dynamic reminds me of how credibility evolves on platforms like Binance Square.

Writers who repeatedly share useful insights slowly build an audience. Their posts appear more often in rankings and dashboards. Meanwhile accounts that post low-value content fade into the background. The platform never explicitly declares who is "correct". Patterns of reliability still emerge.
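Applied to validators, that reputation dynamic could look roughly like the sketch below. The update rule (a simple moving average toward 1 or 0) and the names `update_reputation` and `weighted_agreement` are my own assumptions for illustration, not how any particular network scores its validators.

```python
def update_reputation(reputation, was_correct, rate=0.1):
    """Nudge a validator's reputation (between 0 and 1) after one assessment.

    Correct assessments pull the score toward 1, incorrect ones toward 0.
    `rate` is a hypothetical parameter controlling how fast scores move.
    """
    target = 1.0 if was_correct else 0.0
    return reputation + rate * (target - reputation)

def weighted_agreement(votes):
    """Share of total reputation backing the verdict "claim is correct".

    votes: list of (verdict, reputation) pairs, verdict being a boolean.
    """
    total = sum(reputation for _, reputation in votes)
    if total == 0:
        return 0.0
    backing = sum(reputation for verdict, reputation in votes if verdict)
    return backing / total

# One trusted validator says "correct", two weaker ones say "incorrect"
votes = [(True, 0.9), (False, 0.3), (False, 0.3)]
print(weighted_agreement(votes))
```

In this example the single trusted validator outweighs the two weaker ones, which is exactly the kind of influence a reputation system is meant to create, and exactly the kind of influence that can go wrong if the trusted validator is mistaken.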

Verification networks operate in a similar way, just with machine claims instead of social commentary. But even reputation systems cannot fully solve the core tension between speed and reliability. The more checks you require, the slower the system becomes. The fewer checks you require, the greater the risk of error.
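The tension can be put into numbers with a simple model. Assume, purely for illustration, that each validator is wrong with the same probability and that validators err independently of each other. That independence assumption is generous, since models trained on similar data can share the same blind spots. The function `majority_error` is a hypothetical helper that computes the chance that more than half of the validators are wrong at once.

```python
from math import comb

def majority_error(p_wrong, n_validators):
    """Probability that a strict majority of validators is wrong.

    Assumes each validator errs independently with probability p_wrong.
    This is a binomial tail sum, not a model of any real network.
    """
    first_majority = n_validators // 2 + 1
    return sum(
        comb(n_validators, k) * p_wrong**k * (1 - p_wrong) ** (n_validators - k)
        for k in range(first_majority, n_validators + 1)
    )

# More validators lower the error, but each one adds a check to run.
for n in (1, 3, 5, 9):
    print(n, "checks per claim ->", round(majority_error(0.2, n), 4))
```

Under these assumptions the error rate falls as validators are added, but every extra validator is another check that has to be run and waited for.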

There is no perfect balance. Perhaps that is the part people rarely talk about when discussing autonomous AI systems. Intelligence itself may not be the limiting factor anymore. Models are improving quickly. The ability to generate answers will probably keep accelerating.

Verification, on the other hand, grows heavier as systems scale. Every additional layer of checking adds friction. Every validator adds computation. Every dispute adds delay. So the future of AI may depend less on how smart the models become and more on how societies design systems of trust around them.

I sometimes wonder whether we are approaching a moment where information will be cheap but certainty will remain expensive. AI might generate endless streams of answers. The difficult part will be figuring out which ones deserve attention. Networks like Mira are experimenting with that problem in a different way.

Not by making AI smarter. By trying to build a system that questions it. Whether that system can keep up with the speed of machine intelligence is still unclear. But the question itself feels important. Because if machines can produce knowledge faster than we can verify it, the real scarcity in the AI era may not be intelligence at all. It may simply be trust in AI systems.


Mira Network: Why Distributed Verification Could Become the Bottleneck of Autonomous AI
