For a long time I believed that the biggest advantage of artificial intelligence was speed. I would open a model, describe a complicated system that involved multiple chains, smart contracts, cross-chain messaging, and data movement between networks, and within seconds the machine would return something that looked incredibly polished. The response would read like it had been written by a team of senior engineers working together. The logic appeared structured, the architecture looked complete, and the explanation sounded confident enough to convince almost anyone that the solution was correct. The danger of that moment is something many people do not fully recognize yet, because when an answer looks intelligent and arrives instantly it creates the illusion of certainty. The model is not hesitating, it is not questioning its own reasoning, and it is not showing any doubt about the path it suggests. I have caught myself several times feeling the temptation to move forward immediately, almost treating the machine’s confidence as proof that the plan was safe to execute. The truth, however, is that behind that perfect formatting and persuasive tone there is often a black box that hides whether the logic is actually correct or simply convincing.

This black box problem becomes much more serious when the task is not theoretical but operational. When an AI system is asked to design or manage something involving multiple blockchains, the consequences of a small mistake can quickly become permanent. A poorly designed contract can lock funds, a misinterpreted regulation can create compliance issues, and an incorrect assumption about how chains interact can cause systems to fail after deployment. The frightening part is that most AI models are extremely good at sounding right even when they are wrong. They generate answers based on patterns rather than verified truth, which means they can confidently produce explanations that look flawless while quietly hiding critical errors. When people treat those outputs as trustworthy without verification, they are essentially gambling their infrastructure on a guess that happens to be written well.

This is exactly why the verification layer introduced in Season 2 of Mira Network has changed the way I approach AI-assisted workflows. Instead of assuming that the machine’s output is reliable simply because it appears logical, the system treats the output as something that must be investigated before it can be trusted. When I first ran a complex deployment plan through the network’s trust layer, I expected a simple review tool that would either approve or reject the result. What actually happened was far more detailed and far more useful than a basic yes-or-no check.

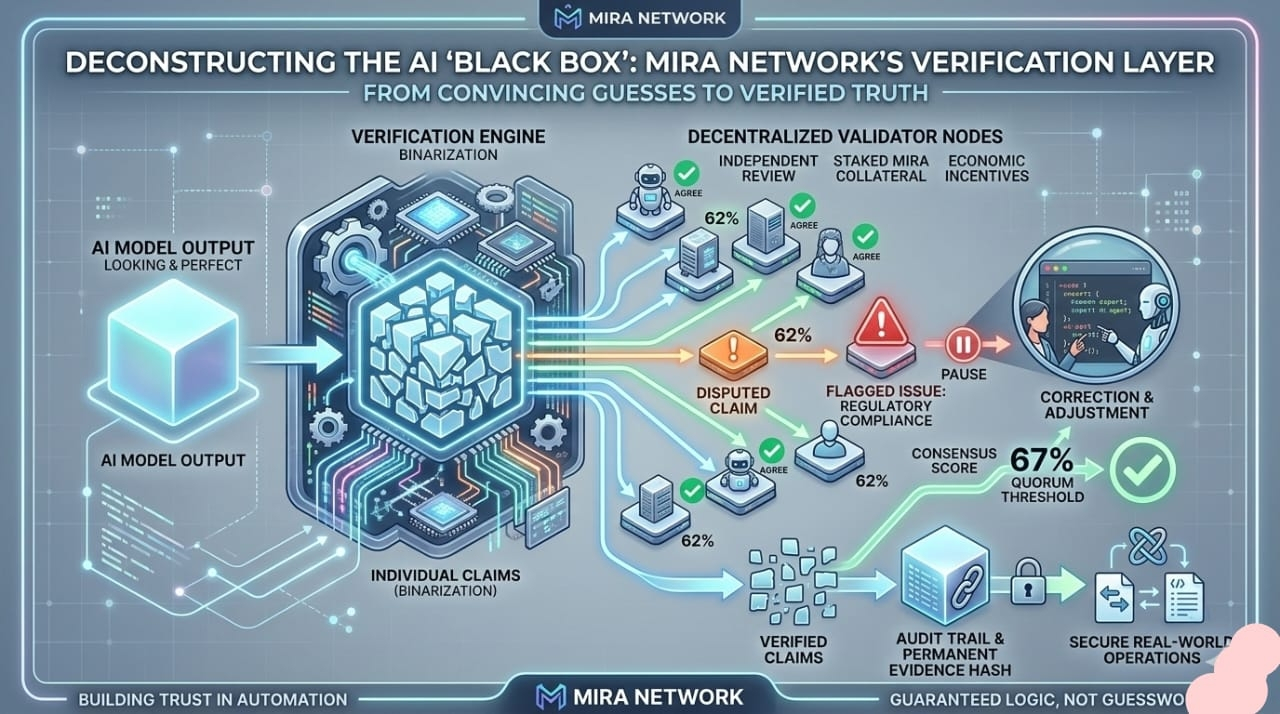

The verification process began with a step called binarization, which is essentially the act of breaking a large AI output into many small claims that can be examined individually. The plan I submitted contained dozens of assumptions, calculations, and logical steps that the model had woven together into a single polished explanation. Instead of treating that entire explanation as one unit, the system separated it into fifty-four independent statements that could each be evaluated on their own merits. Every claim was then sent across a decentralized network of validator nodes whose job was to analyze the statements and determine whether they were accurate. The experience felt very different from traditional AI usage because the machine’s answer was no longer treated as a finished product but rather as evidence that needed to be examined.
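To make the idea of binarization concrete, here is a minimal sketch in Python. It is not the network's actual decomposition logic, which I have not seen; it simply assumes that one sentence equals one claim, and the names `Claim` and `binarize` are my own illustration.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    claim_id: int  # position of the claim in the original output
    text: str      # the statement a validator would be asked to judge


def binarize(output: str) -> list[Claim]:
    """Naively split a model output into sentence-level claims.

    Assumption: one sentence is one checkable claim. A real system would
    need something smarter than punctuation-based splitting.
    """
    # Split after '.', '!' or '?' when followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", output.strip())
    non_empty = (s for s in sentences if s)
    return [Claim(i, s) for i, s in enumerate(non_empty, start=1)]


plan = (
    "The bridge locks funds on chain A. "
    "It mints wrapped tokens on chain B. "
    "User data is stored in a single region."
)
for claim in binarize(plan):
    print(claim.claim_id, claim.text)
```

The point of the sketch is only the shape of the data: one polished answer goes in, and a list of small statements that can each be accepted or disputed comes out.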

At first the process looked smooth and almost routine. The early claims moved quickly through the verification pipeline, with independent nodes reaching agreement and pushing the consensus score higher with each step. The dashboard showed the network gradually confirming the accuracy of the plan, and for a moment it felt as if the entire system would pass verification without any difficulty. But then something unexpected happened that revealed why this mechanism exists in the first place. One of the claims stopped progressing toward consensus even though the majority of nodes had already agreed on its validity.

The claim stalled at sixty-two percent agreement, which in many systems would be considered enough to move forward. The network, however, operates with a strict rule that requires a sixty-seven percent quorum before a decision can be finalized and recorded. That five-point gap might sound small on paper, but in practice it is the difference between a claim that is still contested and one the network is willing to record as verified. One node had flagged a subtle issue involving regulatory requirements around cross-border data movement. Every other model I had previously consulted had ignored that detail completely, and even my own review had not noticed it. Because the network requires strong consensus before closing a claim, the process paused until the disagreement could be resolved.
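The arithmetic behind that pause is simple enough to sketch. The sixty-seven percent figure comes from my run; everything else here is an assumption for illustration, including one vote per node (the real network may weight votes by stake) and treating exactly sixty-seven percent as passing.

```python
from fractions import Fraction

# Threshold described above. Fractions avoid floating-point rounding issues.
QUORUM = Fraction(67, 100)


def claim_status(agree: int, total: int, quorum: Fraction = QUORUM) -> str:
    """Return 'finalized' once the agreeing share reaches the quorum.

    Assumptions: one node, one vote, and the threshold is inclusive.
    """
    if total <= 0:
        raise ValueError("total must be positive")
    if not 0 <= agree <= total:
        raise ValueError("agree must be between 0 and total")
    share = Fraction(agree, total)
    return "finalized" if share >= quorum else "pending"


print(claim_status(62, 100))  # the stalled claim from my run
print(claim_status(67, 100))  # the same claim once the quorum is met
```

A simple majority rule would have let the sixty-two percent claim through. Under the stricter threshold it stays pending, which is exactly what surfaced the regulatory issue.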

That pause turned out to be the most valuable part of the entire experience. Instead of allowing the system to move forward with a potential mistake hidden inside the plan, the verification layer forced me to return to the specific step that had triggered disagreement. I reviewed the flagged line, investigated the regulatory condition that the node had identified, and realized that the architecture needed a small but important adjustment. After correcting the issue and submitting the plan again, the verification process ran through the claims once more. This time the consensus crossed the required threshold and the system generated an evidence hash that permanently recorded the verified result.
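I do not know how the network derives its evidence hash, so the following is only a sketch of the general idea: hash a canonical serialization of the verified claims so that any later change to a claim or verdict produces a different fingerprint. The record layout and the choice of SHA-256 are my assumptions.

```python
import hashlib
import json


def evidence_hash(results: list[dict]) -> str:
    """Fingerprint a list of claim results.

    Assumptions: results are JSON-serializable dicts, and SHA-256 over a
    canonical JSON encoding is an acceptable stand-in for the real scheme.
    """
    # sort_keys and fixed separators make the encoding deterministic,
    # so the same results always yield the same hash.
    canonical = json.dumps(results, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


verified = [
    {"claim_id": 1, "text": "The bridge locks funds on chain A.", "verdict": True},
    {"claim_id": 2, "text": "User data stays in its home region.", "verdict": True},
]
print(evidence_hash(verified))
```

Because the hash depends on every field, flipping a single verdict or editing one word of a claim yields a completely different value, which is what makes the recorded result tamper-evident.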

The difference between these two outcomes illustrates what the network is actually trying to accomplish with its verification model. The goal is not to replace artificial intelligence or claim that machines can never produce useful answers. Instead, the system assumes that AI will eventually produce incorrect information and designs a framework that prevents those mistakes from silently entering real operations. In that sense the trust layer treats AI less like an oracle and more like a witness whose statements must be evaluated by independent observers.

The observers in this case are validator nodes that participate in the network’s consensus process. Each node stakes the native asset known as MIRA as collateral before it can participate in verification. This requirement changes the dynamic of the network in a very important way because validators are not simply offering opinions about whether a claim is correct. They are putting their own capital at risk when they agree or disagree with a statement. If a validator supports a claim that later proves to be false or rejects one that is proven correct, the protocol can impose penalties that reduce the stake they have locked into the system. That economic pressure encourages validators to focus on evidence rather than intuition, because careless judgments can carry financial consequences.
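A rough sketch of that incentive follows. The penalty size, the integer accounting, and the names `Validator`, `settle`, and `SLASH_BPS` are all hypothetical; the only thing taken from the description above is that a validator whose vote ends up on the wrong side of the final outcome can lose part of its stake.

```python
from dataclasses import dataclass

SLASH_BPS = 1_000  # hypothetical penalty: 10% of stake, in basis points


@dataclass
class Validator:
    name: str
    stake: int  # staked MIRA in smallest units (assumed integer accounting)


def settle(
    votes: dict[str, bool],
    validators: dict[str, Validator],
    outcome: bool,
    slash_bps: int = SLASH_BPS,
) -> dict[str, int]:
    """Slash every validator whose vote disagrees with the final outcome.

    Returns a mapping of validator name to the amount deducted.
    """
    penalties: dict[str, int] = {}
    for name, vote in votes.items():
        if vote != outcome:
            validator = validators[name]
            penalty = validator.stake * slash_bps // 10_000
            validator.stake -= penalty
            penalties[name] = penalty
    return penalties


validators = {"alice": Validator("alice", 1_000), "bob": Validator("bob", 500)}
# alice supported the claim, bob rejected it, and the claim proved false.
print(settle({"alice": True, "bob": False}, validators, outcome=False))
print(validators["alice"].stake, validators["bob"].stake)
```

Even in this toy version the asymmetry is visible: agreeing carelessly is not free, so a validator has a reason to check the evidence before voting.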

This structure introduces what could be described as economic gravity into the verification process. Instead of creating an environment where participants casually vote based on personal beliefs, the network forces every validator to weigh their decisions carefully. Their incentives are aligned with the accuracy of the outcome because the cost of being wrong is directly connected to their stake. Over time this creates a decentralized jury that is motivated to reach the most reliable conclusion rather than simply following the majority opinion.

What makes this particularly interesting is how the system interacts with the broader idea of automation in blockchain environments. Many people imagine a future where autonomous agents manage logistics, coordinate financial flows, and execute complex instructions across multiple chains without human involvement. While that vision is technologically exciting, it also introduces serious risks if those agents operate without a mechanism that verifies their reasoning. An AI system that moves funds or orchestrates infrastructure needs more than intelligence. It needs a trust framework that ensures its decisions are correct before they become irreversible.

The verification layer addresses this need by inserting a decentralized checkpoint between AI reasoning and real-world execution. Instead of allowing a model’s output to immediately control contracts or trigger transactions, the system requires the logic to pass through a consensus process that evaluates its accuracy. The requirement of a sixty-seven percent quorum may appear strict, but it creates a powerful filter that separates assumptions from verified claims. The network essentially draws a clear line between a guess produced by an algorithm and a statement that has been validated by multiple independent participants.
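In application code, a checkpoint like this could look like a small gate wrapped around whatever action the model proposed. This is a sketch under my own assumptions, not the network's SDK: `run_if_verified` and `VerificationPending` are hypothetical names, and the agreement shares are passed in by hand rather than fetched from any real API.

```python
from fractions import Fraction
from typing import Callable, Mapping

QUORUM = Fraction(67, 100)  # threshold described in this post


class VerificationPending(Exception):
    """Raised when at least one claim has not reached the quorum."""

    def __init__(self, blocking: list[str]):
        self.blocking = sorted(blocking)
        super().__init__(f"claims below quorum: {self.blocking}")


def run_if_verified(
    agreement: Mapping[str, Fraction],
    action: Callable[[], str],
) -> str:
    """Run `action` only if every claim's agreement share meets the quorum."""
    blocking = [cid for cid, share in agreement.items() if share < QUORUM]
    if blocking:
        # Nothing is executed; the caller learns which claims to revisit.
        raise VerificationPending(blocking)
    return action()


shares = {"claim-1": Fraction(9, 10), "claim-17": Fraction(62, 100)}
try:
    run_if_verified(shares, lambda: "deployed")
except VerificationPending as err:
    print("blocked by:", err.blocking)
```

The design choice worth noticing is that the gate fails closed: a single contested claim stops the whole action and names the claim that needs attention, rather than letting a mostly verified plan through.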

As participation in the network grows, this model could reshape how people think about the relationship between artificial intelligence and decentralized infrastructure. In the early stages of the AI boom, speed was the dominant priority. The faster a system could generate answers, the more valuable it seemed. Now a different perspective is beginning to emerge where reliability matters just as much as speed. In high-stakes environments such as financial systems or cross-chain logistics, an incorrect answer delivered instantly is often more dangerous than a correct answer that takes time to verify.

The roadmap moving forward focuses on expanding the tools that allow developers to integrate this verification process directly into their applications. With deeper software development kit support and broader network participation expected in the coming months, the long-term objective is to make verification a normal step in automated workflows rather than an optional safety measure. The vision is an ecosystem where every important decision made by an AI system leaves behind a traceable audit trail that shows exactly how the result was validated.

In that future the intelligence of the machine will still matter, but the evidence supporting its conclusions will matter just as much. The ability to trace every claim back to a verified consensus will transform AI from a tool that produces persuasive answers into a system that produces accountable results. When automation reaches that level of transparency, the fear of the black box begins to fade because every decision carries proof that it was examined before it was allowed to shape the real world.

For me, that shift represents the real significance of this new phase of development. Artificial intelligence will continue to evolve and become more capable, but intelligence alone is not enough to build trustworthy systems. What ultimately matters is whether the outputs guiding important operations can be examined, verified, and proven reliable. By combining AI reasoning with decentralized validation, the network is attempting to move automation from guesswork to verified logic, where the trail of evidence behind a decision is just as important as the decision itself.
