I have spent years working at the intersection of blockchain and AI: designing systems, writing architecture, shipping products. In all that time, one problem kept showing up in different shapes, but underneath it was always the same problem.

I could not prove that the AI was right.

Not in a way that mattered. Not in a way I could show a client, write into a contract, or log on a ledger. The model would return an answer with complete confidence and I had no reliable mechanism to verify whether that confidence was earned or fabricated. Hallucinations are not rare edge cases in production systems. They are a structural feature of how these models work. They do not know what they do not know. And when you are building something that other people depend on, that gap between output and truth becomes your problem to carry.

The second problem was just as frustrating. Every time I started a new project or moved into a new domain, I was rebuilding the same logic from scratch. Retrieval setup. Reasoning chain. Verification step. Output formatting. The same pipeline, assembled manually again and again, because there was no real infrastructure for reuse. You were not building on top of something. You were rebuilding from the bottom every single time. That is not how mature engineering works. That is how you waste months.

The third problem was the one nobody wanted to talk about honestly. When you use a centralized AI system, you are trusting an institution. You are trusting their benchmarks, their policy documents, their internal evaluations. There is no external audit trail. There is no public record of what the model decided and why. As someone who came from blockchain, where the entire point is that you do not have to trust a single authority because the ledger does the work, this felt like a step backwards. We had spent years building systems where verification was public and inspectable. And then AI came along and said, "Trust us, we ran the tests."

That combination of problems (unreliable outputs, no reusable infrastructure, and centralized, unverifiable trust) is what made me take Mira seriously when I first looked at it properly.

What Mira is doing is not a marginal improvement on existing AI tools. It is a structural rethink of where trust lives in an AI system.

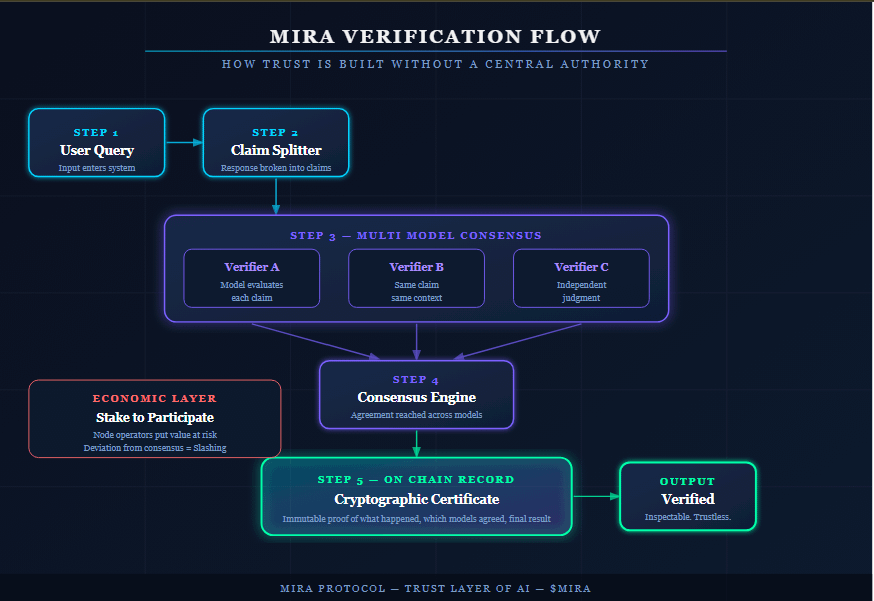

The verification layer works like this. Instead of asking you to trust a single model's full response, Mira breaks that response into individual claims. Each claim gets sent to multiple independent verifier models that evaluate the exact same statement in the exact same context. Consensus is reached across those models. Then a cryptographic certificate is generated that records what happened: which models agreed, which did not, and what the final determination was. That certificate lives on-chain. It is inspectable. It is not a company's word. It is a record.
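To make that flow concrete, here is a minimal sketch of how I picture it. This is not Mira's actual API. Every name in it (`split_claims`, `verify_claim`, `build_certificate`, the toy verifiers, the two-thirds threshold) is my own assumption for illustration. Real claim extraction would be far smarter than splitting on periods, and a real certificate would be signed and published on-chain rather than just hashed locally.

```python
import hashlib
import json
from typing import Callable, Dict, List

# A verifier takes (claim, context) and returns True if it accepts the claim.
# In a real system each verifier would be an independent model; here they are toys.
Verifier = Callable[[str, str], bool]


def split_claims(response: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_claim(claim: str, context: str, verifiers: Dict[str, Verifier],
                 threshold: float = 2 / 3) -> dict:
    """Ask every verifier about the same claim in the same context, then tally."""
    votes = {name: bool(check(claim, context)) for name, check in verifiers.items()}
    agreeing = sum(votes.values())
    # Hypothetical rule: a claim passes if at least `threshold` of verifiers accept it.
    return {"claim": claim, "votes": votes, "verified": agreeing / len(votes) >= threshold}


def build_certificate(response: str, context: str,
                      verifiers: Dict[str, Verifier]) -> dict:
    """Record who voted how on each claim and attach a SHA-256 digest of the record."""
    results = [verify_claim(c, context, verifiers) for c in split_claims(response)]
    record = {"context": context, "results": results}
    # Canonical JSON (sorted keys) so the same record always hashes to the same digest.
    canonical = json.dumps(record, sort_keys=True).encode("utf-8")
    return {"record": record, "sha256": hashlib.sha256(canonical).hexdigest()}


# Toy verifiers, purely illustrative.
TOY_VERIFIERS: Dict[str, Verifier] = {
    "in_context": lambda claim, context: claim in context,
    "mentions_paris": lambda claim, context: "Paris" in claim,
    "always_yes": lambda claim, context: True,
}

if __name__ == "__main__":
    cert = build_certificate(
        "Paris is the capital of France. Berlin is the capital of Spain.",
        "Paris is the capital of France",
        TOY_VERIFIERS,
    )
    for result in cert["record"]["results"]:
        print(result["claim"], "->", result["verified"])
    print("digest:", cert["sha256"])
```

The part that matters to me is the shape of the output. The votes per verifier and the final determination sit in one record, and the digest makes that record tamper-evident. Anyone holding the record can recompute the hash and check it.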

For me as an architect, that changes everything. It means I can build systems where the verification step is not a promise buried in documentation. It is an output I can log, audit, and show. When a client asks how I know the AI got it right, I have an actual answer. Not a benchmark. Not a policy page. A cryptographic trail.

The Mira Flow side of things solves the second problem I described. Instead of assembling pipelines manually every time, Flow lets you build modular workflows where AI models, knowledge retrieval, reasoning modules, data sources, and action tools connect together once and then run as a reusable system. The pipeline becomes infrastructure. You build it properly once and it runs repeatedly without being reconstructed from scratch for every new project. That is how mature engineering is supposed to work, and it is the first time I have seen the AI space approach it that way.
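The idea of a pipeline as reusable infrastructure is easy to sketch in a few lines. To be clear, this is not Mira Flow's interface. The `Pipeline` class and the step functions below are hypothetical names I made up to show the pattern: define the steps once, wire them together once, then run the same object against new inputs.

```python
from typing import Callable, Dict, List, Tuple

State = Dict[str, object]
Step = Callable[[State], State]


class Pipeline:
    """An ordered list of named steps, built once and reused for every request."""

    def __init__(self, steps: List[Tuple[str, Step]]):
        self.steps = list(steps)

    def run(self, question: str) -> State:
        state: State = {"question": question, "trace": []}
        for name, step in self.steps:
            state = step(state)
            state["trace"].append(name)  # keep a record of which steps ran
        return state


def make_retriever(knowledge: Dict[str, str]) -> Step:
    """Build a retrieval step bound to a given knowledge source (toy dict lookup)."""
    def retrieve(state: State) -> State:
        return {**state, "evidence": knowledge.get(state["question"], "")}
    return retrieve


def reason(state: State) -> State:
    """Toy reasoning step: answer with the evidence, or admit there is none."""
    return {**state, "answer": state["evidence"] or "unknown"}


def format_output(state: State) -> State:
    """Toy formatting step."""
    return {**state, "output": f"Q: {state['question']} | A: {state['answer']}"}


if __name__ == "__main__":
    pipeline = Pipeline([
        ("retrieve", make_retriever({"capital of France?": "Paris"})),
        ("reason", reason),
        ("format", format_output),
    ])
    print(pipeline.run("capital of France?")["output"])
    print(pipeline.run("capital of Peru?")["output"])
```

Moving to a new domain then means swapping the knowledge source passed to `make_retriever`, not rewriting the chain.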

The economic layer underneath all of this is what makes it durable rather than just elegant in theory. Node operators who participate in verification have to stake. If they deviate from consensus persistently, they get slashed. That means careless verification is not just bad behavior. It is expensive behavior. The incentive structure is aligned with accuracy in a way that a centralized system controlled by one company simply cannot replicate, because nothing outside that company puts a price on its errors. On Mira, being wrong consistently costs you something real.
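Here is a toy model of that incentive, under assumptions that are entirely mine. I do not know Mira's actual slashing parameters, so `max_strikes`, `slash_fraction`, and the rule that "persistent" means consecutive deviations are all placeholders chosen for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Node:
    name: str
    stake: float
    strikes: int = 0  # consecutive rounds spent outside consensus


def settle_round(nodes: List[Node], votes: Dict[str, bool],
                 max_strikes: int = 3, slash_fraction: float = 0.1) -> bool:
    """Compute the majority verdict, then update strikes and stakes.

    Hypothetical rules: a node that disagrees with the majority gains a strike,
    agreeing resets its strikes, and reaching `max_strikes` burns
    `slash_fraction` of its stake. A tie counts as a False verdict.
    """
    yes_votes = sum(1 for node in nodes if votes[node.name])
    majority = yes_votes * 2 > len(nodes)
    for node in nodes:
        if votes[node.name] == majority:
            node.strikes = 0
        else:
            node.strikes += 1
            if node.strikes >= max_strikes:
                node.stake *= 1 - slash_fraction
                node.strikes = 0
    return majority


if __name__ == "__main__":
    nodes = [Node("a", 100.0), Node("b", 100.0), Node("c", 100.0)]
    for _ in range(3):
        # Node "c" keeps voting against the other two.
        settle_round(nodes, {"a": True, "b": True, "c": False})
    for node in nodes:
        print(node.name, node.stake)
```

A single disagreement costs nothing in this model. Only a pattern of deviation does, which is the distinction between an honest minority opinion and careless verification.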

One case study they published showed accuracy improving from 75 percent to 96 percent after a company integrated their Verified Generation API. I read that number carefully because I know how easy it is to present favorable data. But the direction of the result matches what the architecture would predict. When you replace single-model confidence with multi-model consensus and you make deviation expensive, accuracy goes up. That is not surprising. It is the expected outcome of the design.
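The intuition behind "consensus beats a single model" can be checked with a back-of-the-envelope calculation. This is an idealized model, not a reconstruction of the case study. It assumes every verifier is right with the same probability `p` and that their errors are fully independent, which real models will not satisfy. The function name `majority_accuracy` is mine.

```python
from math import comb


def majority_accuracy(p: float, n: int) -> float:
    """Probability that a strict majority of n independent verifiers is correct,
    when each one is correct with probability p (binomial tail sum)."""
    if n % 2 == 0:
        raise ValueError("use an odd number of verifiers to avoid ties")
    needed = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(needed, n + 1))


if __name__ == "__main__":
    for n in (1, 3, 5):
        print(n, majority_accuracy(0.75, n))
```

With `p = 0.75`, a majority of three verifiers is right about 84 percent of the time and a majority of five about 90 percent. Correlated errors between models shrink that gain, so the calculation only supports the direction of the effect, not any specific published figure.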

What I keep coming back to is a simple comparison. In blockchain we say "do not trust, verify." The whole point of a public ledger is that you do not need to believe anyone. You can check the record yourself. AI has been operating on the opposite principle since the beginning. Believe the output. Trust the company. Read the terms of service.

Mira is the first project I have seen that is genuinely trying to bring the blockchain principle into AI infrastructure. Not as a marketing angle. As an actual engineering decision about where verification lives and who gets to inspect it.

That is why I think it matters. Not because the token is moving. Not because the narrative is hot right now. But because the problem it is solving is real, I have lived inside that problem for years, and the architecture it is proposing is the right shape of answer.

Not financial advice. This is an architect talking about infrastructure.

#BinanceSquare #AI #Blockchain