Over the last few months, I’ve started relying on AI tools more often while researching different topics.

The speed is impressive.

You ask a question.

A detailed explanation appears almost instantly.

At first, it feels like having a powerful assistant always ready to help.

But after using these tools regularly, I began to notice something subtle.

Sometimes the answer sounds confident…

yet a small detail turns out to be incorrect when checked against other sources.

Nothing dramatic.

Just enough to make you pause.

Those moments reveal an important reality about many AI systems today.

Most language models generate responses by predicting likely continuations from patterns in their training data.

They are extremely good at producing language that sounds reasonable and convincing.

But the system itself often doesn’t verify whether each statement is actually true.

This is where the idea of decentralized verification becomes interesting.

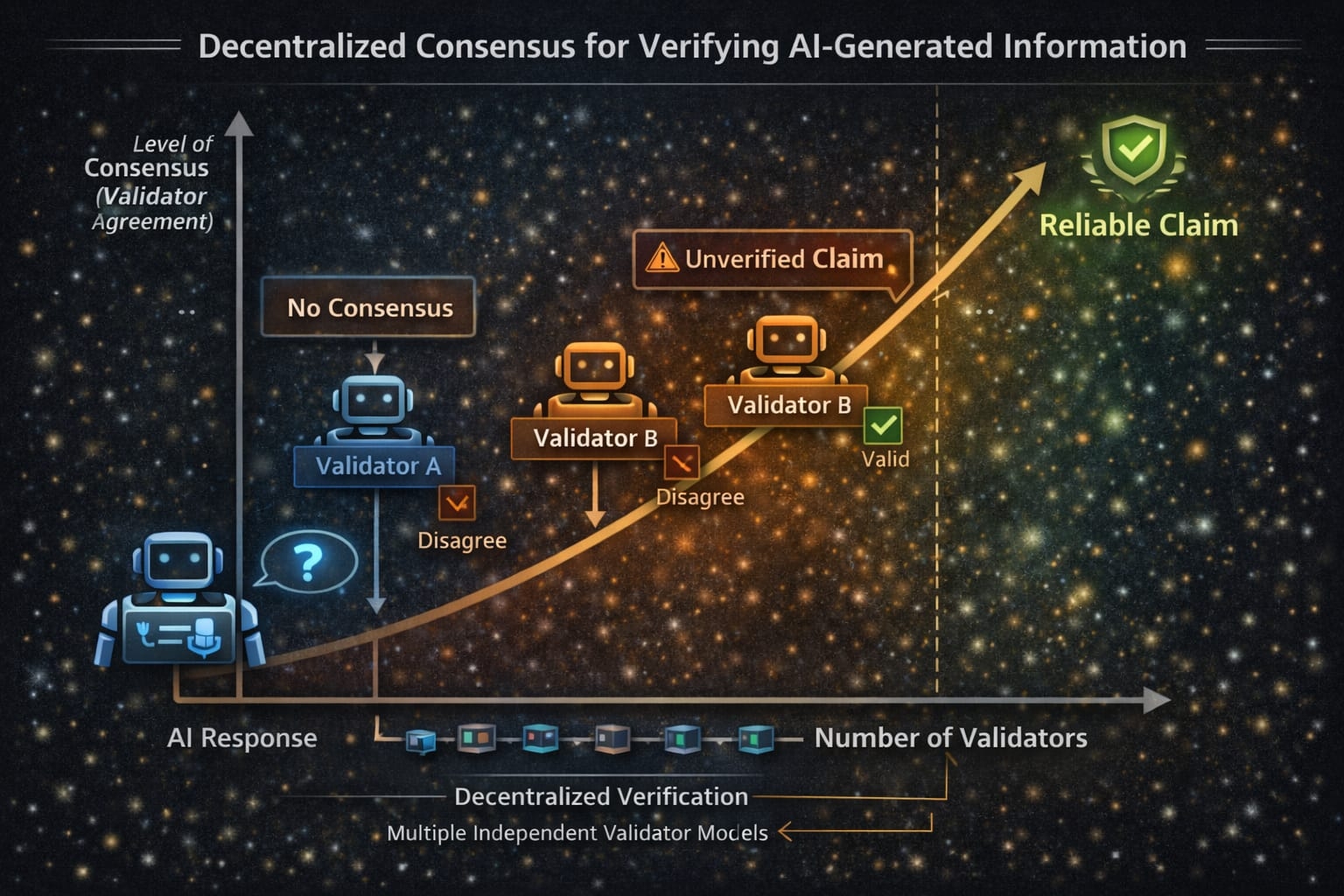

Instead of relying on a single model to generate and judge its own output, a network can examine the response from multiple perspectives.

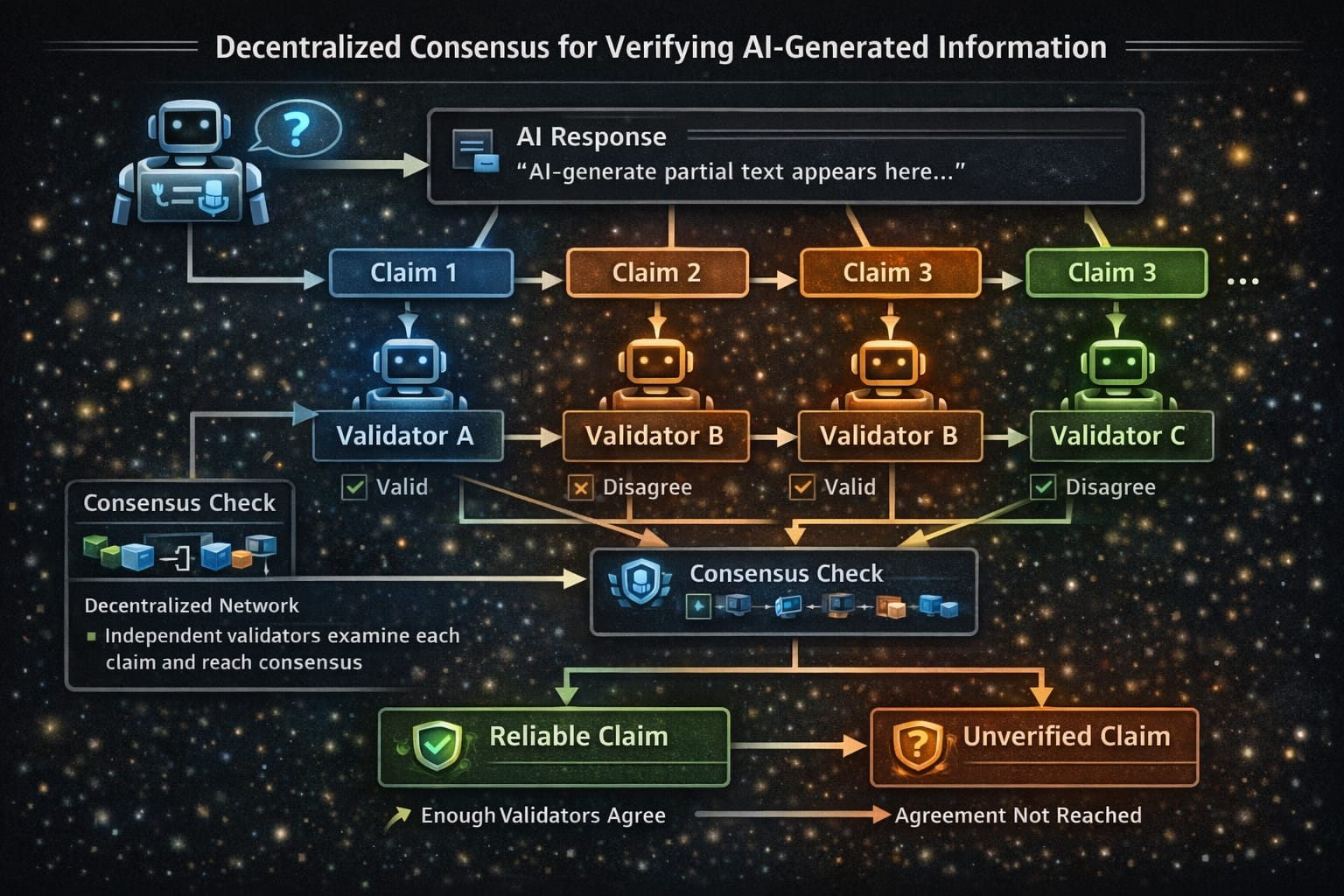

One approach is to break an AI response into smaller pieces.

Each piece becomes an individual claim.

Those claims can then be evaluated by different AI models acting as independent validators.

Every validator reviews the information separately.

Some models may agree with the claim.

Others may challenge it.

When enough validators reach agreement, the network forms a consensus about whether the claim is reliable.

In simple terms, trust doesn’t come from one model’s confidence.

It comes from multiple systems independently reaching the same conclusion.
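To make the flow concrete, here is a minimal Python sketch of the idea described above: split a response into claims, let several validators vote on each claim separately, and accept a claim only when enough of them agree. Everything in it is an assumption made for illustration. The sentence-based splitter, the two-thirds threshold, and the toy validators (simple functions standing in for independent AI models) are hypothetical and do not describe how any particular network implements verification.

```python
from typing import Callable, Dict, List

# A validator takes one claim and returns True (agrees) or False (challenges).
# In a real network each validator would be a separate AI model; here they
# are plain functions so the sketch stays runnable.
Validator = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive splitter (assumption): treat each sentence as one claim."""
    return [part.strip() for part in response.split(".") if part.strip()]


def reaches_consensus(claim: str, validators: List[Validator],
                      threshold: float = 2 / 3) -> bool:
    """Return True when the share of agreeing validators meets the threshold."""
    votes = [validate(claim) for validate in validators]
    return sum(votes) / len(votes) >= threshold


def verify_response(response: str, validators: List[Validator],
                    threshold: float = 2 / 3) -> Dict[str, bool]:
    """Map every claim in the response to its consensus result."""
    return {
        claim: reaches_consensus(claim, validators, threshold)
        for claim in split_into_claims(response)
    }


# Hypothetical validators: two that only accept a statement they "know",
# and one overconfident validator that agrees with everything.
KNOWN_FACTS = {"Paris is the capital of France"}


def validator_a(claim: str) -> bool:
    return claim in KNOWN_FACTS


def validator_b(claim: str) -> bool:
    return claim in KNOWN_FACTS


def validator_c(claim: str) -> bool:
    return True


response = "Paris is the capital of France. The Eiffel Tower is in Berlin."
result = verify_response(response, [validator_a, validator_b, validator_c])

for claim, accepted in result.items():
    print(f"{'ACCEPTED' if accepted else 'REJECTED'}: {claim}")
```

In this toy run the first claim is accepted because all three validators agree, while the second is rejected because only the overconfident validator supports it. A single model's confidence is not enough to pass the threshold.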

This concept feels similar to how decentralized technologies establish trust in other areas.

Instead of relying on a single authority, reliability emerges from agreement across many participants.

For AI systems, this kind of structure could become increasingly important.

AI is already influencing research, financial analysis, education, and everyday decision-making.

In these situations, the difference between information that sounds correct and information that can be verified becomes critical.

AI can already generate answers quickly and fluently.

The real challenge now is making sure those answers can be checked, validated and trusted before people rely on them.

Decentralized consensus offers one possible path toward that future.
