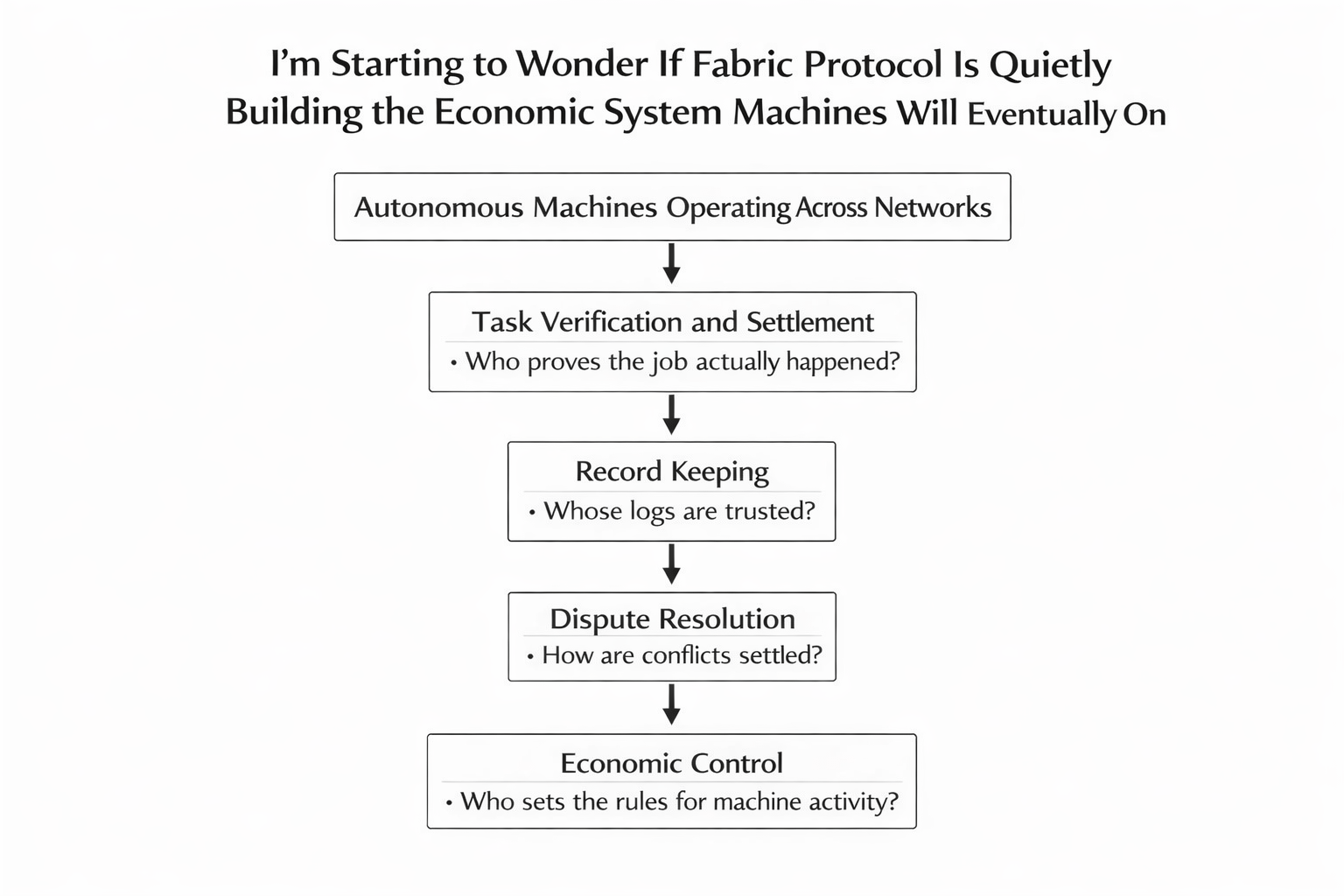

I’m Starting to Wonder If Fabric Protocol Is Quietly Building the Economic System Machines Will Eventually Depend On

The longer I spend studying projects connected to robotics, automation, and decentralized systems, the more I notice something interesting. Most conversations about the future of machines focus almost entirely on capability. People talk about faster robots, smarter AI models, and autonomous systems that can perform increasingly complex tasks.

But capability alone doesn’t create an economy.

What actually creates an economy is coordination.

And the more I look at Fabric Protocol and $ROBO, the more I feel like that is the part the project is really trying to solve. Not the robots themselves, but the invisible infrastructure that allows those machines to participate in a broader system of work, payments, trust, and accountability.

That’s a much less glamorous problem than building intelligent machines, but it might be the more important one.

Because once machines start operating outside isolated environments, everything becomes messy very quickly.

Robots don’t just need sensors and motors. They need identity. They need a way to prove what they did. They need a system where tasks can be recorded, verified, and settled economically. And most importantly, they need a framework that different organizations can trust without relying on a single central authority.

That is where Fabric starts to feel different from most projects in this space.

Instead of approaching robotics purely as a hardware or AI problem, the project appears to be thinking about the ecosystem around machines: the operators deploying them, the builders improving them, the networks coordinating them, and the economic incentives that keep those systems functioning as they scale.

The more I think about it, the more it feels similar to how the internet itself evolved.

In the early days of the internet, the most visible progress came from applications. Websites, search engines, social platforms, and online services captured most of the attention. But underneath all of that activity was a quieter layer of infrastructure — protocols that allowed computers to identify each other, exchange information, and coordinate across networks.

Without those protocols, none of the applications would have worked.

Fabric seems to be exploring whether something similar is needed for machine economies.

Imagine a near-future scenario where autonomous machines are operating across cities, warehouses, farms, ports, and energy systems. Some robots deliver goods. Others inspect infrastructure. Some maintain equipment, while others collect environmental data.

Each of those machines is completing tasks that create value.

But once you have machines operating across different organizations and different jurisdictions, a whole new set of questions begins to appear.

Who verifies that the task actually happened?

Who records the result?

Who releases payment?

Who resolves disputes when something goes wrong?

And perhaps most importantly, who controls the system where all of those interactions take place?

This is where coordination becomes more important than capability.

To understand why, imagine a logistics environment where several companies operate delivery robots in the same city. One company provides the machines. Another manages charging infrastructure. A third runs a delivery marketplace connecting customers to services. A fourth provides insurance coverage if accidents occur.

Now imagine a delivery is disputed.

The robot reports that it completed the job.

The customer insists the package never arrived.

The operator’s system shows a successful delivery timestamp.

The insurance provider demands evidence before covering a claim.

Without a shared system of verification, every participant relies on their own internal records. Each company trusts its own logs. Each system produces its own version of the story.

Disputes become messy. Resolution becomes slow. Trust becomes expensive.

Now imagine that every key event in that process — task creation, robot identity, location checkpoints, and task completion — is recorded inside a shared coordination layer that all participants can reference.

Instead of arguing over whose system is correct, the participants rely on a standardized record.

That doesn’t magically solve reality, but it does create a common source of evidence.

And evidence is what makes complex systems workable.
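To make the idea of a shared, standardized record more concrete, here is a minimal sketch of an append-only log in which each entry is hashed together with the one before it. This is not Fabric's actual design, which I have not seen specified at this level. The class `SharedEventLog`, the event kinds, and the field names are all assumptions made for illustration. A hash chain like this only makes after-the-fact edits detectable; it cannot prove that a reported event really happened in the physical world.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash: str, payload: dict) -> str:
    """Hash an event together with the hash of the entry before it."""
    body = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256((prev_hash + body).encode("utf-8")).hexdigest()


class SharedEventLog:
    """Append-only, hash-chained record of task events (illustrative only)."""

    def __init__(self) -> None:
        self.entries = []

    def append(self, kind: str, robot_id: str, task_id: str,
               timestamp: int, **details) -> str:
        payload = {
            "kind": kind,            # hypothetical event kinds, e.g. "checkpoint"
            "robot_id": robot_id,
            "task_id": task_id,
            "timestamp": timestamp,
            "details": details,
        }
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        digest = entry_hash(prev_hash, payload)
        self.entries.append(
            {"payload": payload, "prev_hash": prev_hash, "hash": digest}
        )
        return digest

    def verify(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash:
                return False
            if entry["hash"] != entry_hash(prev_hash, entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True

    def timeline(self, task_id: str) -> list:
        """Return the recorded events for one task, in the order they were logged."""
        return [e["payload"] for e in self.entries
                if e["payload"]["task_id"] == task_id]


log = SharedEventLog()
log.append("task_created", "robot-7", "task-42", 1000, destination="dock-3")
log.append("checkpoint", "robot-7", "task-42", 1060, zone="gate-b")
log.append("task_completed", "robot-7", "task-42", 1120)

intact = log.verify()  # chain is consistent
log.entries[1]["payload"]["details"]["zone"] = "gate-z"  # simulate a quiet edit
tampered_ok = log.verify()  # the edit no longer matches the stored hash
print(intact, tampered_ok)  # prints: True False
```

In the delivery dispute above, the operator, the customer's marketplace, and the insurer would all read the same `timeline("task-42")` rather than comparing private logs.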

Here is another example that shows why this matters.

Imagine a port operating hundreds of autonomous cargo vehicles that move containers between ships, cranes, and storage yards. Each vehicle completes thousands of movements every day. If one container goes missing or arrives at the wrong location, several companies become involved — the shipping company, the port operator, the logistics provider, and the insurance firm.

If every system records different information about where that container moved, disputes can take days to resolve. But if movement checkpoints and task confirmations are recorded in a shared verification layer, every participant can reference the same timeline of events.

Suddenly the argument changes from speculation to evidence.

Another example helps illustrate the idea even more clearly.

Picture a large automated warehouse running hundreds of robotic picking units overnight. Orders move through the system continuously. Robots retrieve items from storage racks and deliver them to packing stations.

By morning, the system reports that thousands of orders were completed successfully.

But a major client complains that an entire shipment is missing.

The warehouse dashboard shows everything was processed correctly. The robotics system claims the units completed their routes. The shipping department insists they packed what the system told them to pack.

Now someone has to figure out where the mistake actually happened.

Did a robot skip a rack?

Did the system misreport completion?

Did a packing station mishandle an item?

Without reliable verification records, the dispute becomes political instead of technical.

Whoever controls the logs controls the narrative.
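With per-stage records that everyone accepts, by contrast, the question of where the mistake happened starts to look like a lookup. The sketch below assumes each order should pass through a fixed sequence of stages and reports the first stage with no recorded event. The stage names and the `first_missing_stage` helper are hypothetical and chosen only to mirror the warehouse example.

```python
from typing import Iterable, Optional

# Hypothetical stages an order is expected to pass through, in order.
EXPECTED_STAGES = ("rack_pick", "route_complete", "station_handoff", "packed")


def first_missing_stage(events: Iterable[dict], order_id: str,
                        expected=EXPECTED_STAGES) -> Optional[str]:
    """Return the earliest expected stage with no record, or None if all exist."""
    recorded = {e["stage"] for e in events if e["order_id"] == order_id}
    for stage in expected:
        if stage not in recorded:
            return stage
    return None


events = [
    {"order_id": "A-17", "stage": "rack_pick"},
    {"order_id": "A-17", "stage": "route_complete"},
    {"order_id": "A-17", "stage": "packed"},  # no handoff was ever recorded
    {"order_id": "A-18", "stage": "rack_pick"},
    {"order_id": "A-18", "stage": "route_complete"},
    {"order_id": "A-18", "stage": "station_handoff"},
    {"order_id": "A-18", "stage": "packed"},
]

print(first_missing_stage(events, "A-17"))  # prints: station_handoff
print(first_missing_stage(events, "A-18"))  # prints: None
```

A gap in the record does not say who was at fault, but it narrows the argument to one step instead of the whole night's operations.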

Fabric appears to be asking whether there should be a neutral layer where those operational events can be recorded and verified across different participants.

That idea may not sound dramatic at first glance, but it touches a very real problem.

The more automation spreads into real-world industries, the more important accountability becomes.

Machines don’t just perform tasks — they generate claims about tasks. They generate data about actions taken, locations visited, and work completed. When those claims become economically meaningful, systems must exist to verify them.

And verification becomes the backbone of trust.

This is also where the economic design behind the network begins to matter.

The $ROBO token, as I understand it, isn’t meant to be just a speculative asset floating around markets. In theory, it acts more like an operational component of the system. Participants who want to operate within the network may need to stake tokens, signal reliability, and accept economic penalties if they submit dishonest information.

That kind of structure turns the token into collateral for honesty.

For example, imagine a drone fleet responsible for delivering urgent medical supplies between hospitals. Each flight generates telemetry data — location points, timestamps, and delivery confirmations. Independent validators in the network review that data.

If a validator falsely approves a delivery that never occurred, their staked tokens could be penalized.

Suddenly honesty is not just a moral expectation — it becomes an economic requirement.

Systems built this way attempt to align incentives with accurate reporting.
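As a rough illustration of what "collateral for honesty" could mean in practice, here is a small sketch of a stake registry that penalizes validators who approve a delivery that did not occur. Everything in it is an assumption: the `StakeRegistry` class, the minimum stake, the 20% penalty, and especially the `delivery_occurred` flag, which stands in for whatever process the real network would use to establish what actually happened. I am not claiming this is how $ROBO staking works.

```python
from dataclasses import dataclass, field


@dataclass
class StakeRegistry:
    min_stake: int = 1_000  # hypothetical minimum stake to act as a validator
    slash_bps: int = 2_000  # hypothetical penalty: 2,000 basis points = 20%
    stakes: dict = field(default_factory=dict)

    def deposit(self, validator: str, amount: int) -> None:
        if amount <= 0:
            raise ValueError("stake amount must be positive")
        self.stakes[validator] = self.stakes.get(validator, 0) + amount

    def is_eligible(self, validator: str) -> bool:
        return self.stakes.get(validator, 0) >= self.min_stake

    def settle(self, approvals: dict, delivery_occurred: bool) -> dict:
        """Slash every validator who approved a delivery that did not occur."""
        penalties = {}
        for validator, approved in approvals.items():
            if approved and not delivery_occurred:
                stake = self.stakes.get(validator, 0)
                penalty = stake * self.slash_bps // 10_000  # integer token units
                self.stakes[validator] = stake - penalty
                penalties[validator] = penalty
        return penalties


registry = StakeRegistry()
registry.deposit("validator-a", 1_000)
registry.deposit("validator-b", 1_000)

# validator-a approved a flight that never delivered; validator-b rejected it.
penalties = registry.settle(
    {"validator-a": True, "validator-b": False}, delivery_occurred=False
)
print(penalties)                            # prints: {'validator-a': 200}
print(registry.is_eligible("validator-a"))  # prints: False
```

The hard part is hidden inside `delivery_occurred`. Deciding what really happened is the verification problem itself, and the penalty only works if that decision is trustworthy.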

You can imagine a similar scenario in energy infrastructure. Autonomous inspection drones monitor pipelines or power lines across large regions. When a drone reports that an inspection was completed safely, regulators and maintenance teams need to trust that information. If those inspection events are verified through a neutral protocol, the data becomes much harder to falsify.

That’s the type of operational reliability that infrastructure systems aim to provide.

Of course, none of this removes the execution challenge.

Building coordination infrastructure is far harder than launching an application. Infrastructure has to function reliably under stress. It has to handle edge cases, disagreements, failures, and real-world complexity.

And unlike speculative projects that live entirely in digital markets, robotics systems operate in environments where mistakes can have physical consequences.

Factories, hospitals, transportation networks, and logistics systems do not tolerate unreliable software for long.

Which means Fabric’s success will not be measured by hype or narrative cycles.

It will be measured by something much quieter.

Real operators integrating the system into their workflows.

Real disputes being resolved through its verification layer.

Real participants depending on the network because it reduces friction in their operations.

Infrastructure earns its reputation through repeated usage, not through marketing.

The internet analogy is useful here as well.

Most people rarely think about the protocols that allow the internet to function. They don’t celebrate the Domain Name System or routing protocols. Those systems are invisible precisely because they work so reliably that nobody needs to think about them.

But remove them, and the entire network collapses.

If Fabric eventually becomes a place where machine identity, task verification, and economic settlement are handled consistently across different participants, the protocol could begin to occupy a similar role for machine economies.

Not visible.

But essential.

That outcome is still uncertain, of course.

The challenge is enormous. Coordinating machines across industries, organizations, and regulatory environments is far more complicated than coordinating digital transactions. Verification mechanisms must be robust. Incentives must remain balanced. Governance must avoid capture by narrow interests.

But the reason the project keeps my attention is that it is at least asking the right structural questions.

Instead of focusing only on what machines will be able to do, Fabric seems to be asking what kind of system those machines will need in order to function inside a broader economic environment.

That shift in perspective matters.

Because the future of automation may not be defined solely by how intelligent machines become, but by the frameworks that allow those machines to interact safely, transparently, and economically with the rest of the world.

And if that future arrives, the infrastructure supporting it may become just as important as the machines themselves.

That’s the reason I keep watching Fabric.

Not because it fits neatly into a trending narrative, but because it’s exploring a layer of the machine economy that most people are still ignoring.

And sometimes the most important systems are the ones that operate quietly underneath everything else.