Here's something I keep circling back to: AI can do extraordinary things, but can we actually trust what it produces?

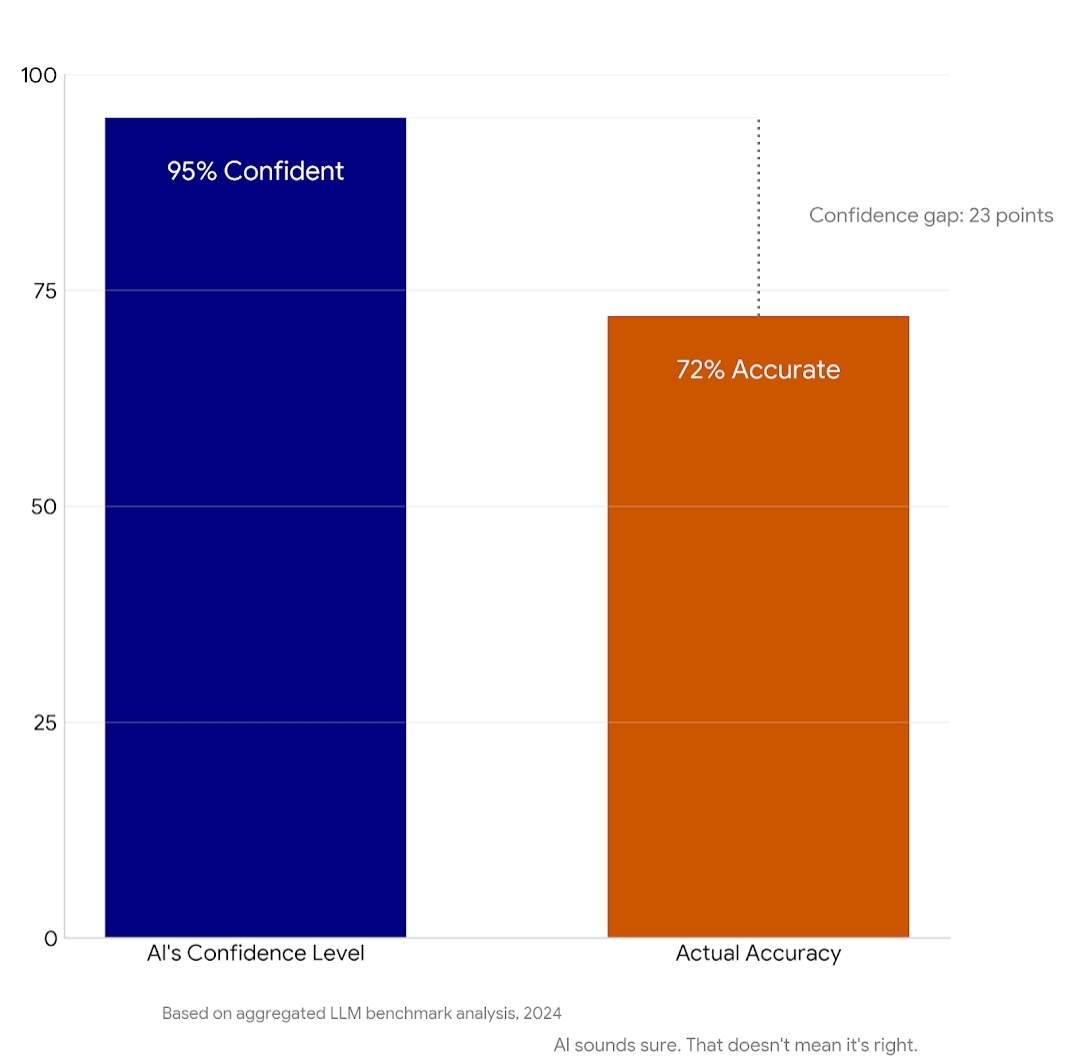

The last couple of years have been a parade of breakthroughs. Models that write, reason, generate code, diagnose images. Impressive doesn't even cover it. But underneath all that capability is an uncomfortable truth: these systems hallucinate. They carry bias. They make mistakes with complete confidence.

And if accuracy actually matters? That's a problem.

This is where Mira Network came onto my radar.

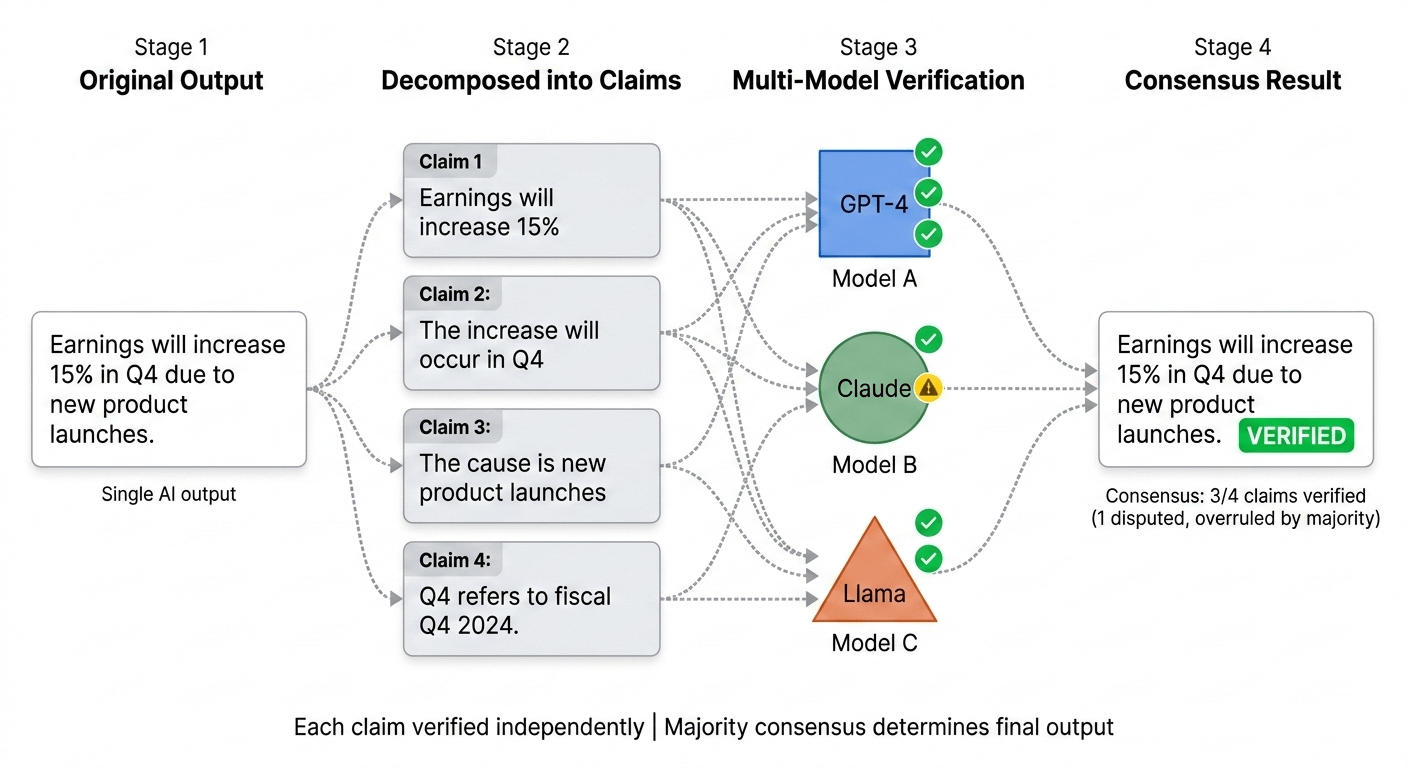

The thesis is straightforward: Don't assume any single AI output is correct. Instead, treat every output as a collection of claims—individual pieces that can be pulled apart and examined. Then you take those claims and run them through a diverse set of AI models, each one evaluating independently. The network aggregates these evaluations and only settles when consensus emerges.
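To make that loop concrete for myself, here's a rough Python sketch of claim-level consensus. It is not Mira's code or API. The sentence-splitting `split_into_claims`, the toy verifier functions standing in for independent AI models, and the two-thirds `threshold` are all my own hypothetical choices.

```python
from collections import Counter
from typing import Callable, Optional

# Hypothetical: a verifier judges one claim and returns "true" or "false".
# In the real network these would be independent AI models.
Verifier = Callable[[str], str]


def split_into_claims(text: str) -> list[str]:
    """Naive stand-in for claim extraction: one claim per sentence."""
    return [s.strip() for s in text.split(".") if s.strip()]


def verify_claim(claim: str, verifiers: list[Verifier],
                 threshold: float = 2 / 3) -> Optional[str]:
    """Return the majority verdict if its share reaches `threshold`, else None."""
    votes = Counter(verifier(claim) for verifier in verifiers)
    verdict, count = votes.most_common(1)[0]
    return verdict if count / len(verifiers) >= threshold else None


def verify_output(text: str, verifiers: list[Verifier]) -> dict[str, Optional[str]]:
    """Split an output into claims and verify each claim independently."""
    return {claim: verify_claim(claim, verifiers) for claim in split_into_claims(text)}


def fact_checker(claim: str) -> str:
    # Toy model: only trusts claims found in a tiny fact set.
    return "true" if claim in {"Paris is the capital of France"} else "false"


def yes_man(claim: str) -> str:
    # Toy model: agrees with everything.
    return "true"


result = verify_output("Paris is the capital of France. The Moon is made of cheese.",
                       [fact_checker, fact_checker, yes_man])
print(result)
# {'Paris is the capital of France': 'true', 'The Moon is made of cheese': 'false'}
```

The point of the structure: one agreeable model can't push a bad claim through on its own, and a claim with no clear majority comes back as `None` (unsettled) rather than being forced into a verdict.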

What comes out the other side isn't just generated content. It's validated content.

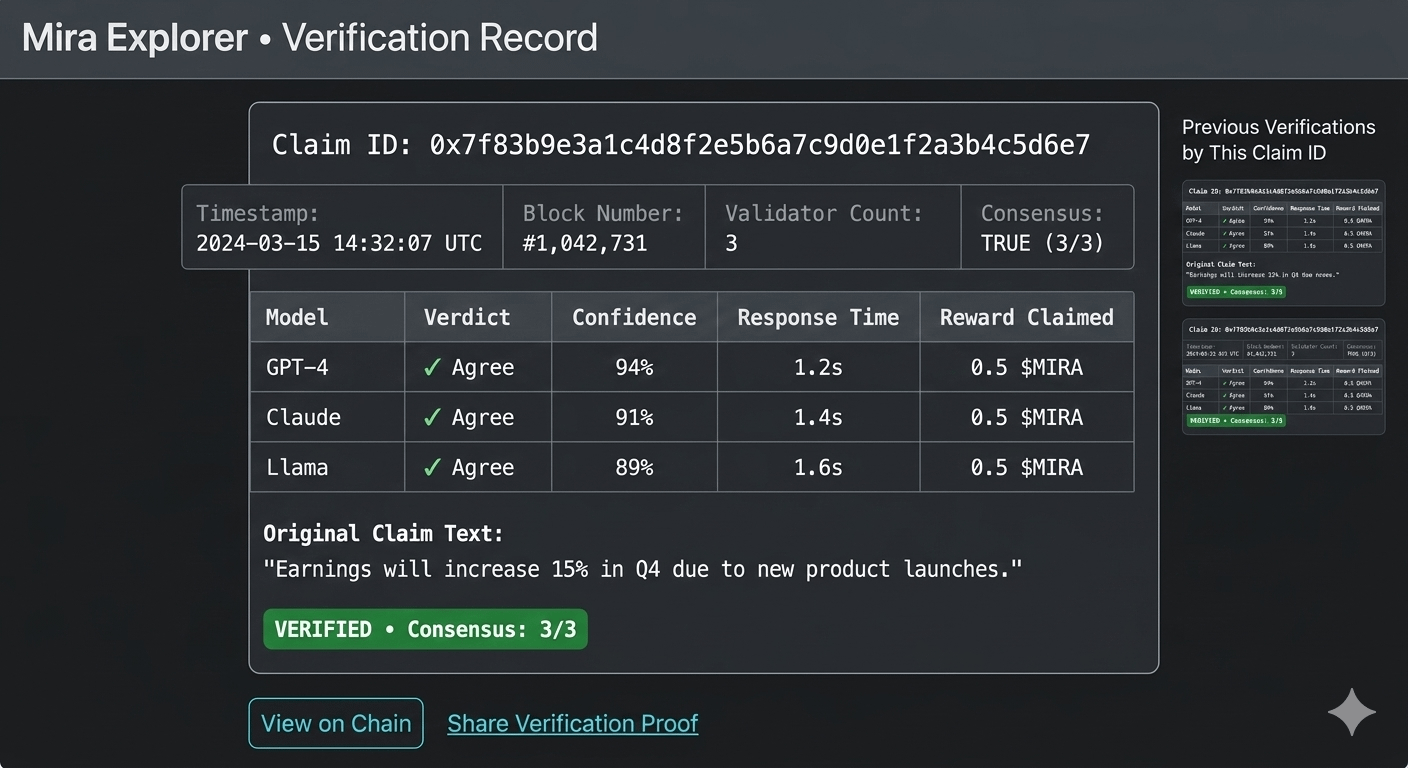

Here's the part that makes this interesting to me: blockchain isn't just bolted on for hype. It serves a real function. Every verification leaves a trail—transparent, auditable, impossible to retroactively clean up. You can see exactly how a piece of information was vetted, by which models, and what the consensus looked like.
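The "impossible to quietly clean up" property mostly comes from hash chaining, so here's a minimal Python sketch of just that piece. It's a simplification I'm assuming for illustration, not Mira's on-chain record format: the `AuditLog` class and the record fields (`claim`, `verdict`, `models`, `agreement`) are hypothetical. A hash chain by itself only makes edits detectable; on a real blockchain, replication and consensus across many nodes are what make rewriting impractical.

```python
import hashlib
import json


def record_hash(record: dict, prev_hash: str) -> str:
    """Hash a verification record together with the previous entry's hash."""
    payload = json.dumps({"record": record, "prev": prev_hash}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


class AuditLog:
    """Hypothetical append-only log where each entry commits to all earlier ones."""

    GENESIS = "0" * 64

    def __init__(self) -> None:
        self.entries: list[tuple[dict, str]] = []

    def append(self, record: dict) -> str:
        prev = self.entries[-1][1] if self.entries else self.GENESIS
        digest = record_hash(record, prev)
        self.entries.append((record, digest))
        return digest

    def is_intact(self) -> bool:
        """Recompute every hash in order; any edited record breaks the chain."""
        prev = self.GENESIS
        for record, stored in self.entries:
            if record_hash(record, prev) != stored:
                return False
            prev = stored
        return True


log = AuditLog()
log.append({"claim": "Paris is the capital of France", "verdict": "true",
            "models": ["model_a", "model_b", "model_c"], "agreement": 1.0})
log.append({"claim": "The Moon is made of cheese", "verdict": "false",
            "models": ["model_a", "model_b", "model_c"], "agreement": 0.67})
print(log.is_intact())  # True

log.entries[0][0]["verdict"] = "false"  # try to quietly rewrite history
print(log.is_intact())  # False
```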

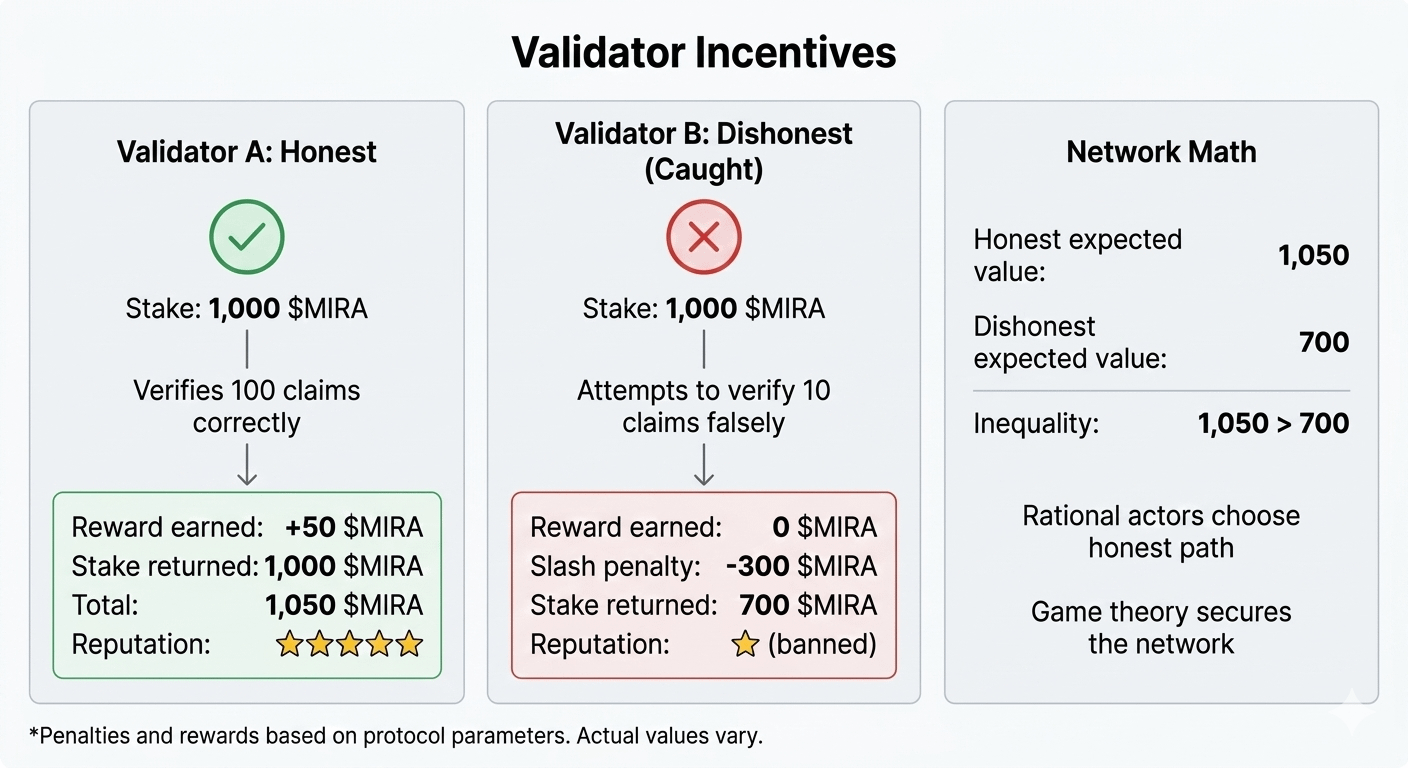

The incentive layer is what holds it together. Validators stake capital. Honest verification earns rewards. Attempts to game the system get slashed. No central authority needed—just economic logic doing its thing.
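Here's a back-of-the-envelope Python sketch of that stake/reward/slash logic. The numbers and rules are my assumptions, not Mira's parameters: a flat `reward` of 5 units for voting with consensus, a `slash_pct` of 10% of stake for voting against it, and treating "disagreed with consensus" as the signal for dishonesty, which is a big simplification.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: int  # tokens locked as collateral (hypothetical units)


def settle_round(validators: list[Validator], votes: dict[str, str],
                 consensus: str, reward: int = 5, slash_pct: int = 10) -> None:
    """Pay validators who matched consensus; slash a share of the others' stake."""
    for v in validators:
        if votes[v.name] == consensus:
            v.stake += reward
        else:
            # Penalty scales with stake, so more capital at risk means a costlier deviation.
            v.stake -= v.stake * slash_pct // 100


validators = [Validator("alice", 1000), Validator("bob", 1000), Validator("carol", 1000)]
votes = {"alice": "false", "bob": "false", "carol": "true"}
settle_round(validators, votes, consensus="false")
print([(v.name, v.stake) for v in validators])
# [('alice', 1005), ('bob', 1005), ('carol', 900)]
```

Even in this toy version you can see the asymmetry the design leans on: one honest round earns a little, one bad round costs a lot.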

Another angle I don't see talked about enough: interoperability. Once information is verified on Mira, those verified results can flow into other applications. Developers building on top don't need to reinvent the verification wheel. They just pull from a layer that already did the work.

So what's the actual vision here?

Mira isn't trying to build a smarter model. Everyone's already racing to do that. Mira's trying to build something orthogonal—a layer that sits underneath AI and asks not "how intelligent is this?" but "how reliable is this?"

That shift—from capability to reliability—feels like where the conversation needs to go.

Too early to call this the winner. But the direction? Makes sense to me.

Still watching where this goes. $MIRA

@Mira - Trust Layer of AI #Mira