Over the past year I’ve spent a lot of time thinking about a quiet problem inside the AI industry that most people in crypto rarely talk about seriously: verification. Not model performance, not GPU shortages, not the race between tech giants. Verification. The simple question of whether an AI system’s output can actually be trusted.

This is the context in which I started paying closer attention to @Mira - Trust Layer of AI and the idea behind $MIRA.

Most people approach AI infrastructure projects expecting another model marketplace or compute network. That assumption misses what Mira is trying to address. The real weakness in modern AI systems is not that they cannot generate answers. The problem is that they generate answers too easily, with no reliable way to prove whether those answers are correct. Hallucinations, subtle bias, and logical errors are not rare edge cases. They are structural features of how current models operate.

From a market perspective, this creates a strange contradiction. AI is being pushed into increasingly sensitive environments such as finance, automation, and decision systems, yet the outputs themselves remain probabilistic guesses. In many cases the industry simply accepts this trade-off.

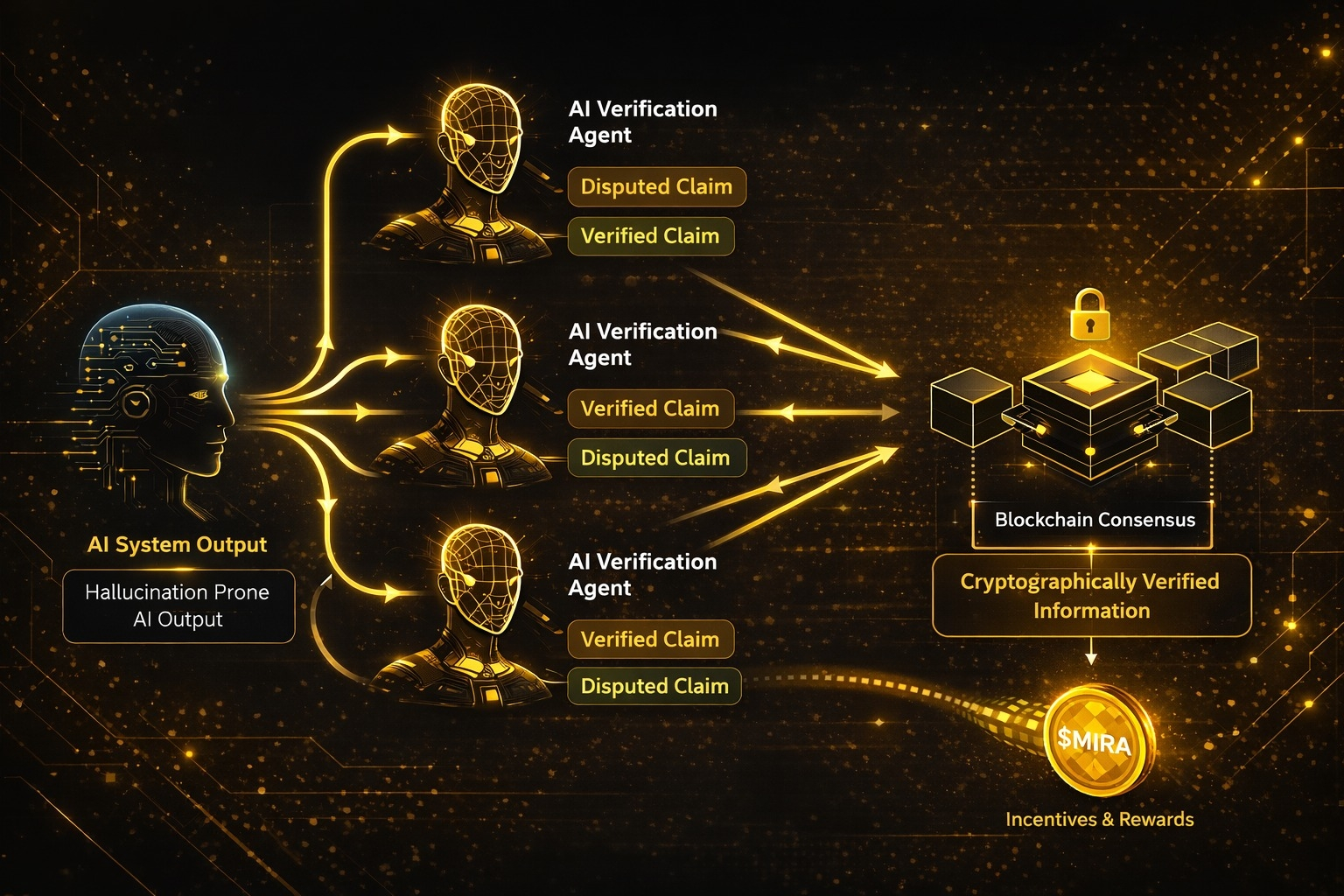

What Mira attempts to do is introduce a verification layer between AI output and real-world usage. Instead of treating an AI response as a single authoritative answer, the system breaks it into smaller verifiable claims. These claims are then distributed across multiple independent AI models that attempt to validate or challenge them. The result is a form of consensus around the reliability of information.

If that sounds familiar, it should. Conceptually, it borrows from the way blockchains approach truth. A single node does not decide the state of the system. Consensus emerges through distributed validation.

When I look at Mira through this lens, it feels less like an AI product and more like a coordination mechanism.

The architecture reflects that idea. Rather than focusing on training larger models, the network focuses on orchestrating interactions between models. When an AI system produces an output, the protocol decomposes that output into atomic statements. These statements are then routed through a network of verification agents. Each agent independently evaluates the claim using its own reasoning process.
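To make the decompose-and-route step concrete, here is a minimal Python sketch. Everything in it is hypothetical: `Claim`, `decompose`, `route`, and the validator names are my own illustrative placeholders, not Mira's API. The naive sentence splitter stands in for whatever model-based claim extraction a real network would use, and routing uses rendezvous-style hashing so each claim lands on a reproducible subset of `k` validators.

```python
import hashlib
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    claim_id: int
    text: str


def decompose(output: str) -> list[Claim]:
    """Split an AI output into 'atomic' claims.

    Hypothetical stand-in: a naive sentence splitter. A real system would
    more likely use a model to extract self-contained factual statements.
    """
    sentences = re.split(r"(?<=[.!?])\s+", output.strip())
    return [Claim(i, s) for i, s in enumerate(sentences) if s]


def route(claim: Claim, validators: list[str], k: int) -> list[str]:
    """Pick k distinct validators for a claim, deterministically.

    Each validator is scored by a hash of (claim text, validator name) and
    the k lowest scores win (rendezvous hashing), so every claim gets a
    pseudo-random but reproducible subset of the validator set.
    """
    def score(validator: str) -> str:
        return hashlib.sha256(f"{claim.text}|{validator}".encode()).hexdigest()

    return sorted(validators, key=score)[:k]


if __name__ == "__main__":
    output = "Paris is the capital of France. The Eiffel Tower was completed in 1889."
    validators = ["model-a", "model-b", "model-c", "model-d", "model-e"]
    for claim in decompose(output):
        print(claim.claim_id, claim.text, route(claim, validators, k=3))
```

Hashing rather than random sampling means any participant can recompute which validators were supposed to see a given claim, which fits the idea of verification as an auditable process.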

The important detail here is that disagreement is not treated as failure. Disagreement becomes data. When multiple agents produce different evaluations, the protocol weighs those responses and produces a consensus confidence score. That score becomes a cryptographically verifiable record on-chain.
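A minimal sketch of how "disagreement becomes data" could be scored, assuming each validator returns a yes/no verdict plus a self-reported confidence, and that the protocol weights validators by something like stake or reputation. `Verdict`, `consensus_score`, `record_digest`, and the weights are hypothetical; the post does not specify Mira's actual scoring formula or on-chain record format. The digest function only shows how a result could be reduced to a compact, tamper-evident value; actually writing it to a chain is out of scope.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Verdict:
    validator: str
    supports: bool      # does this validator judge the claim true?
    confidence: float   # validator's self-reported confidence in [0, 1]


def consensus_score(verdicts: list[Verdict], weights: dict[str, float]) -> float:
    """Weighted share of support for a claim, in [0, 1].

    Each vote contributes weight * confidence to either 'support' or
    'reject'. A split vote yields a score near 0.5 instead of being
    discarded, so disagreement shows up in the result.
    """
    support = reject = 0.0
    for v in verdicts:
        w = weights.get(v.validator, 0.0) * v.confidence
        if v.supports:
            support += w
        else:
            reject += w
    total = support + reject
    return support / total if total > 0 else 0.5  # no signal: undecided


def record_digest(claim: str, verdicts: list[Verdict], score: float) -> str:
    """SHA-256 over a canonical JSON encoding of the verification result.

    Verdicts are sorted and keys are ordered so the same result always
    hashes to the same value, regardless of the order votes arrived in.
    """
    payload = {
        "claim": claim,
        "score": round(score, 6),
        "verdicts": sorted([v.validator, v.supports, v.confidence] for v in verdicts),
    }
    blob = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(blob.encode()).hexdigest()
```

For example, with verdicts `("model-a", True, 0.9)`, `("model-b", True, 0.8)`, `("model-c", False, 0.6)` and weights `1.0`, `1.0`, `2.0`, the score is `(0.9 + 0.8) / (0.9 + 0.8 + 1.2)`, roughly 0.586: a lean toward "true", but with visible dissent from a heavily weighted validator.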

In simple terms, Mira attempts to turn AI answers into verifiable information rather than probabilistic text.

What makes this interesting to me from a crypto perspective is how incentives enter the picture. Verification is not free. Models require compute, and participants need a reason to provide that compute. This is where the role of $MIRA becomes relevant.

The token functions as the economic layer coordinating the verification process. Participants who contribute computational verification resources are rewarded, while inaccurate or malicious validation attempts can be penalized. In practice this creates a market for truth verification.
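One common way to wire rewards and penalties is a generic stake-and-slash pattern, sketched below in Python. To be clear, this is my own illustration, not Mira's documented token mechanics: `Validator`, `settle`, the 0.5 threshold, `slash_rate`, and `strong_margin` are all hypothetical. The sketch slashes dissenters only when consensus is strong, because penalizing every minority vote would contradict the idea that honest disagreement is useful data.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: float  # tokens locked as collateral (hypothetical)


def settle(
    validators: dict[str, Validator],
    votes: dict[str, bool],
    score: float,
    reward_pool: float,
    slash_rate: float = 0.1,
    strong_margin: float = 0.3,
) -> dict[str, float]:
    """Update stakes after one claim is settled; return each balance change.

    - Consensus is 'true' if the consensus score is at least 0.5.
    - Validators who voted with consensus split reward_pool pro rata by stake.
    - Dissenters lose slash_rate of their stake, but only when consensus is
      strong (|score - 0.5| >= strong_margin), so a contested claim does not
      punish honest disagreement.
    """
    consensus = score >= 0.5
    strong = abs(score - 0.5) >= strong_margin
    agreeing_stake = sum(
        validators[n].stake for n, vote in votes.items() if vote == consensus
    )
    deltas: dict[str, float] = {}
    for name, vote in votes.items():
        v = validators[name]
        if vote == consensus:
            delta = reward_pool * v.stake / agreeing_stake if agreeing_stake > 0 else 0.0
        else:
            delta = -slash_rate * v.stake if strong else 0.0
        v.stake += delta
        deltas[name] = delta
    return deltas
```

With stakes of 100, 300, and 100, votes of true, true, false, a score of 0.9, and a reward pool of 40, the two agreeing validators receive 10 and 30 while the dissenter loses 10. At a score of 0.6 the dissenter keeps its full stake. Whether rewards like these cover the compute cost of verification is exactly the open question the economics depend on.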

Whether that market becomes meaningful is still an open question.

From a user standpoint, interaction with a system like Mira is surprisingly indirect. Most people will never consciously think about verification layers. Developers integrating AI into applications simply want a confidence measure attached to model outputs. Traders and analysts might care about reliability scores when automated agents produce research or signals.

But the infrastructure behind that simple interface is where the complexity lives.

There are also uncomfortable trade-offs here that deserve attention.

First, verification adds latency. If every AI output must pass through multiple independent validators, response times inevitably increase. For real-time systems this could become a serious constraint.
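How much latency verification adds depends heavily on how validators are orchestrated. The simplified Python model below is a sketch, not a measurement: it ignores network overhead and the consensus computation itself, and the function name `added_latency` and the per-validator timings are hypothetical. It compares three cases: running validators one after another, running them all in parallel and waiting for the slowest, and running them in parallel but stopping once a quorum has answered.

```python
from typing import Optional


def added_latency(
    validator_ms: list[float],
    parallel: bool = True,
    quorum: Optional[int] = None,
) -> float:
    """Extra latency (ms) that verification adds to one claim.

    - Sequential: validators run one after another, so latencies add up.
    - Parallel: all run at once, so we wait for the slowest one.
    - Parallel with a quorum: stop once `quorum` validators have answered,
      i.e. wait for the quorum-th fastest response.
    The quorum is ignored in the sequential case.
    """
    if not validator_ms:
        return 0.0
    if not parallel:
        return float(sum(validator_ms))
    ordered = sorted(validator_ms)
    k = len(ordered) if quorum is None else quorum
    if not 1 <= k <= len(ordered):
        raise ValueError("quorum must be between 1 and the number of validators")
    return float(ordered[k - 1])


if __name__ == "__main__":
    # Hypothetical response times for five validators, one of them slow.
    ms = [120, 340, 95, 2100, 180]
    print(added_latency(ms, parallel=False))  # 2835.0
    print(added_latency(ms))                  # 2100.0
    print(added_latency(ms, quorum=3))        # 180.0
```

The model makes the trade-off explicit: parallel fan-out bounds the cost by the slowest validator, and a quorum cuts off the slow tail, but a smaller quorum also means fewer independent opinions behind each consensus score.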

Second, verification itself is not perfectly objective. If the validating agents are also AI models, they inherit many of the same biases and reasoning limitations as the original system. The network may reduce hallucinations, but it cannot eliminate them entirely.

Third, the economics of verification are still experimental. The value of $MIRA ultimately depends on sustained demand for trustworthy AI outputs. If developers decide that probabilistic answers are “good enough,” the incentive structure could weaken.

That said, the timing of this idea feels deliberate.

The AI industry is entering a phase where reliability matters more than novelty. Early excitement focused on what models could produce. The next stage will likely focus on whether those outputs can be trusted in high-stakes environments. Finance, automated trading systems, and enterprise decision tools will require stronger guarantees.

This is where protocols like Mira start to make sense.

As a market observer, I tend to watch two signals when evaluating infrastructure projects like this. The first is developer integration: if applications begin embedding verification scores into their systems, that suggests real utility. The second is on-chain activity tied to verification requests. A healthy protocol should show growing demand for validation rather than purely speculative token trading.

Price action alone rarely reveals whether an infrastructure protocol is working. Usage patterns do.

In the broader cycle of crypto innovation, Mira sits in an interesting intersection between AI and decentralized coordination. We have seen cycles driven by compute networks, data marketplaces, and model hosting platforms. Verification networks represent a slightly different layer of the stack.

They assume that AI models already exist and will continue to exist. The question is not how to build them, but how to make their outputs dependable enough for autonomous systems.

I don’t think most of the market has fully internalized that distinction yet.

Right now attention is still focused on building bigger models and faster inference pipelines. Verification feels like a secondary concern. But historically in technology, the layers that ensure reliability often become the most important ones once systems mature.

The internet did not scale because information could be generated. It scaled because protocols ensured that information could move reliably between machines.

Whether @mira_network becomes that kind of layer for AI remains uncertain. Many technical and economic assumptions still need to prove themselves under real usage.

But the underlying question the project raises is difficult to ignore.

If machines are increasingly responsible for producing knowledge, making decisions, and interacting with financial systems, then eventually someone has to answer a simple question: who verifies the machines?

That question alone might end up being more important than the models themselves.