What makes Mira interesting is that it does not start from the usual AI promise that the next model will finally be smart enough to trust on its own. It starts from a less glamorous, more durable insight: intelligence and reliability are not the same thing. Mira describes itself as a verification layer for autonomous AI, a system meant to check outputs and actions through “collective intelligence” rather than ask users to accept the judgment of one model or one company. That shift matters, because it treats trust as an infrastructure problem, not a branding exercise.

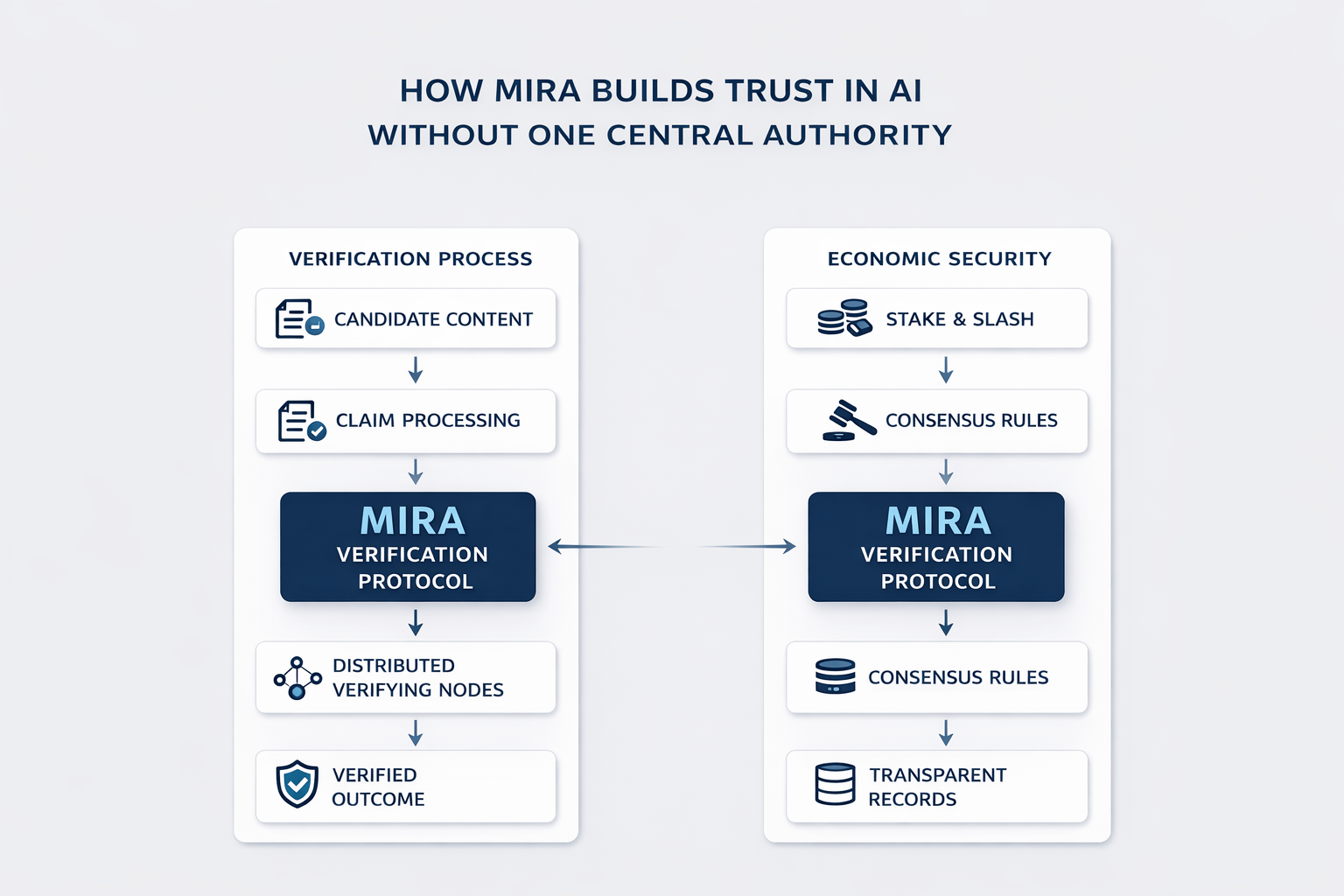

The core of Mira’s argument is laid out plainly in its whitepaper. A single model can’t be great at everything: the text argues that no matter how advanced it is, it won’t be able to reduce both hallucinations and bias equally well in every area. And even if you use a centrally controlled group of models, those systems still reflect the judgments and assumptions of the people who chose them. So the real limitation is not only technical. It is institutional. If one authority decides what counts as truth, the system may look consistent while still being narrow, brittle, or quietly skewed. Mira’s answer is to distribute verification itself.

That sounds abstract until you get to the mechanism. Mira explains that the system takes a draft answer and splits it into separate claims that can each be checked on their own. That way, several verifier models evaluate the exact same claim in the exact same context, instead of wandering off in different directions while reacting to a long response. Customers decide which domain matters to them and how much consensus they want before they trust the result. From there, the network sends the claims out, collects the responses, and creates a cryptographic certificate that records what happened, including which models lined up on each claim. The whole idea is to make trust less fuzzy and more provable: the output is not just an answer but an auditable trail.
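To make the mechanism concrete, here is a minimal sketch of the flow, not Mira’s actual implementation. Everything named in it is an assumption for illustration: the `verify_claims` function, the toy verifiers, and the use of a plain SHA-256 digest as a stand-in for the cryptographic certificate. It assumes the draft answer has already been split into claims.

```python
import hashlib
import json
from typing import Callable, Dict, List

# Hypothetical verifier type: takes one claim, returns True (valid) or False.
Verifier = Callable[[str], bool]


def verify_claims(claims: List[str],
                  verifiers: Dict[str, Verifier],
                  threshold: float) -> dict:
    """Ask every verifier about every claim and accept a claim when the
    share of approving verifiers meets the customer-chosen threshold."""
    results = []
    for claim in claims:
        # Every verifier sees the exact same claim text.
        votes = {name: bool(check(claim)) for name, check in verifiers.items()}
        agreement = sum(votes.values()) / len(votes)
        results.append({
            "claim": claim,
            "votes": votes,  # records which models lined up on this claim
            "agreement": agreement,
            "accepted": agreement >= threshold,
        })
    record = {"threshold": threshold, "results": results}
    # Assumption: a SHA-256 digest of the record stands in for the certificate.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["digest"] = hashlib.sha256(payload).hexdigest()
    return record


# Toy verifiers (hypothetical); real ones would be independent models.
verifiers = {
    "model_a": lambda c: "Paris" in c,
    "model_b": lambda c: "Paris" in c,
    "model_c": lambda c: True,  # a careless verifier that approves anything
}
claims = [
    "The capital of France is Paris.",
    "The capital of France is Lyon.",
]
certificate = verify_claims(claims, verifiers, threshold=2 / 3)
for item in certificate["results"]:
    print(item["claim"], "accepted" if item["accepted"] else "rejected")
```

In this toy run the first claim passes with all three verifiers agreeing. The second fails because only the careless verifier approves it, and the per-claim votes show exactly who said what.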

What gives that trail weight is the economic layer beneath it. Mira’s whitepaper says the network combines Proof-of-Work and Proof-of-Stake because verification tasks can otherwise be gamed by cheap guessing. Once responses are standardized into constrained choices, a random answer has a real chance of being right (one in two for a yes/no claim), so guessing becomes statistically tempting. Mira’s fix is staking and slashing: node operators put value at risk, and persistent deviation from consensus can be penalized. That does not guarantee truth in some philosophical sense, but it does make careless or manipulative behavior more expensive, which is often what real systems need.
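The arithmetic behind that worry is easy to sketch. The snippet below is an illustration under stated assumptions, not Mira’s actual incentive formula: the function names, the reward and slash amounts, and the simplification of charging a penalty on every wrong answer (rather than on persistent deviation) are all hypothetical.

```python
def guess_success_probability(options: int, tasks: int) -> float:
    """Chance that uniform random guessing is right on every one of
    `tasks` independent claims, each with `options` possible answers."""
    return (1.0 / options) ** tasks


def expected_guess_payoff(options: int, reward: float, slash: float) -> float:
    """Expected payoff of one random guess: earn `reward` when right,
    lose `slash` when wrong (simplified per-task penalty, an assumption)."""
    p = 1.0 / options
    return p * reward - (1.0 - p) * slash


# One yes/no claim: a coin flip is right half the time.
print(guess_success_probability(options=2, tasks=1))   # 0.5
# Ten yes/no claims in a row: luck runs out quickly.
print(guess_success_probability(options=2, tasks=10))  # 0.0009765625

# Without slashing, guessing still has a positive expected payoff.
print(expected_guess_payoff(options=2, reward=1.0, slash=0.0))  # 0.5
# With a large enough slash, guessing loses money on average.
print(expected_guess_payoff(options=2, reward=1.0, slash=2.0))  # -0.5
```

The point is that a penalty does not need to catch every bad answer. It only needs to push the expected value of guessing below zero.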

There is also a practical difference between saying a system is trustworthy and making its activity legible. Mira’s public explorer shows live verification activity on Base, including total verifications, success rate, transaction hashes, latest block data, and fees. That may sound like a blockchain detail, but it changes the social texture of trust. A centralized AI company usually asks users to rely on policy pages, benchmarks, and reputation. Mira is trying to replace some of that with inspectable records. It is an attempt to move confidence away from private assurances and toward public evidence.

The strongest part of the story is that Mira is not presenting verification only as a theoretical ideal. In one published case study, it says Learnrite used its Verified Generation API to improve educational question accuracy from 75 percent to 96 percent. It has also built Klok, a chat application on top of the same verification infrastructure. Those examples do not prove the model for every use case, and they should be read as company-published evidence, not neutral validation. Still, they show what Mira is actually trying to do: make reliability operational, not aspirational.

The deeper point is that Mira does not really remove authority. It redistributes it. Some judgment still lives in protocol design, staking rules, consensus thresholds, and governance. Its MiCA filing says that token holders who stake their tokens can take part in governance, with each token counting as one vote. It also says the system is designed to become more decentralized over time through a process of progressive decentralization. So the question is not whether authority disappears. It does not. The question is whether authority becomes visible, contestable, and harder for any single actor to monopolize. On that front, Mira has a serious answer. It builds trust not by claiming perfect AI, but by refusing to let one center define reliability for everyone else.
