The Moment I Started Doubting the AI Answers I Trusted

A few months ago, I was using an AI tool to research a financial regulatory update. The response came back confident, clean, and well structured. I was about to publish it when a colleague found three factual errors in two paragraphs.

That experience stuck with me. Not because the AI got something wrong; that much is expected. It stuck because there was no mechanism to catch the mistake. No audit trail. No independent check. Just one model, one output, and my blind trust.

This is the core problem Mira was built to solve. The more I studied its underlying tech, the more I realized that this is not just an interesting crypto project. It is an infrastructure play aimed at something AI has not yet properly solved: verifiable truth, without any central authority.

Why Is Being Trustless So Important Here?

Most people hear "trustless" and assume it is typical crypto jargon. In Mira's context, though, it has a precise and important meaning.

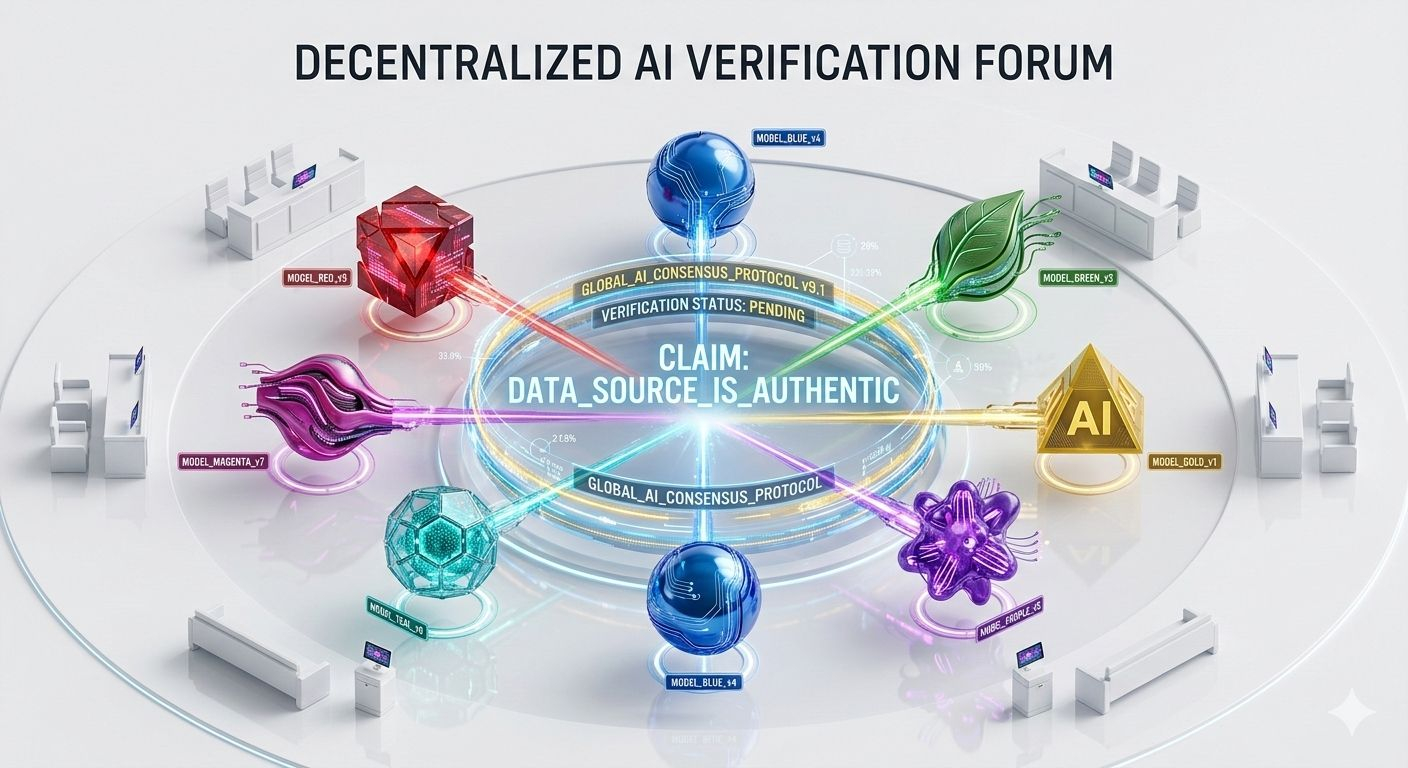

No single central authority or hidden model makes the final decision. Instead, truth is decided collectively through a distributed network of diverse models.

Compare this with how today's major AI companies operate. OpenAI decides what GPT outputs. Google controls Gemini's guardrails. Anthropic shapes Claude's responses. Each company is a single point of authority, and therefore a potential single point of failure, bias, or manipulation. The path to trustworthy AI runs through a verification system that does not depend on any one curator and instead distributes trust across the whole network.

This is Mira's philosophical foundation. And it is not just wishful thinking; it has been properly engineered.

How the Verification Machine Actually Works

The protocol deconstructs a complex AI response into small, checkable claims. These claims are then distributed across a network of independent verifier nodes, each running a different AI model. The nodes evaluate the claims independently, and the network uses a consensus mechanism (similar to blockchain validation) to decide whether each claim is accurate.

Think of it as a multi-expert panel review running at machine speed. No single reviewer has final authority. Every claim is independently interrogated by models with different architectures, training data, and perspectives. Agreement is not assumed; it is required.

If a supermajority of models agrees that a claim is valid, Mira approves it. If the models disagree, the claim is flagged or rejected. The concept is simple, but faking it at scale is next to impossible.
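The approve / flag / reject rule described above can be sketched in a few lines of Python. This is only an illustration, not Mira's actual implementation: the two-thirds threshold, the function names `decide` and `verify_response`, and the choice to treat a supermajority against a claim as "rejected" and everything in between as "flagged" are all assumptions made for this example.

```python
from collections import Counter
from fractions import Fraction

# Assumption: the article only says "supermajority"; 2/3 is a placeholder value.
SUPERMAJORITY = Fraction(2, 3)


def decide(votes, threshold=SUPERMAJORITY):
    """Return 'approved', 'rejected', or 'flagged' for a single claim.

    votes maps a (hypothetical) verifier id to True if that verifier
    judged the claim valid, or False if it judged the claim invalid.
    """
    if not votes:
        return "flagged"  # nothing to base a decision on
    tally = Counter(votes.values())
    total = len(votes)
    if Fraction(tally[True], total) >= threshold:
        return "approved"
    if Fraction(tally[False], total) >= threshold:
        return "rejected"
    return "flagged"  # verifiers are split, so no supermajority either way


def verify_response(claim_votes):
    """Apply decide() to every claim extracted from one AI response."""
    return {claim: decide(votes) for claim, votes in claim_votes.items()}


if __name__ == "__main__":
    example = {
        "Claim A": {"gpt": True, "deepseek": True, "llama": True},
        "Claim B": {"gpt": True, "deepseek": False, "llama": False},
        "Claim C": {"gpt": True, "deepseek": False},
    }
    for claim, verdict in verify_response(example).items():
        print(claim, "->", verdict)
```

Using `Fraction` instead of floats keeps the threshold comparison exact, so a 2-of-3 vote meets a two-thirds threshold without rounding surprises.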

The Ensemble Principle: Model Diversity Is the Real Security Layer

Here is something most people miss when they look at Mira. The real power does not come from any single verifier node being smart. It comes from the diversity of the ensemble.

Mira integrates more than 110 AI models, which effectively reduces single points of failure and hallucinations.

When GPT, DeepSeek, and Llama independently reach the same conclusion on a factual claim, their convergence carries meaning that no single model's confidence score can provide. Through decentralized auditing and consensus mechanisms, Mira's diverse system filters out hallucinations and balances individual biases.

Real-world numbers back this up. In production environments, when outputs were filtered through Mira's consensus process, factual accuracy rose from 70% to 96%. That is not a small improvement. It is the difference between a tool worth experimenting with and infrastructure you can base critical decisions on.
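To build some intuition for why agreement among diverse models is worth more than one model's confidence, here is a small back-of-the-envelope calculation. It assumes an idealized setup that is not taken from Mira's documentation: every verifier is wrong on a given claim with the same probability, verifiers err independently of each other, and a simple majority decides. Real models share training data and failure modes, so this overstates the benefit, and it is not meant to reproduce the 70% to 96% figure above. The function name `majority_error` is made up for this sketch.

```python
from math import comb


def majority_error(p_wrong, n):
    """Probability that more than half of n verifiers are wrong.

    Assumes each verifier is wrong independently with probability p_wrong
    (an idealization; correlated errors would make the result worse).
    """
    need = n // 2 + 1  # smallest number of wrong votes that forms a majority
    return sum(
        comb(n, k) * p_wrong**k * (1 - p_wrong) ** (n - k)
        for k in range(need, n + 1)
    )


if __name__ == "__main__":
    # 0.30 mirrors a single model that is right about 70% of the time.
    for n in (1, 3, 5, 11):
        print(f"{n:>2} verifiers: majority wrong with p = {majority_error(0.30, n):.4f}")
```

Under these assumptions the chance of a wrong majority shrinks as more independent verifiers are added, which is the statistical core of the ensemble argument. The more the verifiers' errors are correlated, the weaker this effect becomes, which is why model diversity matters.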

Proof of Verification: The Mechanism That Secures the System

A decentralized verification network only works if the verifiers themselves cannot game it (that is, cheat). So how does Mira ensure that nodes are actually doing the work rather than guessing?

Once the specialized models have verified the claims, a hybrid consensus mechanism kicks in that combines Proof of Stake and Proof of Work; this is called Proof of Verification. Verifiers receive economic incentives so that they actually perform the computation/inference. The verification result is then sent to the owner as a cryptographic certificate.

Staking Requirement: Every node must stake tokens, which serve as its economic commitment.

Penalties & Rewards: Nodes that submit fake results (for example, returning random output without doing the compute) are penalized. Nodes that consistently align with consensus receive performance-based compensation.
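The stake, penalty, and reward loop can be sketched roughly as follows. Everything concrete here is a hypothetical placeholder chosen for illustration: the `Node` class, the `settle` function, the 10% slash fraction, and the flat per-claim reward are not Mira's actual parameters. A real protocol would presumably penalize a pattern of deviation rather than a single disagreement; this sketch settles one claim at a time to keep the idea visible.

```python
from dataclasses import dataclass

# Illustrative assumptions only; not Mira's real parameters.
SLASH_FRACTION = 0.10    # share of stake lost when a vote deviates from consensus
REWARD_PER_CLAIM = 1.0   # tokens earned when a vote matches consensus


@dataclass
class Node:
    node_id: str
    stake: float
    rewards: float = 0.0


def settle(nodes, votes, consensus):
    """Reward nodes whose vote matched the consensus and slash the others.

    votes maps node_id -> bool; consensus is the network's final verdict.
    """
    for node in nodes:
        if votes[node.node_id] == consensus:
            node.rewards += REWARD_PER_CLAIM
        else:
            node.stake -= node.stake * SLASH_FRACTION


if __name__ == "__main__":
    honest = Node("honest", stake=100.0)
    guesser = Node("guesser", stake=100.0)
    settle([honest, guesser], {"honest": True, "guesser": False}, consensus=True)
    print(honest)
    print(guesser)
```

The point of the design is that a node answering at random keeps losing stake whenever it lands on the wrong side of consensus, so over many claims guessing costs more than actually running the inference.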

This design elegance is what sets Mira apart from a simple multi-model API call. Economic incentives enforce honesty at the protocol level, rather than relying on trust or goodwill alone.

What Has Been Built on It So Far

Theory is one thing; production deployment is a different game altogether. Through a partnership with Spheron's GPU network (8,200+ GPUs and 44,000+ community nodes), Mira has achieved massive scale. As of March 2025, Mira was supporting more than 1 million inferences a day and 2 million users. It has cut the error rate on complex AI tasks from 30% to 5%.

Quite a few applications are already running on Mira's verification layer:

Klok: A multi-model AI assistant in which GPT-4o, DeepSeek, and Llama act as trustless verification nodes that cross-check every response.

Learnrite: Generates verified educational content at scale.

GigabrainGG: Validates AI-powered trading signals in a high-stakes financial environment, where a single hallucinated signal can mean real money lost.

Across its integrated applications, Mira is verifying 3 billion tokens a day and supporting more than 4.5 million users. At such an early stage, that scale is hard to ignore.

The Honest Risks

No infrastructure analysis is complete without risk factors. Mira's architecture is solid, but some execution variables are still open:

Token Unlock Pressure: Only 19.12% of the supply is in circulation at launch. Mid-term unlocks for core contributors and early investors could bring significant volatility and selling pressure.

Competitive Landscape: Every major AI company has a commercial incentive to build its own verification layer rather than depend on third-party protocols. Whether the market chooses decentralized verification or internal solutions remains to be seen.

The Strategic Lens Most People Are Missing

To my mind, the most non-obvious point is this: Mira is not competing with AI models. It is positioning itself beneath all of them as a trust layer, an infrastructure that makes autonomous AI systems safe to deploy in medicine, law, and finance without human supervision.

Their vision is not limited to verification. They want to create a new class of foundation models in which verification and generation are intrinsically combined.

If this vision succeeds, Mira will not be just a tool that sits alongside AI. It will become the standard against which AI outputs are measured.

The market sees this potential too. Mira has raised $9 million in seed funding from BITKRAFT Ventures, Framework Ventures, Accel, Mechanism Capital, and Polygon's founder, which signals solid market recognition for its blockchain-plus-AI model.

A Question Worth Thinking About

We have spent years debating whether AI can be trusted. Mira is building infrastructure that can answer that question, not by trusting any one company, but through decentralized consensus that no single gatekeeper controls.

This is either the biggest infrastructure bet in AI right now, or early-stage idealism colliding with a market that is not ready yet. Perhaps both.

So here is what I want to ask you: if the accuracy of AI outputs could be cryptographically verified before you even read them, would that change how you use AI, especially for decisions that really matter? And do you think enterprises will pay for decentralized verification, or build their own internal solutions?

Do share your thoughts in the comments. This is a conversation that really matters.