The first time I sat down with the architecture diagrams for @Mira - Trust Layer of AI, I had that quiet moment where the pieces don’t look extraordinary on their own, but the way they connect starts to reveal something deeper. On the surface it looks like another verification layer for AI claims. Underneath, it’s really a new way of turning uncertainty into structured work for a network.

At the center of it are verifier nodes. Think of them less like traditional validators and more like investigators. Their job isn’t just confirming whether a transaction happened, the way a typical blockchain node might. They’re checking claims. A model output, a dataset reference, a prediction, even a piece of generated content can arrive as a claim that needs verification.

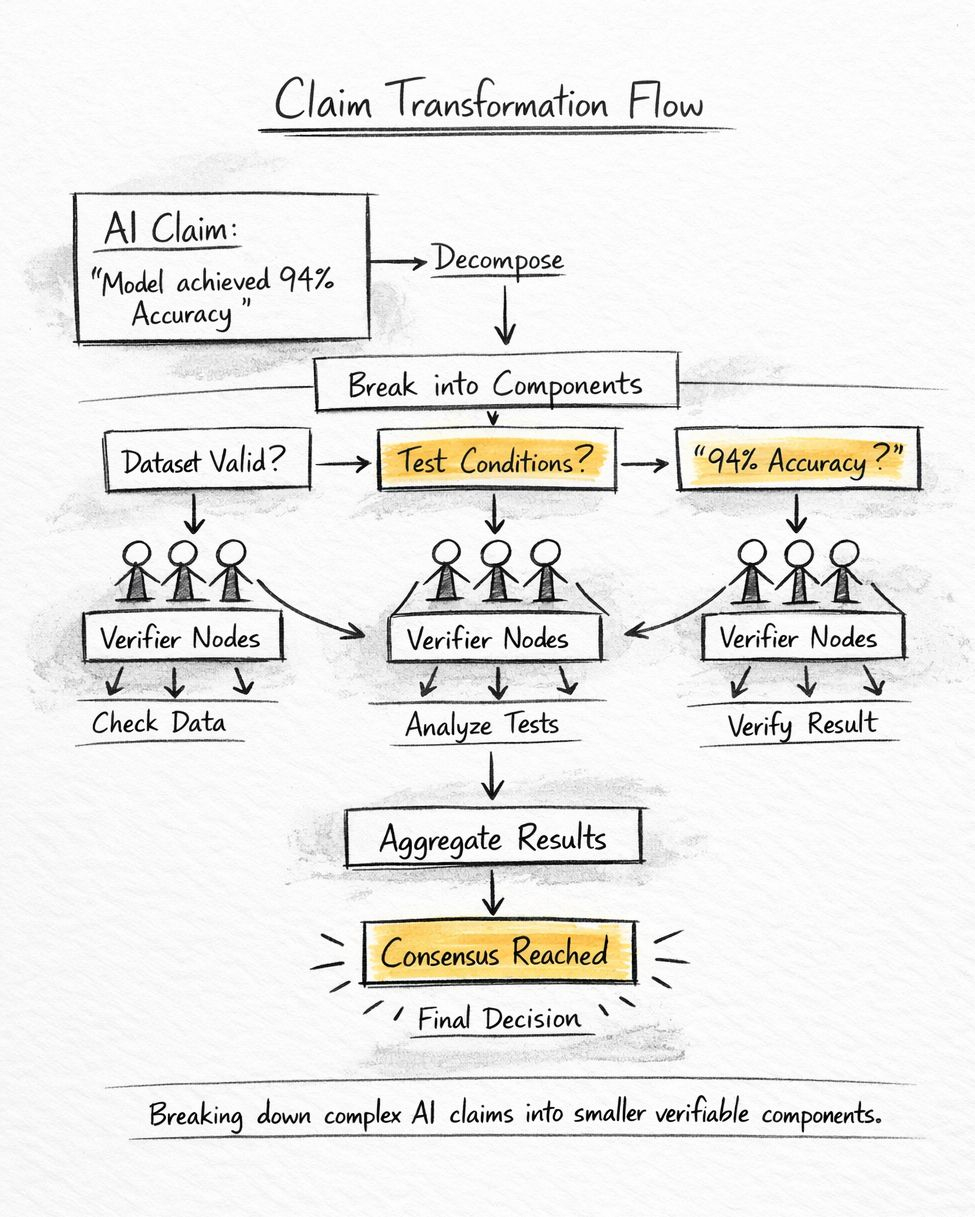

What struck me early is that Mira treats every claim as something that can be broken apart. The protocol calls this claim transformation. A complex statement gets decomposed into smaller testable components that different nodes can evaluate. If someone says a model achieved 94 percent accuracy on a benchmark, that statement quietly splits into multiple verifiable pieces. Was the dataset correct? Were the evaluation conditions consistent? Was the result reproducible?
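To make the idea concrete, here is a minimal sketch of what claim transformation could look like as a data structure. The class names, the `kind` field, and the hard-coded decomposition are my own assumptions for illustration; they are not taken from Mira's protocol.

```python
from dataclasses import dataclass, field


@dataclass
class SubClaim:
    question: str  # one independently checkable question
    kind: str      # hypothetical category a router could use to pick verifiers


@dataclass
class Claim:
    text: str
    sub_claims: list[SubClaim] = field(default_factory=list)


def decompose_benchmark_claim(text: str) -> Claim:
    """Split a benchmark-accuracy claim into the three checks named above.

    A real transformer would have to parse the statement; this sketch
    hard-codes the fragments purely for illustration.
    """
    return Claim(
        text=text,
        sub_claims=[
            SubClaim("Was the dataset correct?", kind="dataset"),
            SubClaim("Were the evaluation conditions consistent?", kind="evaluation"),
            SubClaim("Was the result reproducible?", kind="reproducibility"),
        ],
    )


claim = decompose_benchmark_claim("The model achieved 94 percent accuracy on a benchmark")
for sub in claim.sub_claims:
    print(f"[{sub.kind}] {sub.question}")
```

Each `SubClaim` can then be handed to a different verifier, which is the property the rest of the design depends on.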

That sounds simple. It isn’t.

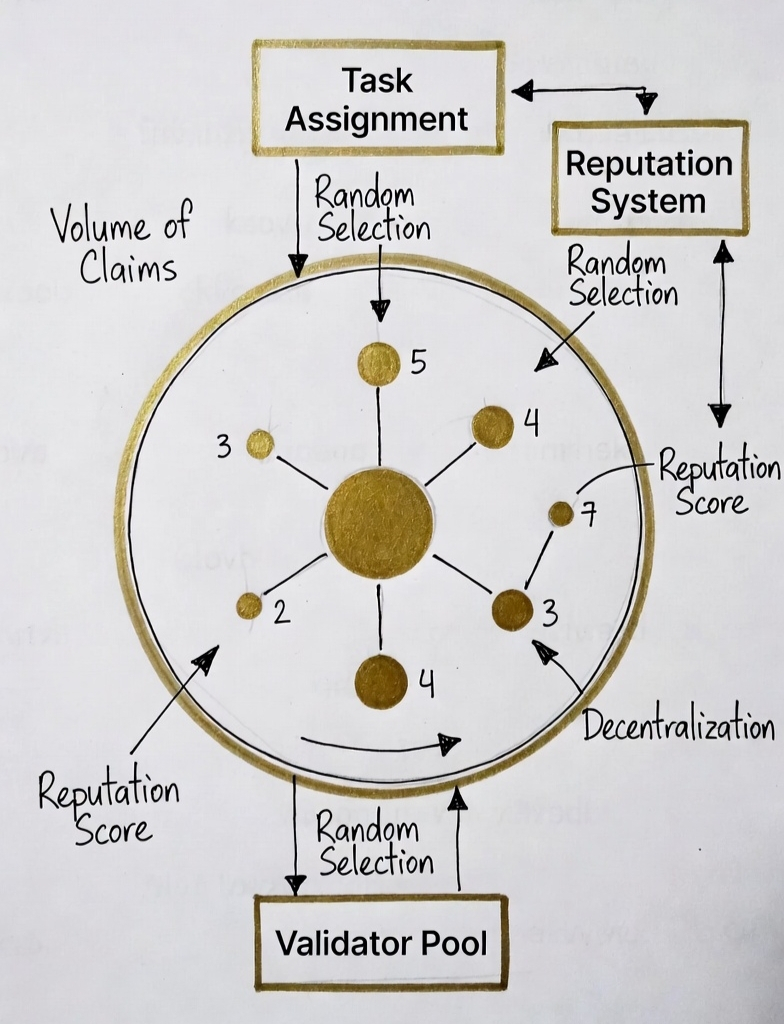

Because once claims become fragments, the system needs a structure to distribute that work without letting any single verifier dominate the outcome. That’s where the dynamic validator network comes in. Instead of a fixed validator set like many proof systems use, Mira rotates participants depending on the type of claim and their historical accuracy.

Numbers start to tell the story here. In early simulations described in the protocol research, claim decomposition often produces three to seven verification tasks per statement. That might not sound like much until you scale it. If a system processes 50,000 AI-related claims per day, which is realistic given how fast model outputs move through the ecosystem now, the network suddenly has to evaluate closer to 200,000 individual checks.
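The arithmetic is easy to check. The sketch below uses only the figures quoted above; the function name is mine. The 200,000 figure corresponds to an average of four tasks per claim, and the full three-to-seven range gives a wider band.

```python
CLAIMS_PER_DAY = 50_000          # daily claim volume quoted above
MIN_TASKS, MAX_TASKS = 3, 7      # verification tasks per claim, from the simulations


def daily_checks(claims_per_day: int, tasks_per_claim: int) -> int:
    """Total individual verification checks generated per day."""
    return claims_per_day * tasks_per_claim


low = daily_checks(CLAIMS_PER_DAY, MIN_TASKS)    # 150,000
high = daily_checks(CLAIMS_PER_DAY, MAX_TASKS)   # 350,000
typical = daily_checks(CLAIMS_PER_DAY, 4)        # 200,000

print(f"{low:,} to {high:,} checks per day ({typical:,} at four tasks per claim)")
```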

The dynamic validator pool exists to absorb that load while reducing coordinated manipulation. Validators earn reputation scores based on verification accuracy. Over time, the protocol weights participation based on those scores. If a node consistently produces verifications that align with consensus outcomes, its influence quietly increases. If it drifts, it fades out of selection probability.
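A rough sketch of how reputation-weighted selection might work, under my own assumptions: scores live between 0 and 1, they move by an exponential moving average toward 1 on agreement with consensus and toward 0 otherwise, and verifiers are drawn without replacement with probability proportional to score. The update rule, the `rate` parameter, and the node names are hypothetical, not Mira's published mechanism.

```python
import random


def update_reputation(score: float, agreed_with_consensus: bool, rate: float = 0.1) -> float:
    """Move the score a fraction `rate` toward 1.0 on agreement, toward 0.0 otherwise."""
    target = 1.0 if agreed_with_consensus else 0.0
    return (1 - rate) * score + rate * target


def select_verifiers(reputation: dict[str, float], k: int, rng: random.Random) -> list[str]:
    """Pick up to k distinct verifiers, each draw weighted by current reputation.

    Assumes at least one remaining score is positive at every draw.
    """
    pool = dict(reputation)
    chosen = []
    for _ in range(min(k, len(pool))):
        names = list(pool)
        weights = [pool[name] for name in names]
        pick = rng.choices(names, weights=weights, k=1)[0]
        chosen.append(pick)
        del pool[pick]  # no node is selected twice for the same claim
    return chosen


reputation = {"node-a": 0.5, "node-b": 0.5, "node-c": 0.5}
for _ in range(20):
    reputation["node-a"] = update_reputation(reputation["node-a"], True)   # always agrees
    reputation["node-c"] = update_reputation(reputation["node-c"], False)  # always drifts

print(reputation)
print(select_verifiers(reputation, k=2, rng=random.Random(7)))
```

After twenty rounds, `node-a` sits well above its starting score and `node-c` well below, so `node-c` is rarely drawn without ever being formally excluded.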

Underneath that reputation layer sits the economic engine. Each verification carries a cost and a reward. Early documentation points to verification bounties of small fractions of a token per claim, but at this volume those fractions add up. Even a 0.02 token reward multiplied across hundreds of thousands of checks creates a steady incentive flow through the system.
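As a quick back-of-the-envelope check, 0.02 tokens across 200,000 checks comes to 4,000 tokens a day. The sketch below runs the same multiplication for a few daily volumes; the volumes are illustrative assumptions, and only the 0.02 figure comes from the text above.

```python
from decimal import Decimal

REWARD_PER_CHECK = Decimal("0.02")  # example reward per check, from the text above


def daily_reward_flow(checks_per_day: int) -> Decimal:
    """Total tokens paid out per day at a flat reward per check."""
    return REWARD_PER_CHECK * checks_per_day


# Illustrative daily volumes (assumed, not measured).
for checks in (150_000, 200_000, 350_000):
    print(f"{checks:,} checks/day -> {daily_reward_flow(checks):,} tokens/day")
```

`Decimal` is used so that the small per-check amounts multiply exactly instead of picking up floating-point noise.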

Understanding that helps explain why claim transformation matters so much. By breaking claims apart, Mira increases the surface area for verification work. More nodes can participate. That spreads trust while also spreading incentives.

Of course, the design raises obvious questions. Fragmentation helps decentralize verification, but it also increases complexity. Every additional verification step introduces latency and coordination overhead. If claims require five checks instead of one, the network needs roughly five times the verification capacity to maintain the same speed.

Meanwhile, dynamic validator selection has its own tension. Reputation systems tend to concentrate influence over time. The nodes that perform well early accumulate higher weighting, which can slowly create a quiet center of gravity in the network. The protocol tries to counter this with randomization and periodic score decay, but whether that balance holds in a live environment remains to be seen.
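Here is one way those two countermeasures could be expressed, as a sketch under my own assumptions: decay pulls every score a small step back toward a neutral baseline each period, and randomization reserves a uniform share `epsilon` of the selection probability for every node regardless of score. The parameter values and node names are hypothetical.

```python
def decay_scores(reputation: dict[str, float], baseline: float = 0.5, decay: float = 0.05) -> dict[str, float]:
    """Pull every score a fraction `decay` of the way back toward `baseline`."""
    return {name: score + decay * (baseline - score) for name, score in reputation.items()}


def selection_probabilities(reputation: dict[str, float], epsilon: float = 0.2) -> dict[str, float]:
    """Blend reputation-proportional odds with a uniform share `epsilon`."""
    total = sum(reputation.values())
    n = len(reputation)
    return {
        name: (1 - epsilon) * (score / total) + epsilon / n
        for name, score in reputation.items()
    }


reputation = {"early-leader": 0.95, "newcomer": 0.30}
before = selection_probabilities(reputation)

# Ten idle periods with no new verifications: only decay acts on the scores.
for _ in range(10):
    reputation = decay_scores(reputation)
after = selection_probabilities(reputation)

print("before decay:", before)
print("after decay: ", after)
```

In this toy run the early leader still gets picked more often, but its share shrinks as scores decay, and the uniform floor keeps the newcomer from being starved of work. Whether parameters like these hold up against real strategic behavior is exactly the open question.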

Still, the broader pattern here feels important. Right now the crypto market is full of infrastructure focused on moving value faster or cheaper. Mira sits in a different category. It treats verification itself as a distributed resource.

And that reflects something bigger happening across AI and blockchain. We’re moving from systems that store information to systems that constantly question it.

If this architecture holds under real network pressure, the real insight might be simple. In the next phase of decentralized systems, truth itself becomes the workload.