Most conversations about artificial intelligence revolve around one simple goal: making models smarter.

The industry measures progress through larger datasets, bigger models, and faster inference speeds. Each new generation of AI promises higher accuracy and more capability.

And in many ways, that progress is real.

But a different problem appears the moment AI begins interacting with financial systems, governance structures, and autonomous agents operating on-chain.

At that point, intelligence alone is no longer the most important property.

Reliability matters more.

Because when AI outputs are used to trigger trades, manage liquidity, interpret DAO proposals, or guide automated systems that move capital, errors stop being harmless mistakes.

They become economic events.

This is where the core idea behind Mira Network begins to matter.

Most AI systems today operate under a very simple trust model. A user asks a question, a model generates an answer, and the user decides whether to believe it.

This structure works reasonably well when AI is used for research, brainstorming, or general assistance. If the answer is slightly wrong, the consequences are limited.

But once AI is connected to systems that manage real value, the same trust model becomes fragile.

A misinterpreted governance proposal could influence voting outcomes.

A flawed market analysis could trigger an incorrect trade.

A hallucinated data point could guide a liquidity allocation strategy.

The risk grows because the outputs are no longer informational.

They are operational.

AI systems are slowly moving from advisory tools to autonomous actors within digital economies.

And autonomy introduces a new requirement: verification.

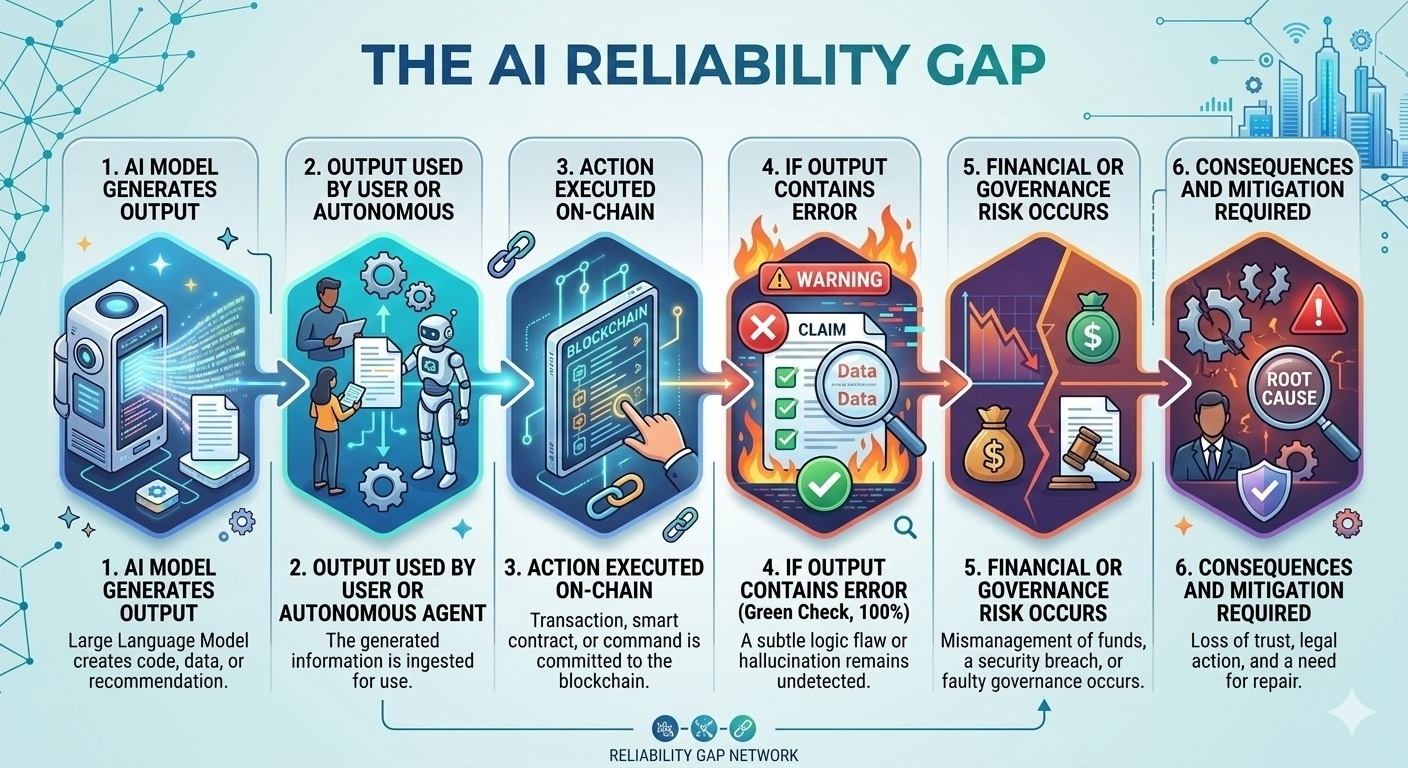

The Reliability Gap in AI Systems

Even the most advanced models remain probabilistic systems. They generate outputs based on patterns learned from training data, not on guaranteed logical certainty.

That means hallucinations, bias, and subtle reasoning errors can still appear.

Larger models tend to reduce the frequency of those problems, but they do not eliminate them. The underlying architecture still produces answers based on probability rather than proof.

When humans review those answers, mistakes can be caught.

But autonomous systems do not always have that safety layer. As AI agents become more capable, they increasingly operate without direct human oversight.

That creates what can be described as a reliability gap.

AI can generate information extremely quickly, but the ecosystem lacks an equally strong mechanism for verifying whether those outputs are correct before they are used.

Closing this reliability gap is becoming one of the most important infrastructure problems in the AI ecosystem.

Because if AI is going to manage capital, coordinate systems, and guide decision-making processes, its outputs cannot simply be trusted by default.

They must be validated.

Separating Creation from Verification

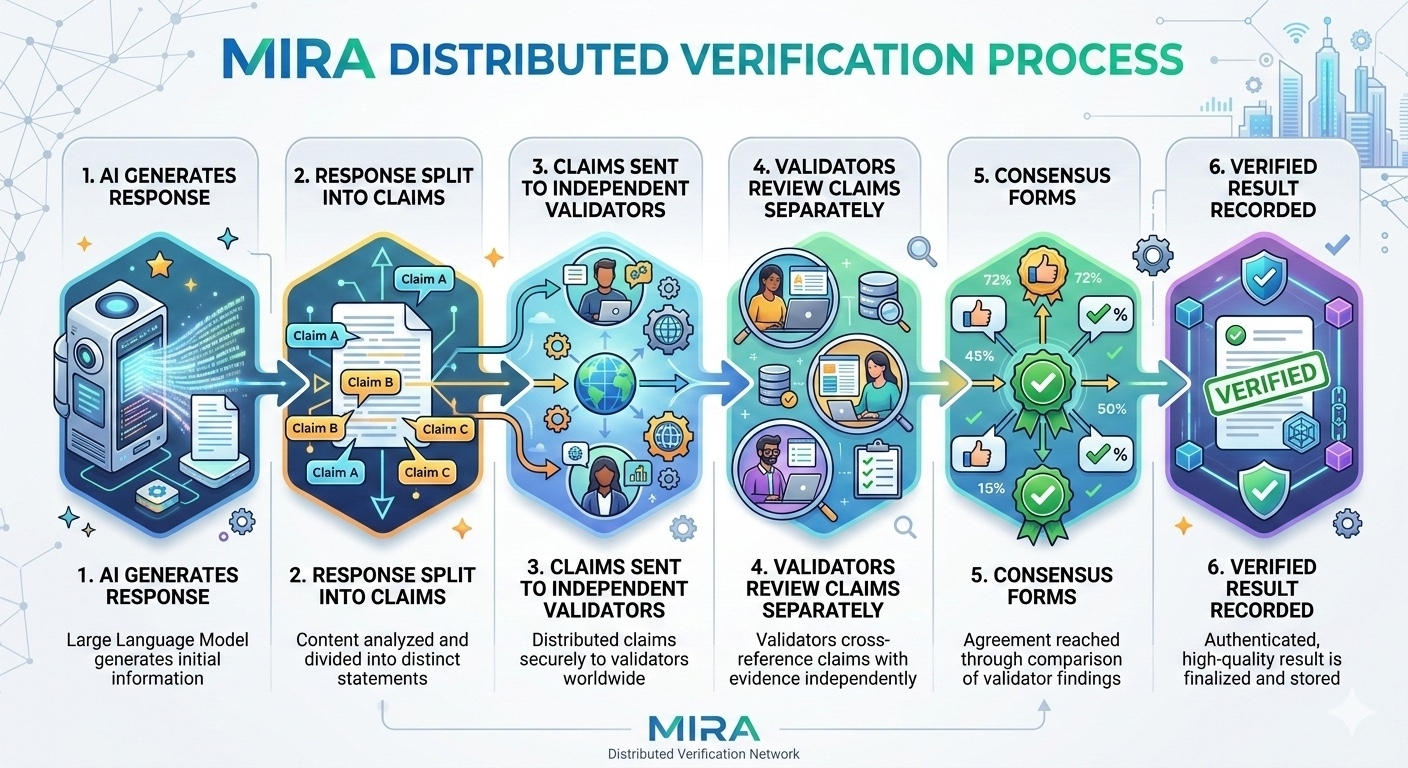

The approach taken by Mira begins with a simple structural change.

Instead of treating an AI output as a single block of information, the system breaks the output into smaller, testable claims.

A model generates a response.

That response is decomposed into individual statements that can be independently evaluated. Each of those claims is then distributed to a network of validators responsible for checking its accuracy.

These validators may include other AI models, hybrid AI-human systems, or specialized verification participants.

The key feature is independence.

Validators examine claims without knowing how other validators are responding. This separation makes coordination harder and reduces the influence of shared bias.

Each participant evaluates the claim using its own reasoning or model.

When enough validators have completed their assessments, consensus begins to emerge around which claims are correct and which should be rejected.

The validated results are then assembled back into a verified output.

This structure introduces something most AI systems currently lack: distributed verification.

Instead of relying on a single chain of reasoning produced by one model, the system distributes the responsibility of validation across multiple independent evaluators.

The result is not simply an answer.

It is an answer that has been examined and confirmed through a structured validation process.
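
The flow described above (decompose, evaluate independently, reach consensus, reassemble) can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not Mira's actual implementation: the sentence-based splitting, the `Validator` type, the function names, and the two-thirds `threshold` are all hypothetical choices made for the example.

```python
from typing import Callable, Dict, List

# Assumption: a validator is any function that maps a claim to True (accept)
# or False (reject). Real validators would be models or hybrid systems.
Validator = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive decomposition: treat each sentence as one claim (an assumption)."""
    return [part.strip() for part in response.split(".") if part.strip()]


def verify_response(
    response: str,
    validators: List[Validator],
    threshold: float = 2 / 3,  # hypothetical supermajority threshold
) -> Dict[str, bool]:
    """Return a mapping of claim -> whether it reached consensus."""
    if not validators:
        raise ValueError("at least one validator is required")
    results: Dict[str, bool] = {}
    for claim in split_into_claims(response):
        # Each validator is called separately and never sees the other votes.
        votes = [validator(claim) for validator in validators]
        results[claim] = sum(votes) / len(votes) >= threshold
    return results


def assemble_verified_output(results: Dict[str, bool]) -> str:
    """Keep only the claims that reached consensus."""
    accepted = [claim for claim, ok in results.items() if ok]
    return ". ".join(accepted) + "." if accepted else ""


if __name__ == "__main__":
    known_facts = {"ETH is the native asset of Ethereum"}
    validators = [
        lambda claim: claim in known_facts,  # toy fact-checking validator
        lambda claim: claim in known_facts,  # a second, identical toy validator
        lambda claim: True,                  # a careless validator that accepts everything
    ]
    results = verify_response(
        "ETH is the native asset of Ethereum. This pool is risk free", validators
    )
    print(assemble_verified_output(results))
```

In this toy run the careless validator is outvoted, so the unsupported second claim is dropped from the assembled output. A real system would need a far more careful decomposition step than splitting on periods.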

Economic Incentives and Accountability

Verification systems also require incentives to function reliably.

Without incentives, validators may have little reason to perform careful analysis. Worse, malicious actors could attempt to manipulate verification outcomes.

To address this, Mira introduces an economic layer through the $MIRA token.

Validators must stake tokens to participate in the verification process. Their stake represents a commitment to honest evaluation.

If a validator consistently provides accurate assessments, they earn rewards for their contributions. If they repeatedly validate incorrect claims or behave dishonestly, their stake can be penalized.

This structure transforms verification into an economically reinforced activity.

Participants are not simply asked to verify claims; they are financially motivated to do so accurately.
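
A rough sketch of how such stake-based settlement might work is shown below. It is illustrative only: the `ValidatorAccount` class, the flat `reward`, the `slash_rate`, and the rule that the majority verdict counts as "correct" are all assumptions, not documented $MIRA parameters. It also penalizes every dissenting vote, whereas the description above suggests penalties apply to repeated inaccuracy.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class ValidatorAccount:
    name: str
    stake: float  # tokens locked in order to participate


def settle_round(
    accounts: Dict[str, ValidatorAccount],
    votes: Dict[str, bool],
    reward: float = 1.0,       # hypothetical flat reward per matching vote
    slash_rate: float = 0.10,  # hypothetical fraction of stake lost per miss
) -> bool:
    """Settle one claim: reward votes that match the majority, slash the rest.

    Returns the majority verdict. Ties resolve to rejection (an assumption).
    """
    approvals = sum(votes.values())
    verdict = approvals * 2 > len(votes)
    for name, vote in votes.items():
        account = accounts[name]
        if vote == verdict:
            account.stake += reward
        else:
            account.stake -= account.stake * slash_rate
    return verdict


if __name__ == "__main__":
    accounts = {n: ValidatorAccount(n, 100.0) for n in ("alice", "bob", "carol")}
    verdict = settle_round(accounts, {"alice": True, "bob": True, "carol": False})
    print(verdict, {n: a.stake for n, a in accounts.items()})
```

Treating the majority as ground truth is the simplest possible rule; it shows why honest participation is the profitable strategy only as long as most of the stake is held by honest validators.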

The mechanism resembles systems already familiar within blockchain networks.

Validators in proof-of-stake systems secure blockchains by staking capital. Their financial exposure discourages malicious behavior and encourages reliable participation.

Mira applies a similar logic to AI verification.

Instead of securing transaction ordering, the system secures information accuracy.

Why Verification Matters for Autonomous Systems

The importance of verification becomes clearer when examining how AI is beginning to operate within Web3 environments.

Autonomous agents are gradually emerging across multiple areas of the ecosystem.

Some agents monitor markets and execute arbitrage strategies across exchanges.

Others manage liquidity pools or rebalance portfolios in decentralized finance protocols.

Some interpret governance proposals and help participants understand complex technical changes.

As these agents become more capable, their role will likely expand.

Future AI systems may monitor protocol health, allocate treasury funds, or coordinate interactions between decentralized services.

Each of these activities involves decision-making.

And decision-making requires reliable information.

Without verification mechanisms, errors made by autonomous systems could propagate quickly across interconnected protocols.

One incorrect output could trigger a chain of actions affecting multiple financial systems.

Verification reduces this risk by introducing checkpoints before outputs are used operationally.

Instead of blindly trusting an AI-generated answer, systems can require validation before allowing that information to influence financial decisions.
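
One way to picture such a checkpoint is a guard that refuses to run an action unless every claim behind it has passed verification. The sketch below is a hypothetical pattern, not a Mira API: `execute_if_verified`, `UnverifiedOutputError`, and the claim-to-verdict dictionary are names invented for illustration.

```python
from typing import Callable, Dict


class UnverifiedOutputError(Exception):
    """Raised when an action is attempted on output that failed verification."""


def execute_if_verified(
    claim_results: Dict[str, bool],
    action: Callable[[], str],
) -> str:
    """Run `action` only if every claim behind it passed verification."""
    # Fail closed: no verification results means nothing was verified.
    if not claim_results:
        raise UnverifiedOutputError("blocked: no verification results supplied")
    rejected = [claim for claim, ok in claim_results.items() if not ok]
    if rejected:
        raise UnverifiedOutputError(
            f"blocked: {len(rejected)} claim(s) failed verification"
        )
    return action()


if __name__ == "__main__":
    verified = {"Pool A utilization exceeds the target": True}
    print(execute_if_verified(verified, lambda: "rebalanced"))
```

The guard fails closed: missing or rejected verification results block the action rather than letting it through by default.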

Infrastructure for the AI Economy

Verification infrastructure often operates quietly in the background.

End users rarely think about how information is validated before they rely on it. Yet verification systems are essential for maintaining trust in complex networks.

Financial auditing is an example.

Banks and corporations operate under strict auditing requirements not because auditing is exciting, but because it ensures accountability within financial systems.

Similarly, as AI becomes more deeply integrated into digital economies, verification mechanisms may become a fundamental layer of infrastructure.

AI generation and AI verification could evolve into two distinct components of the ecosystem.

Generation focuses on creating intelligent outputs.

Verification focuses on ensuring those outputs are reliable enough to act on.

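This separation mirrors other areas of technological development. In software, for example, code is written in one place and checked by separate testing and review processes. In many systems, creation and validation eventually become specialized roles handled by different layers of infrastructure.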

Mira’s approach suggests a future where AI outputs are not accepted automatically.

Instead, they pass through a distributed verification process that establishes trust before action occurs.

The Long-Term Implication

If AI continues to move toward autonomous operation within financial systems, the need for verification will only increase.

Smarter models will certainly continue to emerge. Improvements in architecture, training techniques, and hardware will push AI capabilities forward.

But intelligence alone does not guarantee reliability.

A highly intelligent system can still produce incorrect conclusions.

Verification makes it more likely that mistakes are caught before they create systemic consequences.

In that sense, the most valuable infrastructure in the AI ecosystem may not be the models themselves.

It may be the mechanisms that ensure those models can be trusted.

The future of AI in Web3 may depend not only on how intelligent the systems become, but on how effectively their outputs can be verified.

If autonomous agents are going to operate inside decentralized financial systems, trust cannot rely on assumptions.

It will need to be enforced through structure.

And verification protocols may become the layer that makes that possible.