A few months ago I was watching someone test an AI tool that could summarize research papers. It was impressive at first. The model produced a neat explanation in seconds: clear language, confident tone, everything you’d expect from a system trained on oceans of data.

Then we opened the original paper.

Two numbers were wrong. One citation didn’t exist. And one conclusion had quietly drifted away from what the author actually wrote.

Nothing dramatic. Just small mistakes. The kind that slip past you if you’re not paying attention.

That moment stayed with me because it captures something odd about the current wave of artificial intelligence. These systems feel incredibly capable, yet they still have this habit of sounding certain even when they’re not.

For entertainment, it hardly matters. For systems that move money, write contracts, or guide decisions, it starts to matter quite a lot.

And that’s where a project like Mira Network enters the conversation. Not as another AI model trying to be smarter, but as something more mundane and maybe more necessary: a way to check whether AI is telling the truth.

The Strange Weakness in Modern AI

Most people imagine AI as a giant database that looks things up and delivers answers. The reality is less tidy.

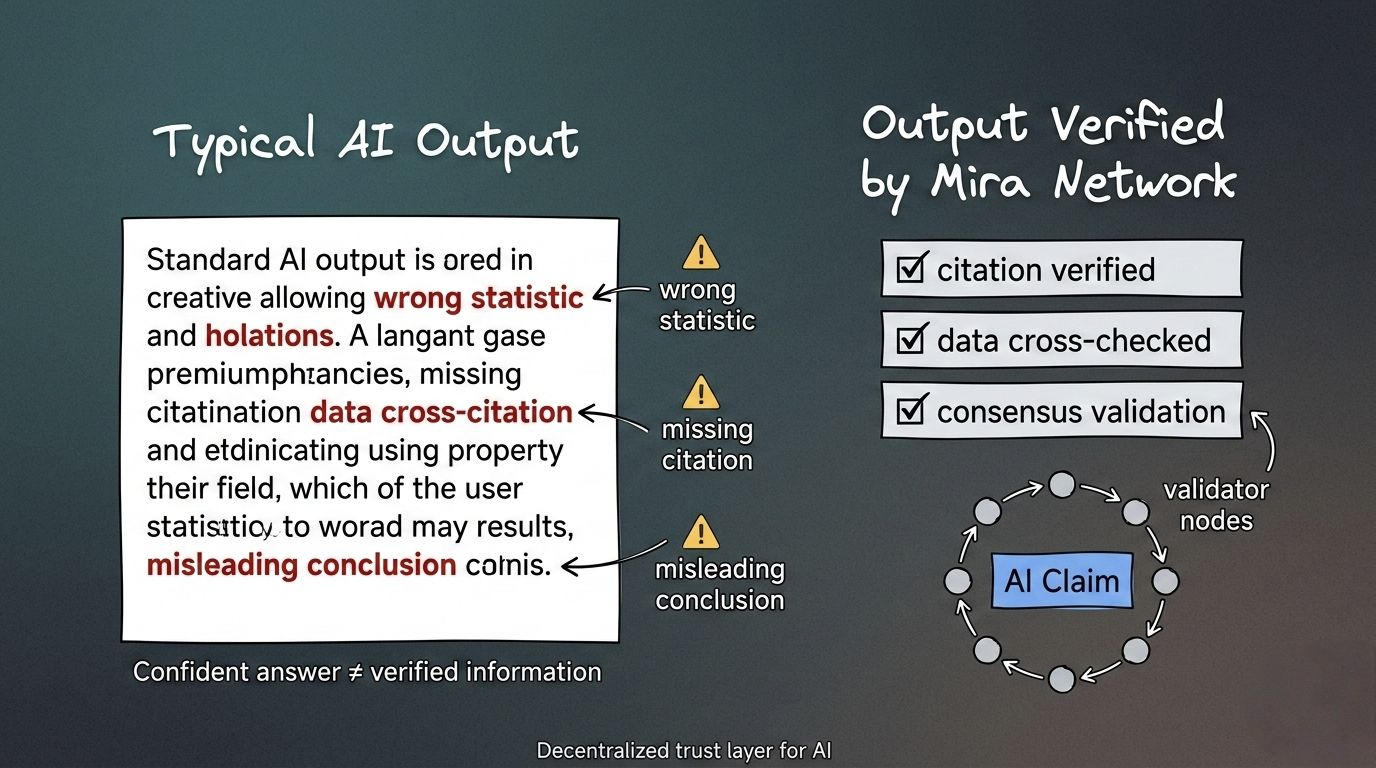

Large language models generate responses by predicting patterns in text. They don’t “know” facts in the way humans do. They estimate what the next word should be based on probability.

That approach works remarkably well most of the time. But it also means the system can occasionally wander off course without realizing it.

You ask for a statistic. It produces one that sounds right.

You ask for a research citation. It generates something formatted like a citation.

Sometimes those things are correct. Sometimes they’re not.

Researchers have been measuring this for a while now. Even the most advanced models still produce hallucinations. Not constantly, but often enough that anyone building serious applications has to think about verification.

It’s a bit like hiring an incredibly fast intern who can draft a full report in five minutes but occasionally invents a source without noticing.

You would still use the intern. You’d just check the work before publishing it.

The difficulty appears when AI systems start operating faster than humans can realistically verify.

A Different Kind of Infrastructure

Mira Network is built around a fairly simple observation.

If one AI system might be wrong, perhaps several systems checking the same claim could do better.

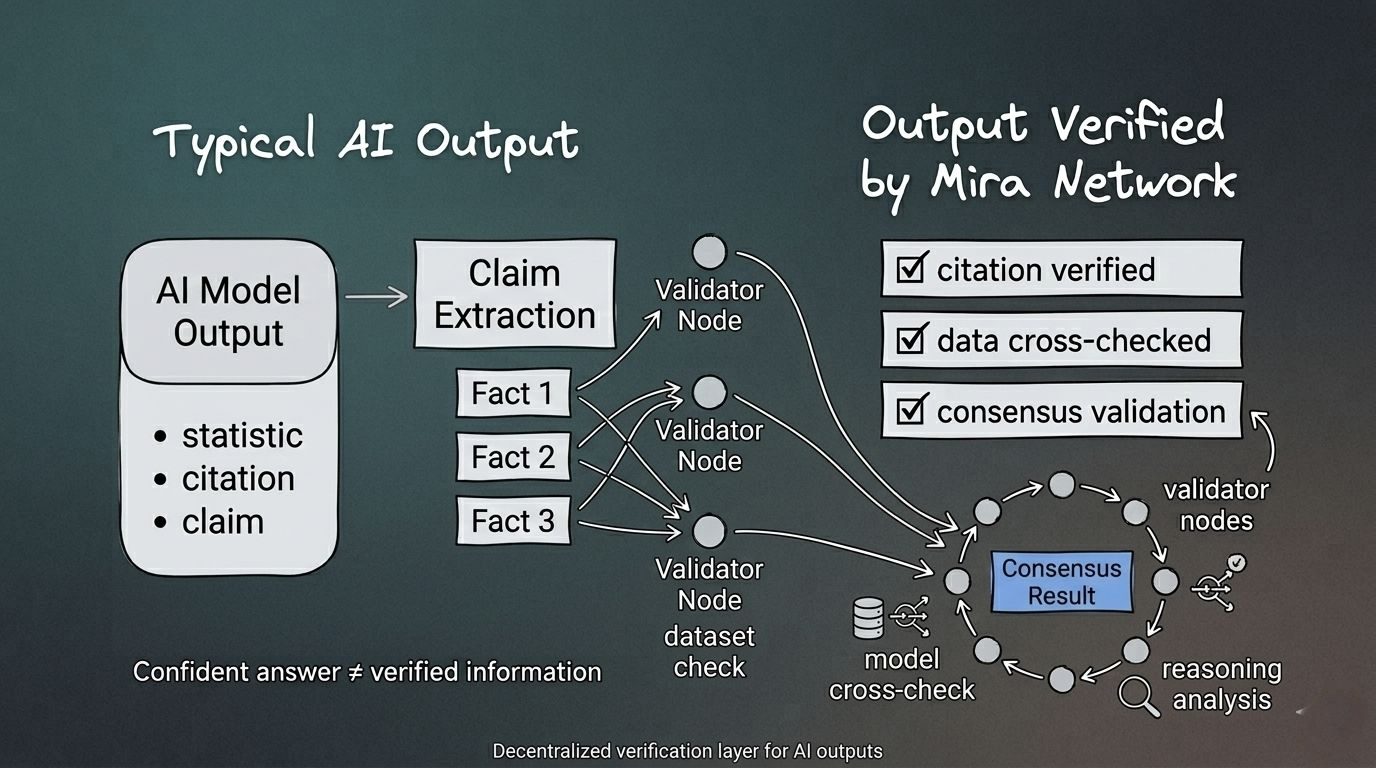

Instead of trusting a single model’s answer, Mira breaks AI outputs into smaller statements and sends them through a distributed verification process. Different validator nodes analyze those claims and reach consensus about whether they appear accurate.

It’s not unlike peer review, though the comparison isn’t perfect.

Picture an AI assistant generating a paragraph about financial markets. The system might include statements about historical events, price data, or regulatory decisions. Mira extracts those pieces and asks multiple validators to examine them.

If enough of them agree that the claim holds up, it passes through as verified.

If not, the output gets flagged.
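To make the idea concrete, here is a minimal sketch of claim-level consensus. It is not Mira's actual protocol: the sentence-based claim splitter, the `verify_output` function, the two toy validators, and the two-thirds threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical type: a validator is any function that takes a claim
# and returns True if the claim looks accurate to it.
Validator = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    approvals: int
    total: int
    verified: bool


def split_into_claims(output: str) -> List[str]:
    """Naive claim extraction (an assumption): one claim per sentence."""
    return [s.strip() for s in output.split(".") if s.strip()]


def verify_output(output: str, validators: List[Validator],
                  threshold: float = 2 / 3) -> List[ClaimResult]:
    """Ask every validator about every claim; verify a claim when the
    share of approvals reaches the (assumed) threshold."""
    if not validators:
        raise ValueError("at least one validator is required")
    results = []
    for claim in split_into_claims(output):
        approvals = sum(1 for validate in validators if validate(claim))
        verified = approvals / len(validators) >= threshold
        results.append(ClaimResult(claim, approvals, len(validators), verified))
    return results


# Toy validators: two check against a small reference set, one approves anything.
REFERENCE_FACTS = {"Water boils at 100 C at sea level"}


def careful(claim: str) -> bool:
    return claim in REFERENCE_FACTS


def sloppy(claim: str) -> bool:
    return True


if __name__ == "__main__":
    text = "Water boils at 100 C at sea level. The moon is made of cheese."
    for result in verify_output(text, [careful, careful, sloppy]):
        status = "verified" if result.verified else "flagged"
        print(f"{status}: {result.claim} ({result.approvals}/{result.total})")
```

The sloppy validator approves everything, but it cannot push the false claim through on its own. That is the whole point of asking more than one checker.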

In a world where AI responses increasingly drive automated systems, that extra step might matter more than it seems.

Under the Hood, It’s Still a Network

Of course, none of this happens magically.

Participants who help verify claims run validator nodes and stake the network’s native token, MIRA. The stake acts as collateral. If validators consistently provide inaccurate assessments, they risk losing part of that stake.

It’s a familiar design in decentralized systems. Economic incentives keep participants honest, or at least encourage them to try.
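A rough sketch of how stake-as-collateral could work is below. The numbers are invented: the error-rate tolerance, the minimum vote count, and the slash size (in basis points) are hypothetical parameters, not values published by the network. "Inaccurate" is approximated here as "disagreed with the final consensus".

```python
from dataclasses import dataclass


@dataclass
class ValidatorAccount:
    name: str
    stake: int            # collateral, in whole token units
    total_votes: int = 0
    wrong_votes: int = 0  # votes that disagreed with the final consensus


def record_vote(account: ValidatorAccount, agreed_with_consensus: bool) -> None:
    """Track one assessment and whether it matched the network's outcome."""
    account.total_votes += 1
    if not agreed_with_consensus:
        account.wrong_votes += 1


def maybe_slash(account: ValidatorAccount,
                max_error_pct: int = 30,   # hypothetical tolerance
                slash_bps: int = 1000,     # hypothetical: 10% of stake
                min_votes: int = 10) -> int:
    """Slash part of the stake only when errors are consistent, meaning
    enough votes were cast and the error rate exceeds the tolerance.
    Returns the amount slashed."""
    if account.total_votes < min_votes:
        return 0
    if account.wrong_votes * 100 <= max_error_pct * account.total_votes:
        return 0
    penalty = account.stake * slash_bps // 10_000
    account.stake -= penalty
    # Start a fresh observation window after a penalty.
    account.total_votes = 0
    account.wrong_votes = 0
    return penalty
```

Integer arithmetic is used throughout so balances never pick up floating-point rounding errors, which is a common habit in token accounting.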

At the same time, the verification itself relies on computational tools. Validators might run different AI models, datasets, or reasoning engines to evaluate each claim.

The end result is a sort of layered process where computation produces analysis and the network aggregates the results.

Not perfect, but perhaps stronger than trusting a single model.

A Small Thought Experiment

Imagine an automated trading system that reads market analysis produced by AI. Without verification, that analysis flows directly into the algorithm’s decision making.

Now imagine the same system with a verification layer.

Before the analysis reaches the trading engine, the key claims get checked by independent validators. Numbers, references, historical comparisons. Small pieces, but important ones.

The trading system still moves quickly. It just does so with slightly more confidence in the information feeding it.
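In code, that verification layer is little more than a gate in front of the trading engine. The sketch below is hypothetical: `gate_analysis` and the `is_verified` callback stand in for whatever a real integration would expose, and "block on any flagged claim" is just one possible policy.

```python
from typing import Callable, List, Tuple


def gate_analysis(key_claims: List[str],
                  is_verified: Callable[[str], bool]) -> Tuple[bool, List[str]]:
    """Return (forward, flagged): forward is True only when every key
    claim passed verification; flagged lists the claims that did not."""
    flagged = [claim for claim in key_claims if not is_verified(claim)]
    return (len(flagged) == 0, flagged)


if __name__ == "__main__":
    # Toy stand-in for the network's answer on each claim.
    verified_claims = {"claim A", "claim B"}
    forward, flagged = gate_analysis(
        ["claim A", "claim B", "claim C"],
        lambda claim: claim in verified_claims,
    )
    if forward:
        print("analysis forwarded to trading engine")
    else:
        print("analysis held back, flagged claims:", flagged)
```

A stricter or looser policy (for example, forwarding with a warning) would change only the last few lines.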

That’s the basic philosophy behind Mira.

Not replacing AI. Just making sure it’s behaving itself.

Where the Token Fits In

The token side of the system is straightforward in concept.

Developers who want their AI outputs verified submit requests to the network. Validators process those requests and earn rewards for the work. Staking helps maintain honest participation, and token holders can delegate to validators if they prefer not to run nodes themselves.
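The article does not say how rewards flow back to delegators, so the following is purely an assumed model: the validator keeps a commission, and the remainder is shared in proportion to stake. The `split_reward` function and the 10% commission are hypothetical.

```python
from typing import Dict, Tuple


def split_reward(reward: int, self_stake: int, delegations: Dict[str, int],
                 commission_bps: int = 1000) -> Tuple[int, Dict[str, int]]:
    """Split one verification reward between a validator and its delegators.

    Assumed model: the validator takes a commission (in basis points),
    and the rest is shared pro rata by stake. Returns
    (validator_payout, delegator_payouts)."""
    total_stake = self_stake + sum(delegations.values())
    if total_stake <= 0:
        raise ValueError("total stake must be positive")
    commission = reward * commission_bps // 10_000
    distributable = reward - commission
    delegator_payouts = {
        name: distributable * amount // total_stake
        for name, amount in delegations.items()
    }
    # The validator receives the commission, its own pro rata share,
    # and any rounding dust left over from integer division.
    validator_payout = reward - sum(delegator_payouts.values())
    return validator_payout, delegator_payouts
```

For example, `split_reward(1000, 500, {"alice": 300, "bob": 200})` pays 270 to alice, 180 to bob, and 550 to the validator under these assumptions.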

The total supply is capped at one billion tokens. Over time the value of the network depends largely on whether verification becomes something AI applications genuinely need.

And that’s the interesting part.

If AI keeps expanding into high-stakes environments such as finance, research, and governance, verification could quietly become essential infrastructure.

If not, it remains a niche service.

The market will decide which path wins.

Not Everything Is Easy to Verify

One thing worth mentioning is that not all AI outputs are equally suited for verification.

Facts are relatively straightforward. Historical dates, numerical data, widely documented events.

But plenty of AI responses live in fuzzier territory. Opinions, creative writing, strategic reasoning. In those cases consensus becomes more subjective.

Another challenge is speed. Verification introduces an extra step, and every extra step adds latency. For applications where milliseconds matter, that trade-off will require careful design.
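One way to think about that trade-off is a latency budget: query validators in parallel, wait only as long as the application can afford, and decide with whatever answers arrived. The sketch below assumes this design; `verify_within_budget`, the quorum rule, and the simulated delays are illustrative, not part of any documented Mira API.

```python
import asyncio
from typing import Awaitable, Callable, List, Optional

# Hypothetical type: an async validator returns True if the claim looks accurate.
AsyncValidator = Callable[[str], Awaitable[bool]]


async def verify_within_budget(claim: str, validators: List[AsyncValidator],
                               budget_s: float, quorum: int) -> Optional[bool]:
    """Query all validators concurrently and wait at most budget_s seconds.

    Returns the majority verdict of the validators that answered in time,
    or None when fewer than `quorum` answered (the caller decides what
    to do with an unverified claim)."""
    tasks = [asyncio.ensure_future(validate(claim)) for validate in validators]
    done, pending = await asyncio.wait(tasks, timeout=budget_s)
    for task in pending:
        task.cancel()  # stop waiting on validators that missed the budget
    votes = [task.result() for task in done if task.exception() is None]
    if len(votes) < quorum:
        return None
    return sum(votes) * 2 > len(votes)


def make_validator(delay_s: float, answer: bool) -> AsyncValidator:
    """Build a toy validator that answers after a simulated delay."""
    async def validator(claim: str) -> bool:
        await asyncio.sleep(delay_s)
        return answer
    return validator


if __name__ == "__main__":
    validators = [make_validator(0.0, True), make_validator(0.0, True),
                  make_validator(1.0, False)]
    verdict = asyncio.run(
        verify_within_budget("some claim", validators, budget_s=0.2, quorum=2))
    print("verdict:", verdict)
```

Because the checks run concurrently, the added latency is bounded by the budget rather than by the sum of all validator response times. The cost is that slow validators simply do not get a vote.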

And like many early networks, decentralization grows gradually. Validator participation and governance structures take time to mature.

None of these issues are fatal, but they’re real.

A Subtle Shift in the AI Conversation

For the past few years most headlines about artificial intelligence have focused on bigger models and faster hardware. The race was about capability.

Now something else is entering the conversation. Reliability.

Companies deploying AI systems are starting to realize that producing impressive output is only half the problem. The other half is knowing when the output can be trusted.

Verification layers like Mira reflect that shift.

They don’t feel revolutionary in the dramatic sense. No spectacular demos, no viral screenshots. Just infrastructure quietly trying to make AI a little more dependable.

Sometimes the most important systems are the ones that operate behind the scenes, checking details that everyone else is too busy to notice.
