When people talk about trust in robotics, the conversation usually jumps to safety protocols or advanced hardware. My first reaction is different. Trust in autonomous machines rarely fails because the robots are incapable. It fails because the systems around them (data flows, governance, and accountability) are unclear. Without a structure that defines who controls decisions and how those decisions are verified, even the most advanced robots remain difficult to rely on.

For most robotics ecosystems today, governance is an afterthought. Machines collect data, run models, and perform actions, but the frameworks that coordinate ownership and responsibility are often fragmented. Companies control the hardware, developers control the software, and users are left trusting systems they cannot inspect. The result is a gap between what robots can technically do and what people are willing to let them do.

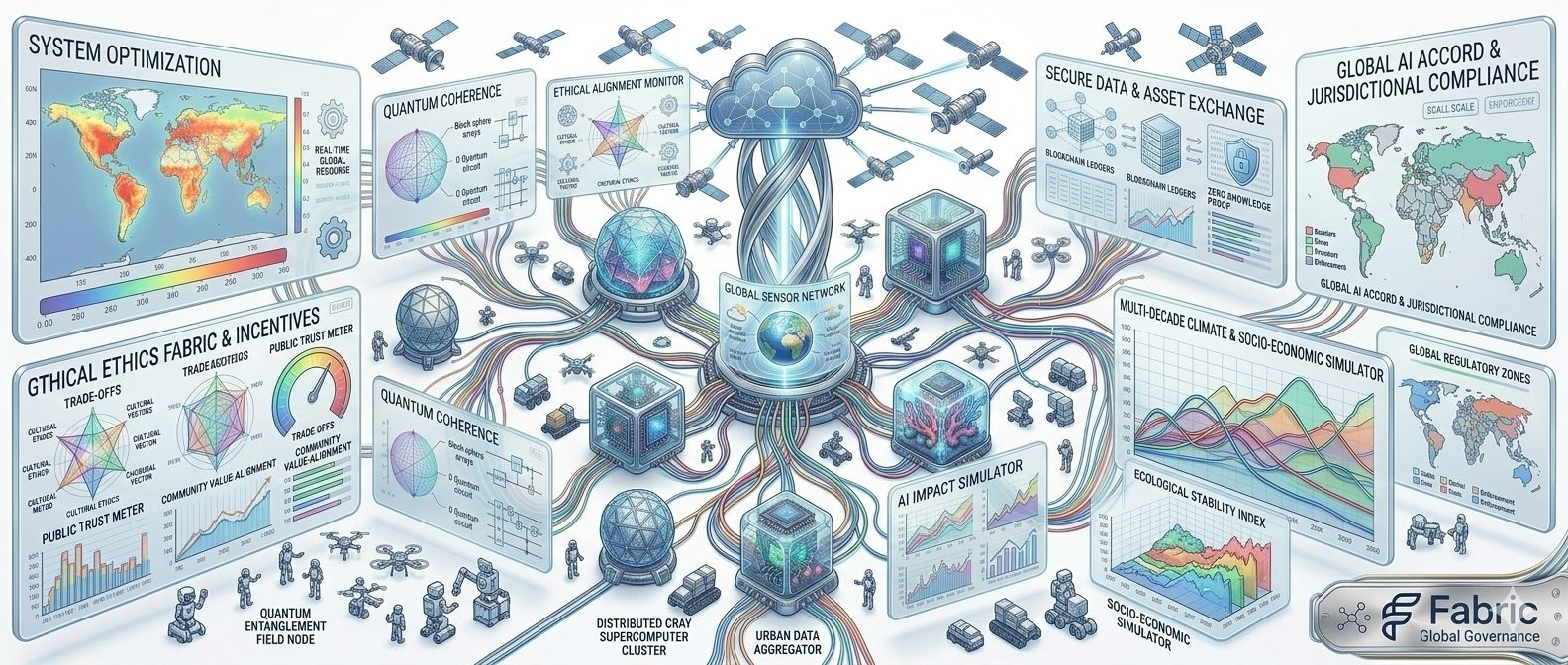

This is where the model behind Fabric Foundation starts to look different. Instead of treating robots as isolated devices, the Fabric approach treats them as participants in a coordinated network. Data, computation, and governance are organized through verifiable infrastructure rather than closed systems. The robot isn’t just executing commands; it’s operating within a framework where actions, decisions, and results can be validated.

That shift changes how trust is produced. In traditional robotics deployments, verification usually happens internally. Logs exist, but they are controlled by the same entity running the machines. If something goes wrong, proving what happened often relies on centralized records. Fabric’s model introduces a different layer: one where robotic activity can be coordinated and verified through shared infrastructure rather than private oversight.
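
To make the contrast between private logs and shared verification concrete, here is a minimal Python sketch of a hash-chained activity log. It is a hypothetical illustration of the general idea, not Fabric’s actual mechanism: each record commits to the hash of the previous record, so anyone holding a copy of the log can detect whether an earlier entry was rewritten. The function names `append_record` and `verify_chain` and the record fields are assumptions made up for this example.

```python
import hashlib
import json

# Hypothetical illustration only: not Fabric Foundation's actual design.
# Each record stores the hash of the previous record, so editing any
# earlier entry breaks the link stored in the entry that follows it.

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def record_hash(record: dict) -> str:
    """Hash a record deterministically (sorted keys, compact JSON)."""
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_record(chain: list, robot_id: str, action: str, result: str) -> None:
    """Append a robot activity record linked to the previous entry."""
    prev = record_hash(chain[-1]) if chain else GENESIS
    chain.append({"robot_id": robot_id, "action": action,
                  "result": result, "prev_hash": prev})


def verify_chain(chain: list) -> bool:
    """Recompute every link; any participant with a copy can run this."""
    expected_prev = GENESIS
    for record in chain:
        if record["prev_hash"] != expected_prev:
            return False
        expected_prev = record_hash(record)
    return True


if __name__ == "__main__":
    log = []
    append_record(log, "robot-7", "pick", "ok")
    append_record(log, "robot-7", "place", "ok")
    print(verify_chain(log))        # True
    log[0]["result"] = "failed"     # rewrite history after the fact
    print(verify_chain(log))        # False
```

A chain like this only detects tampering with entries that have a successor. The latest hash would still need to be replicated or published to other participants (for example, on a shared ledger), since a single operator could otherwise rewrite the final entry or replace the whole log.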

Of course, verification systems don’t exist in isolation. Once you create a network that coordinates machines through verifiable computing, a new set of operational questions appears. Who governs updates to robotic behaviors? How are data contributions validated? How do multiple stakeholders collaborate without handing control to a single operator?

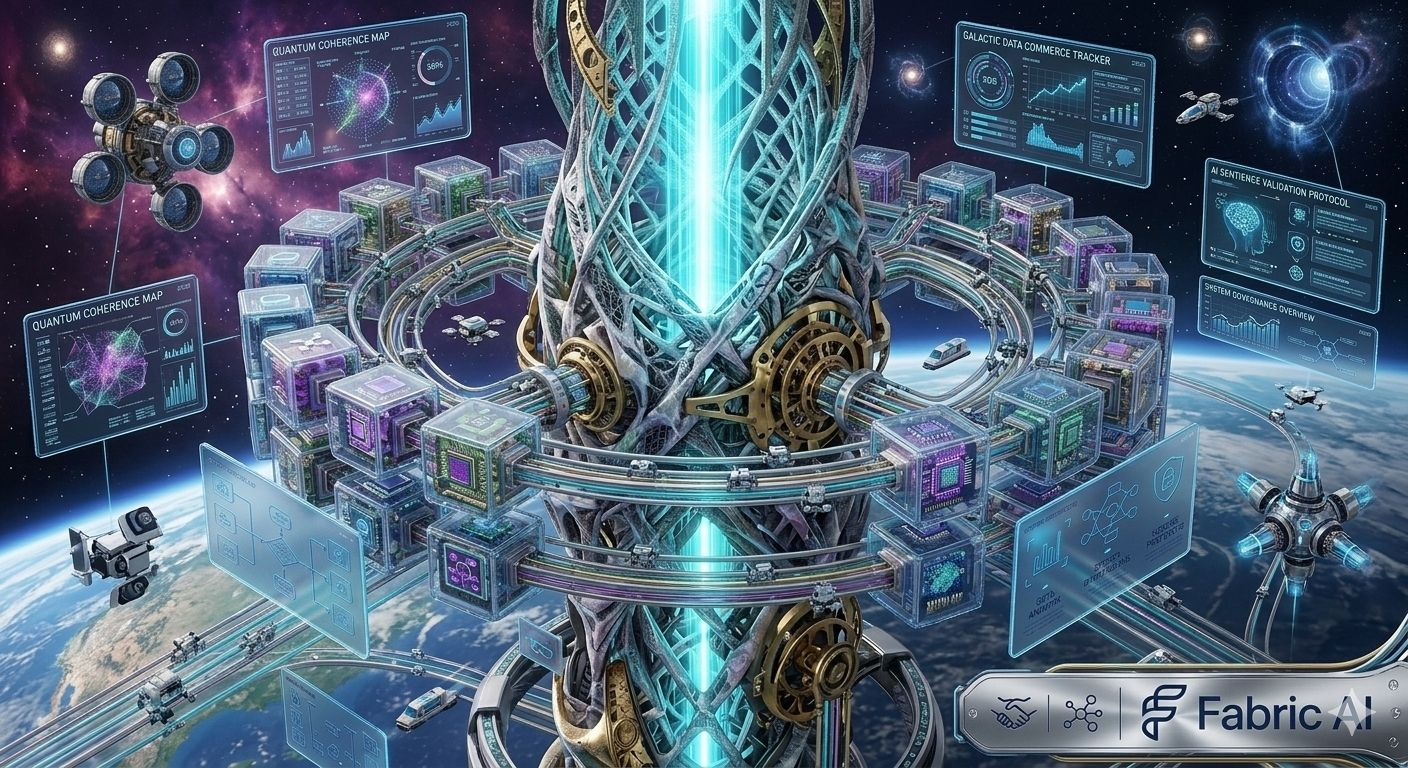

These questions matter because robotics is entering a phase where autonomy is expanding quickly. Warehouses, logistics systems, and industrial environments are already integrating fleets of machines that interact with real-world assets. When dozens or hundreds of robots coordinate tasks, governance becomes less about controlling one device and more about managing an entire system of agents acting simultaneously.

Fabric’s design tries to address that by introducing governance mechanisms directly into the infrastructure layer. Instead of separating robotics from network coordination, it merges the two. Data collection, computational work, and policy enforcement are organized through a public ledger, allowing decisions to be tracked and validated across participants.

The interesting part isn’t just transparency; it’s the shift in incentives. When machine activity becomes verifiable and governed through shared infrastructure, contributors (developers, operators, and organizations) can collaborate without relying solely on institutional trust. The system itself provides the auditability that normally requires centralized control.

But introducing governance into robotics networks also changes where risk appears. In a closed system, failures are usually local. A robot malfunctions, a model produces incorrect output, or a sensor provides inaccurate readings. Those problems are serious, but they tend to remain contained within the organization operating the machines.

In an open, coordinated network, the risks move up the stack. Governance disputes, flawed verification mechanisms, or poorly designed coordination rules can affect the behavior of many machines at once. Instead of debugging a single robot, operators may need to address systemic issues affecting an entire network of autonomous agents.

That doesn’t make the model weaker. In many ways it makes the system more realistic. As robotics expands beyond isolated deployments, coordination will increasingly happen between organizations, not just within them. A robot delivering goods, inspecting infrastructure, or collecting environmental data may interact with multiple entities that each have their own incentives.

This is why governance becomes a foundational problem rather than a peripheral one. Trust in robotics isn’t only about whether a machine can perform a task. It’s about whether the ecosystem around that machine can enforce accountability, resolve disputes and maintain reliable operation as complexity grows.

Fabric’s approach suggests that the future of robotics may look less like a collection of proprietary machines and more like a coordinated network of autonomous agents. In that environment, trust isn’t created solely by engineering precision. It emerges from transparent systems that define how machines collaborate, how their work is verified, and how decisions about their behavior are governed.

The real test of this model won’t come when everything is running smoothly. Any system can appear trustworthy when conditions are stable. The real question is how the governance layer behaves when pressure increases—when machines produce conflicting data, when incentives diverge, or when network participants disagree on how robots should operate.

That’s where the long-term value of the Fabric model will ultimately be measured. Not in whether robots can join a network, but in whether that network can maintain trust when the complexity of real-world coordination starts to push the system to its limits.

@Fabric Foundation #ROBO