What if the future economy is run by machines but no one can verify what those machines are actually doing?

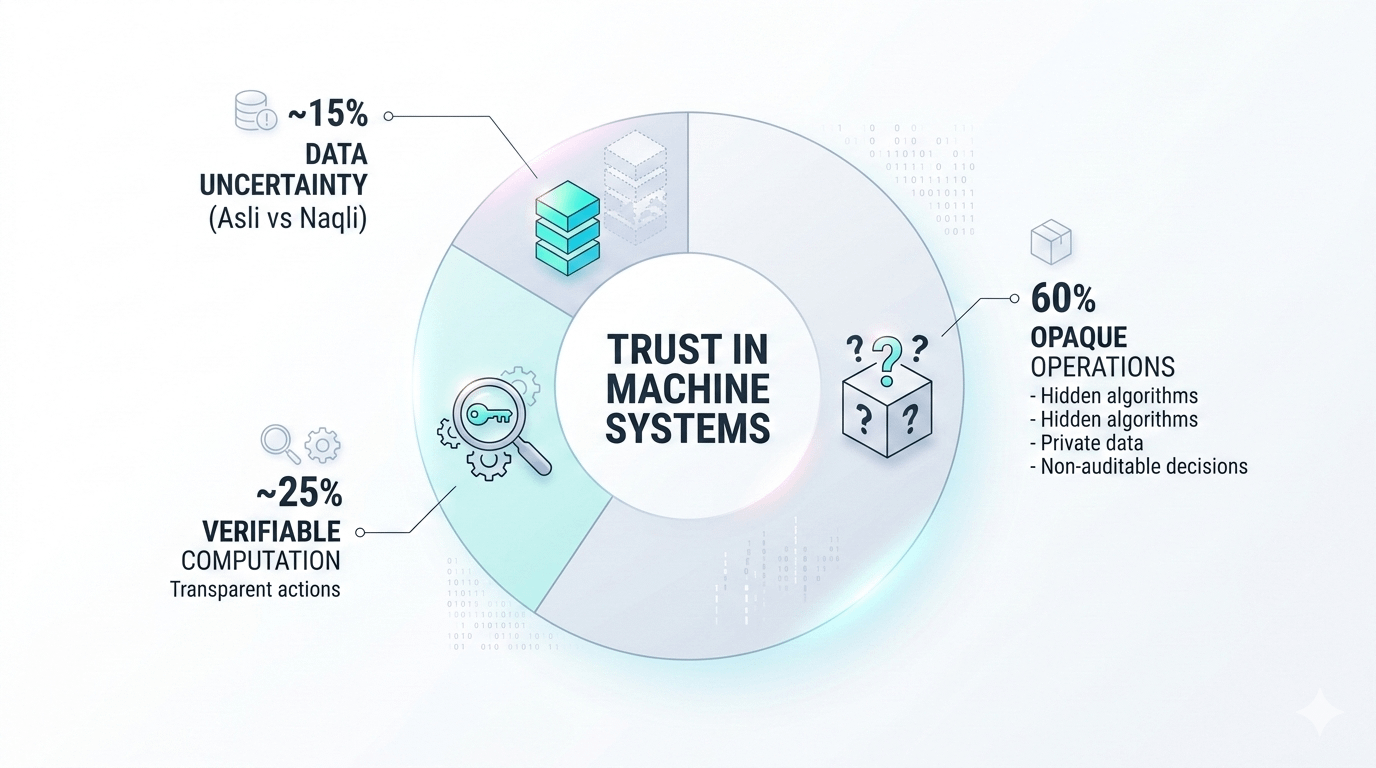

Robots making logistics decisions. AI agents running infrastructure. Autonomous systems interacting with humans. The question is no longer just about intelligence. The real question is about trust. Who verifies the actions of these systems? Who checks the computation? And how do we know whether the data feeding them is genuine or fake ("Asli vs Naqli")?

This is the type of challenge @Fabric Foundation is trying to solve.

This is not another typical blockchain narrative, and it is not just an AI experiment either. The idea sits right at the intersection of robotics, verification, and decentralized coordination. Fabric is essentially attempting to build a global network where robots and AI agents can collaborate while their actions remain transparent. Every critical operation can pass through a kind of checkpoint, allowing the network to verify what machines actually did.

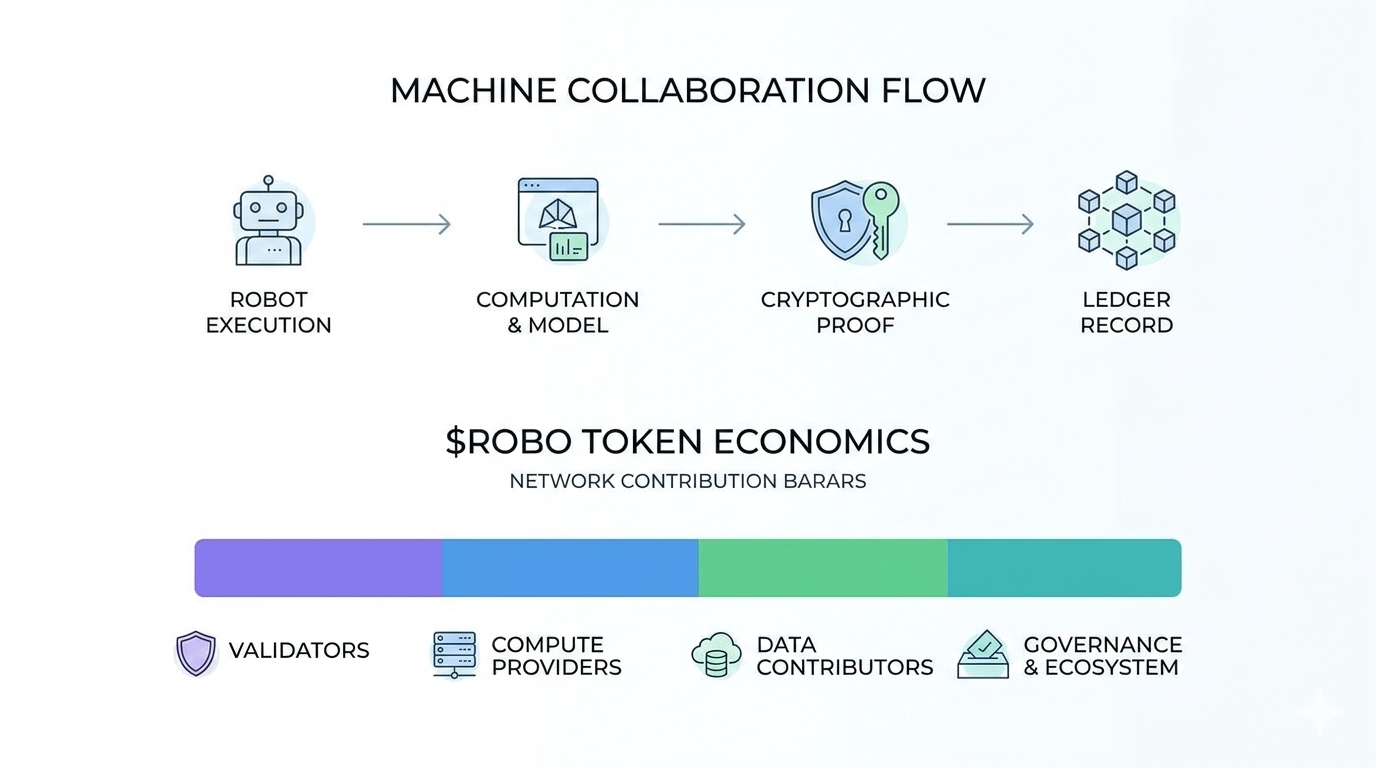

Today, most robotics systems operate behind closed doors. Companies run them on private infrastructure. The algorithms are hidden. The training data is rarely visible. When machines make decisions, outsiders usually have no way to audit the process. Fabric proposes a different model. Instead of isolated systems, it introduces shared infrastructure where machine activity can be recorded and verified through a public ledger. Machines do not simply execute tasks. They also provide cryptographic proof that those tasks were executed as expected.
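To make the "record and verify" idea concrete, here is a minimal sketch of an append-only, hash-chained log. It is not Fabric's actual protocol: `ToyLedger`, the field names, and the robot ID are all hypothetical, and a shared-key HMAC stands in for the public-key signatures a real network would presumably use.

```python
import hashlib
import hmac
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(obj: dict) -> str:
    """Deterministic SHA-256 digest of a dict (keys sorted)."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode()).hexdigest()


def _tag(key: bytes, body: dict) -> str:
    """HMAC tag over the entry body; a stand-in for a real signature."""
    msg = json.dumps(body, sort_keys=True).encode()
    return hmac.new(key, msg, hashlib.sha256).hexdigest()


class ToyLedger:
    """Hypothetical ledger: each entry commits to the one before it."""

    def __init__(self) -> None:
        self.entries: list[dict] = []

    def append(self, robot_id: str, task: str, output: bytes, key: bytes) -> dict:
        body = {
            "robot_id": robot_id,
            "task": task,
            "output_digest": hashlib.sha256(output).hexdigest(),
            "prev": self.entries[-1]["hash"] if self.entries else GENESIS,
        }
        signed = {**body, "tag": _tag(key, body)}
        entry = {**signed, "hash": _digest(signed)}
        self.entries.append(entry)
        return entry

    def verify(self, keys: dict[str, bytes]) -> bool:
        """Re-check the chain links, the tags, and the entry hashes."""
        prev = GENESIS
        for e in self.entries:
            body = {k: e[k] for k in ("robot_id", "task", "output_digest", "prev")}
            signed = {**body, "tag": e["tag"]}
            key = keys.get(e["robot_id"])
            if key is None or e["prev"] != prev:
                return False
            if not hmac.compare_digest(_tag(key, body), e["tag"]):
                return False
            if _digest(signed) != e["hash"]:
                return False
            prev = e["hash"]
        return True
```

In this toy version, editing any recorded field after the fact makes `verify` fail, which is the property a public ledger is meant to give outside auditors.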

It may sound simple at first, but the implications could be significant. As robotics expands into logistics, manufacturing, healthcare, and other automation-heavy industries, the ability to verify machine behavior becomes increasingly important. Fabric approaches this through verifiable computing. AI outputs can be linked to cryptographic proofs, allowing observers to confirm that specific computations actually occurred. The network architecture is designed so robots act as participants rather than passive devices. They interact directly with the protocol, creating a traceable layer of machine collaboration.

But there is an important nuance here that many people overlook. Blockchain can confirm that something happened. It does not automatically confirm that the decision itself was correct. A robot might prove that it executed a model exactly as programmed, yet that model could still rely on flawed or biased data. This is where the genuine-versus-fake ("Asli vs Naqli") data problem becomes serious. If the data entering the system is unreliable, verification alone cannot fix the mistake. It simply proves that the flawed process happened exactly as designed.
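The gap can be shown with a toy example. Everything here is an assumption made for illustration: the `model` rule, the sensor readings, and the hash commitment that stands in for a real proof of computation.

```python
import hashlib


def model(readings: list[int]) -> str:
    """Hypothetical decision rule: approve if the average reading is below 50."""
    return "approve" if sum(readings) / len(readings) < 50 else "reject"


def commit(readings: list[int], decision: str) -> str:
    """Hash commitment to (input, output); a stand-in for a real proof."""
    return hashlib.sha256(repr((list(readings), decision)).encode()).hexdigest()


def verify(readings: list[int], decision: str, commitment: str) -> bool:
    """Re-run the model and check that the commitment matches."""
    return model(readings) == decision and commit(readings, decision) == commitment


true_readings = [70, 72, 68]    # what a correct sensor would have reported
faulty_readings = [30, 32, 28]  # what a miscalibrated sensor actually reported

decision = model(faulty_readings)
proof = commit(faulty_readings, decision)

# Verification passes: the model really did run on these inputs...
execution_verified = verify(faulty_readings, decision, proof)
# ...but the decision differs from what the true readings would have produced.
decision_correct = decision == model(true_readings)
```

Here `execution_verified` is true while `decision_correct` is false: the check confirms the process, not the quality of the data that went into it.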

This gap between verification and truth is something almost every decentralized AI infrastructure project eventually faces. Fabric attempts to reduce the risk through modular architecture, separating layers responsible for data coordination, computation, and governance. The goal is to create an environment where machine collaboration becomes observable and auditable rather than hidden inside corporate systems.

Then there is the economic layer that holds the network together. Fabric introduces the $ROBO token as the incentive mechanism. Validators are expected to secure the network. Compute providers supply infrastructure resources. Data contributors support the ecosystem by feeding the network with useful inputs. The token becomes the economic glue connecting all these participants.

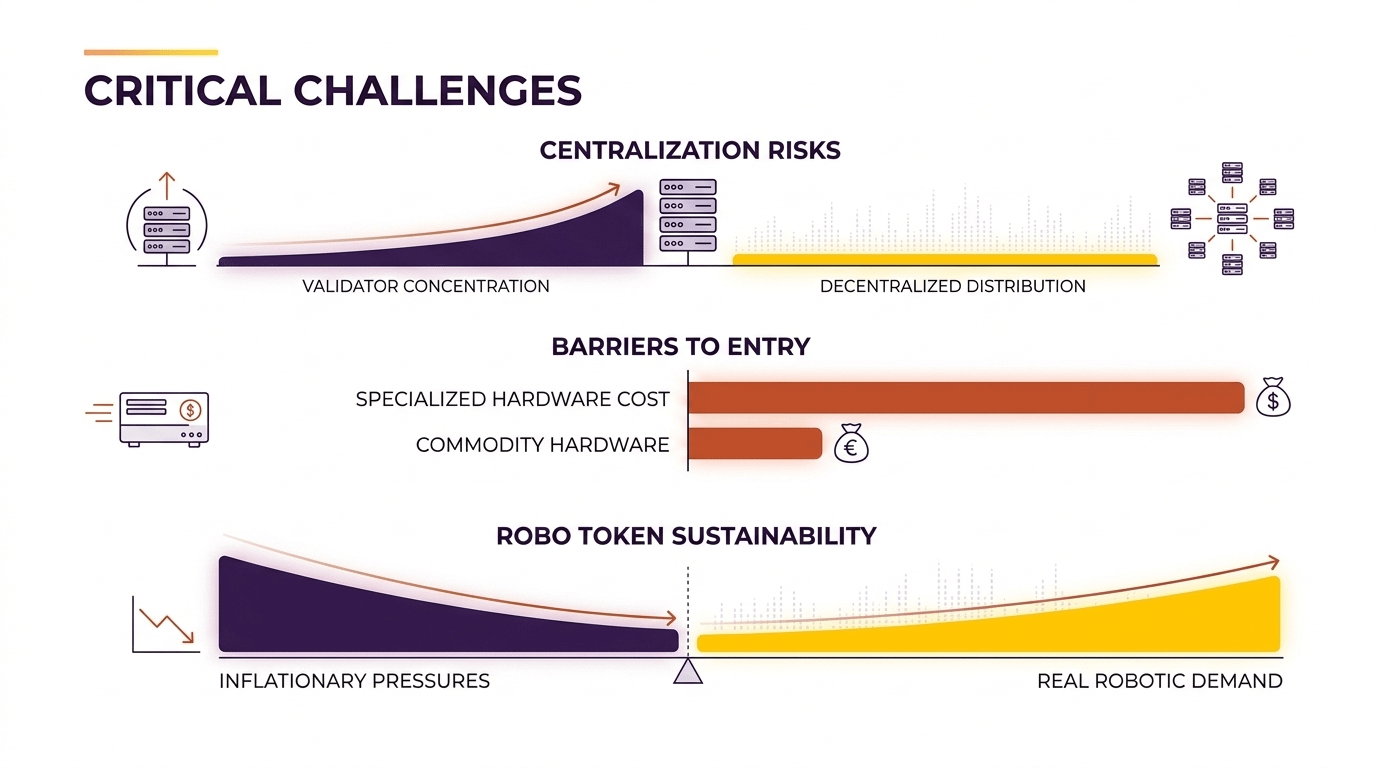

However, sustainability depends heavily on how this token economy evolves. If emissions are too high, inflation could weaken long-term value. If rewards are too small, infrastructure providers may not find it worthwhile to support the network. Robotics infrastructure requires reliability and stability over long periods, not short speculative cycles. For the model to work, demand for the token must eventually come from real robotic activity rather than temporary market hype.
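A quick back-of-the-envelope calculation shows how emissions translate into inflation. The numbers below are purely hypothetical and are not actual $ROBO figures. The sketch assumes a fixed number of new tokens per year and no burning.

```python
def project_supply(initial_supply: int, annual_emission: int, years: int):
    """Return (year, supply_at_year_end, inflation_rate) for each year.

    Inflation is measured as new tokens divided by the supply at the
    start of that year. Fixed emissions and no burns are assumed.
    """
    supply = initial_supply
    rows = []
    for year in range(1, years + 1):
        inflation = annual_emission / supply
        supply += annual_emission
        rows.append((year, supply, inflation))
    return rows


# Hypothetical scenario: 1 billion tokens circulating, 100 million emitted per year.
for year, supply, inflation in project_supply(1_000_000_000, 100_000_000, 3):
    print(f"year {year}: supply={supply:,} inflation={inflation:.2%}")
```

With a fixed emission the percentage rate drifts down over time (10% in the first year, about 9.09% in the second), but holders are still diluted every year unless demand from real robotic activity grows at least as fast.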

In my view, Fabric’s vision is quite ambitious. The idea of building a transparent coordination layer for machines makes logical sense as AI systems become more autonomous. But the path forward is not without risks. Validator power could concentrate if the infrastructure requirements are too demanding. Specialized hardware may create barriers that lead to centralization among a small group of operators. And the token economy must prove that incentives can remain balanced without relying on excessive inflation.

The bigger conversation is not just about Fabric Protocol itself. It is about how society plans to manage intelligent machines in the future. If robots and AI agents eventually control logistics networks, factories, or digital infrastructure, should their actions remain hidden within private systems? Or should there be a transparent network where anyone can run a verification checkpoint and confirm what those machines are actually doing?

I’m curious what others think about this direction. As autonomous machines become more powerful, will decentralized verification networks become necessary, or will centralized control still dominate the robotics economy?