There is a strange assumption in discussions about robotics: if machines become intelligent enough, everything else will sort itself out.

Better perception models, stronger reasoning engines, and more capable hardware are often seen as the key components for autonomous systems. However, intelligence alone does not address a much deeper issue that arises when robots operate outside controlled environments.

Autonomous machines need identity.

In a factory or warehouse, a robot’s identity doesn’t matter much. The company that operates the facility controls the hardware, software, and the surrounding network. Trust is centralized. Every machine belongs to a known system.

However, robotics is no longer limited to these environments. Delivery bots navigate public streets, drones inspect infrastructure, and agricultural machines gather environmental data across vast regions. Once robots function in shared spaces, they behave less like tools and more like participants in a network.

Every network participant raises the same question: can its actions be verified?

This is where the infrastructure explored by @Fabric Foundation becomes interesting. Fabric Protocol treats machines not just as devices but as autonomous agents that can take part in verifiable computation networks.

When a robot completes a computational task, such as analyzing sensor data, generating maps, or interpreting environmental signals, the output can be paired with a cryptographic proof. This proof allows other systems to verify that the computation was done correctly.

Instead of relying blindly on a machine’s internal software, the network checks the outcome.
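To make the idea concrete, here is a minimal sketch of the simplest form of outcome checking: a validator re-runs a deterministic task on the same input and compares the result with what the robot reported. This is an illustration only, not Fabric Protocol's actual mechanism. The task (`summarize_readings`) and every function name are hypothetical, and a real verifiable-computation system would likely use a succinct proof rather than full re-execution.

```python
import hashlib
import json


def summarize_readings(readings):
    """Hypothetical deterministic task: summarize a list of sensor readings."""
    return {
        "min": min(readings),
        "max": max(readings),
        "mean": sum(readings) / len(readings),
    }


def commitment(task_input, task_output):
    """SHA-256 hash that binds a claimed output to the input it came from."""
    payload = json.dumps({"input": task_input, "output": task_output}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def robot_report(readings):
    """What the robot publishes: input, output, and a commitment over both."""
    output = summarize_readings(readings)
    return {
        "input": readings,
        "output": output,
        "commitment": commitment(readings, output),
    }


def validator_accepts(report):
    """Re-run the task and accept only if output and commitment both match."""
    expected = summarize_readings(report["input"])
    return (
        expected == report["output"]
        and commitment(report["input"], expected) == report["commitment"]
    )


report = robot_report([1.0, 2.0, 3.0])
accepted = validator_accepts(report)

# A tampered output no longer matches what the validator recomputes.
tampered = dict(report, output=dict(report["output"], max=99.0))
tampered_accepted = validator_accepts(tampered)
```

Re-execution is easy to reason about but costs the validator as much as the original computation, which is why proof systems that are cheaper to check than to produce matter at scale.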

These verified results can then be recorded in a shared ledger, creating a transparent history of machine activity. This leads to a structure where robotic actions become auditable events instead of obscure operations.
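A shared ledger of this kind can be pictured as an append-only log in which each entry includes the hash of the one before it, so any later change to history becomes detectable. The sketch below is a single-process toy under that assumption; the record fields and function names are invented for illustration and do not describe Fabric's ledger format.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(prev_hash, record):
    """Hash an entry together with the hash of the entry before it."""
    payload = json.dumps({"prev": prev_hash, "record": record}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append(ledger, record):
    """Append a record, chaining it to the current tail of the ledger."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    ledger.append(
        {"prev": prev_hash, "record": record, "hash": entry_hash(prev_hash, record)}
    )


def is_intact(ledger):
    """Walk the chain and confirm every link and every hash still matches."""
    prev_hash = GENESIS
    for entry in ledger:
        if entry["prev"] != prev_hash:
            return False
        if entry["hash"] != entry_hash(prev_hash, entry["record"]):
            return False
        prev_hash = entry["hash"]
    return True


ledger = []
append(ledger, {"robot_id": "robot-7", "task": "map-segment", "verified": True})
append(ledger, {"robot_id": "robot-9", "task": "bridge-scan", "verified": True})
intact_before = is_intact(ledger)

# Rewriting an old record breaks the chain from that point on.
ledger[0]["record"]["task"] = "something-else"
intact_after = is_intact(ledger)
```

In a real network, many independent parties would hold copies of the log and agree on its contents; the hash chain only makes tampering evident, it does not prevent it.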

This design subtly changes the role of data generated by machines.

Currently, most robotics deployments create large amounts of information that remain confined within proprietary systems. Navigation maps, infrastructure scans, and operational data are all kept within individual organizations.

But once machine computations can be verified and linked to persistent identities, these outputs can be used in a wider coordination network. Data from one system becomes accessible to others without requiring blind trust.
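Linking an output to a persistent identity usually means the machine signs what it publishes and consumers check the signature against a registry of known machines. Production systems typically use asymmetric signatures so that verifiers never hold a secret; Python's standard library has none, so the sketch below substitutes an HMAC with a shared key purely as a stand-in. The registry, robot ID, and key are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical identity registry: robot ID -> key.
# Stand-in only: a real deployment would store public keys, not shared secrets.
REGISTRY = {"robot-7": b"demo-secret-key"}


def _message(robot_id, output):
    """Canonical bytes covering both the identity and the output."""
    return json.dumps({"robot_id": robot_id, "output": output}, sort_keys=True).encode("utf-8")


def sign_output(robot_id, key, output):
    """Attach an authentication tag that ties the output to the robot's identity."""
    tag = hmac.new(key, _message(robot_id, output), hashlib.sha256).hexdigest()
    return {"robot_id": robot_id, "output": output, "tag": tag}


def verify_output(signed, registry):
    """Accept only outputs from a registered identity with a matching tag."""
    key = registry.get(signed["robot_id"])
    if key is None:
        return False
    expected = hmac.new(
        key, _message(signed["robot_id"], signed["output"]), hashlib.sha256
    ).hexdigest()
    return hmac.compare_digest(expected, signed["tag"])


signed = sign_output("robot-7", REGISTRY["robot-7"], {"soil_moisture": 0.31})
genuine_ok = verify_output(signed, REGISTRY)

# Changing the data, or claiming an unregistered identity, fails verification.
altered_ok = verify_output(dict(signed, output={"soil_moisture": 0.99}), REGISTRY)
unknown_ok = verify_output(dict(signed, robot_id="robot-404"), REGISTRY)
```

Note that a tag like this shows who produced the data and that it was not altered; it says nothing about whether the underlying computation was correct, which is the job of the proof step.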

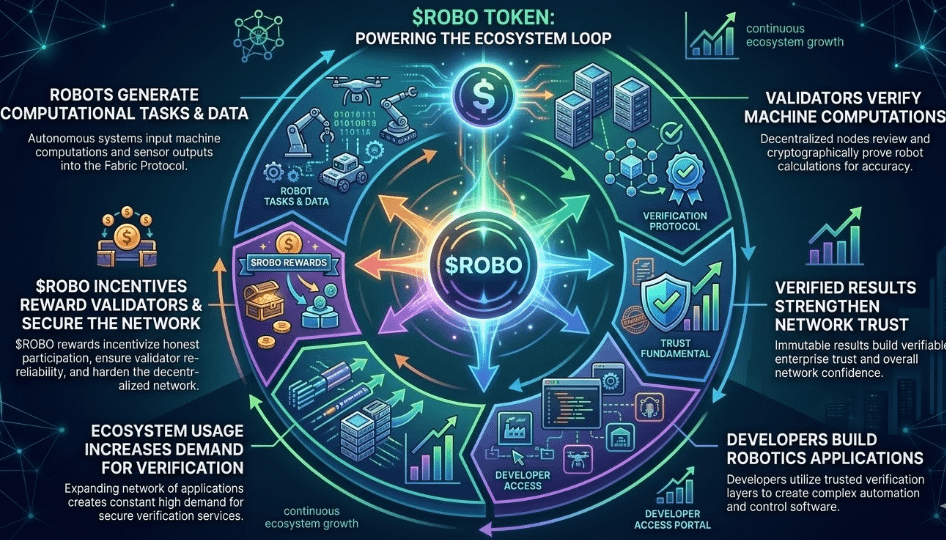

Maintaining this verification layer requires incentives, which is where the token $ROBO comes into play.

In Fabric’s ecosystem, validators confirm the computational proofs generated by machines and ensure that outputs remain trustworthy across the network. Developers creating robotic applications rely on this verification infrastructure to validate the data entering their systems. Governance participants help guide how the protocol develops as machine networks grow.

Thus, the token becomes part of the mechanism that supports the verification market itself: ROBO is meant to align the incentives of the participants responsible for maintaining that trust layer.

What makes this architecture especially relevant now is the growing overlap between AI agents and physical robotics systems. Autonomous software agents can already execute tasks, manage data flows, and interact with decentralized networks. As these agents start coordinating with physical machines, verification becomes even more crucial.

When a robot executes instructions from an AI agent, multiple layers of uncertainty arise. The system must verify not only what the machine did but also how the computation behind the instruction was produced.

Fabric’s approach suggests that autonomous systems may need an identity and verification framework before large-scale machine collaboration can be reliable.

Implementing such infrastructure across real-world robotics networks will not be easy. Physical machines generate huge data streams, operate under latency constraints, and interact with environments governed by safety regulations.

Nonetheless, the trend is becoming harder to ignore. The robotics industry is advancing toward interconnected machine ecosystems instead of isolated devices.

In these ecosystems, intelligence alone is not sufficient.

Machines may ultimately need something humans have relied on for centuries in complex systems: a way to prove who they are and verify what they have done.

#ROBO $ROBO @Fabric Foundation