The more time I spend exploring AI tools, the more I notice a strange contradiction. On one hand, artificial intelligence has become incredibly capable. Models can summarize complex documents, generate code, analyze market data, and even assist with research. On the other hand, anyone who has used these systems seriously knows that they still make mistakes — sometimes subtle, sometimes obvious. An answer can sound extremely confident while still being wrong.

That tension between capability and reliability is one of the quiet challenges in the AI industry today. We are building systems that can produce large amounts of information, but we still struggle to verify whether that information is trustworthy. When AI is used casually, the risk is small. But as these systems move into financial analysis, automation, and decision-making tools, the reliability problem becomes much harder to ignore.

While looking into projects trying to address this issue, I came across **Mira Network**. What interested me was that the project is not trying to compete in the race to build the most powerful AI model. Instead, it focuses on something more foundational: how to verify AI-generated information before people rely on it.

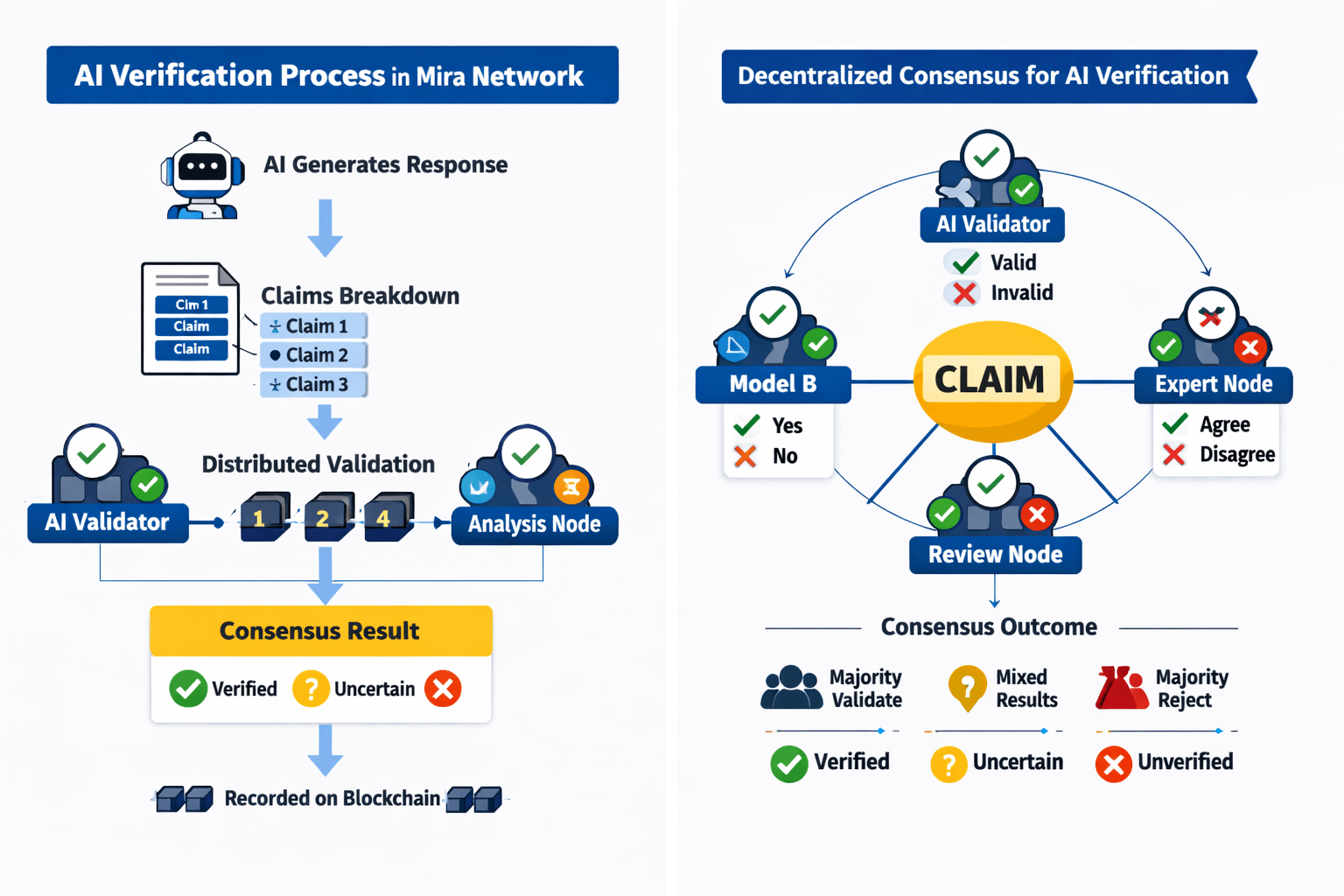

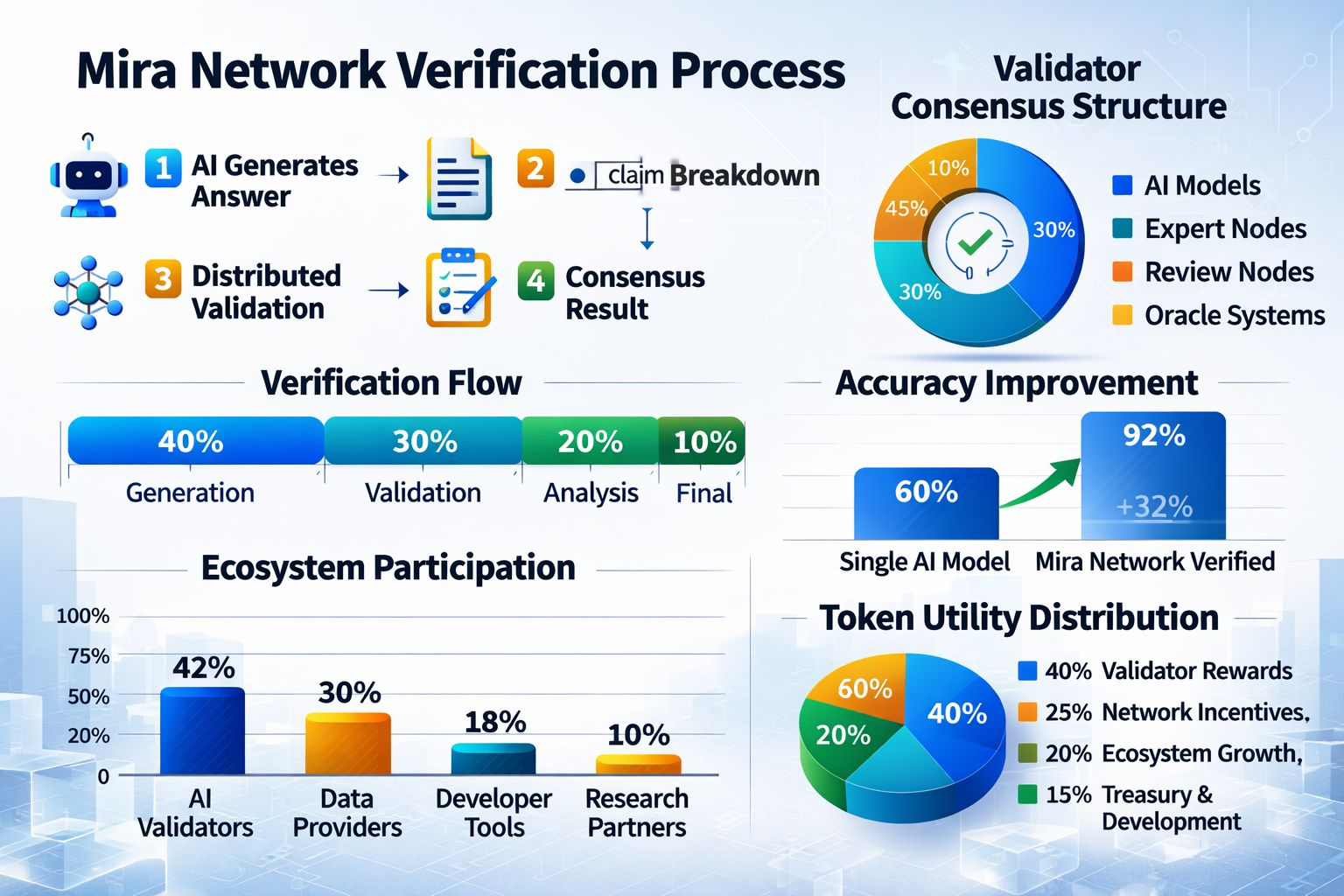

The idea behind Mira is surprisingly straightforward. Instead of treating an AI answer as a finished result, the system treats it more like a set of claims that need to be tested.

Imagine an AI generating a detailed explanation or report. Normally, that response would simply be delivered to the user. In Mira’s system, the process doesn’t stop there. The output is broken down into smaller pieces of information — individual claims that can be examined separately.
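
To make the idea of "breaking an output into claims" concrete, here is a deliberately naive sketch. It simply treats each sentence as one claim. The function name `split_into_claims` is my own, and this is only an illustration of the concept, not how Mira actually extracts claims.

```python
import re


def split_into_claims(text: str) -> list[str]:
    """Naive stand-in for claim extraction: one sentence = one claim.

    Assumption: sentences end in '.', '!' or '?' followed by whitespace.
    A real system would need something far more careful than this.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if s]


report = "The sky is blue. Water boils at 100 C at sea level."
for claim in split_into_claims(report):
    print(claim)
```

Each printed line is a separate statement that could then be checked on its own.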

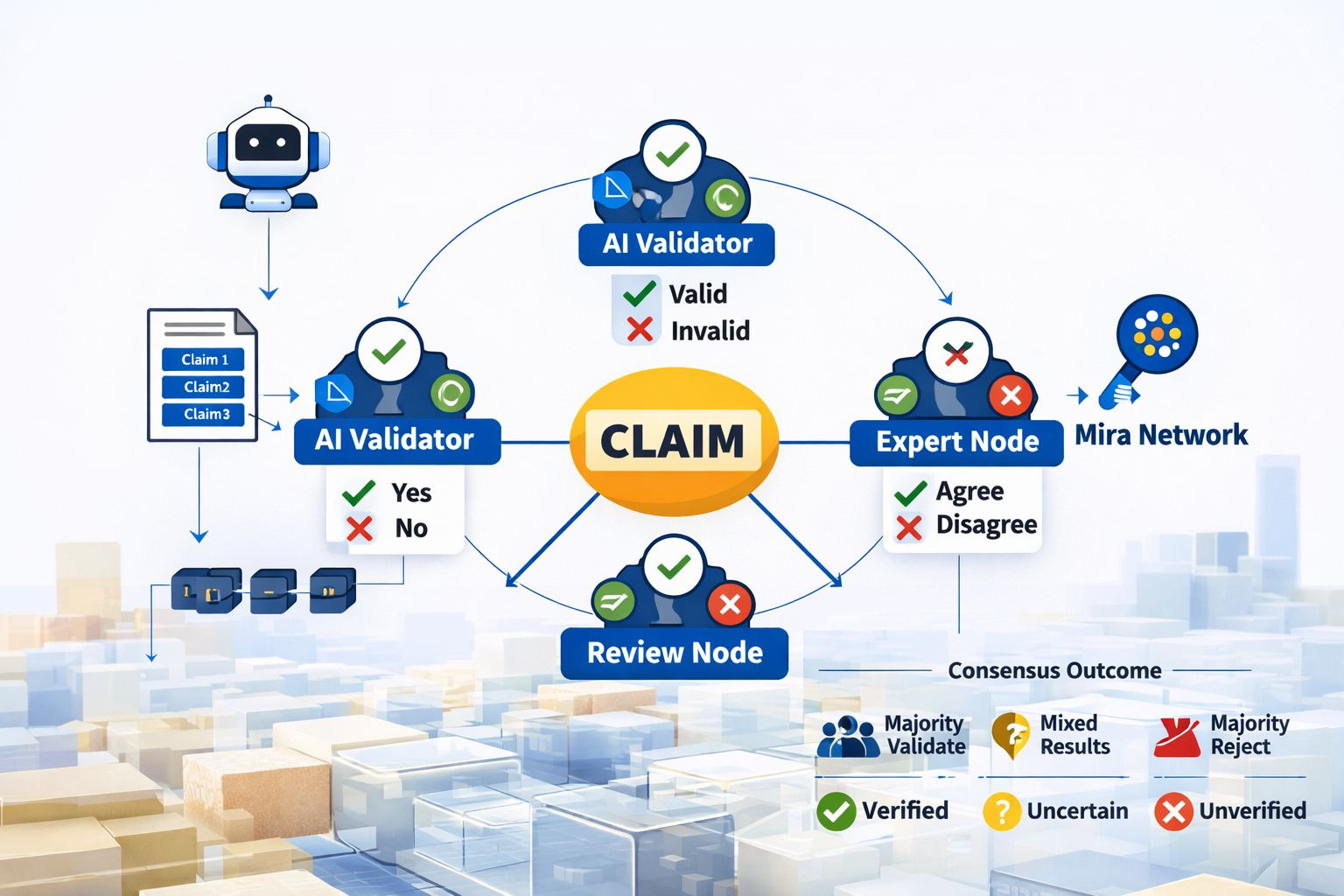

These claims are then sent to a network of validators. Each validator evaluates whether the claim appears accurate based on its own analysis. In many cases these validators can be other AI models, verification agents, or systems designed to analyze information from different perspectives.

Once multiple validators review the same claim, their responses are compared. If the majority of validators reach the same conclusion, the system can assign a higher level of confidence to that piece of information. If their responses disagree, the claim may be flagged as uncertain or unreliable.
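
The comparison step can be sketched as a simple majority vote. The `aggregate` function, the verdict labels, and the 0.5 threshold are all assumptions of mine for illustration; I have not seen the exact rule Mira uses.

```python
from collections import Counter


def aggregate(verdicts: list[str], threshold: float = 0.5) -> tuple[str, float]:
    """Return (label, agreement) for one claim.

    label is the most common verdict if its share of votes is strictly
    above `threshold`; otherwise the claim is marked 'uncertain'.
    """
    if not verdicts:
        return "uncertain", 0.0
    label, count = Counter(verdicts).most_common(1)[0]
    agreement = count / len(verdicts)
    if agreement > threshold:
        return label, agreement
    return "uncertain", agreement


print(aggregate(["true", "true", "false"]))  # two of three validators agree
print(aggregate(["true", "false"]))          # split vote, flagged as uncertain
```

The agreement ratio doubles as a rough confidence score: the more validators that land on the same verdict, the higher it goes.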

What this creates is a kind of distributed verification process. Instead of trusting a single model, the system relies on multiple independent evaluations. The final answer becomes something closer to “verified information” than to a single model’s opinion.

The blockchain layer helps coordinate this process. Verification tasks, validator participation, and results can be recorded on a public ledger. This makes the process transparent and traceable. Anyone examining the system can see how information was evaluated and which validators participated.
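
To show why a ledger makes results traceable, here is a toy hash-chained log in plain Python. It is not a blockchain and not Mira's data format; the record fields and the `append_record` and `verify_chain` helpers are hypothetical. The point is only that each record commits to the one before it, so later tampering is detectable.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first record


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(ledger: list[dict], claim: str, verdicts: dict[str, str]) -> dict:
    """Append a verification result that commits to the previous record's hash."""
    prev_hash = ledger[-1]["hash"] if ledger else GENESIS
    body = {"claim": claim, "verdicts": verdicts, "prev_hash": prev_hash}
    record = {**body, "hash": _digest(body)}
    ledger.append(record)
    return record


def verify_chain(ledger: list[dict]) -> bool:
    """Recompute every hash and check that the links between records hold."""
    prev = GENESIS
    for rec in ledger:
        body = {k: rec[k] for k in ("claim", "verdicts", "prev_hash")}
        if rec["prev_hash"] != prev or _digest(body) != rec["hash"]:
            return False
        prev = rec["hash"]
    return True


ledger: list[dict] = []
append_record(ledger, "The sky is blue.", {"v1": "true", "v2": "true"})
append_record(ledger, "Water boils at 50 C.", {"v1": "false", "v2": "false"})
print(verify_chain(ledger))
```

Anyone holding a copy of the log can rerun `verify_chain` and see which validators voted on which claim, which is the transparency property described above.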

From a design perspective, this structure resembles how blockchain networks verify financial transactions. Instead of one authority deciding whether something is valid, multiple participants contribute to a consensus.

Another interesting part of the system is how incentives are structured. Validators who contribute useful verification work can receive rewards, while inaccurate or dishonest behavior may carry penalties. The token associated with the network helps coordinate these incentives, encouraging participants to act carefully when evaluating claims.
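
A minimal sketch of how such incentives might work, assuming validators post a stake and are rewarded for matching the consensus verdict. The `settle` function and the reward and penalty amounts are invented for illustration and are not Mira's actual token mechanics.

```python
def settle(
    stakes: dict[str, float],
    verdicts: dict[str, str],
    consensus: str,
    reward: float = 1.0,
    penalty: float = 2.0,
) -> dict[str, float]:
    """Return updated stakes after one claim is resolved.

    Validators whose verdict matches the consensus gain `reward`;
    the others lose `penalty`, with stakes floored at zero.
    """
    updated = dict(stakes)
    for validator, verdict in verdicts.items():
        current = updated.get(validator, 0.0)
        if verdict == consensus:
            updated[validator] = current + reward
        else:
            updated[validator] = max(0.0, current - penalty)
    return updated


stakes = {"a": 10.0, "b": 10.0, "c": 1.0}
verdicts = {"a": "true", "b": "true", "c": "false"}
print(settle(stakes, verdicts, consensus="true"))
```

One caveat with this toy version: agreeing with the majority is only a proxy for being accurate, so a real design would need some way to avoid punishing a validator who is right when the crowd is wrong.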

What I find appealing about this approach is that it treats AI outputs less like answers and more like starting points for verification. AI generates the information, but the network tests it before it is accepted.

Of course, turning that concept into a practical system is not simple. Verification requires additional computation and coordination. If every AI response requires multiple layers of validation, the network needs to manage the balance between accuracy and efficiency.
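
To get a feel for that trade-off, here is a back-of-the-envelope calculation. It assumes every validator is independently correct with the same probability `p`, which is my simplification rather than a figure from Mira, and it asks how often a strict majority of `n` validators is right.

```python
from math import comb


def majority_correct_prob(n: int, p: float) -> float:
    """P(strict majority of n validators is correct).

    Assumes each validator is independently correct with probability p.
    """
    need = n // 2 + 1  # smallest number of correct votes that forms a majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


for n in (1, 3, 5):
    print(n, round(majority_correct_prob(n, 0.8), 5))
```

With `p = 0.8`, moving from 1 to 3 to 5 validators lifts majority accuracy from 0.8 to roughly 0.896 to roughly 0.942, while the number of verification calls per claim grows linearly. Each extra validator buys a smaller gain than the one before it, which is exactly the balance the network has to manage.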

Validator diversity is another factor that will likely matter over time. If validators rely on very similar AI models, they may repeat the same biases or mistakes. The system becomes more reliable when different types of models, datasets, and analytical approaches participate in the verification process.
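
A small simulation makes the diversity point easier to see. The model is an assumption of mine: with probability `shared`, all validators behave like copies of one model and return the same verdict; otherwise they judge independently. Each judgment is correct with probability `p`.

```python
import random


def simulate_majority_accuracy(
    n: int, p: float, shared: float, trials: int = 20000, seed: int = 0
) -> float:
    """Estimate how often the majority verdict is correct.

    shared = 0.0 means fully independent validators;
    shared = 1.0 means every validator repeats the same single judgment.
    """
    rng = random.Random(seed)
    correct = 0
    for _ in range(trials):
        if rng.random() < shared:
            votes = [rng.random() < p] * n  # one judgment, copied n times
        else:
            votes = [rng.random() < p for _ in range(n)]
        if sum(votes) > n / 2:
            correct += 1
    return correct / trials


print("independent:", simulate_majority_accuracy(5, 0.8, shared=0.0))
print("identical:  ", simulate_majority_accuracy(5, 0.8, shared=1.0))
```

When the validators are effectively clones, five votes are no more reliable than one, because they all fail on the same inputs. The benefit of voting only appears when their errors are at least partly independent.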

Adoption will also play a large role in determining how useful the network becomes. Developers building AI applications need practical reasons to integrate a verification layer. That means the protocol must provide tools, incentives, and performance that fit naturally into existing workflows.

While studying Mira’s documentation and community discussions, I spent some time thinking about where this type of system fits in the broader AI landscape. Most attention in the industry is still focused on improving model performance. But reliability may become just as important as capability.

If AI systems are expected to assist with research, trading, medical insights, or automated decision making, users will want more than impressive answers. They will want systems that can demonstrate why those answers should be trusted.

This is where verification infrastructure starts to make sense. Just as blockchains introduced new ways to verify financial transactions without centralized intermediaries, similar mechanisms might eventually be used to verify information generated by machines.

During my own research process, I also looked at how the project is discussed across the crypto and AI communities. Interestingly, many conversations focus on the concept of verifiable AI rather than short-term price speculation. That type of discussion usually suggests people are trying to understand the mechanics of the system rather than simply chasing a narrative.

Still, there are open questions that will only be answered over time. Scaling a verification network while maintaining fast response times will require careful engineering. Governance decisions, validator incentives, and developer adoption will all influence how the ecosystem evolves.

But the core idea remains compelling. Artificial intelligence can generate enormous amounts of information, yet our ability to verify that information has not evolved at the same pace. Systems like Mira attempt to close that gap by introducing distributed verification into the AI pipeline.

Whether Mira ultimately becomes a widely used piece of infrastructure will depend on execution and adoption. What is clear, however, is that the problem it is addressing will only become more important as AI systems continue to expand.

In a world increasingly shaped by machine-generated knowledge, the question may not only be how intelligent our systems are, but how confidently we can trust what they produce.
