Ever since large language models started writing prose that sounded like expert analysis, researchers have flagged some big problems: hallucinated facts, drifting accuracy, and baked-in bias. They didn’t just notice these issues; they tracked them in journals like Nature, Science, and the Journal of Machine Learning Research. People in finance, healthcare, and autonomous vehicles saw the risk right away. One mistake from an AI system could ripple out and cause real harm. Pretty soon, regulators, insurers, and risk officers started demanding proof for every AI-generated decision: clear records and real accountability.

Meanwhile, the blockchain world had already spent years perfecting permissionless ledgers and building tools like Chainlink to guarantee untampered market data. But authenticating complex, context-rich AI outputs turned out to be a new beast. Early projects tried blending Merkle proofs with on-chain verification, but those first attempts just didn’t cut it. The solutions weren’t flexible enough, and they struggled to handle the scale needed for high-stakes applications.

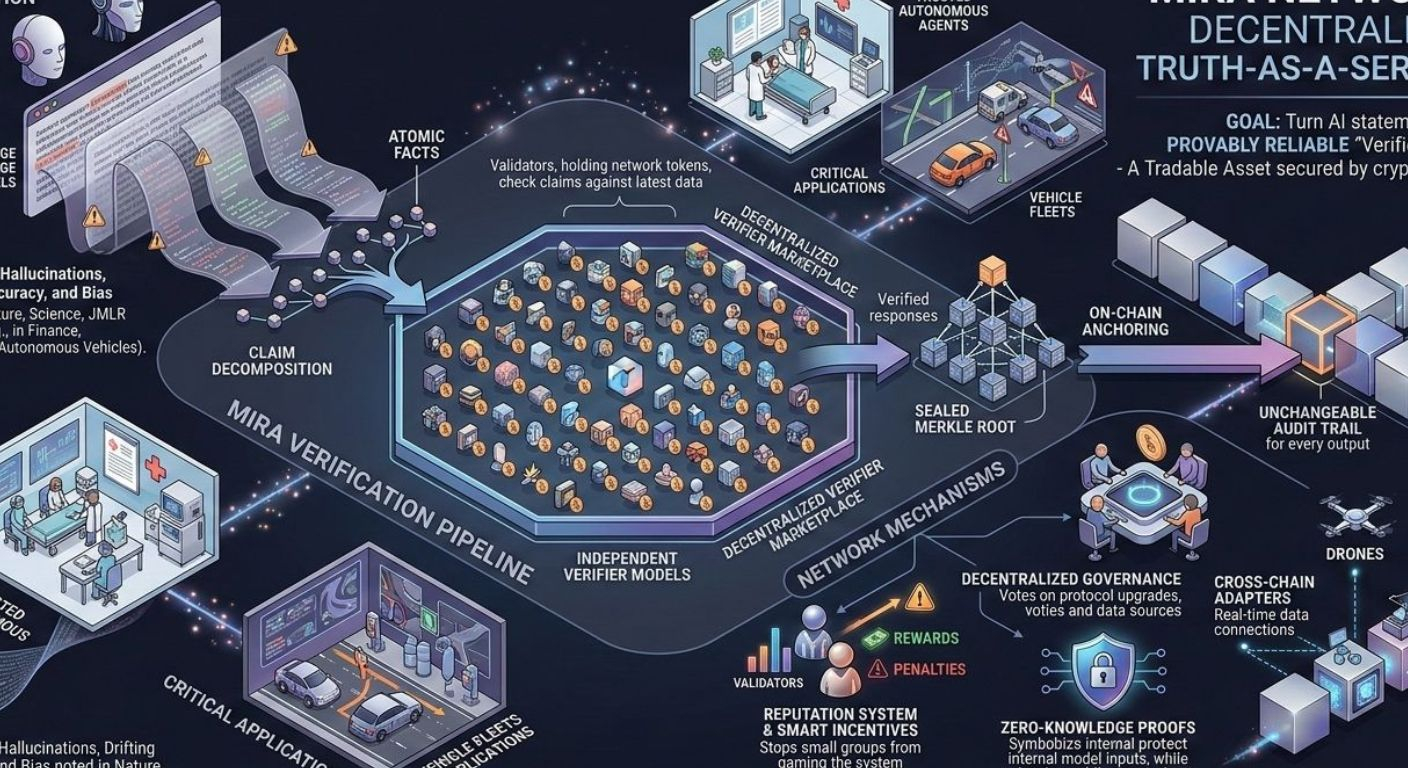

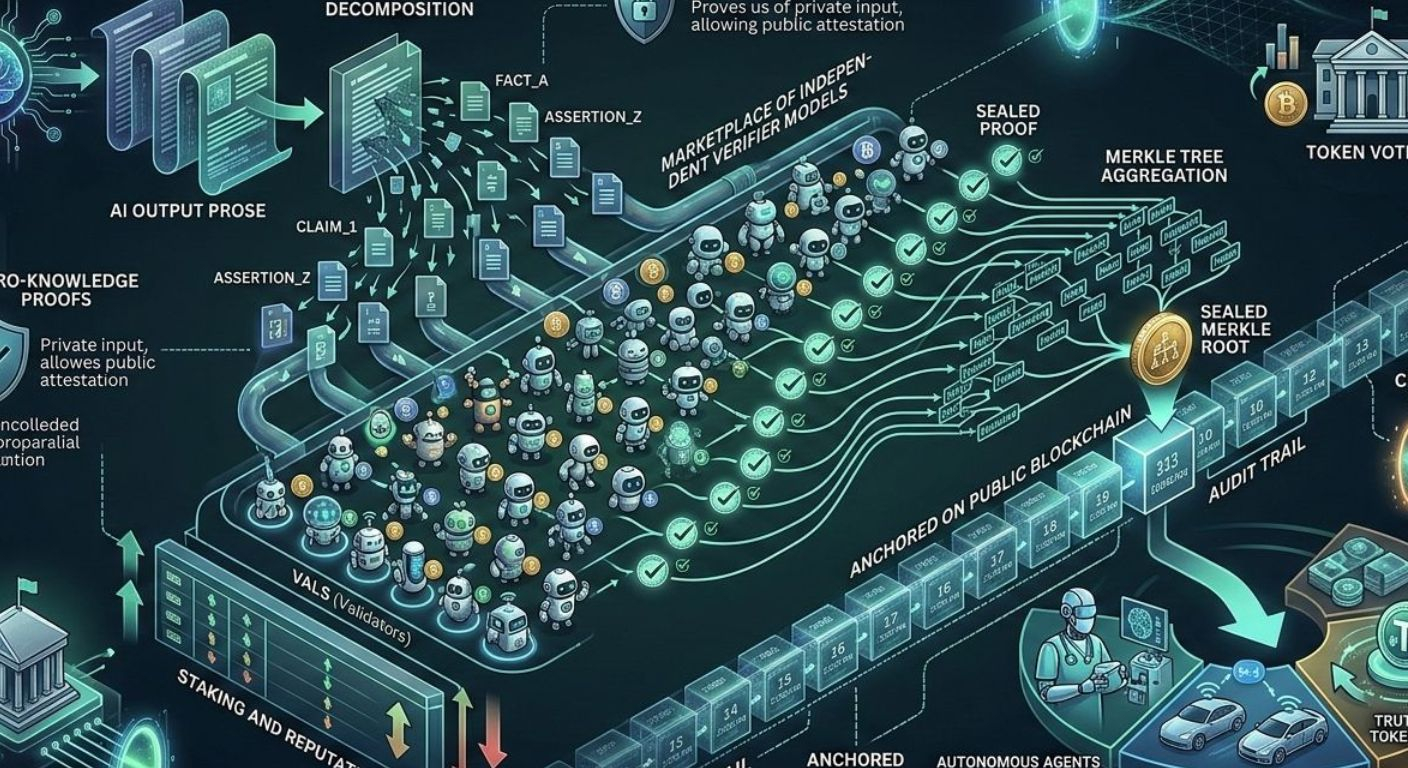

All this led to a simple but powerful idea: what if you could build a decentralized “truth-as-a-service” layer, something that harnessed AI’s creativity but still satisfied the strict demands of regulators? So, in late 2023, a group of engineers, scientists, and cryptographers got together to design a new kind of protocol. Here’s how it works: every model response gets broken down into individual claims. Each claim goes out to an open marketplace of independent verifier models. Their judgments get bundled into a sealed Merkle root, which is then anchored on a public blockchain. That means you end up with an unchangeable audit trail for every AI output.
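To make the "bundled into a Merkle root" step concrete, here is a minimal Python sketch. It is not Mira's actual implementation: the leaf encoding (sorted JSON), the SHA-256 hash, the one-byte leaf/node prefixes, and every function name are assumptions chosen for illustration. It shows how a set of claims and their verifier verdicts could be hashed into a single root, and how one claim can later be checked against that root.

```python
import hashlib
import json

# Hypothetical encoding: a leaf is one claim plus the verdict of each verifier.
def leaf_hash(claim: str, verdicts: dict[str, bool]) -> bytes:
    payload = json.dumps({"claim": claim, "verdicts": verdicts}, sort_keys=True)
    # The 0x00 / 0x01 prefixes keep leaf hashes and inner-node hashes distinct.
    return hashlib.sha256(b"\x00" + payload.encode("utf-8")).digest()

def node_hash(left: bytes, right: bytes) -> bytes:
    return hashlib.sha256(b"\x01" + left + right).digest()

def _next_level(level: list[bytes]) -> list[bytes]:
    return [node_hash(level[i], level[i + 1]) for i in range(0, len(level), 2)]

def merkle_root(leaves: list[bytes]) -> bytes:
    if not leaves:
        raise ValueError("need at least one leaf")
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])  # simplification: duplicate the last node
        level = _next_level(level)
    return level[0]

def merkle_proof(leaves: list[bytes], index: int) -> list[tuple[bytes, bool]]:
    """Return (sibling_hash, sibling_is_left) pairs from the leaf up to the root."""
    proof = []
    level = list(leaves)
    while len(level) > 1:
        if len(level) % 2 == 1:
            level.append(level[-1])
        sibling = index ^ 1  # neighbour within the same pair
        proof.append((level[sibling], sibling < index))
        level = _next_level(level)
        index //= 2
    return proof

def verify_proof(leaf: bytes, proof: list[tuple[bytes, bool]], root: bytes) -> bool:
    current = leaf
    for sibling, sibling_is_left in proof:
        current = node_hash(sibling, current) if sibling_is_left else node_hash(current, sibling)
    return current == root
```

Only the 32-byte root would need to be written on-chain. Anyone holding a claim, its verdicts, and the proof path can then recompute the root and confirm that the record was part of the anchored batch. Duplicating the last node on odd levels keeps the sketch short; a production design would likely pick a stricter tree construction.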

The result is Mira Network. The goal? Turn every AI-generated statement from a “maybe” into something provably reliable. That unlocks all sorts of things: autonomous agents you can actually trust in hospitals or vehicle fleets, for example. It’s about giving people a reason to trust machine-driven decisions again, and even turning verified truth into a tradable asset, secured by cryptography and smart incentives.

Here’s what Mira’s pipeline actually does: it takes each AI output, splits it into atomic facts, and sends them to a decentralized pool of lightweight validator models. These validators, each holding network tokens, check every claim against the latest data. They return sealed proofs, which get rolled up into a Merkle root and recorded on a public ledger. The system ties rewards and penalties to each validator’s performance, and a reputation system boosts the influence of validators who keep getting it right. This way, the network aligns everyone’s incentives and stops small groups from gaming the system.
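The incentive loop described above can be sketched in a few lines of Python. Everything specific here is an assumption rather than Mira's published design: the voting weight of `stake * reputation`, the simple-majority threshold, the flat reward, the 10% slash, and the names `Validator`, `weighted_verdict`, and `settle` are all hypothetical. The sketch only illustrates how rewards, penalties, and reputation could feed back into each validator's influence.

```python
from dataclasses import dataclass

@dataclass
class Validator:
    name: str
    stake: float             # tokens the validator has locked up
    reputation: float = 1.0  # grows with correct votes, shrinks with wrong ones

    @property
    def weight(self) -> float:
        # Assumed weighting rule: influence scales with stake and track record.
        return self.stake * self.reputation

def weighted_verdict(validators: list[Validator], votes: dict[str, bool]) -> bool:
    """Accept the claim if more than half of the total weight voted True."""
    total = sum(v.weight for v in validators)
    in_favor = sum(v.weight for v in validators if votes[v.name])
    return in_favor * 2 > total

def settle(
    validators: list[Validator],
    votes: dict[str, bool],
    reward: float = 1.0,
    slash_fraction: float = 0.1,
    rep_step: float = 0.1,
) -> bool:
    """Compute the consensus verdict, then reward or penalize each validator."""
    verdict = weighted_verdict(validators, votes)
    for v in validators:
        if votes[v.name] == verdict:
            v.stake += reward
            v.reputation += rep_step
        else:
            v.stake -= v.stake * slash_fraction
            v.reputation = max(0.1, v.reputation - rep_step)  # floor keeps weight positive
    return verdict
```

Calling `settle` once per claim means that a validator who keeps disagreeing with the weighted majority loses both stake and reputation, so its future votes count for less. Note that this sketch treats the majority verdict as ground truth, which is exactly the assumption that a colluding group of validators would try to exploit.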

Looking ahead, Mira plans to add cross-chain adapters so things like drones or trading bots can tap into verified claims across different blockchains in real time. The network will use zero-knowledge proofs to protect proprietary model inputs while still sharing public attestations. Decentralized governance will let token holders propose new data sources, tweak incentives, and vote on protocol upgrades.

Sure, there are risks. Validators could collude and bias results, spammers might try to drain rewards, and there’s always the question of how regulators will treat algorithmic consensus. Plus, if people rely too much on automation, oversight could slip. But if Mira can navigate these pitfalls, it really could let autonomous agents run safely in critical places and help rebuild trust in machine-augmented decisions, turning verified truth into a real, tradable asset.

@Mira - Trust Layer of AI #Mira $MIRA