Mira Network is a decentralized protocol that addresses one of the most pressing challenges in artificial intelligence: ensuring the reliability and trustworthiness of AI outputs. By integrating blockchain technology with multi-model consensus and game-theoretic economic mechanisms, Mira creates a “trust layer” for AI, transforming unverifiable outputs into cryptographically provable, verified intelligence. This approach is particularly vital in an era where large language models (LLMs) frequently produce hallucinations (fabricated information) and biased outputs, undermining applications in finance, healthcare, law, and autonomous systems.

The Core Problem: AI Reliability and the Need for Verification

Modern AI systems excel at pattern recognition and generation but struggle with factual accuracy and consistency. A single model, no matter how advanced, operates in isolation and can confidently output errors. Mira Network addresses this by decentralizing verification: instead of relying on one authoritative source, it leverages a network of diverse, independent AI verifier nodes. These nodes, each running different models with varied training data, architectures, and perspectives, collectively assess AI-generated content.

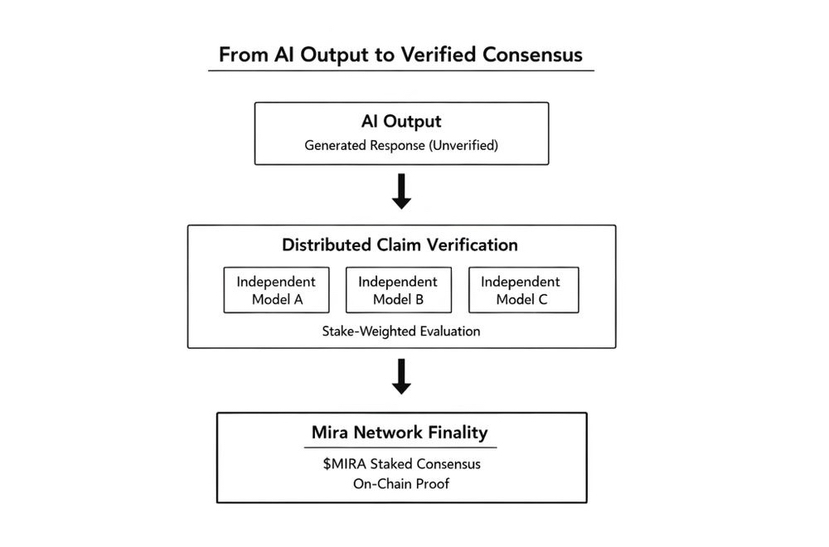

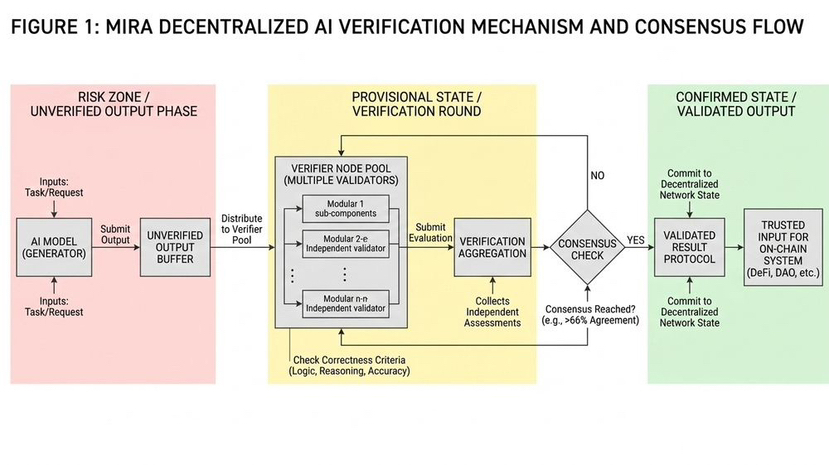

The process begins when a user or application submits content (e.g., an AI response) to Mira. The network breaks it down into smaller, independently verifiable claims while preserving the logical relationships between them. These claims are distributed across nodes for evaluation, often framed as standardized multiple-choice questions (e.g., true/false or select the correct option). Nodes vote on each claim’s validity, and consensus determines the outcome, typically requiring supermajority agreement. A cryptographic certificate is then issued, detailing the models involved, their votes, and the final verdict, enabling transparent, auditable proof.
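The voting step can be illustrated with a minimal sketch. Everything here is an assumption for illustration: the function name `tally_claim`, the node identifiers, and the two-thirds threshold are hypothetical, since the text only says that a supermajority is typically required and does not state the exact rule Mira uses.

```python
from collections import Counter
from fractions import Fraction


def tally_claim(votes, threshold=Fraction(2, 3)):
    """Tally node votes for a single claim.

    votes: mapping of node id -> chosen option (e.g. "true" / "false").
    threshold: assumed supermajority share (hypothetical 2/3).
    Returns (verdict, share); verdict is None when no option reaches the threshold.
    """
    if not votes:
        return None, Fraction(0)
    counts = Counter(votes.values())
    option, count = counts.most_common(1)[0]
    share = Fraction(count, len(votes))
    verdict = option if share >= threshold else None
    return verdict, share


# Hypothetical votes from four verifier nodes on one claim.
votes = {"node-a": "true", "node-b": "true", "node-c": "true", "node-d": "false"}
verdict, share = tally_claim(votes)
print(verdict, share)
```

In this sketch a claim with no supermajority returns `None` rather than a verdict, which mirrors the idea that only claims with sufficient agreement are certified.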

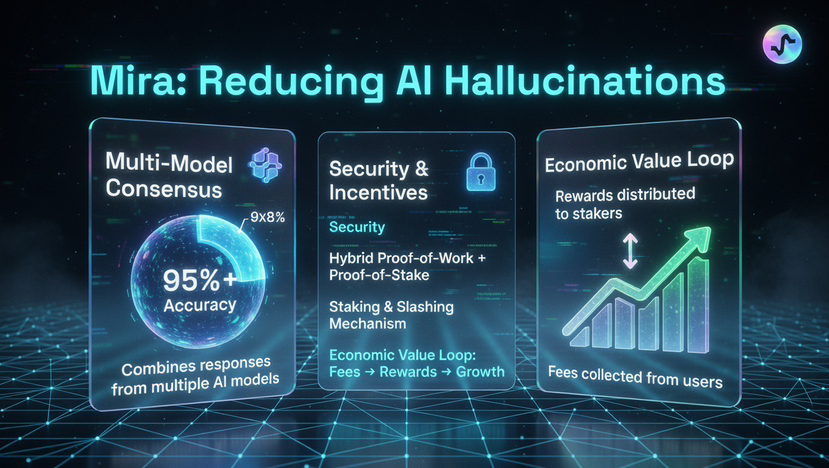

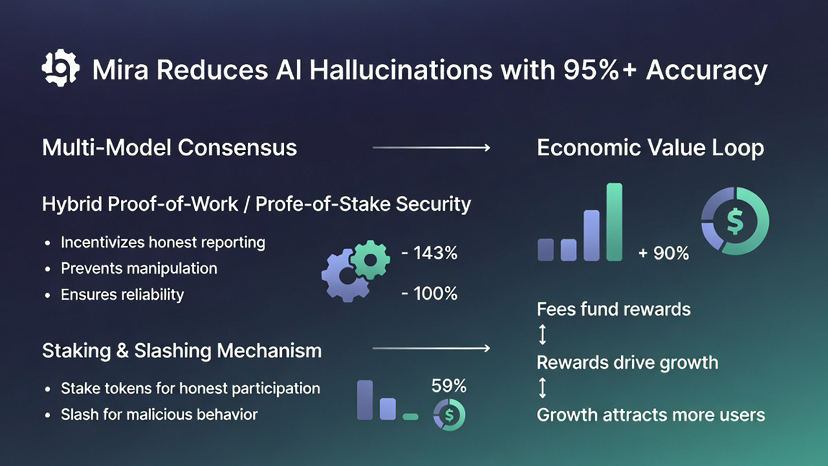

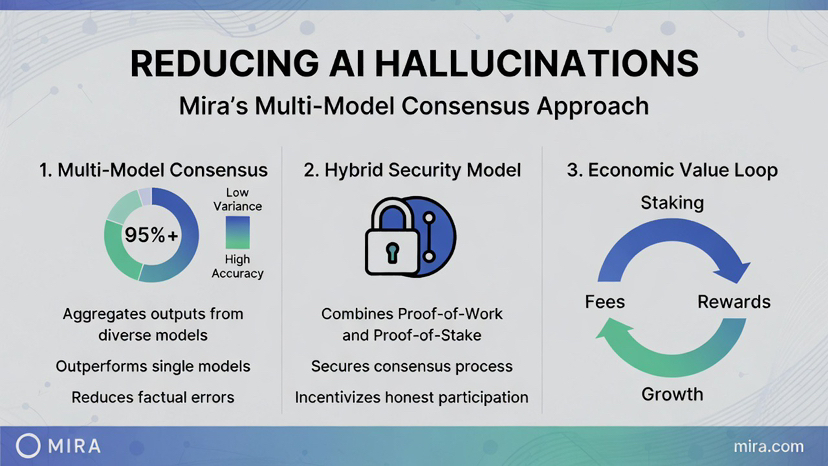

This consensus-based verification reduces error rates significantly, with reports indicating that Mira achieves over 95% accuracy in verified outputs through its Verified Generate API and marketplace tools.

Game-Theoretic Mechanisms: Aligning Incentives for Honesty

At the heart of Mira’s security lies its economic security model, which draws heavily from game theory to ensure participants act honestly. The network combines Proof-of-Work (PoW) for honest inference (computational effort in verification) with Proof-of-Stake (PoS) for economic alignment.

Nodes must stake tokens (e.g., MIRA) to participate. Honest verifiers earn rewards from the fees users pay for verification services. Malicious or lazy behavior, such as random guessing, collusion, or consistent deviation from consensus, triggers slashing, where staked value is penalized or confiscated.

This design creates a Nash equilibrium where honest participation dominates. Random guessing is tempting because constrained answer spaces give a guesser a high baseline success rate (a binary choice succeeds 50% of the time), so guessing would be profitable if it carried no risk. Staking introduces that downside. As the white paper notes, slashing makes gaming economically irrational.
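A toy expected-value calculation shows why a stake at risk changes the guesser's incentives. This is a simplified sketch under stated assumptions, not Mira's actual reward or slashing formula: it treats each claim as an independent bet, and the reward, slash amount, and honest accuracy figures are hypothetical.

```python
def expected_payoff(p_correct, reward, slash):
    """Expected per-claim payoff: earn `reward` with probability p_correct,
    lose `slash` otherwise. A simplified, hypothetical model."""
    return p_correct * reward - (1 - p_correct) * slash


def break_even_slash(p_correct, reward):
    """Slash amount at which the expected payoff is exactly zero."""
    if p_correct >= 1:
        raise ValueError("p_correct must be below 1")
    return reward * p_correct / (1 - p_correct)


# Hypothetical numbers: reward of 1 unit per claim, slash of 2 units.
no_risk_guess = expected_payoff(0.5, reward=1.0, slash=0.0)  # 0.5: guessing pays when nothing is at stake
staked_guess = expected_payoff(0.5, reward=1.0, slash=2.0)   # -0.5: guessing loses on average
honest_node = expected_payoff(0.95, reward=1.0, slash=2.0)   # roughly 0.85, assuming 95% agreement with consensus
print(no_risk_guess, staked_guess, honest_node)
print(break_even_slash(0.5, reward=1.0))
```

Under these assumptions, a binary guesser becomes unprofitable as soon as the slash exceeds the reward, while a node that usually agrees with consensus keeps a positive expected payoff. The sketch ignores the compute cost of honest inference.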

Game theory principles reinforce this:

Security holds if honest operators control most staked value, making attacks prohibitively expensive (similar to blockchain sybil resistance).

Scaling attracts varied models, reducing bias and collusion probability through sharding and anomaly detection.

Higher usage generates more fees → better rewards → more nodes → greater diversity and accuracy → stronger security.

The white paper includes data on guessing probabilities, showing how success drops sharply with more options and repeated verifications (e.g., for 4 options: 25% for 1 verification, down to ~0.0001% for 10).
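The figures quoted above are consistent with a simple model in which each verification is an independent uniform guess, so the chance of guessing every one correctly is (1/options) raised to the number of verifications. The sketch below assumes that independence; the function name is hypothetical and the white paper's own derivation may differ.

```python
def guess_success_probability(options, verifications):
    """Probability that uniform random guesses are all correct,
    assuming independent verifications (an assumption of this sketch)."""
    if options < 1 or verifications < 0:
        raise ValueError("options must be >= 1 and verifications >= 0")
    return (1 / options) ** verifications


# Print a small table for binary and four-option questions.
for options in (2, 4):
    for verifications in (1, 5, 10):
        p = guess_success_probability(options, verifications)
        print(f"{options} options, {verifications} verifications: {p:.6%}")
```

For four options and ten verifications this gives about 0.0001%, matching the figure cited from the white paper.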

Mira’s hybrid model captures real value: users pay for verified outputs, and fees flow to honest nodes and data providers. This aligns economic interests with network integrity, fostering specialization (efficient domain-specific models) and innovation.

In conclusion, in my view, Mira Network’s integration of game-theoretic mechanisms with economic alignment transforms AI verification from a trust-based process into a verifiable, incentive-driven one. By making dishonesty costly and honesty profitable, it creates a self-reinforcing system where collective intelligence secures trustless AI. As AI adoption accelerates, protocols like Mira could become foundational infrastructure, enabling safer, more autonomous applications. With its decentralized consensus, cryptographic proofs, and robust incentives, Mira paves the way for truly reliable intelligence in a decentralized future.