I’ve noticed that the more polished an AI answer looks, the easier it becomes to forget how little of its judgment I can actually inspect. In ordinary use, that creates a strange gap: the output feels finished, but the trust around it is still informal. What matters to me is not only whether an answer sounds right, but whether there is a durable record of how it was checked.

The core friction is that AI output is easy to generate and hard to verify in a way that multiple parties can trust without falling back on one authority. A model can produce a fluent paragraph in seconds, but fluency is not evidence, and a centralized reviewer only moves the trust problem from the generator to the reviewer. If one company, one verifier set, or one private rulebook decides what counts as “verified,” then reliability still depends on discretion rather than process. The bottleneck is not raw model capability; it is the lack of a shared system that records what was checked, who checked it, and how agreement was reached.

It’s like accepting a lab report without knowing which samples were tested, who signed off on them, or whether the records could be quietly changed afterward.

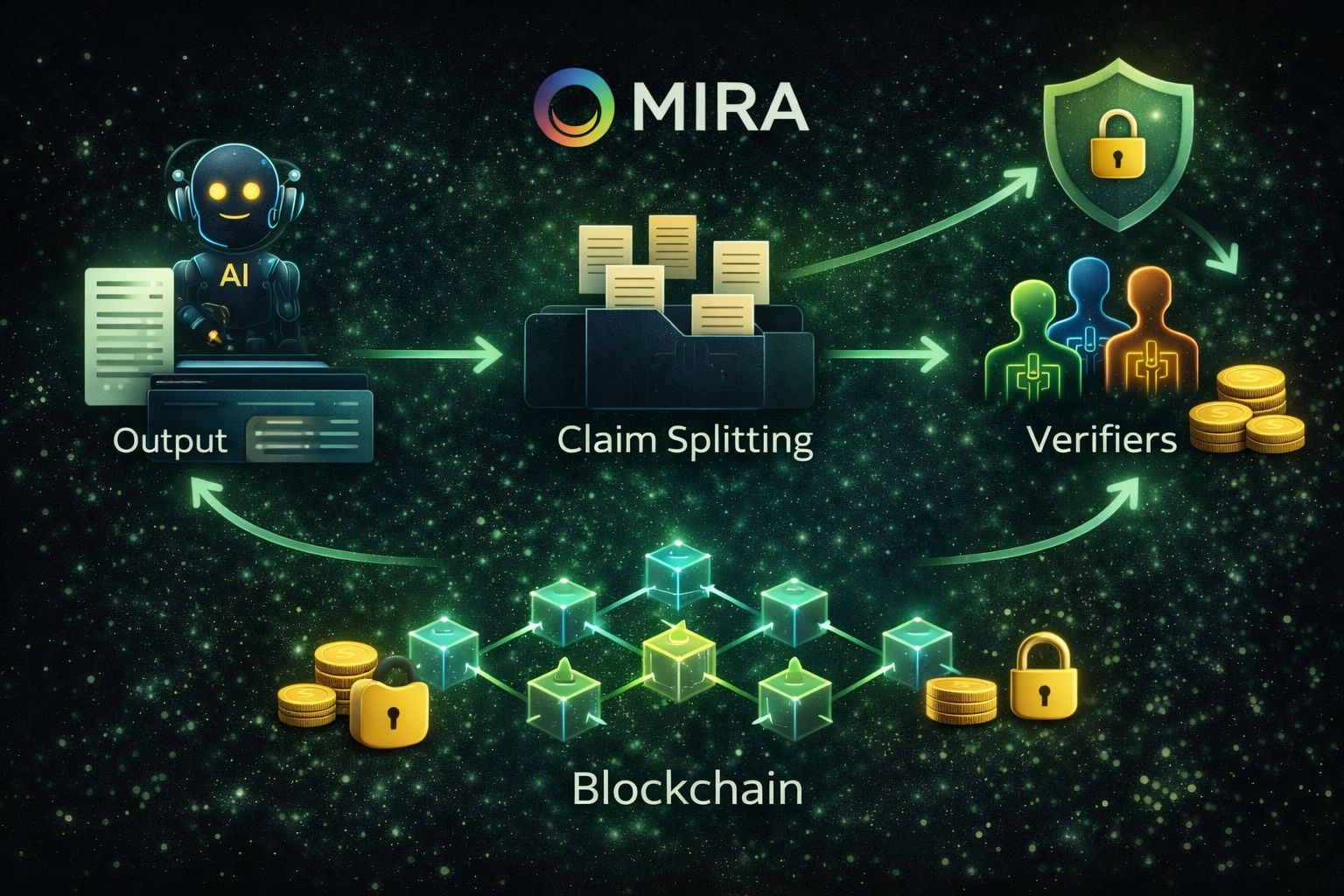

Mira uses blockchain as an accountability layer for AI verification, not as a replacement for inference itself. The main idea, as I understand it, is that models do the checking work while the chain records that work in a form that is ordered, attributable, and resistant to tampering. That distinction matters. The value of blockchain here is not that it makes a model smarter; it is that it turns verification from a private service promise into a shared and auditable process.

The network begins by transforming candidate output into smaller, verifiable claims. That step matters more than it may seem at first. If you send a full paragraph to several verifier models, each one may focus on different parts, interpret ambiguity differently, or fill in missing context in its own way. The result can look like disagreement even when the models were never truly answering the same question. By reducing content into discrete claims, the system creates a standard unit of work. Each claim can then be routed to node operators running verifier models, and each operator can submit a structured judgment on the same underlying task.

Consensus is what makes blockchain fit the problem. Verification is not only a technical exercise; it is a coordination problem under adversarial assumptions. Some operators may be careless, some biased, and some may simply try to optimize rewards without doing real work. The chain can impose explicit thresholds for how much agreement is required before a claim is treated as verified. Instead of trusting one “best” model, the system trusts an aggregation rule applied to signed results from multiple parties. In that setup, blockchain provides ordered finality: it records who submitted what, under which parameters, and what the network ultimately accepted as the final outcome.
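To make the threshold idea concrete, here is a minimal sketch of an aggregation rule. It is my own illustration and not the network's actual logic: the `Judgment` type, the operator identifiers, and the `aggregate` function are all hypothetical, and I assume one vote per operator with a simple share-of-operators threshold.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Judgment:
    operator_id: str  # hypothetical identifier for a node operator
    verdict: str      # e.g. "true" or "false"


def aggregate(judgments, threshold):
    """Return the leading verdict if its share of operators meets the threshold, else None."""
    # Keep one judgment per operator so duplicate submissions cannot inflate a verdict.
    per_operator = {j.operator_id: j.verdict for j in judgments}
    if not per_operator:
        return None
    verdict, votes = Counter(per_operator.values()).most_common(1)[0]
    # Assumes threshold > 0.5, so a tie can never be accepted.
    return verdict if votes / len(per_operator) >= threshold else None


judgments = [
    Judgment("op-a", "true"),
    Judgment("op-b", "true"),
    Judgment("op-c", "false"),
]
print(aggregate(judgments, threshold=0.66))  # two of three operators agree
print(aggregate(judgments, threshold=0.75))  # same votes, stricter threshold
```

The same three votes are accepted under the looser threshold and rejected under the stricter one, which is the point: the outcome follows from a stated rule, not from anyone's preference for a particular model.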

On the execution side, the material reads like a specialized verification network rather than a general-purpose smart contract platform. I do not see enough detail to say whether the base state model is account-based or UTXO, or whether a specific virtual machine handles on-chain logic. What is clearer is the transaction lifecycle. A user submits content along with verification requirements, such as a domain or consensus threshold. The network performs the claim-transformation step and routes those claims to operators. Operators return signed verification results. The chain orders those submissions and finalizes an auditable record, then generates a certificate reflecting what was checked and what consensus concluded. Finality depends on the underlying consensus of the chain plus the economic assumption that honest stake remains dominant.

The cryptographic flow matters because attribution is part of the product. A verification result is only useful if it can be tied to a specific request, a specific set of claims, and a specific group of responses. In that sense, the chain functions as a public coordination ledger for verification events. It does not prove truth by itself, but it can prove that a defined verification process occurred, that results were submitted by identifiable participants, and that the accepted outcome followed the network’s stated rules rather than a private override.
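One way to picture that binding is a digest computed over the request, its claims, and the responses together. The sketch below is an assumption on my part, not the protocol's real record format: the field names and the `digest` helper are hypothetical, and a real system would also attach operator signatures, which I leave out here.

```python
import hashlib
import json


def digest(record):
    # Canonical JSON (sorted keys, fixed separators) so equal records hash identically.
    payload = json.dumps(record, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


# Hypothetical verification record: one request, its claims, and operator responses.
record = {
    "request_id": "req-001",
    "claims": ["claim-1", "claim-2"],
    "responses": [
        {"operator": "op-a", "claim": "claim-1", "verdict": "true"},
        {"operator": "op-b", "claim": "claim-1", "verdict": "true"},
    ],
}
original = digest(record)

# Deep-copy the record, then quietly flip one verdict.
tampered = json.loads(json.dumps(record))
tampered["responses"][0]["verdict"] = "false"

print(original == digest(record))    # unchanged record reproduces the digest
print(original == digest(tampered))  # altered record does not
```

If a digest like this is what gets anchored on-chain, a later edit to any response becomes detectable by anyone who recomputes it.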

Data availability and privacy sit in tension here, and the design seems aware of that. A verification system that exposes too much input data will be hard to use for sensitive workloads, but a system that hides everything becomes difficult to audit. The whitepaper’s approach is to break content into entity-claim pairs and randomly shard them across nodes so that no single operator can reconstruct the full original content. That suggests a storage model where only the minimum necessary verification details are preserved in certificates and on-chain records, while raw content exposure is constrained. The exact split between on-chain data and off-chain handling is not fully specified in the excerpt, so I treat that boundary as open rather than settled.
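A rough sketch of the sharding idea, under my own simplifying assumptions: each pair goes to exactly one node, and assignment is a shuffle followed by round-robin. The `shard` function, the node names, and the sample pairs are hypothetical, and the real network presumably sends each claim to several operators, which this version does not model.

```python
import random


def shard(pairs, nodes, seed=None):
    """Randomly spread entity-claim pairs across nodes, one node per pair."""
    if len(nodes) < 2:
        raise ValueError("need at least two nodes to split content")
    rng = random.Random(seed)
    shuffled_pairs = list(pairs)
    shuffled_nodes = list(nodes)
    rng.shuffle(shuffled_pairs)
    rng.shuffle(shuffled_nodes)
    assignment = {node: [] for node in nodes}
    # Round-robin over the shuffled order, so with two or more pairs
    # no single node ends up holding every pair.
    for i, pair in enumerate(shuffled_pairs):
        assignment[shuffled_nodes[i % len(shuffled_nodes)]].append(pair)
    return assignment


# Hypothetical entity-claim pairs extracted from one piece of content.
pairs = [
    ("Paris", "is the capital of France"),
    ("France", "is in Europe"),
    ("Seine", "flows through Paris"),
    ("Euro", "is the currency of France"),
]
assignment = shard(pairs, ["node-1", "node-2", "node-3"], seed=7)
for node, held in assignment.items():
    print(node, len(held))
```

Each node sees only a fragment, so reconstructing the original content would require collusion across operators.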

The token’s role in this design is functional. Users pay fees to request verification, which creates demand for operator work. Operators stake value to participate, which gives the system a way to discipline low-effort or manipulative behavior through slashing. Governance then adjusts the parameters that shape security, such as stake requirements, penalty conditions, and consensus strictness. This matters because the verification problem has an awkward property: once claims are standardized into a small set of possible answers, blind guessing has a meaningful chance of success (a true/false claim can be guessed correctly half the time), so lazy guessing can become economically attractive unless there is a cost to being wrong. Staking and slashing are meant to make that shortcut irrational over time.
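The arithmetic behind that concern is simple enough to sketch. The numbers and function names below are hypothetical, and I assume a toy model in which a guesser earns `reward` for a correct answer and loses `slash` for a wrong one.

```python
def guess_success_probability(options, claims):
    """Chance of guessing every one of `claims` independent claims correctly."""
    return (1 / options) ** claims


def expected_guess_payoff(options, reward, slash):
    """Expected payoff per claim for an operator who answers at random."""
    p = 1 / options
    return p * reward - (1 - p) * slash


# Binary claims with no penalty: guessing is profitable on average.
print(expected_guess_payoff(options=2, reward=1.0, slash=0.0))
# Same claims with a penalty twice the reward: guessing loses on average.
print(expected_guess_payoff(options=2, reward=1.0, slash=2.0))
# Guessing ten binary claims in a row correctly is already unlikely.
print(guess_success_probability(options=2, claims=10))
```

In this toy model, guessing stops paying once the penalty for a wrong answer exceeds `reward / (options - 1)`, which is the kind of parameter governance would need to keep on the safe side.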

Price negotiation matters here only in a neutral sense: it is how verification capacity gets allocated. Users and operators are effectively negotiating over scarce verification throughput through fees and reward rates. If demand for verification rises, fees can rise as well, rationing capacity and attracting more participation. If demand falls, rewards compress and only the more efficient operators may remain active. That is not interesting to me as a market narrative. It is interesting because it determines whether the network can sustain a stable cost of honesty: high enough to resist abuse, low enough to remain usable.

One limitation I can’t resolve from the current material is how well this blockchain-based verification model will handle domains where truth is contextual, disputed, or shaped by policy rather than relatively stable factual claims.

I keep coming back to the small discomfort of reading an AI answer that looks finished before it has actually earned trust. What I find useful in this design is that blockchain is being asked to do something narrow but serious: not to generate intelligence, but to make verification legible. For me, that is the more durable idea here. The promise is not perfect output, but a clearer path from claim to accountable judgment, and that already feels more grounded than simply trusting fluent text on sight.
