Artificial intelligence is moving forward at a pace that still surprises me every time I think about it. Just a few years ago, most AI systems struggled with basic instructions and simple conversations. They could answer questions in a mechanical way, but the results often felt shallow and limited. Today, things look very different. AI tools are writing research summaries, helping scientists explore new ideas, assisting doctors in analyzing medical data, and supporting financial analysts as they study complex market patterns. I’m seeing AI quietly become part of everyday decision-making in ways that would have sounded unrealistic not long ago. But even with all this progress, one uncomfortable truth keeps resurfacing. No matter how powerful these systems become, people still hesitate to fully trust what AI produces.

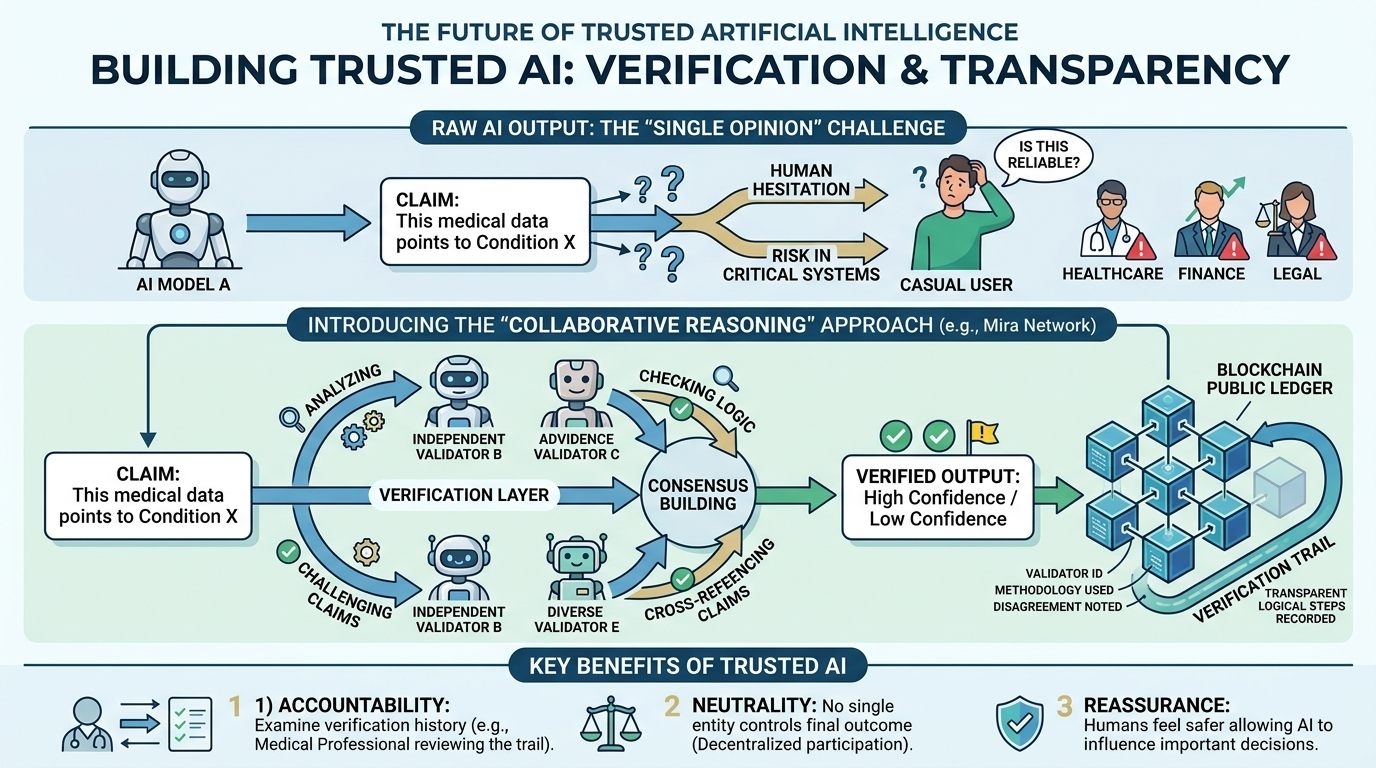

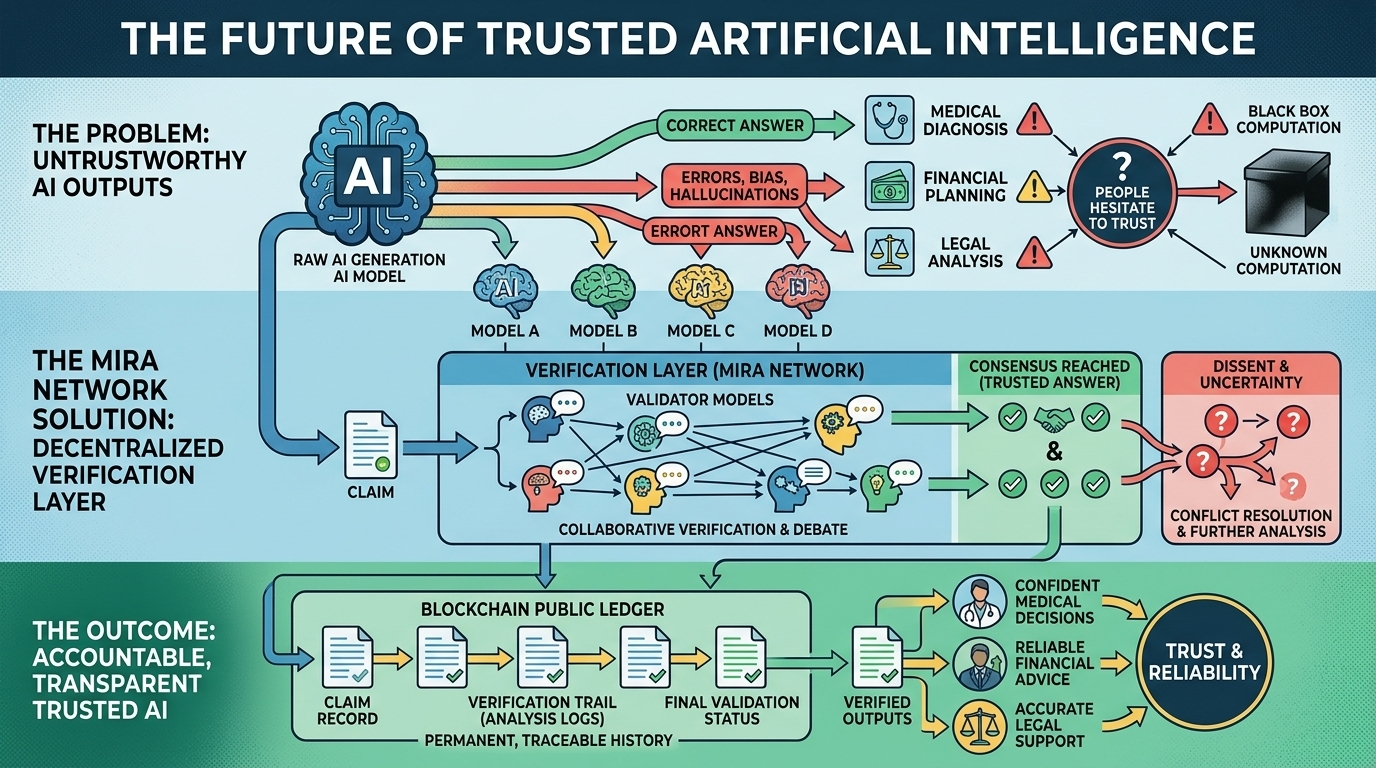

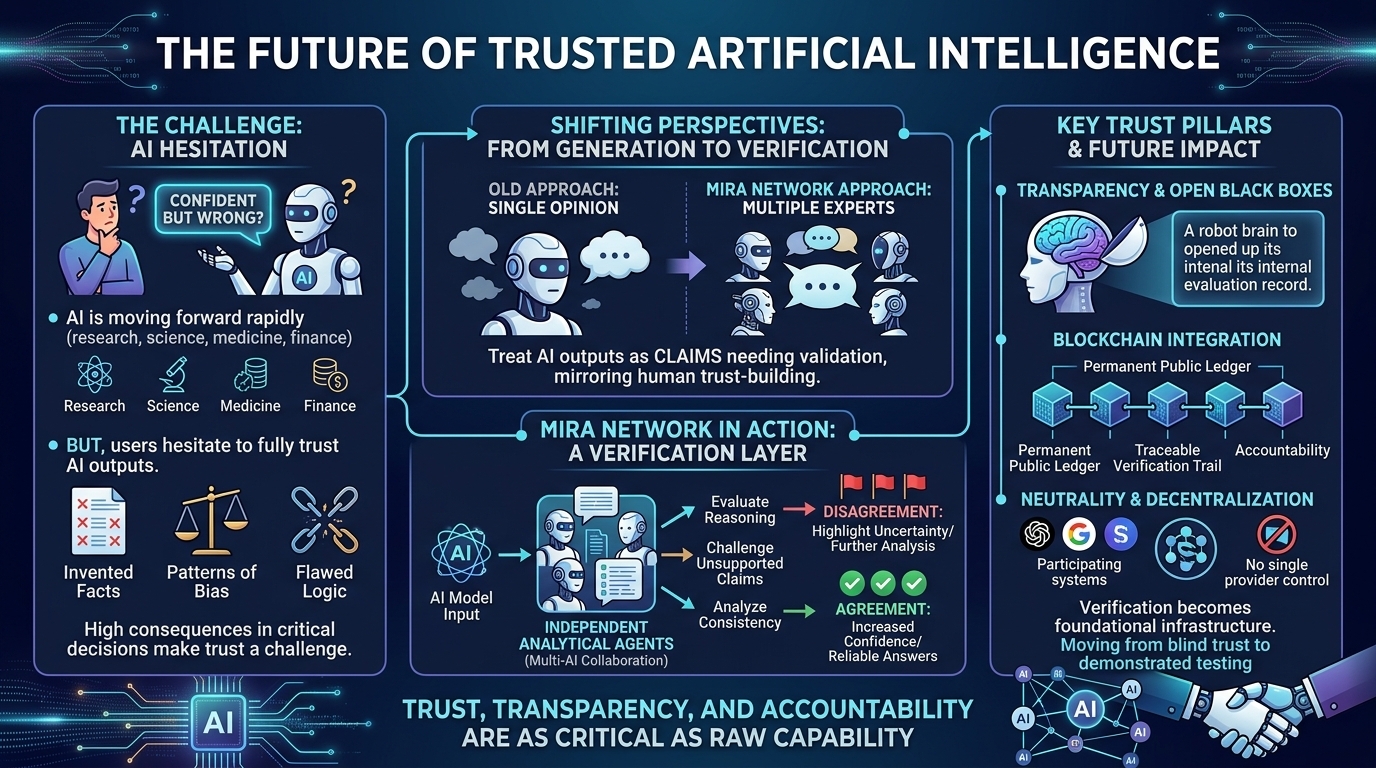

That hesitation is not coming from nowhere. Anyone who has spent enough time using artificial intelligence has probably experienced moments where the system sounds confident but ends up being wrong. Sometimes it invents facts that sound believable but have no real foundation. Other times it repeats patterns of bias that were hidden in the data it learned from. In many situations the logic behind its conclusions simply does not hold up under closer inspection. When someone is casually experimenting with AI or asking for entertainment or general information, these mistakes might feel harmless. But when the same systems are used in healthcare decisions, financial planning, legal analysis, or research environments, the consequences of inaccurate answers can become serious. This growing gap between what AI is capable of producing and what people feel comfortable relying on has quietly become one of the biggest challenges in the entire artificial intelligence industry.

This is where a different way of thinking about AI begins to feel necessary. Instead of asking how we can make AI produce more answers, many developers are beginning to ask a deeper question: how can we make sure those answers are actually trustworthy? I often think about it like the difference between hearing a single opinion and hearing a group of experts discuss the same issue together. When only one voice speaks, there is always uncertainty. When several independent perspectives analyze the same claim, the chances of reaching a reliable conclusion become much stronger. This shift in thinking is exactly the direction that the project called Mira Network is exploring, and it represents a fascinating attempt to solve one of the most important problems in modern artificial intelligence.

The idea behind Mira Network feels surprisingly simple at first glance, but the implications are powerful. Instead of treating AI-generated responses as final answers, the system treats them as claims that need verification. That subtle change transforms the entire process of interacting with artificial intelligence. Rather than trusting one model to generate information and also judge its own accuracy, Mira brings multiple AI systems into the process. Each model evaluates the response, analyzes the reasoning, and contributes its own assessment of whether the claim appears reliable. I find this approach interesting because it mirrors the way humans build trust in complex environments. We rarely rely on one perspective alone when something important is at stake. We consult multiple sources, compare opinions, and look for agreement between independent viewpoints.

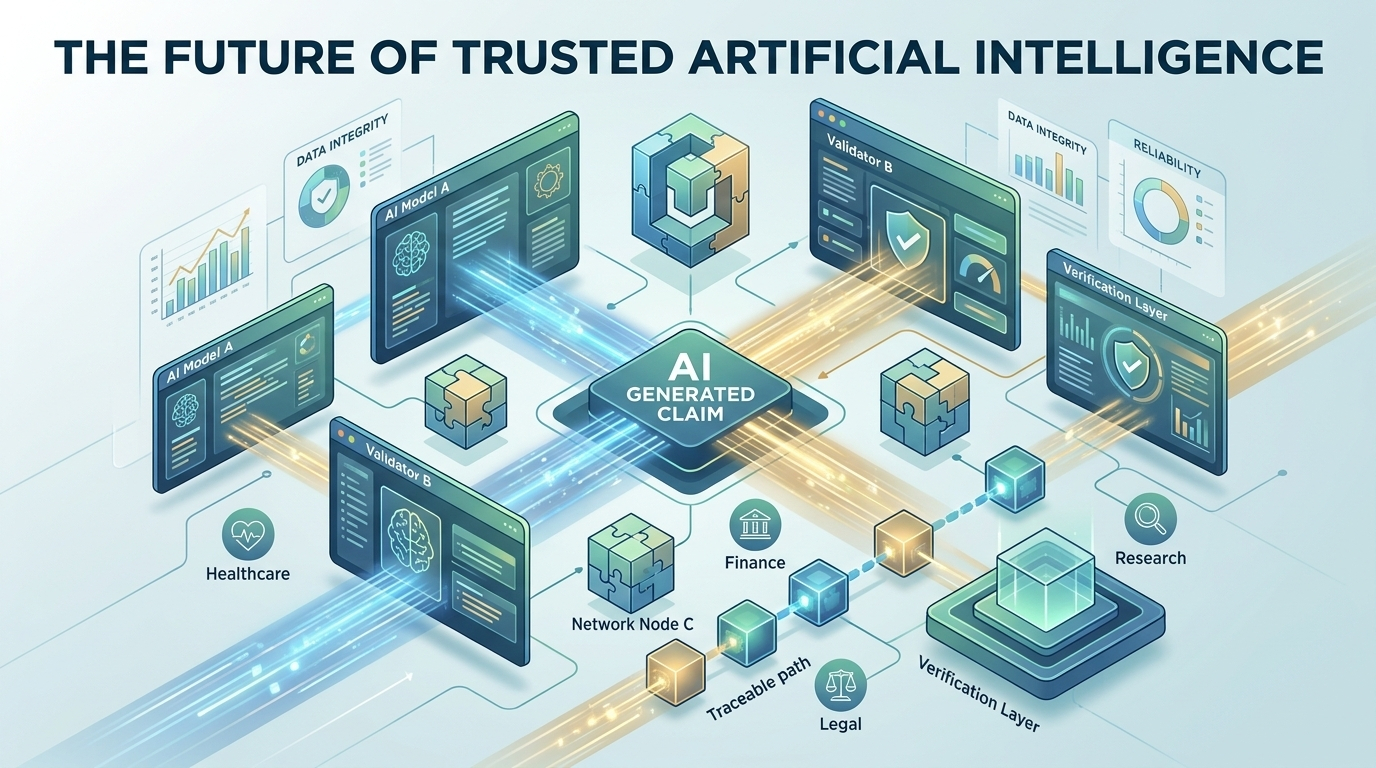

In practical terms, this means that Mira Network functions as a verification layer that sits above different artificial intelligence systems. When an AI model generates an answer, the network allows other models to examine that output and challenge it if necessary. They might look for logical flaws, inconsistencies, or unsupported claims. When several independent systems reach similar conclusions about the validity of an answer, the level of confidence in that response increases. When disagreement appears, the network can highlight uncertainty and encourage further analysis. This kind of collaborative verification process begins to transform artificial intelligence from a single voice into a structured discussion among many analytical perspectives.
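To make the idea of "agreement raises confidence, disagreement signals uncertainty" a bit more concrete, here is a minimal sketch of how independent verdicts could be combined. It is not Mira's actual protocol: the names `Assessment`, `Verdict`, and `aggregate`, and the two-thirds supermajority threshold, are all illustrative assumptions.

```python
from dataclasses import dataclass
from enum import Enum
from fractions import Fraction


class Verdict(Enum):
    VERIFIED = "verified"
    REJECTED = "rejected"
    UNCERTAIN = "uncertain"


@dataclass
class Assessment:
    model_id: str  # hypothetical identifier for an independent verifier model
    valid: bool    # True if this verifier judges the claim to be supported


def aggregate(assessments: list[Assessment], threshold: Fraction = Fraction(2, 3)) -> Verdict:
    """Combine independent judgments (illustrative supermajority rule, an assumption).

    VERIFIED if at least `threshold` of verifiers accept the claim,
    REJECTED if at least `threshold` reject it, UNCERTAIN otherwise.
    """
    # A threshold above 1/2 guarantees accept and reject cannot both qualify.
    if not Fraction(1, 2) < threshold <= 1:
        raise ValueError("threshold must be in (1/2, 1]")
    n = len(assessments)
    if n == 0:
        return Verdict.UNCERTAIN
    accepts = sum(1 for a in assessments if a.valid)
    # Exact fractions avoid floating-point edge cases at the threshold.
    if Fraction(accepts, n) >= threshold:
        return Verdict.VERIFIED
    if Fraction(n - accepts, n) >= threshold:
        return Verdict.REJECTED
    return Verdict.UNCERTAIN
```

With three verifiers where two accept, the claim clears the two-thirds bar and comes back `VERIFIED`; with a 2-2 split among four verifiers, neither side clears it and the result is `UNCERTAIN`, which is the "highlight uncertainty" case described above.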

One of the most interesting aspects of this system is how it attempts to bring transparency into an area that has traditionally been difficult to understand. Most artificial intelligence models operate as complex black boxes. They receive data, perform enormous amounts of internal computation, and produce results that appear almost instantly. For everyday users this often feels mysterious and sometimes frustrating because it becomes difficult to understand how the system arrived at a particular conclusion. Mira Network introduces a structure where the verification process itself can be recorded and observed, which opens the door to a much clearer view of how decisions are evaluated within AI environments.

This is where blockchain technology becomes an important part of the design. Instead of storing verification outcomes in private databases, the results can be recorded on a public ledger. This approach creates a permanent and transparent history showing how specific conclusions were evaluated and validated. I often imagine how valuable this could become in industries where accountability is critical. If a medical recommendation or financial analysis had been verified through a network process, professionals could examine the verification trail and understand how that confidence was reached. Rather than relying on blind trust in a hidden system, users gain access to a traceable record that reveals the steps taken to confirm the reliability of an answer.
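The property that makes a ledger useful as a verification trail is that past entries cannot be quietly altered. A minimal sketch of that idea, assuming a simple local hash chain as a stand-in for a real blockchain (the `VerificationLog` class and its record fields are hypothetical, not Mira's data model), might look like this:

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON (sorted keys) so the same record always hashes the same way.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class VerificationLog:
    """Append-only, hash-chained log: a local stand-in for a public ledger (assumption)."""
    entries: list[dict] = field(default_factory=list)

    def append(self, claim: str, verdict: str, votes: dict[str, bool]) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {"claim": claim, "verdict": verdict, "votes": votes, "prev_hash": prev_hash}
        entry_hash = _digest(body)
        self.entries.append({**body, "hash": entry_hash})
        return entry_hash

    def verify_chain(self) -> bool:
        """Recompute every hash; any edited or reordered entry breaks the chain."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True
```

Because each entry commits to the hash of the one before it, changing an old verdict after the fact invalidates every later link, which is what lets an auditor trust the trail without trusting whoever stores it.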

Another element that makes this ecosystem interesting is its focus on neutrality. In the current landscape of artificial intelligence, many systems are built and controlled by large organizations that develop their own models and platforms. While those innovations have driven rapid progress, they also raise concerns about centralization and influence. Mira Network approaches the situation differently by positioning itself as an independent verification layer that can interact with many different AI models rather than belonging to a single provider. When multiple developers and systems participate in the same verification environment, the process naturally becomes more balanced because no single entity controls the final outcome.

I also find the human side of this idea compelling because trust has always been one of the most fragile elements in technological change. When new tools appear, people often admire their power but hesitate to depend on them completely. Over time that trust grows only when systems prove that they can operate reliably and transparently. Artificial intelligence is now reaching a stage where society is beginning to rely on it for tasks that genuinely affect people’s lives. That shift makes the question of verification feel deeply personal rather than purely technical. It is not only about algorithms and infrastructure. It is also about whether individuals feel safe allowing these systems to influence decisions that matter.

Building a network capable of performing this kind of verification is far from simple. Incentives have to be carefully designed so that participants act honestly and contribute meaningful evaluations. Validators who review AI outputs need clear motivations to behave responsibly and maintain the integrity of the process. Economic mechanisms, governance rules, and technical architecture all play a role in shaping how trustworthy the system becomes over time. As the network grows larger, additional challenges appear around scalability, coordination, and ensuring that verification remains efficient even when thousands of participants are involved.
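One common way to give validators "clear motivations" is a stake-and-slash scheme: participants lock up value, earn a reward when their assessment matches the final outcome, and lose a fraction of their stake when it does not. The sketch below illustrates that generic pattern only. The `Validator` type, the `settle` function, and the `reward` and `slash_pct` values are hypothetical, not Mira's documented economics.

```python
from dataclasses import dataclass


@dataclass
class Validator:
    name: str
    stake: int  # hypothetical token units locked by the validator


def settle(validators: dict[str, Validator], votes: dict[str, bool], consensus: bool,
           reward: int = 10, slash_pct: int = 5) -> None:
    """Generic stake-and-slash settlement (illustrative parameters, not real values).

    Validators whose vote matched the consensus outcome earn a flat reward;
    validators who voted against it lose `slash_pct` percent of their stake.
    """
    for name, vote in votes.items():
        validator = validators[name]
        if vote == consensus:
            validator.stake += reward
        else:
            # Integer arithmetic keeps balances exact.
            validator.stake -= validator.stake * slash_pct // 100
```

For example, if three validators each hold 1000 units and two vote with a `True` consensus while one votes against it, the first two end at 1010 and the dissenter at 950. A known trade-off of rewarding agreement with the majority is that it can nudge participants toward herding rather than independent judgment, which is one reason incentive design is described above as far from simple.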

There is also an emotional dimension that often goes unspoken in conversations about technology. Many people feel both excitement and uneasiness as artificial intelligence becomes more capable each year. On one hand, the possibilities are extraordinary. On the other hand, the speed of change can feel overwhelming. I sometimes think that systems like Mira Network represent an attempt to bring a sense of reassurance into this rapidly evolving landscape. By creating structures where AI claims are continuously checked, debated, and validated, the technology begins to resemble the collaborative reasoning processes that humans naturally trust.

The long-term vision surrounding this idea suggests that verification could become a foundational layer of future digital infrastructure. Instead of AI systems operating in isolation, they could exist within networks where every important output is automatically reviewed by independent analytical agents. Over time this might transform the relationship between humans and artificial intelligence. Rather than viewing AI as a mysterious authority that produces answers we hope are correct, people could interact with systems that openly demonstrate how conclusions were tested and confirmed.

When I think about the direction artificial intelligence is heading, it becomes clear that raw capability alone will not define its success. Power without reliability eventually reaches a limit because people cannot build critical systems on uncertain foundations. Trust, transparency, and accountability will likely become just as important as processing speed or model size. Projects like Mira Network are exploring what that future might look like by focusing less on producing more AI output and more on making sure the output deserves to be trusted.

As artificial intelligence continues weaving itself deeper into everyday life, the need for dependable verification will only grow stronger. Decisions influenced by AI will affect healthcare outcomes, financial stability, scientific discovery, and countless other aspects of society. In that environment, the ability to examine and confirm how AI arrives at its conclusions becomes more than a technical feature. It becomes a safeguard for the entire digital ecosystem. The idea that a network of independent systems can work together to evaluate truth may feel ambitious today, but it also carries a powerful message about the future of technology. Intelligence alone may change the world, but trustworthy intelligence is what allows people to truly embrace it.

#Mira @Mira - Trust Layer of AI $MIRA