I have spent enough time integrating AI systems into real workflows to understand that the real challenge isn’t generating intelligence — it’s controlling where that intelligence is trusted. Anyone who has deployed models into production knows how quickly confidence breaks down when outputs are inconsistent, unverifiable, or impossible to trace. Benchmarks and model sizes look impressive in presentations, but once those outputs start influencing real decisions, the question changes from “How powerful is the AI?” to “Who verifies that it’s right?”

That’s the lens I use when looking at Mira Network.

Most conversations around AI networks focus on adoption metrics: number of models, user growth, or daily queries. Those numbers look good on dashboards, but they don’t explain how the system behaves when conflicting outputs appear or when participants have incentives to manipulate results. Real infrastructure is defined by enforcement, not by traffic.

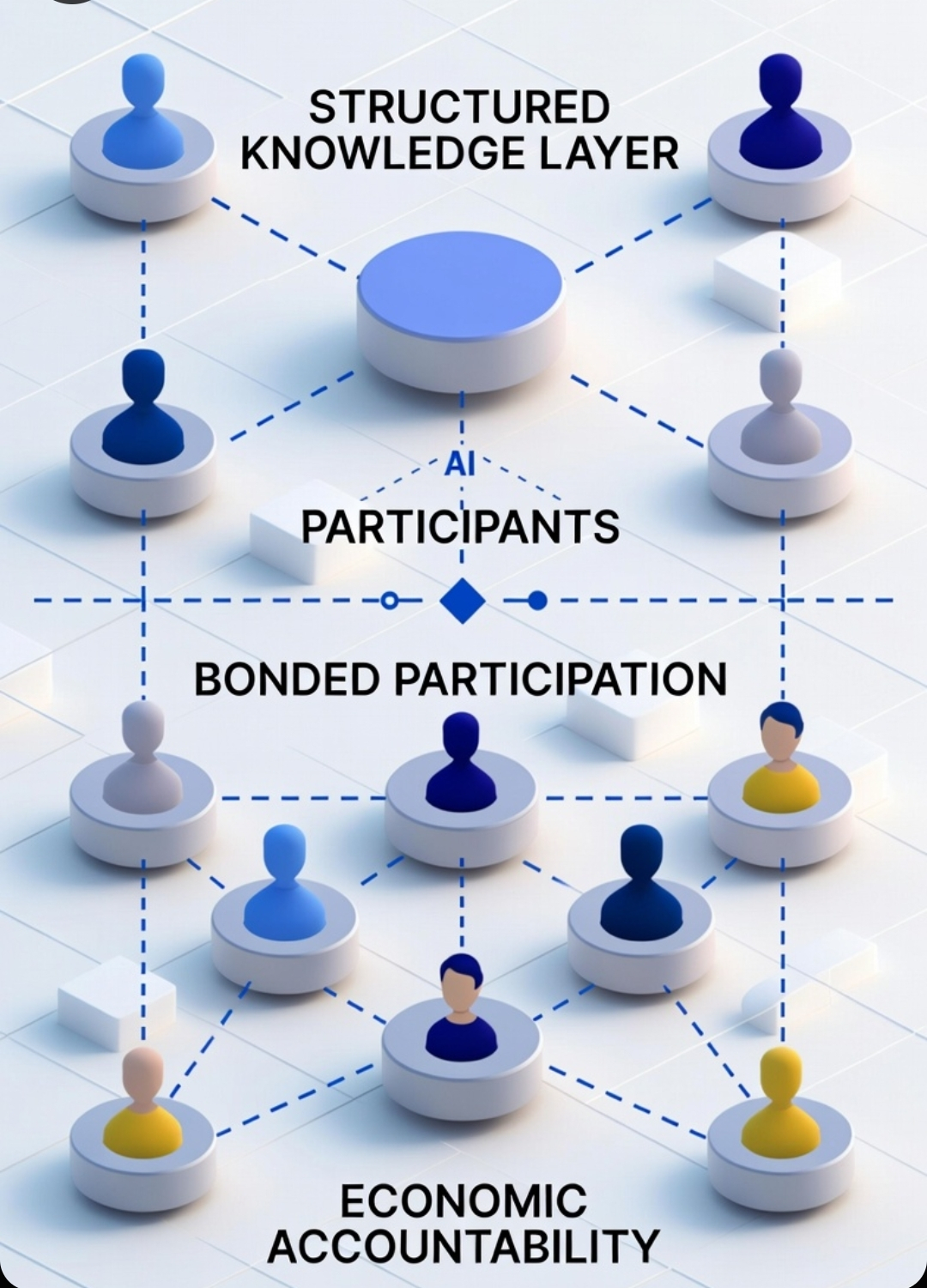

Mira approaches the problem by inserting a decentralized intelligence checkpoint between AI generation and real-world use. Instead of treating outputs as final answers, the network treats them as claims that must pass through verification rounds. Multiple participants inspect the reasoning, compare perspectives, and reach consensus before the output is accepted. Anything that fails verification simply doesn’t propagate. In practice, this acts like a safety gate that prevents flawed reasoning from quietly spreading through downstream systems.
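To make the checkpoint idea concrete, here is a minimal Python sketch of a verification gate. It assumes each verifier returns a simple approve/reject verdict and that a claim needs a two-thirds supermajority to pass. The names `Claim`, `verify_claim`, `gate` and the `QUORUM` value are hypothetical illustrations, not Mira's actual API or parameters.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical supermajority threshold; not a documented Mira parameter.
QUORUM = 2 / 3


@dataclass
class Claim:
    """An AI output treated as a claim awaiting verification."""
    text: str


# A verifier inspects a claim and returns approve (True) or reject (False).
Verifier = Callable[[Claim], bool]


def verify_claim(claim: Claim, verifiers: List[Verifier], quorum: float = QUORUM) -> bool:
    """Accept the claim only if at least `quorum` of verifiers approve it."""
    if not verifiers:
        return False  # no verification round, no acceptance
    approvals = sum(1 for verify in verifiers if verify(claim))
    return approvals / len(verifiers) >= quorum


def gate(claims: List[Claim], verifiers: List[Verifier]) -> List[Claim]:
    """Let only verified claims propagate to downstream systems."""
    return [c for c in claims if verify_claim(c, verifiers)]


verifiers = [
    lambda c: "Paris" in c.text,
    lambda c: "Paris" in c.text,
    lambda c: True,  # a careless verifier that approves everything
]
claims = [Claim("The capital of France is Paris"), Claim("The capital of France is Lyon")]
print([c.text for c in gate(claims, verifiers)])  # ['The capital of France is Paris']
```

A count-based quorum is the simplest possible rule. Even here, one careless verifier cannot push the false claim through on its own. In a stake-weighted network each vote would instead count in proportion to the verifier's bond.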

What makes the system interesting from an infrastructure standpoint is how participation is structured. Mira doesn’t rely on simple fee-based access. Anyone can pay a fee to use a network, but fees alone don’t create responsibility. In fee-driven systems, participants can submit work, disappear, and return with a new identity if something goes wrong.

Bonded participation changes that dynamic entirely.

In Mira’s model, stake-weighted entry and work bonds require participants to lock value in order to verify or contribute to the network. That bond becomes a form of economic accountability. If a validator repeatedly approves faulty outputs or attempts to manipulate consensus, the protocol can penalize, or slash, the bonded stake. Behavior is shaped by risk, not by reputation alone.
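Here is a rough sketch of how bonded, stake-weighted participation might be tracked. The minimum bond, the 50% slash fraction, and the `BondRegistry` class are assumptions for illustration only. The post does not specify Mira's actual parameters or slashing rules.

```python
from typing import Dict

# Hypothetical parameters, chosen only for illustration.
MIN_BOND = 100.0       # minimum locked value required to act as a verifier
SLASH_FRACTION = 0.5   # share of the bond forfeited per proven violation


class BondRegistry:
    """Tracks locked stake per verifier (hypothetical, not Mira's API)."""

    def __init__(self, min_bond: float = MIN_BOND, slash_fraction: float = SLASH_FRACTION):
        self.min_bond = min_bond
        self.slash_fraction = slash_fraction
        self.bonds: Dict[str, float] = {}

    def deposit(self, verifier_id: str, amount: float) -> None:
        """Lock value; the resulting bond must meet the minimum."""
        new_total = self.bonds.get(verifier_id, 0.0) + amount
        if new_total < self.min_bond:
            raise ValueError(f"bond {new_total} is below minimum {self.min_bond}")
        self.bonds[verifier_id] = new_total

    def can_verify(self, verifier_id: str) -> bool:
        """Only verifiers whose bond meets the minimum may take part."""
        return self.bonds.get(verifier_id, 0.0) >= self.min_bond

    def weight(self, verifier_id: str) -> float:
        """Stake-weighted voting power: this verifier's share of all bonded stake."""
        total = sum(self.bonds.values())
        return self.bonds.get(verifier_id, 0.0) / total if total else 0.0

    def slash(self, verifier_id: str) -> float:
        """Burn a fraction of the bond after a proven violation; return the penalty."""
        stake = self.bonds.get(verifier_id, 0.0)
        penalty = stake * self.slash_fraction
        self.bonds[verifier_id] = stake - penalty
        return penalty


registry = BondRegistry()
registry.deposit("alice", 200.0)
registry.deposit("bob", 100.0)
penalty = registry.slash("bob")  # bob approved a faulty output
print(penalty, registry.can_verify("bob"), registry.weight("alice"))  # 50.0 False 0.8
```

After the slash, bob both loses value and drops below the minimum bond, so he can no longer verify until he tops up. That is the "risk, not reputation" point in code form.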

This structure also improves resistance to Sybil attacks. When identities are free, attackers simply create more of them. When each identity requires bonded capital, the value an attacker must put at risk grows with every identity they add. The protocol doesn't eliminate manipulation entirely, but it forces adversaries to risk real resources.
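The cost argument can be put into numbers. Suppose, purely as an assumption, that each verifier identity carries an equal bond `B`, that votes are counted per identity, and that a claim needs a two-thirds quorum. To outvote `n` honest identities, an attacker needs `k / (k + n) >= 2/3`, which works out to `k >= 2n`. The capital at risk is therefore at least `2nB`. The function names below are hypothetical.

```python
import math
from fractions import Fraction


def min_attacker_identities(honest: int, quorum: Fraction) -> int:
    """Smallest k such that k / (k + honest) >= quorum."""
    # Rearranging k / (k + honest) >= q gives k >= q * honest / (1 - q).
    # Fraction keeps the arithmetic exact, so ceil() never rounds up a float error.
    return math.ceil(quorum * honest / (1 - quorum))


def attack_capital(honest: int, quorum: Fraction, bond: float) -> float:
    """Value the attacker must lock, and expose to slashing, to reach the quorum."""
    return min_attacker_identities(honest, quorum) * bond


q = Fraction(2, 3)
print(min_attacker_identities(10, q))     # 20
print(attack_capital(10, q, bond=0))      # 0  (free identities: the attack costs nothing)
print(attack_capital(10, q, bond=100.0))  # 2000.0
```

Under stake-weighted voting, the same logic applies to stake rather than identity count. Splitting capital across more identities buys no extra weight.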

Over time, verified outputs accumulate into a structured knowledge layer where every decision is tied to consensus history. That creates an AI accountability network where reasoning is traceable, auditable, and enforced by protocol rules rather than by trust.
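One common way to make a history like this tamper-evident is a hash chain, where each record commits to the hash of the record before it. The sketch below assumes that approach. The post does not say how Mira actually stores consensus history, and `ConsensusLog` and its record fields are hypothetical.

```python
import hashlib
import json
from typing import Dict, List

GENESIS = "0" * 64  # placeholder "previous hash" for the first record


def _digest(record: Dict) -> str:
    """SHA-256 over a canonical JSON encoding of the record."""
    return hashlib.sha256(json.dumps(record, sort_keys=True).encode()).hexdigest()


class ConsensusLog:
    """Append-only log of verification outcomes (hypothetical)."""

    def __init__(self) -> None:
        self.entries: List[Dict] = []

    def append(self, claim: str, accepted: bool, approvals: int, total: int) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        record = {"claim": claim, "accepted": accepted,
                  "approvals": approvals, "total": total, "prev": prev}
        record["hash"] = _digest(record)  # hash covers every field, including prev
        self.entries.append(record)
        return record["hash"]

    def verify(self) -> bool:
        """Recompute every hash and link; any edit to past records breaks the chain."""
        prev = GENESIS
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev"] != prev or _digest(body) != entry["hash"]:
                return False
            prev = entry["hash"]
        return True


log = ConsensusLog()
log.append("The capital of France is Paris", accepted=True, approvals=3, total=3)
log.append("The capital of France is Lyon", accepted=False, approvals=1, total=3)
print(log.verify())                # True
log.entries[1]["accepted"] = True  # quietly rewrite history
print(log.verify())                # False
```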

And after integrating enough systems, one lesson becomes clear: infrastructure survives because it enforces behavior. Marketing narratives come and go, but protocols that embed enforcement into their architecture are the ones that actually endure.

@Mira - Trust Layer of AI #mira #MIRA $MIRA