For a while, I assumed the biggest risk in AI systems was simple: the model might be wrong.

That concern still exists. Models hallucinate, misinterpret context, or confidently state things that don’t hold up under scrutiny. But as AI begins to sit closer to operational systems, another problem becomes more obvious.

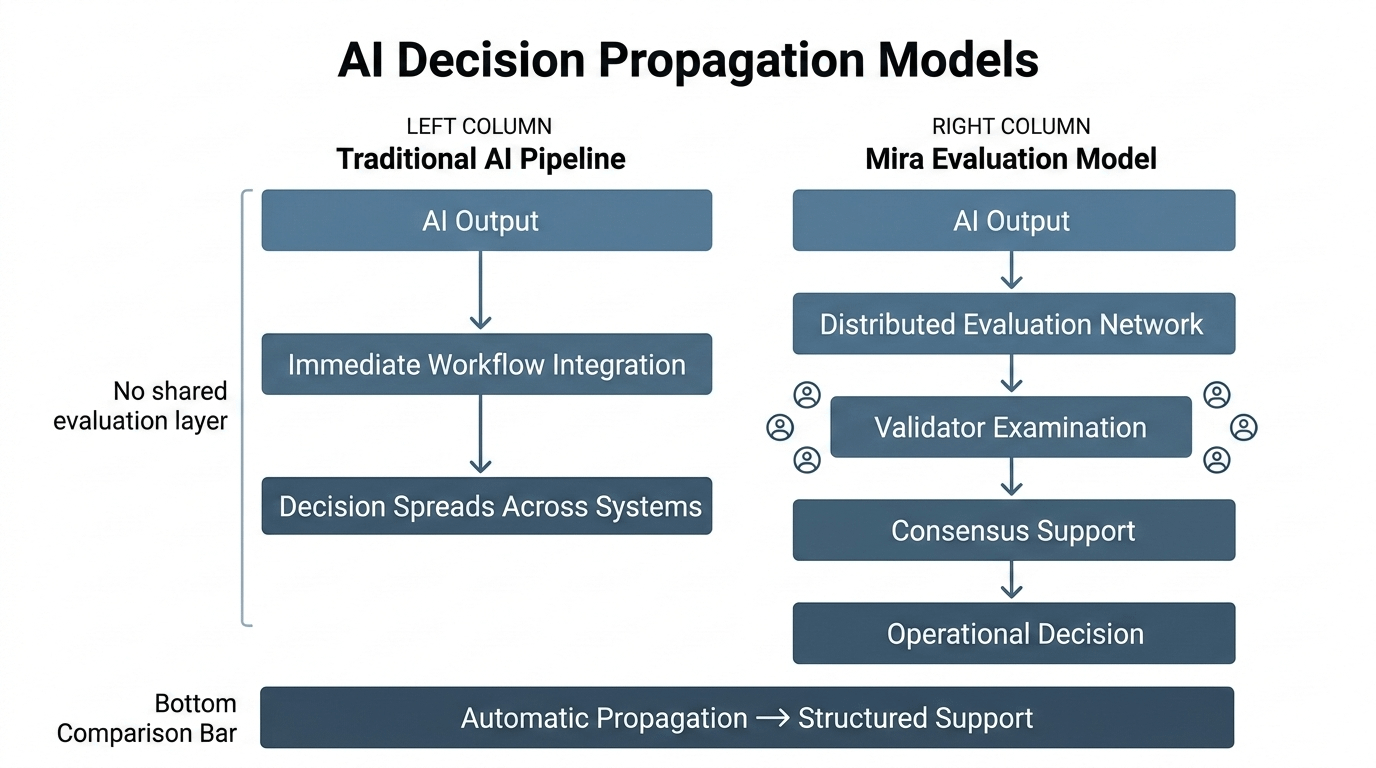

AI decisions tend to travel alone.

An output appears inside a workflow, and it moves forward almost immediately. A recommendation becomes part of a report. A classification becomes part of a risk profile. A summary becomes something a team relies on without ever seeing how it was produced.

The decision spreads, but the reasoning that allowed it to spread rarely moves with it.

That disconnect creates an unusual kind of fragility. Systems begin depending on conclusions that no one else can independently examine without re-running the entire process that produced them.

Mira seems to approach this problem from a different angle.

Instead of treating AI output as the final step in a pipeline, Mira treats it as the beginning of a process where that output can be examined within a network before it becomes something other systems rely on.

That subtle change transforms how AI conclusions behave.

In most environments today, if a model generates something convincing, it tends to move forward by default. The assumption is that if nobody objects, the result is acceptable. But that assumption only works when decisions stay local to one team or one application.

As soon as multiple systems begin coordinating around the same conclusion, the absence of a shared evaluation process becomes visible.

Mira introduces the idea that conclusions should not simply propagate. They should accumulate support before they become operational.

Support in this context isn’t social approval. It’s structured evaluation. Participants in the network have incentives to examine outputs and determine whether they hold up under scrutiny. The network doesn’t assume agreement; it creates conditions where agreement can emerge through participation.
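The article doesn't describe Mira's actual acceptance rule, so what follows is only a minimal sketch of the general idea that a conclusion "accumulates support" before it becomes operational. It assumes a simple quorum: an output needs a minimum number of distinct evaluators, and a set share of them must approve. The names `Evaluation`, `is_operational`, `min_evaluators`, and `approval_threshold` are hypothetical, and the default values are arbitrary.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class Evaluation:
    """One participant's verdict on a model output (hypothetical structure)."""
    evaluator_id: str
    approves: bool


def is_operational(
    evaluations: list[Evaluation],
    min_evaluators: int = 3,
    approval_threshold: Fraction = Fraction(2, 3),
) -> bool:
    """Return True once an output has accumulated enough support.

    Illustrative assumptions only: each evaluator counts once, at least
    `min_evaluators` distinct reviewers are required, and the share of
    approvals must reach `approval_threshold`.
    """
    # Deduplicate by evaluator so one participant cannot vote repeatedly;
    # the last verdict from each evaluator wins.
    latest: dict[str, bool] = {}
    for ev in evaluations:
        latest[ev.evaluator_id] = ev.approves

    if len(latest) < min_evaluators:
        # Not enough independent scrutiny yet: the output stays provisional.
        return False

    # Exact rational comparison avoids floating-point ties at the threshold.
    approvals = sum(latest.values())
    return Fraction(approvals, len(latest)) >= approval_threshold


# Usage: two of three distinct evaluators approve, which meets a 2/3 threshold.
votes = [Evaluation("a", True), Evaluation("b", True), Evaluation("c", False)]
print(is_operational(votes))  # True
```

Nothing in this sketch prevents the model from generating output quickly. It only gates when that output is treated as reliable, which is the distinction the article draws.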

This approach has an interesting side effect.

It slows down the silent spread of unexamined outputs without slowing down AI generation itself.

Models can still produce answers quickly. What changes is the environment where those answers gain legitimacy. Instead of becoming trusted because they appear reasonable, they become trusted because they pass through a process designed to test them.

That distinction matters more than it seems.

The speed of AI generation is no longer the limiting factor in most workflows. What slows organizations down is uncertainty about whether AI outputs can safely influence downstream systems. If every answer requires manual oversight, automation reaches a ceiling.

Mira attempts to raise that ceiling.

By embedding evaluation into a decentralized environment, it creates a structure where scrutiny scales alongside generation. Participants who evaluate outputs accurately are rewarded. Those who validate carelessly are disincentivized.

Over time, this encourages something AI ecosystems currently lack: a culture of verification that is economically sustained rather than administratively enforced.
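The exact reward and penalty rules aren't specified in the article. The following is a minimal sketch of what "economically sustained" verification could look like, under one loudly labeled assumption: agreement with the round's final consensus serves as a proxy for accurate evaluation. `Participant`, `settle_round`, `reward`, and `penalty_rate` are hypothetical names, and the numbers are arbitrary.

```python
from dataclasses import dataclass


@dataclass
class Participant:
    """An evaluator with tokens at stake (hypothetical model, not Mira's)."""
    participant_id: str
    stake: float


def settle_round(
    participants: dict[str, Participant],
    verdicts: dict[str, bool],
    consensus: bool,
    reward: float = 1.0,
    penalty_rate: float = 0.1,
) -> None:
    """Adjust stakes in place after one evaluation round.

    Assumption: matching the final consensus stands in for "accurate".
    Agreeing evaluators earn a flat reward; disagreeing evaluators lose
    a fixed fraction of their current stake.
    """
    for pid, verdict in verdicts.items():
        p = participants[pid]
        if verdict == consensus:
            p.stake += reward
        else:
            p.stake -= p.stake * penalty_rate


# Usage: Alice agrees with the consensus and Bob does not.
people = {"alice": Participant("alice", 100.0), "bob": Participant("bob", 100.0)}
settle_round(people, {"alice": True, "bob": False}, consensus=True)
print(people["alice"].stake, people["bob"].stake)  # 101.0 90.0
```

Using consensus as a proxy is the simplest choice, but it can reward herding rather than accuracy. A real design would likely need additional checks, so treat this only as an illustration of incentives scaling with participation.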

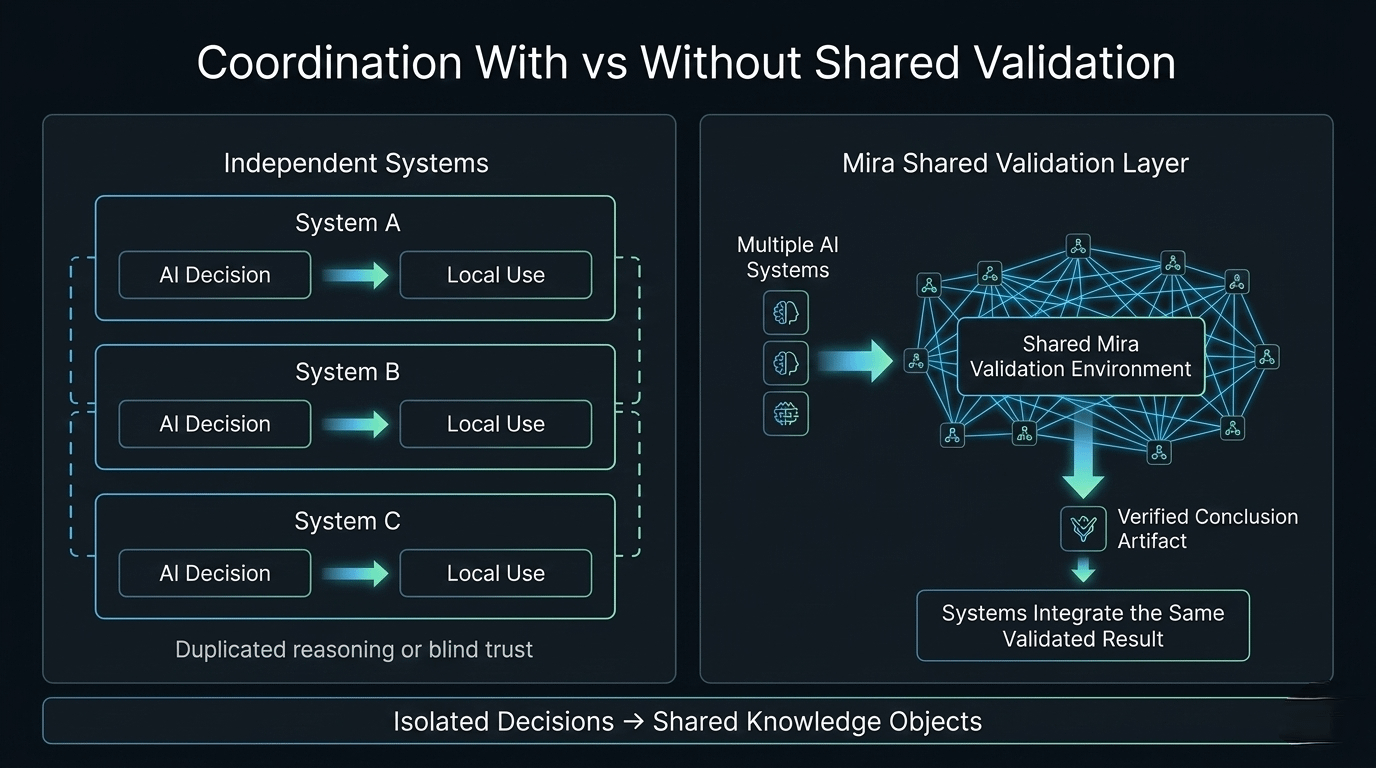

Another implication emerges when multiple systems begin interacting with the same conclusions.

Imagine a scenario where several services depend on an AI-generated interpretation of a complex dataset or policy document. Without a shared evaluation layer, each system must either trust the originating service or reproduce the reasoning independently.

Both approaches introduce inefficiencies.

Reproducing the reasoning wastes resources and invites divergence, because each system may arrive at a slightly different answer. Blindly trusting another service creates dependency risks.

A validation environment offers a third option. Systems can reference conclusions that have already passed through a known process of examination. Instead of recomputing or blindly inheriting, they depend on conclusions that carry visible evidence of how they were accepted.
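To make "conclusions that carry visible evidence of how they were accepted" concrete, here is a minimal sketch that assumes a hypothetical record format rather than anything Mira publishes. The record bundles an output with who approved and who rejected it. A SHA-256 digest over a canonical JSON encoding lets a downstream service confirm it holds the exact record that was accepted, without recomputing the reasoning. `AcceptedConclusion` and `reference_is_intact` are illustrative names, and `hashlib` and `json` are the Python standard library modules.

```python
import hashlib
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class AcceptedConclusion:
    """A model output bundled with the record of how it was accepted.

    Hypothetical schema for illustration; field names are not from Mira.
    """
    output: str
    model_id: str
    approvals: tuple[str, ...]   # evaluator IDs that approved
    rejections: tuple[str, ...]  # evaluator IDs that rejected

    def digest(self) -> str:
        """Stable SHA-256 fingerprint of the conclusion and its evidence."""
        # Canonical JSON (sorted keys, fixed separators) so every consumer
        # derives identical bytes from an identical record.
        payload = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def reference_is_intact(record: AcceptedConclusion, expected_digest: str) -> bool:
    """Check that a received record matches the digest pinned at acceptance."""
    return record.digest() == expected_digest


# Usage: a downstream service pins the digest, then detects a modified record.
record = AcceptedConclusion("Clause 4 permits early termination", "model-x", ("a", "b"), ("c",))
pinned = record.digest()
altered = AcceptedConclusion("Clause 4 forbids early termination", "model-x", ("a", "b"), ("c",))
print(reference_is_intact(record, pinned))   # True
print(reference_is_intact(altered, pinned))  # False
```

A digest proves integrity, not correctness. It only shows that the record hasn't changed since acceptance, and it is only as trustworthy as the place the pinned digest comes from.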

That makes coordination easier.

It also introduces a new type of artifact into AI ecosystems: conclusions that behave less like suggestions and more like shared knowledge objects. They still originate from models, but they gain durability through the evaluation environment that surrounds them.

Durability is an underrated quality in AI systems.

Right now, most AI outputs vanish quickly. They influence immediate decisions but rarely persist as stable reference points for other systems. As AI adoption deepens, that ephemerality becomes problematic.

Systems need stable conclusions to coordinate effectively.

Mira appears to be building the conditions where those stable conclusions can emerge.

Not by forcing everyone to trust the same model, but by giving participants a place where outputs can be examined collectively before they become dependencies.

There’s also a governance dimension here.

As AI becomes embedded in domains like finance, healthcare, and public policy, decisions informed by AI will inevitably face scrutiny from external parties. Auditors, regulators, and counterparties will want to know not just what conclusion was reached, but how that conclusion gained legitimacy.

An opaque pipeline makes that question difficult to answer.

A structured validation process makes it easier.

Instead of relying on internal assurances, organizations can point to a network process where outputs were evaluated before they were treated as reliable. That transparency doesn’t eliminate disagreement, but it provides a framework where disagreement can be resolved through procedure rather than speculation.

Over time, systems built this way are likely to age better.

Not because they prevent every mistake, but because they make the path from generation to acceptance visible.

Mira’s architecture suggests that the future of AI may depend less on how fast models produce answers and more on how carefully those answers are allowed to spread.

Right now, most AI conclusions travel alone.

They appear, influence decisions, and disappear into workflows without leaving behind a shared place where others can inspect how they were trusted.

Mira proposes something different.

A world where AI decisions don’t move forward quietly, but carry with them the record of how they survived examination.

And in systems where many actors depend on the same reasoning, that difference may determine whether automation becomes a foundation—or a liability.