After around five years in the crypto space, one thing has become very clear to me. Every market cycle brings a new narrative. First people couldn’t stop talking about DeFi. Then NFTs dominated everything. After that the conversation shifted toward scaling and infrastructure. Now the spotlight has moved again. This time it’s artificial intelligence.

But the more I watched AI projects entering the space, the more something started to bother me. Everyone talks about how powerful AI models are. Very few people talk about whether their answers can actually be trusted.

That’s what pulled me toward Mira. Not the token hype. Not the trading opportunity. The problem it’s trying to solve.

Trust.

AI is getting stronger every year. That part is obvious. Models can write reports, generate code, analyze complex data, and sometimes produce insights faster than entire teams. But at the same time they still make strange mistakes. Confident mistakes. The kind that sound completely correct until you double-check the facts.

Anyone who has spent enough time using large AI models has seen it happen. A response looks clean and logical. Everything reads smoothly. Then you notice something small. A statistic that doesn’t exist. A reference that was invented. A conclusion built on weak assumptions.

In casual situations it’s not a big deal. If a chatbot gets a movie fact wrong, no one really cares. But imagine that same behavior inside a financial risk system. Or a medical assistant helping analyze patient data. That’s where things start to feel uncomfortable.

Traditional systems deal with this through layers of review. Experts check each other. Regulators audit processes. Researchers challenge findings before they become accepted knowledge. AI doesn’t have those guardrails yet.

That gap is where Mira enters the picture.

The core idea behind the project is actually very simple. Don’t rely on one AI system. Ask many of them. If enough genuinely independent systems reach the same conclusion, the result is far more likely to be right than any single answer.
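To put a rough number on that intuition, here is a minimal sketch. It assumes every verifier is right with the same probability `p` and that their errors are fully independent, which is my simplification and not something Mira claims. The function name is made up for illustration.

```python
from math import comb


def majority_correct_probability(n: int, p: float) -> float:
    """Chance that a strict majority of n verifiers is right.

    Assumes each verifier is right with probability p and that
    verifiers err independently (an idealized assumption).
    """
    need = n // 2 + 1  # smallest count that forms a strict majority
    return sum(
        comb(n, k) * p**k * (1 - p) ** (n - k)
        for k in range(need, n + 1)
    )
```

Under those assumptions, three verifiers that are each right 80% of the time give a majority that is right about 89.6% of the time. The gain disappears if the verifiers all make the same mistakes, so the independence part is doing most of the work.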

It’s a concept that feels familiar if you’ve been around crypto long enough. Networks like Bitcoin operate on a similar principle. When a transaction happens, no single computer decides whether it’s valid. Thousands of nodes verify the transaction independently. Consensus forms through agreement.

Mira applies that same logic to artificial intelligence.

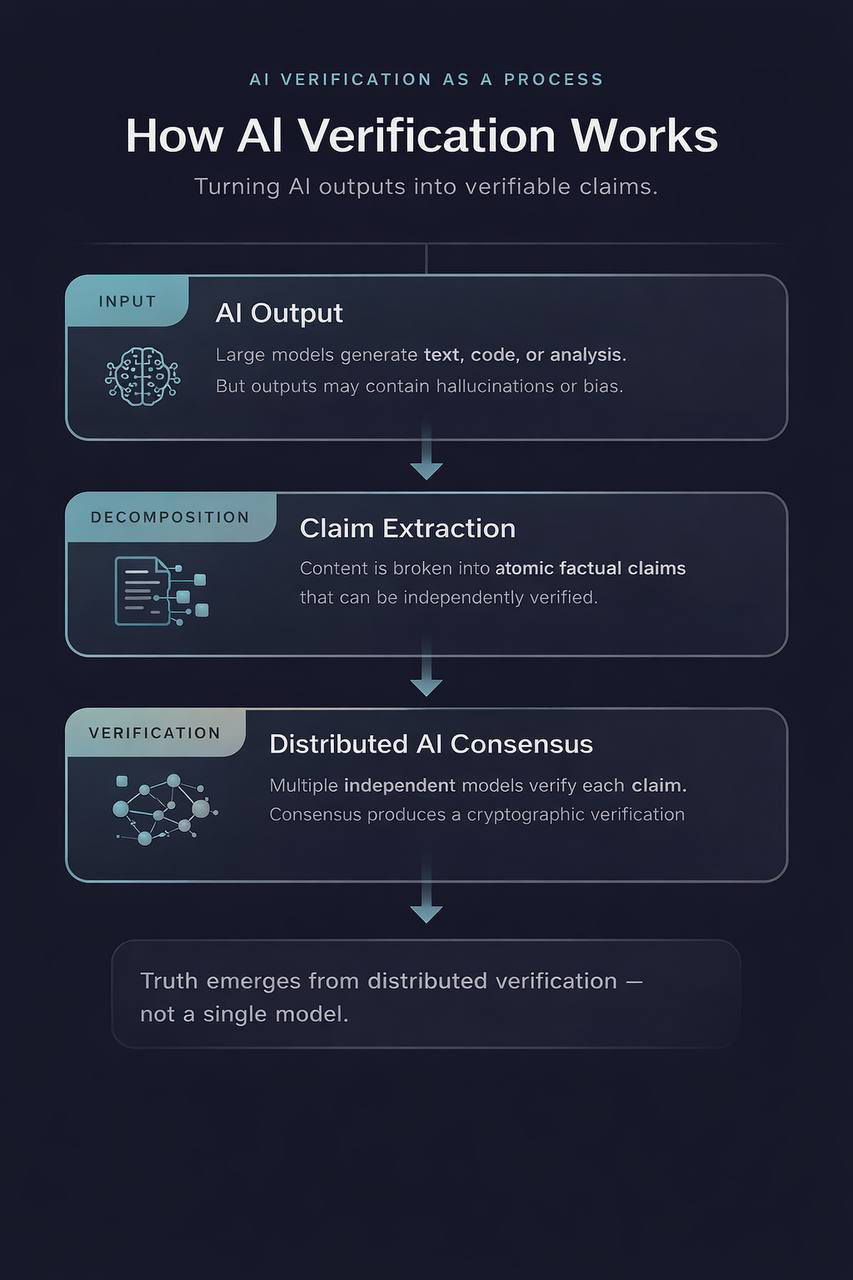

Instead of accepting an AI response at face value, the system breaks the answer into smaller claims. Each claim can then be tested by a network of verifiers. Some nodes might run language models. Others might rely on different datasets or reasoning systems. Each one analyzes the claim and sends back a judgment.

If enough of them agree, the network accepts the result.
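The flow described above can be sketched in a few lines. This is only an illustration of threshold voting over claims, not Mira's actual protocol: the `ClaimResult` type, the `verify_claims` function, and the 0.6 threshold are all assumptions of mine, and each verifier is modeled as a plain function that returns true or false for a claim.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class ClaimResult:
    claim: str
    agree: int      # verifiers that judged the claim true
    total: int      # verifiers that voted
    accepted: bool  # True when the agreement share meets the threshold


def verify_claims(
    claims: List[str],
    verifiers: List[Callable[[str], bool]],
    threshold: float = 0.6,  # hypothetical value, not from the project
) -> List[ClaimResult]:
    """Ask every verifier about every claim and apply a simple threshold."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results = []
    for claim in claims:
        votes = [bool(verifier(claim)) for verifier in verifiers]
        agree = sum(votes)
        accepted = agree / len(votes) >= threshold
        results.append(ClaimResult(claim, agree, len(votes), accepted))
    return results
```

In a real network the verifiers would be separate nodes running different models, and splitting an answer into claims is its own hard problem that this sketch skips entirely.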

It’s a small shift in process, but it changes how AI answers are treated. Instead of simply generating information, the system attempts to verify it.

From a technical perspective, the network works like an infrastructure layer sitting between AI outputs and the applications that use them. An application gets a response from an AI model. Mira routes that response through its verification system. Independent nodes evaluate the claims. Consensus forms as their judgments come in.

When agreement reaches a certain threshold, the result is finalized and recorded. The process leaves a traceable record showing that multiple systems evaluated the information before it was accepted.
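I don't know what Mira's finalized record actually looks like, so treat the following as a guess at the general shape. It bundles a claim, the individual votes, and the outcome, then adds a SHA-256 digest so that any later change to the record is detectable. The field names and the `make_record` helper are hypothetical.

```python
import hashlib
import json
from typing import Dict


def make_record(claim: str, votes: Dict[str, bool], threshold: float) -> dict:
    """Build a tamper-evident record of one verification round.

    votes maps a node name to its judgment. The layout is an
    illustrative assumption, not the project's real format.
    """
    agree = sum(votes.values())
    total = len(votes)
    record = {
        "claim": claim,
        "votes": votes,
        "agree": agree,
        "total": total,
        "threshold": threshold,
        "accepted": total > 0 and agree / total >= threshold,
    }
    # Serialize with sorted keys so the same content always hashes the same.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["digest"] = hashlib.sha256(payload).hexdigest()
    return record
```

Anyone holding the record can recompute the digest from the other fields and compare. On a real network that digest would presumably be anchored on-chain, which is the part that makes the trail hard to rewrite.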

Of course, like most decentralized systems, incentives play a big role here. The network uses its native asset, MIRA, to coordinate participants. Developers pay verification fees when they want AI outputs checked. Node operators lock tokens as collateral in order to participate in the verification process. Those who contribute accurate verification earn rewards.

It’s a familiar model in crypto. Stake something valuable, contribute to the network, and earn incentives for honest participation.

The total supply of the token is capped at one billion units, with distribution spread across ecosystem growth, community incentives, and long-term development funding. From a structural standpoint it follows the kind of token design many crypto investors have seen before.

One interesting part of the system is how it combines different security approaches. Operators must stake tokens to participate, which creates financial accountability. But they also have to perform actual verification work rather than simply staking and collecting passive rewards.

That balance matters. Stake alone earns nothing, and the work itself is on the line: if a verifier consistently produces poor results or behaves dishonestly, penalties can reduce their stake.
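Here is a toy version of that incentive loop. The numbers are invented, and so is the rule itself: this sketch slashes on every wrong vote, while the real network presumably looks at behavior over time. `Operator`, `settle_round`, `reward`, and `slash_rate` are all names I made up.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Operator:
    name: str
    stake: float  # tokens locked as collateral


def settle_round(
    operators: List[Operator],
    votes: Dict[str, bool],
    outcome: bool,
    reward: float = 1.0,       # hypothetical payout for a matching vote
    slash_rate: float = 0.05,  # hypothetical fraction of stake lost
) -> None:
    """Reward operators who matched the consensus outcome, slash the rest."""
    for op in operators:
        if op.name not in votes:
            continue  # no work done this round: no reward, no penalty
        if votes[op.name] == outcome:
            op.stake += reward
        else:
            op.stake -= op.stake * slash_rate
```

Note that an operator who stakes but never votes earns nothing here, which is the point of pairing collateral with actual verification work.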

Still, like any project operating at the intersection of crypto and emerging technology, there are risks that shouldn’t be ignored.

Speculation is the obvious one. Anyone who has been through a few market cycles knows how quickly narratives can inflate expectations. AI alone is a powerful trend. Crypto is another one. When those two worlds collide, excitement tends to move faster than real adoption.

Another concern is verifier diversity. If most nodes rely on the same models or if a small number of operators control large portions of the network, the system could end up repeating the same biases it was designed to avoid.
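One rough way to see this risk is to ask how many distinct model families a set of nodes really represents. The sketch below uses the inverse Simpson index, a standard diversity measure that I am borrowing for illustration. Nothing suggests Mira measures diversity this way, and the family labels are hypothetical.

```python
from collections import Counter
from typing import List


def effective_families(node_families: List[str]) -> float:
    """Inverse Simpson index over the model family each node runs.

    Returns 1.0 when every node runs the same family and the number of
    nodes when every node runs a different one.
    """
    if not node_families:
        raise ValueError("at least one node is required")
    total = len(node_families)
    counts = Counter(node_families)
    return 1.0 / sum((count / total) ** 2 for count in counts.values())
```

Four nodes where three run the same family score 1.6, not 4. A network can look large by node count and still be closer to one or two opinions repeated many times.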

Governance is also something worth watching. Because voting power is tied to token ownership, large holders could potentially influence the direction of the protocol.

These challenges don’t automatically break the model, but they do highlight how experimental this entire space still is.

At the same time, the long-term vision behind Mira is genuinely interesting. If the system works as intended, decentralized verification could become an important layer in the AI ecosystem.

Imagine a future where companies need proof that AI decisions are reliable. Hospitals might require verification before using automated diagnostics. Financial institutions could demand validated models for credit risk or trading algorithms. Research platforms might rely on decentralized verification to confirm complex analysis.

In that kind of environment, a network designed specifically to validate AI outputs could become valuable infrastructure.

After watching the crypto industry evolve for several years, I’ve learned to approach new ideas with a mix of curiosity and caution. Big visions are common in this space. Execution is much harder.

Mira sits right at the intersection of two rapidly evolving technologies. Artificial intelligence on one side. Decentralized networks on the other.

Whether it becomes a foundational part of the AI ecosystem or just another interesting experiment is something time will reveal.

But one thing feels certain. As AI systems become more involved in real-world decisions, the question of trust isn’t going away.

If anything, it’s only going to get bigger.