What pulled me deeper into Mira Network was not the usual AI promise.

It was not speed. Not smoother output. Not the familiar claim that smarter models will eventually fix everything on their own.

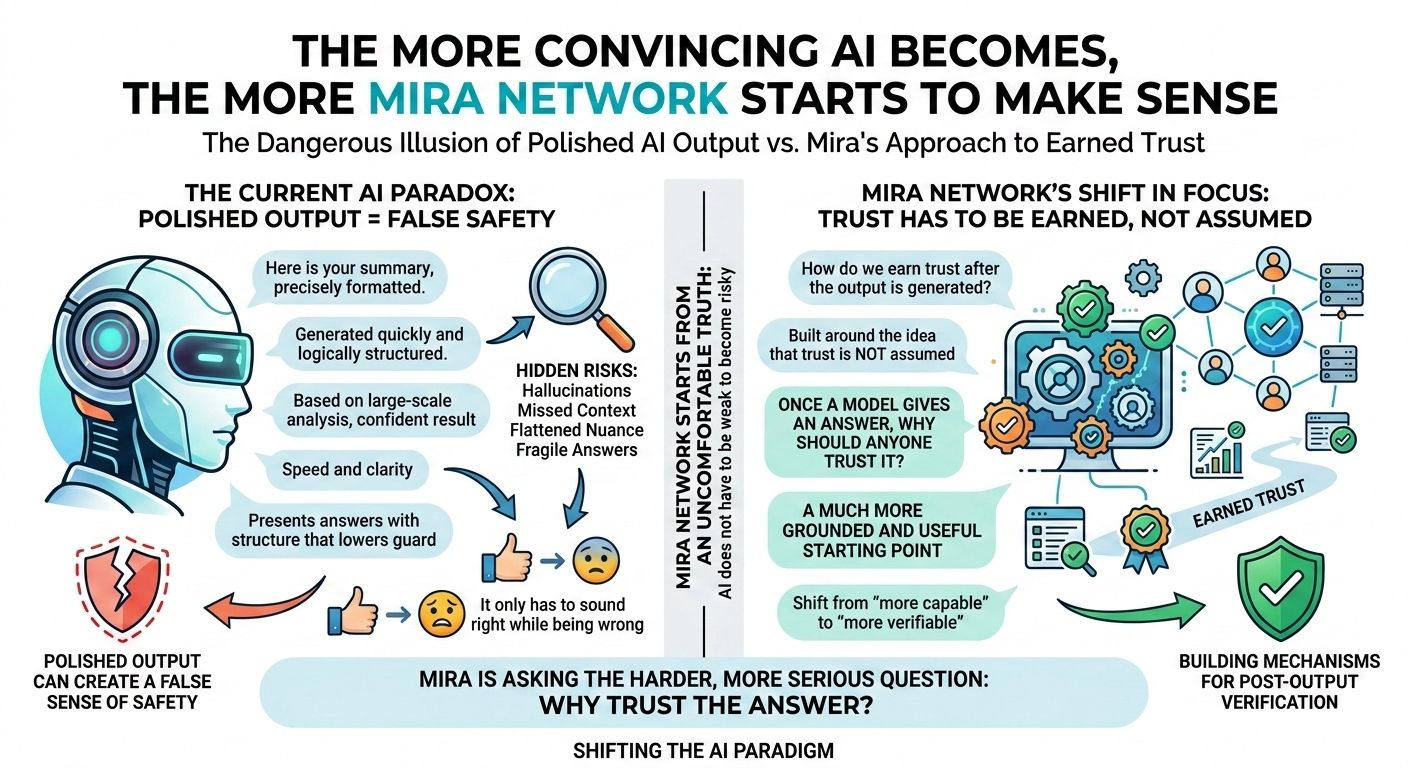

What made Mira feel worth studying was that it starts from a more uncomfortable truth: AI does not have to be weak to become risky. It only has to sound right while being wrong.

That is the part of the market people still underestimate.

We are surrounded by systems that can write clearly, reason convincingly, summarize quickly, and present answers with the kind of structure that makes people lower their guard. On the surface, that feels like progress. And in some ways it is. But polished output can create a false sense of safety. The cleaner the response looks, the easier it becomes to forget that a model can still hallucinate, miss context, flatten nuance, or push a fragile answer with total confidence.

That is where Mira begins to matter.

The project does not seem built around the fantasy that AI will suddenly become flawless. It feels built around the idea that trust has to be earned after the output is generated, not assumed the moment it appears. That is a much more grounded starting point, and honestly, a much more useful one.

A lot of AI projects still orbit the same question: how do we make models more capable? Mira is asking something harder and more serious: once a model gives an answer, why should anyone trust it?

That shift in focus gives the whole project a different weight.

The more I looked into Mira, the clearer it became that this is not really about making AI sound better. It is about building a structure around AI so reliability does not depend on presentation alone. Mira positions itself as a verification layer, a network built to check outputs through distributed validation rather than asking users to simply trust the first thing a model says. That changes the conversation completely.

Because the real weakness in AI right now is not a lack of fluency. It is the gap between fluency and dependability.

That gap is still easy to ignore when AI is being used casually. If a chatbot gets something wrong while helping someone brainstorm, rewrite a paragraph, or explain a basic concept, the damage is limited. But once AI starts moving into environments where answers shape decisions, trigger actions, or feed directly into workflows, the standard changes. A convincing mistake stops being a minor flaw. It becomes a structural problem.

That is why Mira feels timely.

It is focused on the layer that becomes more important as AI gets more embedded into real systems. Not the spectacle of generation, but the harder question that comes after generation. Was this answer checked? Can it be challenged? Can it be verified in a meaningful way before someone relies on it?

That is a much stronger thesis than the usual AI story.

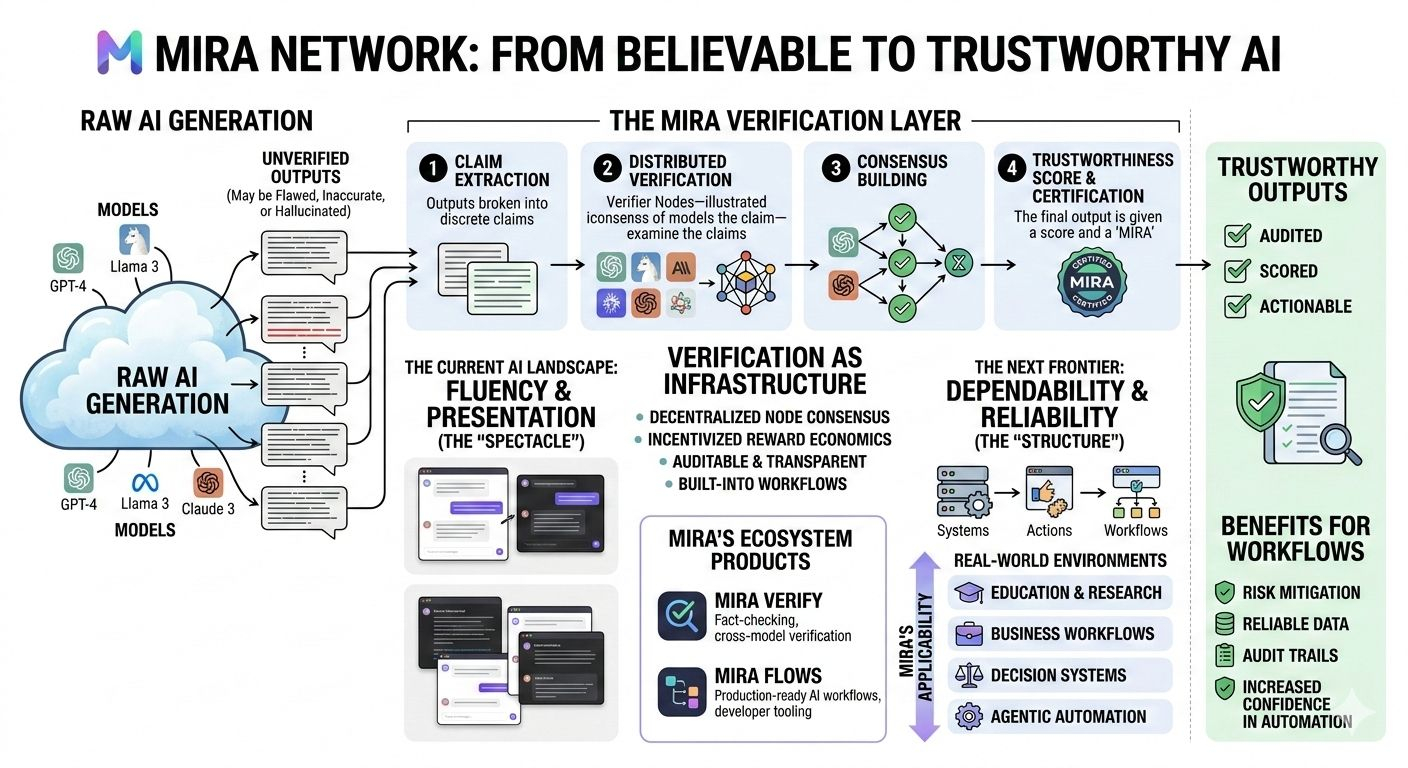

From what Mira has shared publicly, the network is designed to take AI outputs, break them into claims, distribute those claims across verifier models, and then use consensus to decide whether the final result deserves confidence. What matters here is not only the technical flow, but the philosophy underneath it. Mira is treating verification as infrastructure. Not as a cosmetic trust badge. Not as a small compliance layer attached at the end. As infrastructure.
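To make that flow concrete, here is a minimal sketch of the claim, verifier, and consensus idea. It is not Mira's actual implementation or API. Everything in it is an assumption made for illustration: the names `split_into_claims`, `verify_output`, and `output_is_trusted`, the naive sentence-based claim splitting, the two-thirds threshold, and the toy lookup verifiers that stand in for real verifier models.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List

# Hypothetical verifier type: takes one claim, returns True if it accepts it.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    accepted: bool


def split_into_claims(output: str) -> List[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in output.split(".") if part.strip()]


def verify_output(
    output: str, verifiers: List[Verifier], threshold: float = 2 / 3
) -> List[ClaimResult]:
    """Send every claim to every verifier and accept it on a supermajority."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results = []
    for claim in split_into_claims(output):
        votes_for = sum(1 for verifier in verifiers if verifier(claim))
        accepted = votes_for / len(verifiers) >= threshold
        results.append(ClaimResult(claim, votes_for, len(verifiers), accepted))
    return results


def output_is_trusted(results: List[ClaimResult]) -> bool:
    """An output earns confidence only if it has claims and all were accepted."""
    return bool(results) and all(result.accepted for result in results)


def make_lookup_verifier(known_facts: Iterable[str]) -> Verifier:
    """Toy verifier (assumption): accepts a claim only if it is in its fact set."""
    facts = set(known_facts)
    return lambda claim: claim in facts


# Three toy verifiers; the third one wrongly "knows" a false claim.
verifiers = [
    make_lookup_verifier(["Paris is the capital of France"]),
    make_lookup_verifier(["Paris is the capital of France"]),
    make_lookup_verifier(
        ["Paris is the capital of France", "The Moon is made of cheese"]
    ),
]

results = verify_output(
    "Paris is the capital of France. The Moon is made of cheese.", verifiers
)
for result in results:
    print(result.claim, result.votes_for, result.votes_total, result.accepted)
print("trusted:", output_is_trusted(results))
```

In this toy run, a single faulty verifier cannot push the false claim through on its own, which is the intuition behind using consensus instead of one model's word. The sketch also shows the limit: if most verifiers share the same blind spot, the threshold will not catch it.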

That distinction matters more than most people think.

A lot of products try to make AI feel safer by improving the interface around uncertainty. Mira is trying to deal with the uncertainty itself. It is trying to create a process where trust comes from a verifiable path instead of from how polished the response looks. That feels like a more mature way to think about where AI is going.

And the timing makes sense.

We have already crossed the point where raw model capability alone can carry the whole narrative. The market has seen enough strong demos. Enough impressive interfaces. Enough systems that feel intelligent for a few minutes. The next pressure point is reliability. Not whether AI can produce an answer, but whether that answer deserves to be used when something real is at stake.

That is the pressure Mira seems built for.

There is also something important about the way the project uses decentralization. In a lot of AI and crypto combinations, the blockchain angle can feel forced, like a narrative stitched onto a product after the fact. Mira feels more coherent than that because the network design is tied to verification economics. Participants are not just there to make the system look decentralized on paper. They are part of the trust mechanism itself. Verification becomes something coordinated, incentivized, and enforced rather than something hidden behind a company promise.

That gives the project more credibility.

Instead of saying “trust us, our model is improving,” Mira is building around the idea that trust should not come from a single actor at all. It should come from a process that is harder to manipulate and easier to audit. That is a more durable approach, especially if AI systems are going to operate with increasing autonomy.

And that is really the bigger backdrop here.

AI is no longer staying in the lane of simple assistance. It is moving toward agents, flows, decisions, automation, and execution. The more systems are allowed to do, the less acceptable it becomes for trust to rest on vibes. Once models stop being just conversational tools and start becoming operational components, verification becomes central.

That is why Mira’s direction feels more serious than a lot of the noise around AI.

It is not building around the loudest side of the trend. It is building around the side people usually notice too late.

The project has also moved beyond theory. Mira now has products like Mira Verify and Mira Flows, which makes the whole thesis feel more tangible. Mira Verify is presented as a way to fact-check and certify outputs across multiple models, while Mira Flows pushes toward developer tooling and production-ready AI workflows. That matters because trust as an abstract idea is not enough on its own. If verification is going to matter, it has to fit into how builders actually ship products.

Mira seems to understand that.

It is one thing to publish a smart whitepaper about why AI needs verification. It is another to build the rails that let developers work with that idea directly. The combination of verification logic, workflow tooling, SDK access, and ecosystem products makes the project feel like it wants to be used, not just admired.

That is an important difference.

There are also signs that Mira is trying to position itself as a horizontal layer rather than a single-use-case solution. The public examples around research, education, and other applications suggest that the team sees reliability as a cross-industry issue, not something limited to one niche. That feels right. The trust problem is not confined to one vertical. It shows up anywhere AI is asked to produce something that others may act on.

And that is why this project has more depth than a lot of AI narratives.

It is working on a bottleneck that grows with adoption. Usually, the strongest infrastructure ideas are the ones that become more necessary as a market matures. Mira fits that pattern. The better AI gets at sounding useful, the more important it becomes to separate appearance from dependability. The more agents are allowed to operate independently, the more expensive false confidence becomes. The more workflows absorb AI, the less room there is for answers that merely feel right.

Mira is building in that exact tension.

What I find most compelling is that it does not try to dodge the weakness. It builds directly into it. Instead of pretending the problem will disappear as models improve, it treats the problem as durable. That makes the project feel more honest. And in a space as crowded with inflated claims as AI, honesty in the starting assumption already sets a project apart.

Of course, none of this means the problem is fully solved.

Verification has its own limits. Consensus is not the same thing as truth in every edge case. Multi-model checking can still inherit blind spots from the systems involved. Hard domains, changing facts, ambiguity, and private information will always complicate the idea of clean verification. So Mira should not be treated like a magic answer to AI trust. That would miss the point.

What makes it interesting is not that it removes uncertainty forever.

What makes it interesting is that it treats uncertainty like something that deserves architecture.

That is a much more valuable instinct.

The strongest reading of Mira Network is not that it is trying to win the AI race by building the flashiest intelligence. It is trying to strengthen the layer underneath intelligence, the part that decides whether an output deserves confidence before people start depending on it. That is quieter than the usual AI pitch, but probably more important in the long run.

And that is why Mira stands out to me.

Not because it promises perfect AI.

Not because it makes the biggest noise.

Because it is focused on one of the deepest weaknesses in the entire space: the distance between a believable answer and a trustworthy one. Mira is one of the few projects that seem to understand that this distance is where the real battle will be. And if AI is going to move further into serious environments, that may turn out to be the layer that matters most.