I have noticed that discussions about artificial general intelligence often revolve around capability. Researchers talk about more powerful models, larger datasets, and new architectures that might push machines closer to human-level reasoning. But the more I observe how AI systems interact with real-world applications, the more I feel that capability alone is not the full story. Intelligence without accountability can quickly become difficult to trust. That realization is what led me to pay closer attention to the Mira Network and its role in the broader AI ecosystem.

When people talk about AGI, they often imagine a system capable of performing a wide range of tasks across different domains. But if such systems ever emerge, the real challenge will be not only what they can do but how their decisions are verified and understood by the systems and institutions around them.

Today, most AI systems operate within centralized environments. A company builds the model, deploys it, records its activity, and interprets its behavior when something goes wrong. In many cases, that process works reasonably well, but it also means that the evidence of what an AI system did remains under the control of the same organization responsible for the system itself.

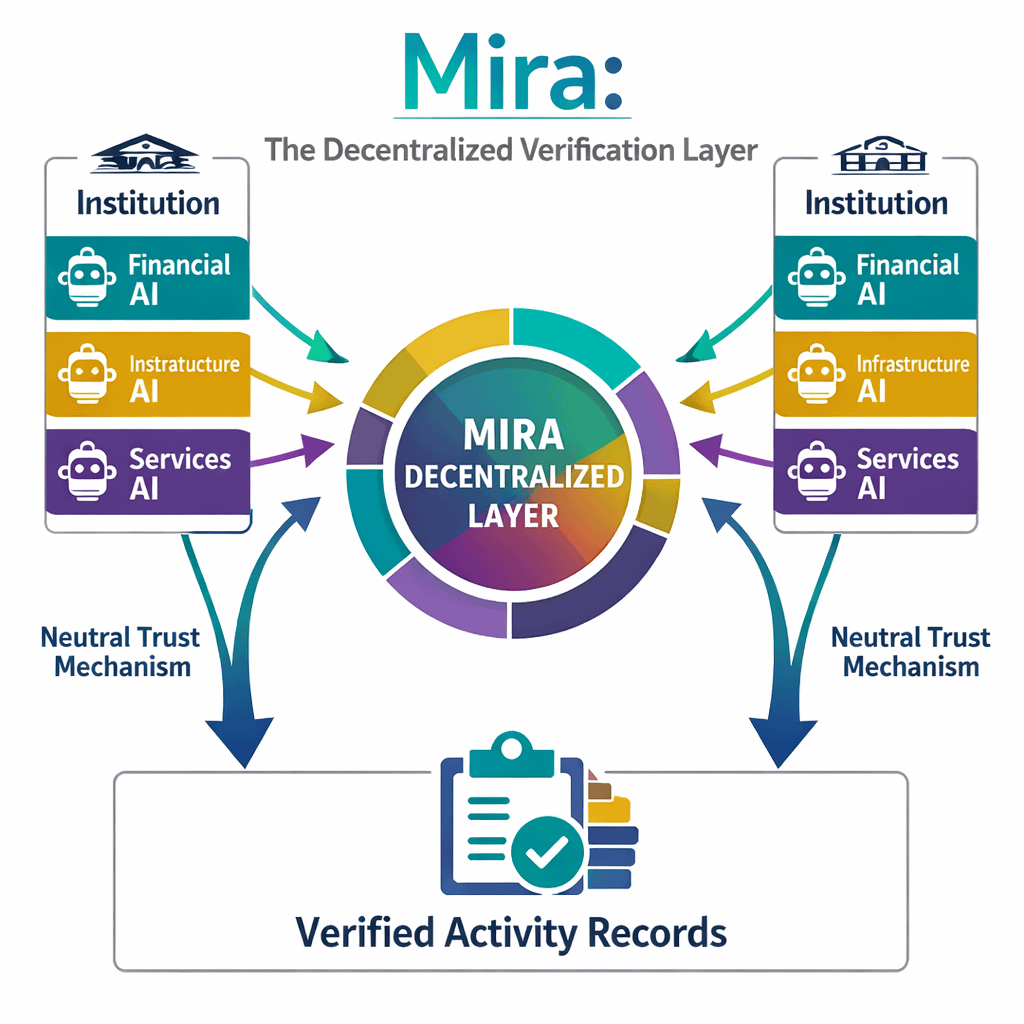

I find myself thinking about what happens when AI systems become more autonomous and interconnected. If different AI agents begin interacting across financial systems, infrastructure networks, and automated services, relying solely on internal logs may start to feel insufficient. In those environments, verification becomes as important as intelligence.

This is where Mira’s infrastructure begins to look relevant.

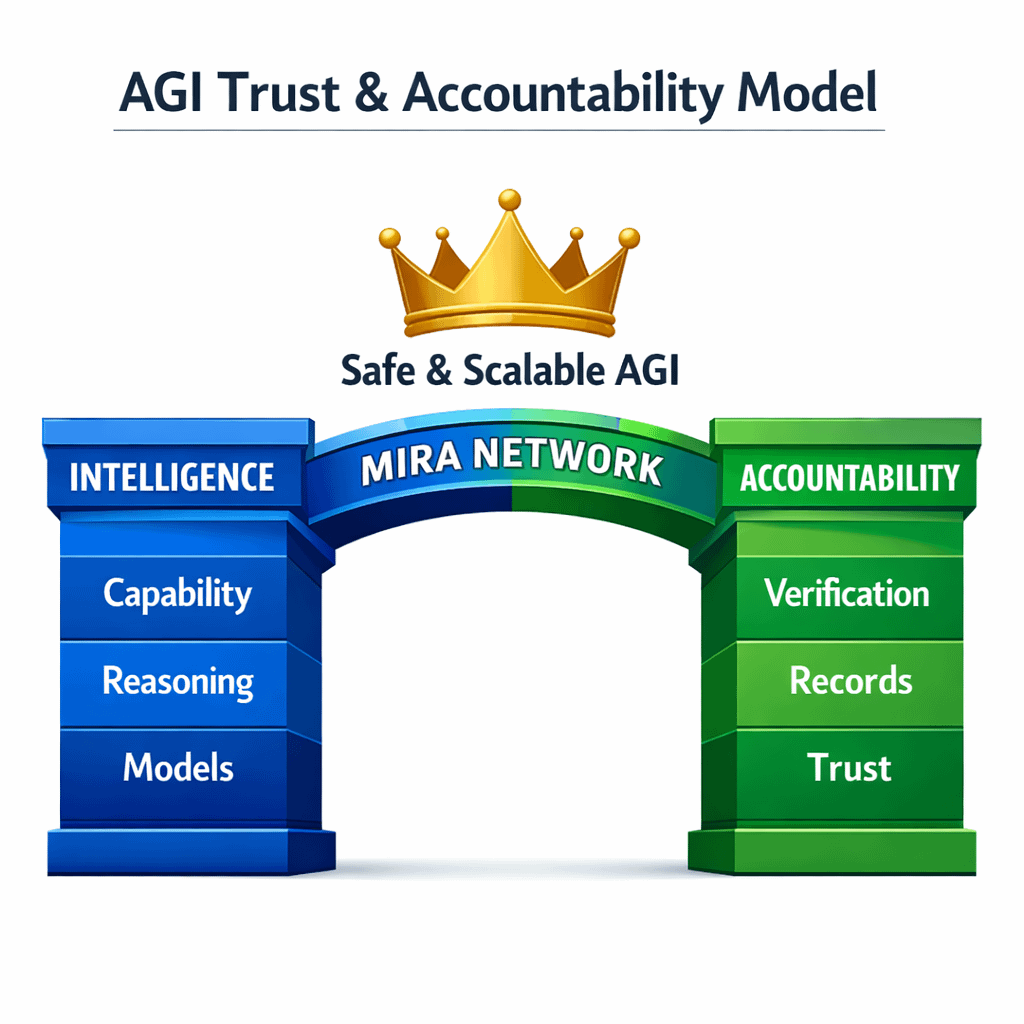

Instead of focusing on building larger models or improving training techniques, Mira attempts to create a decentralized layer for verifying AI activity. Inputs, execution parameters, and outputs can be recorded in a shared environment where multiple participants can confirm what actually happened. From my perspective, that shifts the conversation away from whether AI systems are powerful enough and toward whether they are accountable enough.
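To make that idea concrete, here is a minimal sketch of how a shared record might be checked. It assumes a simple scheme: each participant hashes its own copy of the inputs, execution parameters, and output, and the record counts as confirmed when a majority of those hashes match a claimed digest. `InferenceRecord`, `digest`, and `confirmed` are hypothetical names for illustration, not Mira's actual API. The majority rule stands in for whatever consensus mechanism the network really uses.

```python
from __future__ import annotations

import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class InferenceRecord:
    # Hypothetical schema for illustration; not Mira's actual record format.
    model_id: str
    inputs: str
    params: dict  # execution parameters, e.g. {"temperature": 0.0}
    output: str


def digest(record: InferenceRecord) -> str:
    # Canonical JSON (sorted keys, fixed separators) so every participant
    # hashes byte-identical content for the same record.
    payload = json.dumps(asdict(record), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def confirmed(claimed: str, observations: list[InferenceRecord]) -> bool:
    # Each participant independently hashes the record it observed.
    # Assumed rule: a strict majority of matching hashes confirms the claim.
    if not observations:
        return False
    votes = sum(1 for obs in observations if digest(obs) == claimed)
    return votes * 2 > len(observations)


original = InferenceRecord("model-a", "Is invoice 17 overdue?", {"temperature": 0.0}, "yes")
tampered = InferenceRecord("model-a", "Is invoice 17 overdue?", {"temperature": 0.0}, "no")
claim = digest(original)

print(confirmed(claim, [original, original, tampered]))  # True: 2 of 3 agree
print(confirmed(claim, [original, tampered, tampered]))  # False: only 1 of 3 agrees
```

The canonical JSON encoding matters here. If two participants serialized the same record with keys in a different order, their hashes would disagree even though nothing about the record had changed.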

The idea is sometimes framed in dramatic language, as if Mira were somehow enabling consciousness or intelligence itself. I do not see it that way. What I see is a system trying to create reliable records of AI behavior. In a world where machines make increasingly important decisions, those records may become necessary for coordination between institutions.

Still, I remain cautious about assuming that verification infrastructure automatically solves the challenges surrounding AGI.

Artificial general intelligence, if it ever emerges, will likely introduce complexities far beyond what current systems face. Autonomous agents could operate across industries, jurisdictions, and economic systems simultaneously. A verification layer must remain flexible enough to adapt to those different contexts while maintaining consistency.

Another challenge is integration. Developers already use numerous monitoring tools, logging systems, and auditing frameworks to track AI behavior. For Mira’s network to become meaningful infrastructure, it needs to fit naturally into those existing workflows. If the verification process becomes too heavy or complicated, organizations may continue relying on simpler internal solutions.

At the same time, the trajectory of AI development makes the problem difficult to ignore. As AI agents begin interacting with each other and with automated financial systems, the need for neutral verification mechanisms may grow. Trust between autonomous systems cannot depend solely on the organizations that built them.

That is where Mira’s role as a decentralized verification layer begins to make sense.

I think of it less as the missing link to AGI itself and more as a missing piece of infrastructure that could support increasingly autonomous systems. Intelligence may drive progress, but accountability determines whether that progress can scale safely across institutions.

Whether Mira ultimately becomes that layer is still uncertain. Infrastructure projects often look promising at the conceptual level but face practical challenges once real deployments begin. What I find interesting is that Mira shifts the conversation from how intelligent machines can become to how their actions can be verified.

If AI systems continue moving toward greater autonomy, that shift may prove more important than it initially appears.