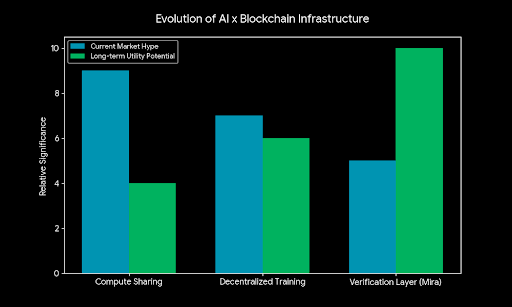

I’ve been around the crypto space for quite a few years now, and one thing I’ve learned is that every cycle introduces a new narrative that tries to combine blockchain with the next big technology trend. A few years ago it was DeFi trying to rebuild financial systems. Then we saw a wave of projects around decentralized storage and compute. Lately the conversation has shifted more toward AI, and naturally crypto is trying to find its place there too.

Recently I came across a project called Mira Network. At first I didn’t think too much about it because, honestly, there are already dozens of projects trying to connect AI and blockchain in some way. But after spending some time reading about it, I started noticing a slightly different angle compared to many of the usual AI-crypto ideas.

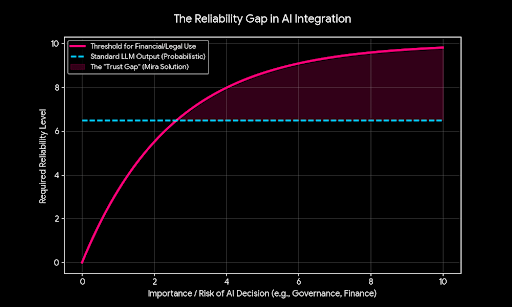

The main thing Mira is trying to tackle is the reliability problem in AI systems. Anyone who has used AI tools regularly knows what I mean. Sometimes the answers look perfect and confident, but when you check them more carefully, parts of the information can be wrong or completely made up. These so-called hallucinations are actually a well-known limitation of current AI models.

Mira’s idea is to create a system where AI outputs don’t just get accepted immediately. Instead, the network tries to verify them before they’re treated as reliable information.

What caught my attention was the way they approach this verification process. Rather than trusting one model or one provider, the system breaks an AI response into smaller pieces of information, basically individual claims. Those claims are then sent to multiple independent models or validators across the network. If enough of them agree that a claim is correct, it passes verification. If they disagree, the claim can be flagged or rejected.
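To make the idea concrete for myself, here is a minimal Python sketch of claim-level consensus as I understand it from the description. It is not Mira’s actual API or algorithm. The function names, the naive sentence-based claim splitting, the two-thirds threshold, and the toy validators are all my own assumptions for illustration.

```python
from typing import Callable, Dict, List

# A validator is any function that takes a claim and returns True if it
# judges the claim correct. In a real network these would be independent
# models; here they are simple stand-ins (hypothetical).
Validator = Callable[[str], bool]


def split_into_claims(response: str) -> List[str]:
    """Naive claim extraction (assumption): treat each sentence as one claim."""
    return [s.strip() for s in response.split(".") if s.strip()]


def verify_claim(claim: str, validators: List[Validator],
                 threshold: float = 2 / 3) -> bool:
    """A claim passes if at least `threshold` of the validators approve it."""
    votes = [validator(claim) for validator in validators]
    return sum(votes) / len(votes) >= threshold


def verify_response(response: str, validators: List[Validator],
                    threshold: float = 2 / 3) -> Dict[str, bool]:
    """Verify every claim in a response and report pass/fail per claim."""
    return {
        claim: verify_claim(claim, validators, threshold)
        for claim in split_into_claims(response)
    }


# Toy validators: two check against a small fact list, one approves everything.
KNOWN_FACTS = {"Paris is the capital of France"}


def strict_validator_a(claim: str) -> bool:
    return claim in KNOWN_FACTS


def strict_validator_b(claim: str) -> bool:
    return claim in KNOWN_FACTS


def credulous_validator(claim: str) -> bool:
    return True


validators = [strict_validator_a, strict_validator_b, credulous_validator]
response = "Paris is the capital of France. The Moon is made of cheese."
result = verify_response(response, validators)

for claim, passed in result.items():
    print(f"{'PASS' if passed else 'FLAG'}: {claim}")
```

In this toy run the first claim gets three approvals and passes, while the second gets only one of three and is flagged. The point is simply that a single overly agreeable validator cannot push a bad claim through on its own.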

When I read that, I couldn’t help thinking that it sounds very similar to the core idea behind blockchains themselves. Instead of trusting a single authority, you distribute verification across a network and let consensus decide what’s valid. The difference here is that instead of verifying financial transactions, they’re trying to verify information generated by AI.

In theory, that’s an interesting concept. AI is becoming more integrated into everyday tools, and if people are going to rely on it for research, decision-making, or automation, accuracy becomes extremely important. A system that adds an extra layer of verification could potentially be useful.

At the same time, I’ve been in this space long enough to know that ideas that sound good in theory don’t always translate into real adoption.

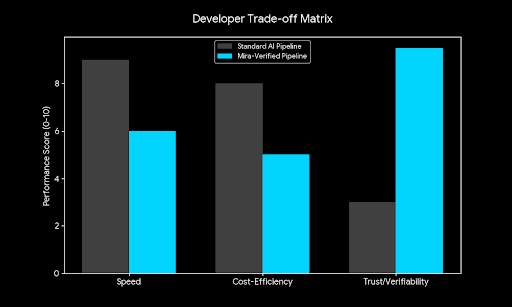

The real question for me is whether developers will actually use something like this. Infrastructure projects in crypto often look impressive technically, but they only become valuable if people start building on top of them. If integrating verification slows down responses or adds too much complexity, developers might simply skip it.

Another thing I noticed is that the project is currently running community campaigns and leaderboard activities. That’s something we’ve seen many times across the industry. Early participation programs usually help attract users and bring attention to a new ecosystem. Sometimes these activities later connect to token incentives or airdrops, which naturally encourages people to explore the platform.

There’s nothing unusual about that strategy. In fact, it’s almost become a standard approach for early-stage crypto networks. Incentives bring the first wave of users, and if things go well, some of those users eventually turn into builders or long-term community members.

But from experience, I’ve also seen how these early phases can be misleading. When rewards are involved, activity can grow very quickly. The real test usually comes later when incentives slow down. That’s when you start seeing whether people continue interacting with the network because it’s genuinely useful.

Mira also seems to rely on a familiar crypto model where participants can run nodes and stake tokens to help verify information. If they provide honest verification, they earn rewards. If they behave maliciously or try to manipulate the system, they risk losing part of their stake. This kind of economic design has been used by many blockchain networks to align incentives between participants and the health of the system.
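The stake-and-slash mechanic can be sketched in a few lines of Python. This is my own simplification, not Mira’s actual design. The stake-weighted majority rule, the flat reward, the 10% slash, and the node names are all hypothetical. It also treats “agreeing with the majority” as a stand-in for honest behavior, which a real network would need to define much more carefully.

```python
from dataclasses import dataclass
from typing import Dict, List

REWARD = 1.0          # hypothetical flat reward for voting with the outcome
SLASH_FRACTION = 0.1  # hypothetical share of stake lost for voting against it


@dataclass
class Node:
    name: str
    stake: float


def settle_round(nodes: List[Node], votes: Dict[str, bool]) -> bool:
    """Decide one claim by stake-weighted majority, then reward or slash.

    `votes` maps a node name to its verdict on the claim.
    Returns the outcome the network settled on.
    """
    stake_for = sum(n.stake for n in nodes if votes[n.name])
    stake_against = sum(n.stake for n in nodes if not votes[n.name])
    outcome = stake_for > stake_against

    for node in nodes:
        if votes[node.name] == outcome:
            node.stake += REWARD                       # voted with the outcome
        else:
            node.stake -= node.stake * SLASH_FRACTION  # voted against it
    return outcome


alice = Node("alice", 100.0)
bob = Node("bob", 100.0)
mallory = Node("mallory", 50.0)

outcome = settle_round(
    [alice, bob, mallory],
    {"alice": True, "bob": True, "mallory": False},
)

for node in (alice, bob, mallory):
    print(f"{node.name}: stake = {node.stake}")
```

Here the two nodes that voted with the outcome each gain the reward, and the dissenting node loses a tenth of its stake. The obvious weakness of such a simple rule is that an honest minority gets punished when the majority is wrong, which is exactly the kind of detail that separates a neat concept from a working network.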

Another piece of the puzzle is computational power. Since verification tasks require processing, the network may rely on distributed computing resources contributed by participants. That connects to a broader trend we’ve been seeing lately, where projects try to turn unused GPUs or compute resources into decentralized infrastructure for AI workloads.

It’s an interesting direction, especially with the massive demand for computing power that AI models require today. But again, the sustainability of these systems usually depends on real demand. If applications actually use the network to verify AI outputs, the ecosystem can grow naturally. If activity mainly comes from token incentives, things can look busy for a while but slow down later.

Over the years I’ve learned to pay more attention to developer behavior than marketing announcements. Are builders experimenting with the tools? Are new applications integrating the protocol? Is there organic usage happening without incentives?

Those signals usually reveal more about a project’s future than any launch campaign or announcement.

What makes Mira at least somewhat interesting is that the problem they’re trying to solve is real. AI reliability is something the entire industry is talking about right now. If AI continues becoming part of daily workflows, people will eventually demand better ways to verify the information it produces.

Whether a decentralized system becomes the solution to that problem is still uncertain.

For now it feels like one of those early experiments sitting between two rapidly evolving industries. Blockchain is still figuring out its long-term infrastructure role, and AI is moving forward at an incredible pace. Projects like Mira are trying to connect those worlds, but it’s still too early to know which approaches will actually stick.

So at this stage I’m mostly just watching how things develop. I’m curious to see whether developers start integrating the verification layer into real AI tools, and whether activity grows beyond community campaigns and early participation programs.

If real usage starts appearing, the idea could become more interesting over time. But like many early crypto projects, the most important signals will probably appear later, once the initial excitement fades and the network has to stand on its own. Until then, it stays on my radar as something worth observing.