For something that loves to repeat the word “trustless,” Web3 still runs on a surprising amount of plain old trust.

Not the cryptographic kind, either. Not the elegant “math will handle it” kind of trust people like to talk about on conference panels. I’m talking about the simple, messy reality of trusting other people.

If you step back and really look at how a lot of these systems work day to day, there’s an uncomfortable assumption underneath everything. We just assume things will behave the way they’re supposed to. The protocol will hold up. The oracle is feeding in accurate data. The AI tool we plugged into our workflow isn’t confidently making things up.

Most of the time, nobody checks.

Which is kind of ironic when you think about it. For years the slogan has been “don’t trust, verify.” In practice, though, verification often stops earlier than people would like to admit.

To be fair, Web3 solved a very specific problem extremely well: enforcement. Once data makes it onto a blockchain, smart contracts are relentless. They execute exactly the way they were written. No hesitation, no interpretation, no mercy.

But there’s a catch that people gloss over.

Smart contracts can enforce rules, but they have absolutely no way of knowing whether the information they received was correct in the first place.

That gap creates all sorts of strange problems. Projects disappear because critical metadata was sitting on some server that quietly expired. Governance votes get pushed through using incomplete or misleading information. Teams integrate AI tools into operations and then act surprised when the output looks clean and professional but turns out to be completely wrong.

Nothing breaks instantly. That’s the tricky part.

Blocks keep getting produced. Transactions settle. The contracts keep executing exactly as designed. On the surface, everything looks healthy.

But under the hood, the system slowly starts drifting into a gray zone where decisions are being made on top of assumptions nobody actually verified.

And when the cracks start to show, the fix tends to look familiar: another trusted layer gets added.

A multisig wallet. A committee. Some internal dashboard controlled by a handful of people who are supposed to keep an eye on things.

Operationally, it makes sense. Someone has to watch the machinery.

But it also pulls things back toward the same centralized patterns the industry originally claimed it wanted to escape. The infrastructure might be decentralized on paper, but real authority often still sits with small groups of humans making judgment calls.

Recently I spent some time looking into what Mira Network is trying to do, and what stood out wasn’t some grand promise to “fix Web3.” Instead, they’re focusing on a much narrower issue: verification.

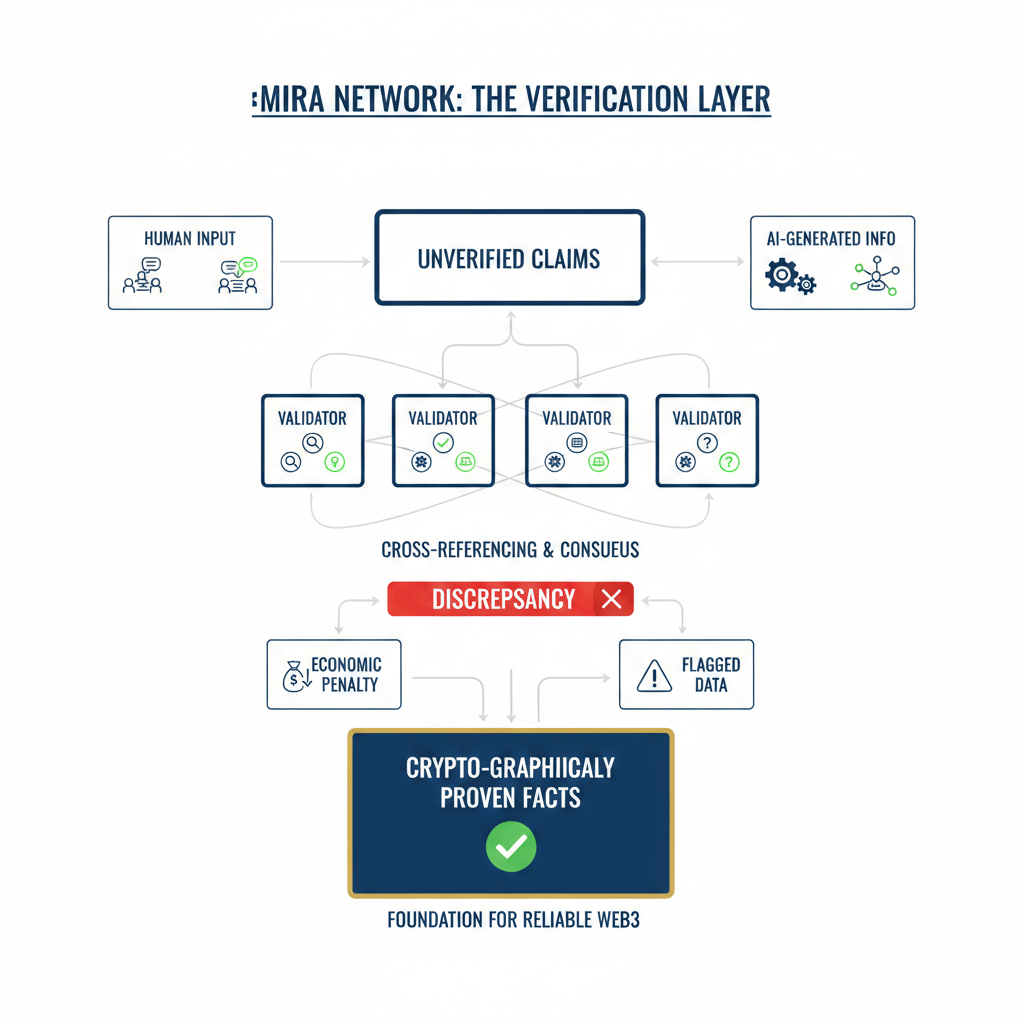

Their approach is surprisingly practical. Instead of relying on a single source of truth, they break claims into smaller pieces and let multiple models check each other’s outputs. When those results don’t line up, the system flags it. Models that repeatedly produce bad results can even face economic penalties.

It’s not flashy technology. You probably won’t see it driving hype cycles or bull-market narratives.

But it addresses a problem that quietly sits at the center of everything.

Web3 loves to debate decentralization, sovereignty, and financial freedom. Those are big ideas, and they matter. Yet the less glamorous side of the conversation — things like data reliability and accountability — tends to get ignored.

And that might be the real weak spot.

Because if the ecosystem keeps building on top of messy human input and AI-generated information without better ways to verify it, then all we’re really doing is stacking more complexity on top of a fragile foundation.

At some point, the industry may have to admit something uncomfortable:

Maybe verification should have been the starting point all along.