AI models often produce answers that sound confident but may contain errors or fabricated facts — a phenomenon commonly called AI hallucination. As AI becomes more integrated into finance, healthcare, research, and governance, relying on unverified outputs becomes increasingly risky.

Mira Network is an attempt to address this problem by verifying AI outputs before anyone relies on them.

The Core Problem: AI Is Powerful but Unreliable

Modern AI models are probabilistic. They generate responses from statistical patterns learned from large datasets, not from a process that guarantees factual correctness. Because of this:

AI can produce incorrect information with high confidence

Users often cannot verify how the answer was generated

Critical decisions may rely on unverified outputs

As AI systems become more autonomous, the need for reliable verification infrastructure becomes essential.

Mira Network’s Approach: A Verification Layer for AI

Mira Network introduces a new concept: a decentralized verification layer for artificial intelligence.

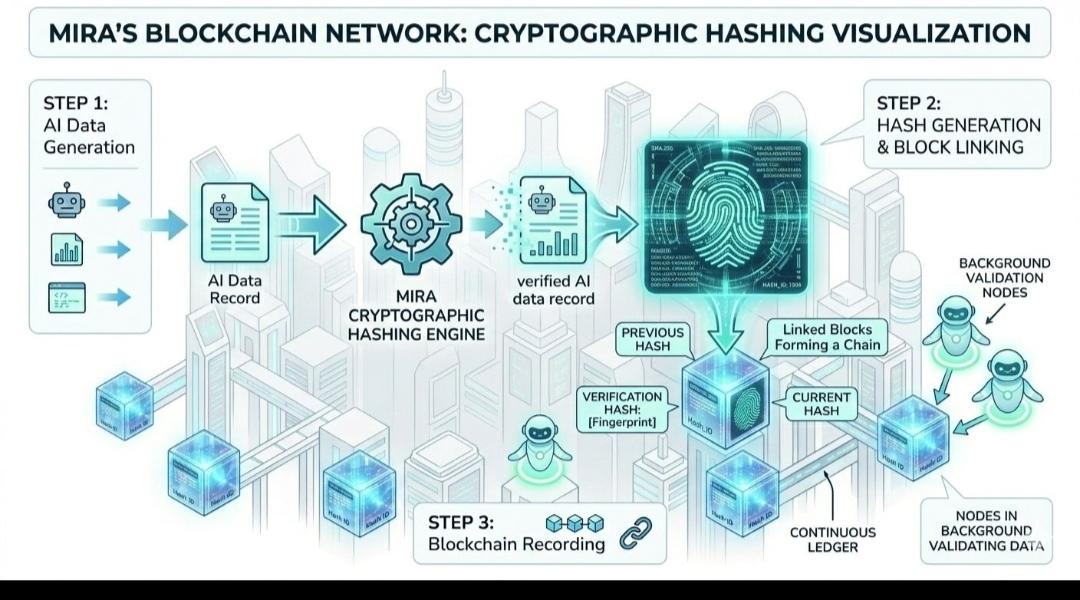

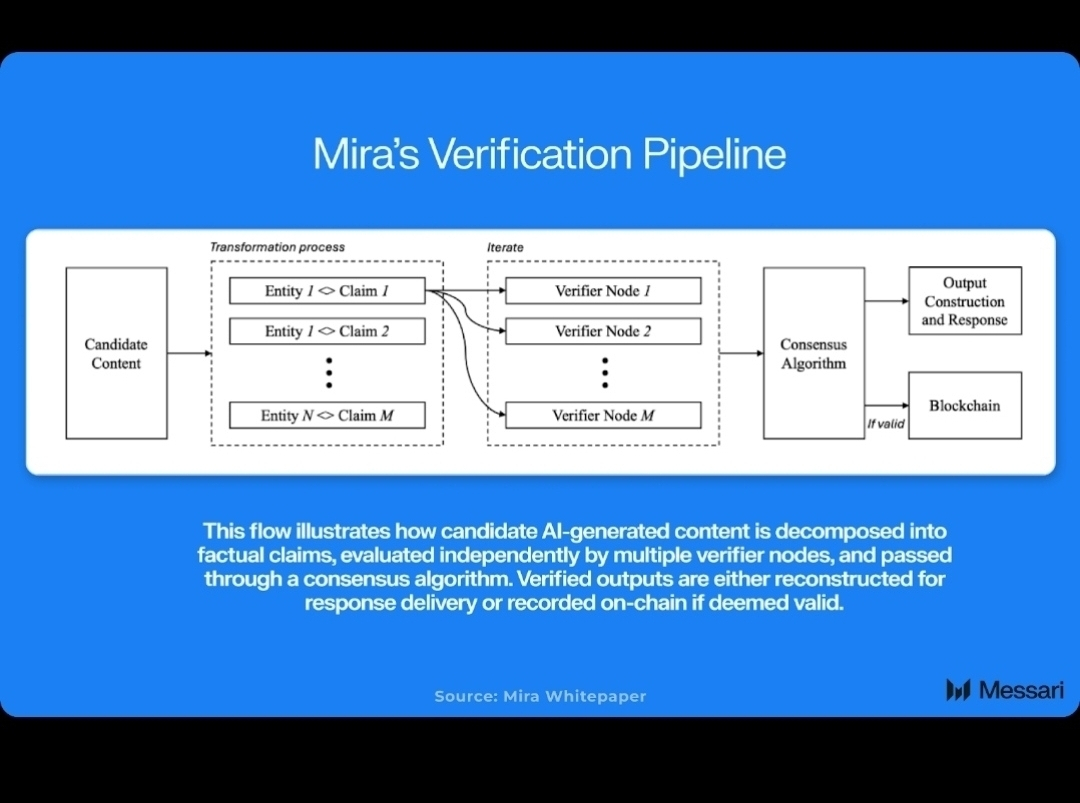

Instead of trusting a single AI model, Mira uses multiple independent validators and models to check the accuracy of AI-generated claims. The process works roughly like this:

AI generates a response

The response is broken into smaller factual claims

Independent validator nodes analyze those claims

A decentralized consensus determines whether they are accurate

Verified outputs receive a cryptographic proof of validity

This method creates a trustless verification system: the accuracy of AI results is checked by many independent parties, so users do not have to trust any single provider.
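The steps above can be sketched in a few lines of Python. This is only an illustration under simplifying assumptions, not Mira's actual protocol or API: `split_into_claims`, `verify_response`, the two-thirds quorum, and the idea of a validator as a plain function are all hypothetical, and the SHA-256 digest is merely a tamper-evident fingerprint standing in for a real cryptographic proof.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Callable, Dict, List

# Hypothetical validator interface: returns True if it judges the claim accurate.
# In a real network each validator would be an independent model or node.
Validator = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes_for: int
    votes_total: int
    verified: bool


def split_into_claims(response: str) -> List[str]:
    """Naive splitter: treats each sentence as one factual claim (an assumption)."""
    return [s.strip() for s in response.split(".") if s.strip()]


def verify_response(response: str, validators: List[Validator],
                    quorum_num: int = 2, quorum_den: int = 3) -> Dict:
    """Check every claim with every validator and apply a quorum rule."""
    if not validators:
        raise ValueError("at least one validator is required")
    results = []
    for claim in split_into_claims(response):
        votes_for = sum(1 for validate in validators if validate(claim))
        total = len(validators)
        # Integer comparison avoids floating-point edge cases at the threshold.
        verified = votes_for * quorum_den >= quorum_num * total
        results.append(ClaimResult(claim, votes_for, total, verified))
    # Stand-in for a proof: a digest of the verification record.
    record = json.dumps([asdict(r) for r in results], sort_keys=True)
    digest = hashlib.sha256(record.encode("utf-8")).hexdigest()
    return {"results": results, "digest": digest}


if __name__ == "__main__":
    known_facts = {"Paris is the capital of France"}
    honest = lambda claim: claim in known_facts
    gullible = lambda claim: True  # a faulty validator that accepts everything
    out = verify_response(
        "Paris is the capital of France. The Moon is made of cheese.",
        [honest, honest, gullible],
    )
    for r in out["results"]:
        print(r.claim, "->", "verified" if r.verified else "rejected")
    print("digest:", out["digest"])
```

In this toy run the faulty validator is outvoted on the second claim, which is the point of not relying on a single checker. A production system would also need signed votes and an on-chain record, which this sketch leaves out.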

Why Decentralized Verification Matters

Traditional AI platforms are centralized. Users must trust the company operating the model.

Mira changes this by introducing blockchain-based consensus and economic incentives. Validators stake tokens to verify claims, which encourages honest behavior because incorrect validations can result in penalties (source: unblockmedia.com).

This structure introduces:

Transparency – verification results are publicly auditable

Accountability – validators have economic incentives to be correct

Scalability – verification can occur across many nodes simultaneously
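To make the staking incentive in this section concrete, here is a minimal sketch of one verification round. Everything in it is an assumption for illustration: the `StakedValidator` class, the `settle_round` function, the stake-weighted majority rule, and the 10% penalty rate are hypothetical and are not taken from Mira's documentation.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass
class StakedValidator:
    name: str
    stake: float  # tokens locked as collateral (hypothetical unit)


def settle_round(validators: Dict[str, StakedValidator],
                 votes: Dict[str, bool],
                 slash_rate: float = 0.1) -> bool:
    """Decide one claim by stake-weighted majority, then penalize dissenters.

    Returns the consensus outcome (True = claim accepted).
    """
    total_stake = sum(validators[name].stake for name in votes)
    stake_for = sum(validators[name].stake for name, vote in votes.items() if vote)
    outcome = stake_for * 2 > total_stake  # strict stake-weighted majority
    for name, vote in votes.items():
        if vote != outcome:
            # Validators who voted against the consensus lose part of their stake.
            validators[name].stake *= (1 - slash_rate)
    return outcome


if __name__ == "__main__":
    pool = {
        "a": StakedValidator("a", 100.0),
        "b": StakedValidator("b", 100.0),
        "c": StakedValidator("c", 50.0),
    }
    accepted = settle_round(pool, {"a": True, "b": True, "c": False})
    print("accepted:", accepted)
    for v in pool.values():
        print(v.name, v.stake)
```

Note that this sketch penalizes disagreement with the consensus, which is only a proxy for being incorrect. The incentive works as intended only when most of the stake is held by honest, reasonably accurate validators.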

Improving Accuracy and Reducing AI Hallucinations

One of the most interesting aspects of Mira’s design is its potential impact on AI reliability.

By verifying claims through distributed consensus, the system aims to reduce hallucinations and improve factual accuracy. Some analyses suggest that verification layers like Mira’s could raise AI accuracy from roughly 70% to around 96% in certain applications.
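A short calculation shows why consensus can beat any single checker. It is an idealized model and not the method behind the figures quoted above: it assumes every validator is right with the same probability `p` and that their errors are fully independent, which real models only approximate. The function name `majority_accuracy` is made up for this example.

```python
from math import comb


def majority_accuracy(p: float, n: int) -> float:
    """Probability that a strict majority of n independent validators is correct,
    when each validator is correct with probability p. n must be odd."""
    if n % 2 == 0:
        raise ValueError("n must be odd to avoid ties")
    k_min = n // 2 + 1  # smallest number of correct votes that forms a majority
    return sum(comb(n, k) * p**k * (1 - p)**(n - k) for k in range(k_min, n + 1))


if __name__ == "__main__":
    for n in (1, 3, 5, 9, 15):
        print(n, round(majority_accuracy(0.7, n), 3))
```

With `p = 0.7`, a single validator is right 70% of the time, while a majority of three is right about 78.4% of the time, and the figure keeps rising as more independent validators are added. When validators share the same blind spots, their errors are correlated and the gain is smaller than this model predicts.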

For industries where accuracy is critical—such as finance, medicine, and legal analysis—this kind of improvement could be transformative.

Growing Adoption and Ecosystem Development

The network is already gaining traction. Reports indicate that Mira’s ecosystem has reached millions of users and processes billions of tokens daily (source: GlobeNewswire), which points to real demand for trusted AI infrastructure.

Mira is also collaborating with infrastructure providers and AI platforms to integrate verification into real-world applications.

The Bigger Vision: Trusted Autonomous AI

The long-term goal of Mira Network goes beyond simply checking AI answers.

The project aims to build the trust infrastructure that autonomous AI systems will rely on. As AI agents begin performing tasks independently—such as managing financial operations, running digital services, or coordinating machines—verification becomes essential.

Without a system that can prove whether AI outputs are correct, fully autonomous AI systems cannot safely operate at scale.