Artificial intelligence is rapidly transforming education. Platforms now use AI to generate learning material, create exams, and evaluate student responses at scale. While this automation brings speed and efficiency, it also introduces a serious challenge: trust. AI systems can generate answers that look confident and correct but may contain factual mistakes or misleading logic. In an educational environment where accuracy directly affects student outcomes, this risk becomes critical.

This is where Mira Network introduces a new approach. By building a verification layer for AI outputs, Mira provides a system that can evaluate and confirm whether AI-generated content is actually reliable. When integrated into educational platforms such as Learnrite, this technology significantly improves the integrity and scalability of digital testing systems.

The Growing Reliability Problem in AI Education Tools

AI models are powerful at generating information quickly. Educational platforms often use them to produce practice questions, exam papers, and explanations, and to power automated grading. However, these models operate probabilistically: they predict likely answers based on patterns in their training data rather than verifying facts in real time.

This leads to several risks in education systems:

AI may generate incorrect facts that appear convincing

Questions may contain logical inconsistencies

Automated grading may misinterpret student answers

Content quality may vary across subjects and difficulty levels

For platforms serving thousands of students, even small inaccuracies can damage credibility. Educational institutions require testing systems that are accurate, consistent, and verifiable.

How Mira Network Adds a Verification Layer to AI

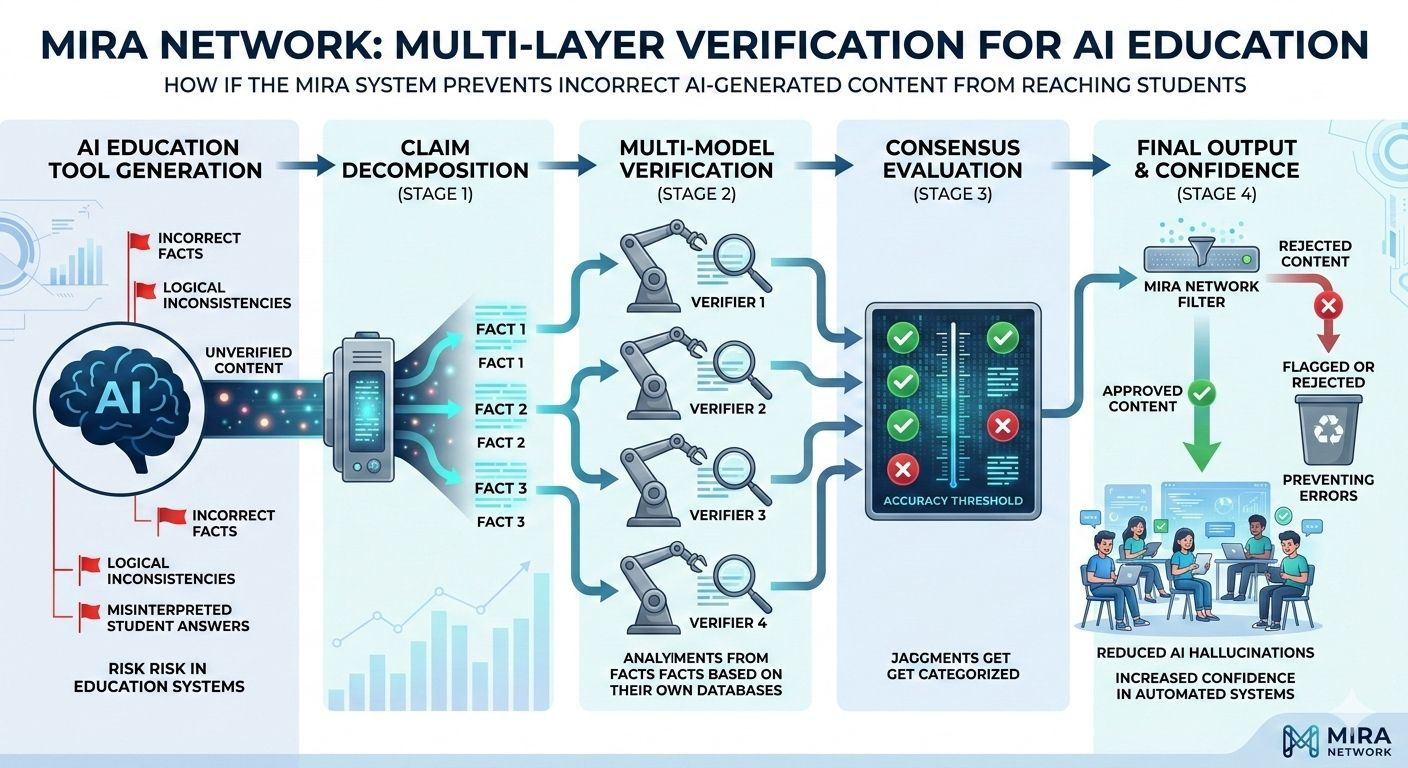

Instead of relying on a single AI model’s output, Mira introduces a multi-layer verification framework designed to check whether AI-generated claims are correct before they are used.

The core idea is simple but powerful: every AI output should be verified before it is trusted.

The system works through several stages.

Claim Decomposition

When an AI model generates a response, Mira breaks the output into smaller factual components. For example, if a question or explanation contains several facts, each statement becomes an independent claim that can be analyzed separately.

This allows the system to evaluate the reliability of specific pieces of information rather than treating the entire output as one unit.
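The article does not describe how Mira performs decomposition. As a rough illustration only, the sketch below treats each sentence of an output as one claim. The `Claim` type and `decompose` function are hypothetical, and regex sentence splitting is a stand-in for whatever method Mira actually uses.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One independently checkable statement extracted from an AI output."""
    claim_id: int
    text: str


def decompose(output: str) -> list[Claim]:
    """Split an AI output into sentence-level claims.

    Stand-in only: splits on whitespace that follows sentence-ending
    punctuation. A real decomposer would also need to handle abbreviations,
    compound sentences, and pronouns that refer to earlier sentences.
    """
    sentences = re.split(r"(?<=[.!?])\s+", output.strip())
    return [Claim(i, s) for i, s in enumerate(sentences) if s]


explanation = (
    "Water boils at 100 degrees Celsius at sea level. "
    "It freezes at 0 degrees Celsius. "
    "Its chemical formula is H2O."
)
for claim in decompose(explanation):
    print(claim.claim_id, claim.text)
```

The second claim still begins with "It". A production decomposer would need to rewrite such references, for example into "Water freezes at 0 degrees Celsius.", so that each claim can be checked without its neighbours.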

Multi-Model Verification

Once claims are extracted, they are distributed to multiple independent verification models. Each model evaluates the claims based on its own training data and reasoning capabilities.

Instead of trusting one AI system, the network gathers multiple perspectives on the same claim.
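The fan-out step can be pictured as sending the same claim to several verifiers at once and keeping every answer. This is a minimal sketch under stated assumptions. The `Verifier` interface and `collect_verdicts` are hypothetical, and the lambda "models" are stubs, not real verification models.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

# Hypothetical interface: a verifier takes one claim and returns True if it
# judges the claim correct. In a real deployment each would wrap a different model.
Verifier = Callable[[str], bool]


def collect_verdicts(claim: str, verifiers: dict[str, Verifier]) -> dict[str, bool]:
    """Send the same claim to every verifier concurrently and gather each verdict."""
    if not verifiers:
        return {}
    with ThreadPoolExecutor(max_workers=len(verifiers)) as pool:
        futures = {name: pool.submit(check, claim) for name, check in verifiers.items()}
        return {name: future.result() for name, future in futures.items()}


# Stub verifiers standing in for independent models.
KNOWN_FACTS = {"Water boils at 100 degrees Celsius at sea level."}
verifiers = {
    "model_a": lambda c: c in KNOWN_FACTS,
    "model_b": lambda c: c in KNOWN_FACTS,
    "model_c": lambda c: True,  # an overly lenient verifier
}

print(collect_verdicts("Water boils at 50 degrees Celsius at sea level.", verifiers))
# {'model_a': False, 'model_b': False, 'model_c': True}
```

Each verdict is kept separately, so a later consensus step can see disagreement rather than a single yes or no. Here, `model_c` disagrees with the other two.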

Consensus Evaluation

After verification models evaluate the claims, Mira aggregates their responses. A consensus mechanism determines whether a claim meets the accuracy threshold required for approval.

If verification fails, the content is flagged or rejected before reaching the final platform.
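The article says a consensus mechanism applies an accuracy threshold but does not specify the rule. The sketch below assumes a simple supermajority vote: a claim is approved when at least two thirds of verifiers accept it, and an output is approved only if all of its claims are. `Decision`, `evaluate_consensus`, `evaluate_output`, and the two-thirds threshold are illustrative assumptions, not Mira's documented parameters.

```python
from enum import Enum
from fractions import Fraction


class Decision(Enum):
    APPROVED = "approved"
    FLAGGED = "flagged"


# Illustrative two-thirds supermajority; not a documented Mira parameter.
DEFAULT_THRESHOLD = Fraction(2, 3)


def evaluate_consensus(verdicts: list[bool], threshold: Fraction = DEFAULT_THRESHOLD) -> Decision:
    """Approve one claim only if the share of accepting verifiers meets the threshold.

    Fraction keeps the comparison exact (2 of 3 equals 2/3, with no float rounding).
    A claim that no verifier has checked is flagged rather than approved.
    """
    if not verdicts:
        return Decision.FLAGGED
    agreement = Fraction(sum(verdicts), len(verdicts))
    return Decision.APPROVED if agreement >= threshold else Decision.FLAGGED


def evaluate_output(verdicts_per_claim: list[list[bool]],
                    threshold: Fraction = DEFAULT_THRESHOLD) -> Decision:
    """Approve a whole output only if every one of its claims reaches consensus."""
    if not verdicts_per_claim:
        return Decision.FLAGGED
    if all(evaluate_consensus(v, threshold) is Decision.APPROVED for v in verdicts_per_claim):
        return Decision.APPROVED
    return Decision.FLAGGED


print(evaluate_consensus([True, True, False]).value)   # approved
print(evaluate_consensus([True, False, False]).value)  # flagged
print(evaluate_output([[True, True, True], [True, False, False]]).value)  # flagged
```

Requiring every claim to pass means a single failed claim flags the whole question. This matches the behaviour described above, where failed content is flagged or rejected before it reaches the platform.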

This approach reduces the chance that AI hallucinations reach users and improves confidence in automated systems.

Learnrite’s Challenge Before Integration

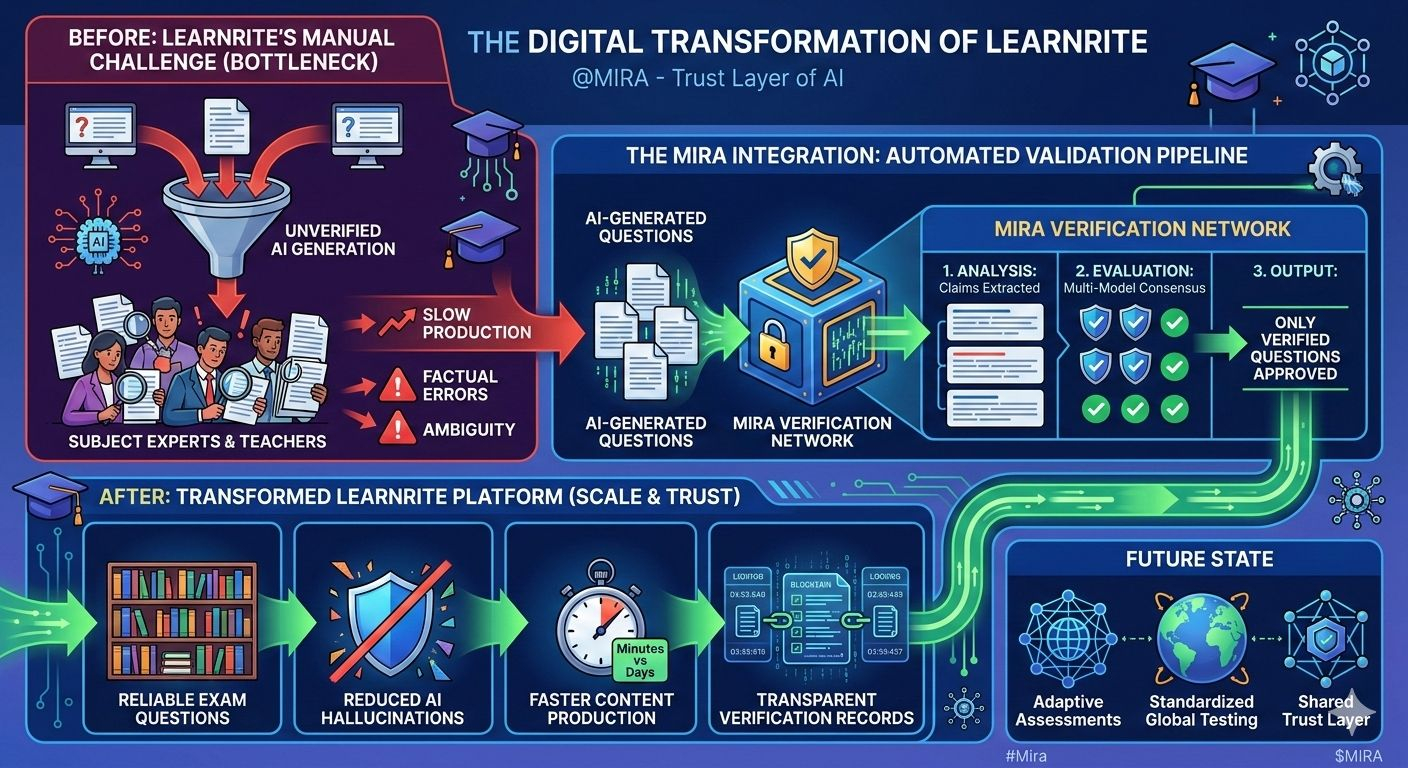

Before integrating Mira’s technology, Learnrite relied on AI to generate educational testing material. While this allowed the platform to scale rapidly, it also created operational challenges.

First, the platform required human reviewers to verify many AI-generated questions. Teachers and subject experts had to check content manually before it could be used in exams, which slowed down content production.

Second, AI-generated questions sometimes contained minor factual errors or ambiguous wording that required revision. Even when such errors were rare, they created uncertainty about relying entirely on automated systems.

Finally, scaling the platform to serve more institutions became difficult because manual verification could not keep up with the speed of AI generation.

Learnrite needed a way to maintain AI efficiency while ensuring strict educational standards.

Integration of Mira Verification Technology

The integration of Mira into Learnrite’s system transformed the platform’s workflow.

Instead of sending AI-generated questions directly to human reviewers, the platform now passes the content through Mira’s verification network first.

The process now works like this:

AI models generate test questions and answers across different subjects

Mira analyzes the content and breaks it into verifiable claims

Verification models evaluate each claim independently

Only questions that pass verification are approved for use in exams

This creates an automated validation pipeline that maintains accuracy while dramatically improving efficiency.
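The four steps above can be sketched end to end as a filter over generated questions. This is a simplified illustration, not Learnrite's or Mira's code. Several parts are hypothetical:

- the `Question` type and `filter_questions`;
- the sentence-level claim splitting;
- the choice to verify only the answer explanation;
- the stub verifiers.

Question generation (step one) is represented by a hard-coded list.

```python
import re
from dataclasses import dataclass
from typing import Callable

Verifier = Callable[[str], bool]  # hypothetical: True means "claim judged correct"


@dataclass
class Question:
    prompt: str
    answer_explanation: str  # the factual content that gets verified


def split_claims(text: str) -> list[str]:
    """Step 2 (simplified): treat each sentence as one verifiable claim."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def claim_passes(claim: str, verifiers: list[Verifier], min_agree: int) -> bool:
    """Step 3: every verifier checks the claim; enough agreement lets it pass."""
    return sum(check(claim) for check in verifiers) >= min_agree


def filter_questions(
    questions: list[Question], verifiers: list[Verifier], min_agree: int
) -> tuple[list[Question], list[Question]]:
    """Step 4: return (approved, flagged); a question needs every claim to pass."""
    approved: list[Question] = []
    flagged: list[Question] = []
    for question in questions:
        claims = split_claims(question.answer_explanation)
        ok = bool(claims) and all(claim_passes(c, verifiers, min_agree) for c in claims)
        (approved if ok else flagged).append(question)
    return approved, flagged


# Step 1 stand-in: pretend these came from a question-generating model.
generated = [
    Question("What is the capital of France?",
             "The capital of France is Paris. Paris lies on the Seine."),
    Question("What is the capital of Australia?",
             "The capital of Australia is Sydney."),
]
TRUE_STATEMENTS = {"The capital of France is Paris.", "Paris lies on the Seine."}
verifiers = [
    lambda c: c in TRUE_STATEMENTS,
    lambda c: c in TRUE_STATEMENTS,
    lambda c: True,  # a lenient verifier that approves everything
]

approved, flagged = filter_questions(generated, verifiers, min_agree=2)
print([q.prompt for q in approved])  # ['What is the capital of France?']
print([q.prompt for q in flagged])   # ['What is the capital of Australia?']
```

Flagged questions are not discarded in this sketch. They could be routed to the human reviewers described earlier, so manual effort goes only to content the verifiers rejected.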

Major Improvements in the Learnrite Platform

Reliable AI Generated Exam Questions

With verification in place, Learnrite can confidently expand its question database. The platform can generate thousands of new questions while maintaining academic reliability. This reduces repetition in tests and provides students with more diverse assessments.

Reduced AI Hallucinations

AI hallucinations occur when models produce confident but incorrect information. Mira’s consensus verification significantly reduces these errors by requiring multiple models to validate claims before approval. This makes automated testing far more dependable.
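An idealized calculation shows why requiring agreement from several models can reduce errors. Assume, unrealistically, that each verifier independently misjudges a claim with the same probability p. The chance that a strict majority of n verifiers is wrong is then the upper tail of a binomial distribution. The function name is hypothetical, and the numbers are illustrative rather than measurements of Mira.

```python
from math import comb


def majority_error_probability(n: int, p: float) -> float:
    """Probability that a strict majority of n verifiers are wrong.

    Assumes each verifier errs independently with the same probability p.
    """
    needed = n // 2 + 1  # smallest strict majority
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(needed, n + 1))


for n in (1, 3, 5):
    print(n, round(majority_error_probability(n, 0.1), 4))
# 1 0.1
# 3 0.028
# 5 0.0086
```

Under these assumptions, with a 10% per-verifier error rate, three voters cut the majority-error rate from 10% to 2.8%. Real models often share training data and blind spots, so their mistakes are correlated and actual gains will be smaller than this idealized figure.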

Faster Content Production

Previously, generating high-quality exam material required a slow cycle of AI generation followed by human review. With Mira verifying content automatically, this process becomes much faster. Entire question banks can now be created in minutes rather than days.

Improved Academic Standards

Because verification models check factual accuracy and logical consistency, the platform maintains stronger academic standards across subjects. This helps keep exam questions aligned with educational expectations and curriculum requirements.

Transparent Verification Records

Another important benefit is transparency. Verification logs allow Learnrite to demonstrate that every question was validated before deployment. This builds trust with schools, educators, and students.
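The article does not say what Learnrite's verification logs contain or where they are stored. This minimal sketch assumes an append-only JSON Lines file with hypothetical field names. Each record stores the per-claim verdicts plus a SHA-256 fingerprint of the verified text, so an auditor can later confirm that a deployed question is exactly the version that passed verification. `VerificationRecord`, `make_record`, `append_to_log`, and `matches_record` are all illustrative names.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class VerificationRecord:
    question_id: str
    content_sha256: str                    # fingerprint of the exact text verified
    claim_verdicts: dict[str, list[bool]]  # claim -> verdict from each verifier
    approved: bool
    verified_at: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat())


def make_record(question_id: str, content: str,
                claim_verdicts: dict[str, list[bool]], approved: bool) -> VerificationRecord:
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    return VerificationRecord(question_id, digest, claim_verdicts, approved)


def append_to_log(path: str, record: VerificationRecord) -> None:
    """Append one record as a single JSON line; earlier lines are never rewritten."""
    with open(path, "a", encoding="utf-8") as log:
        log.write(json.dumps(asdict(record)) + "\n")


def matches_record(content: str, record: VerificationRecord) -> bool:
    """Check that deployed content is exactly the text that was verified."""
    return hashlib.sha256(content.encode("utf-8")).hexdigest() == record.content_sha256


content = "The capital of France is Paris."
record = make_record("q-001", content, {content: [True, True, True]}, approved=True)
print(matches_record(content, record))                            # True
print(matches_record("The capital of France is Lyon.", record))  # False
```

Persisting the record is a single call such as `append_to_log("verification_log.jsonl", record)`. Any later edit to a question changes its hash, so that edit shows up as a mismatch against the log.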

Why Verified AI Matters for Education

Education is one of the most sensitive environments for AI deployment. Testing systems influence grades, academic progress, and professional certifications. Any inaccuracies in exam material can have serious consequences.

As AI becomes more integrated into digital learning systems, verification layers will likely become essential infrastructure. Platforms must ensure that automation does not compromise reliability.

By combining AI generation with decentralized verification, Mira introduces a model where speed and trust can coexist.

The Future of Verified AI Learning Platforms

The success of the Learnrite integration highlights how verification networks could shape the future of education technology.

Fully automated exam systems could generate, verify, and grade exams without manual intervention.

Adaptive learning assessments could allow AI to create personalized tests for each student while maintaining verified accuracy.

Global digital testing platforms could use verified AI systems to support standardized assessments across countries and institutions.

Shared verification infrastructure may allow multiple educational platforms to rely on networks like Mira as a common trust layer.

Conclusion

The integration between Mira Network and Learnrite demonstrates a significant shift in how AI systems can be deployed responsibly.

Instead of relying on raw AI outputs, platforms can now implement verification frameworks that ensure accuracy before information reaches users. For education technology, where trust and reliability are essential, this model represents a major step forward.

As AI continues to scale across industries, verification technologies like Mira may become a foundational layer that keeps intelligent systems reliable, accountable, and trustworthy.