There is a subtle problem emerging at the intersection of robotics, artificial intelligence, and distributed systems.

It rarely appears in headlines.

Most discussions about robotics focus on intelligence—how quickly models improve, how well machines learn from data, or how convincingly robots mimic human behavior. The public narrative revolves around capability. More sensors. Better models. Faster learning loops.

But capability is not the hardest problem.

Coordination is.

Once machines move beyond controlled environments, the challenge shifts dramatically. A robot operating in a laboratory is one thing. A robot operating in a shared physical world—factories, hospitals, logistics networks, homes—is something else entirely.

Suddenly the system must answer uncomfortable questions.

Who verifies what the robot is doing?

Who updates its operational rules?

Who is responsible when systems interact?

And perhaps most importantly—who coordinates the infrastructure that governs all of this?

The robotics industry tends to approach these questions through centralized control systems. Manufacturers manage firmware. Cloud services coordinate updates. Platforms maintain operational rules.

This works—up to a point.

But centralized coordination creates fragility.

Robotic ecosystems are becoming increasingly complex. Machines from different manufacturers interact. Software agents exchange data. Autonomous systems make decisions in environments that no single entity fully controls.

At that scale, coordination becomes an infrastructure problem.

And infrastructure problems rarely have simple solutions.

The traditional architecture of robotics systems evolved in a relatively controlled world.

Industrial robots lived inside factories.

Autonomous systems operated within fixed boundaries.

Updates were slow, deliberate, and tightly managed.

That environment allowed centralized governance to function reasonably well.

But the landscape is changing.

Robots are gradually moving into open environments. Delivery networks. Public spaces. Shared industrial systems. Autonomous inspection systems. Distributed logistics networks.

In these environments, machines interact not just with their owners—but with each other.

That changes the dynamics of the entire system.

The first problem is verification.

Robots increasingly rely on AI systems to interpret environments and make decisions. These systems are probabilistic by nature. They make predictions, not guarantees.

In controlled settings, occasional errors are manageable.

In open systems, they become systemic risk.

The second problem is coordination.

When multiple autonomous machines operate in shared spaces, their behavior must align with common rules. Safety constraints. Regulatory boundaries. Resource allocation. Data sharing.

Centralized coordination struggles here.

No single organization can realistically govern a global network of autonomous machines from different vendors operating across jurisdictions.

The third problem is incentive alignment.

Robotics platforms today are largely controlled by manufacturers or cloud providers. Their incentives often center on platform control rather than ecosystem neutrality.

Participants in the network—operators, developers, data providers—may not trust a single centralized authority to coordinate critical infrastructure.

This leads to fragmentation.

And fragmentation introduces risk.

Fabric Protocol appears to emerge from this tension.

At its core, the project attempts to rethink robotics infrastructure from a network perspective rather than a platform perspective.

Instead of assuming that robots will be coordinated by centralized services, the protocol explores the idea of an open coordination layer for machines.

A public infrastructure layer.

The core concept is relatively simple, though the implications are complex.

Fabric Protocol proposes a system where data, computation, and governance for robotic systems are coordinated through a distributed ledger combined with verifiable computing.

In other words, it treats robotic coordination as a shared infrastructure problem rather than a proprietary platform problem.

The system is designed to support what the protocol describes as “agent-native infrastructure.” In this context, “agents” means autonomous systems: robots, software agents, or hybrid AI systems that interact with the physical world.

Instead of relying on centralized services to validate robot behavior, the network attempts to create mechanisms where actions, data, and computational outputs can be verified across distributed participants.

The ambition is not merely to operate robots.

It is to coordinate them.

And that is a fundamentally different problem.

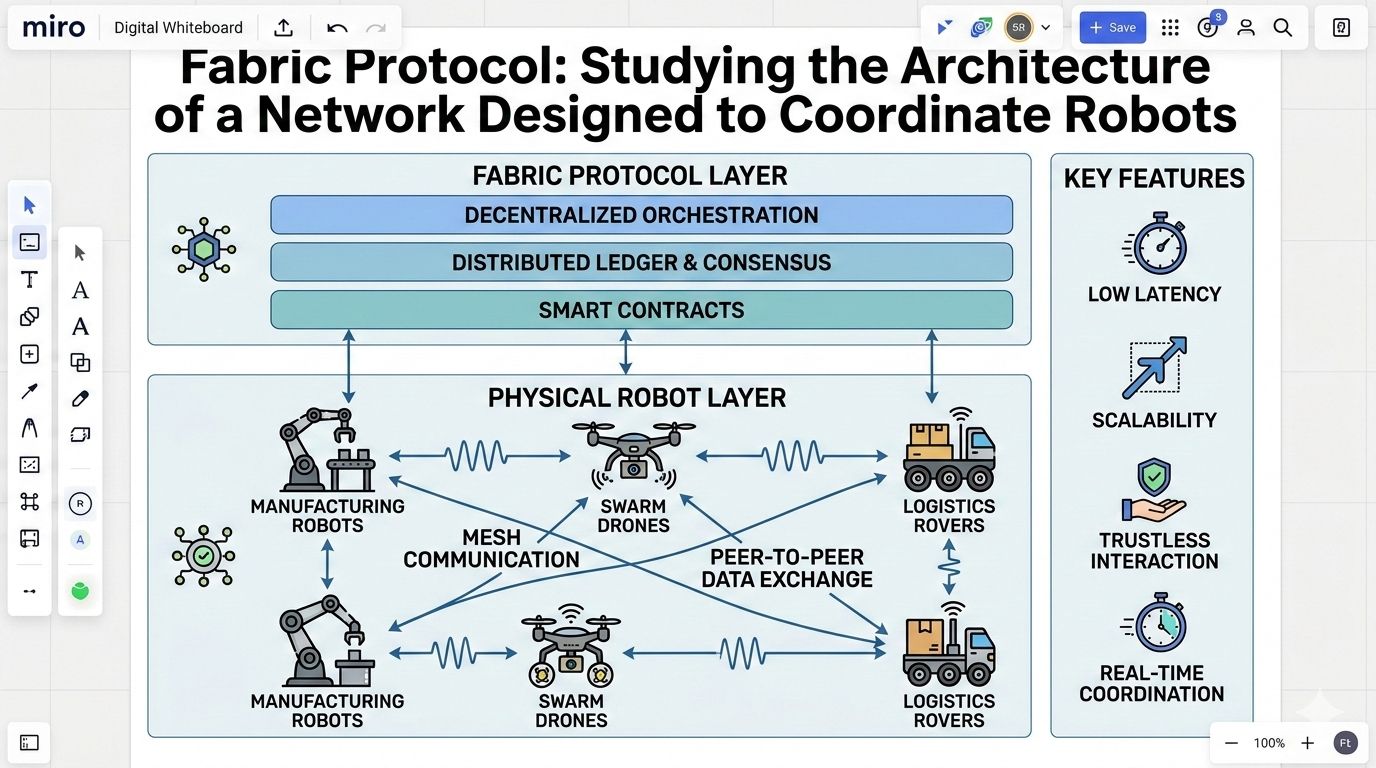

At a structural level, Fabric Protocol combines several architectural components that operate together as a coordination system.

The first layer is the public ledger.

This layer acts as a shared coordination substrate. It records system state, governance decisions, and, potentially, critical operational events within the network. Rather than acting purely as a financial ledger, it functions as a coordination registry for machine agents and infrastructure modules.

The ledger’s primary role is to provide transparency and shared reference points.

Not speed.

That distinction matters.
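To make the "shared reference point" idea concrete, here is a minimal sketch of an append-only, hash-chained registry. It is not Fabric Protocol's ledger: the class name `CoordinationLedger`, the event fields, and the single-process design are all assumptions made for illustration. It only shows why a chained log makes after-the-fact edits detectable.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class CoordinationLedger:
    """Toy append-only log (hypothetical): each entry commits to the previous hash."""
    entries: list = field(default_factory=list)

    @staticmethod
    def _digest(prev_hash: str, payload: dict) -> str:
        # Serialize deterministically so the same content always hashes the same way.
        body = json.dumps({"prev": prev_hash, "payload": payload}, sort_keys=True)
        return hashlib.sha256(body.encode("utf-8")).hexdigest()

    def append(self, payload: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        entry_hash = self._digest(prev_hash, payload)
        self.entries.append({"prev": prev_hash, "payload": payload, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        # Recompute every hash from the start; any edited entry breaks the chain.
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["payload"]):
                return False
            prev_hash = entry["hash"]
        return True


ledger = CoordinationLedger()
ledger.append({"type": "agent_registered", "agent_id": "robot-7"})
ledger.append({"type": "rule_updated", "rule": "max_speed_mps", "value": 1.5})
intact = ledger.verify()

# Rewriting history after the fact is detectable by anyone who re-verifies.
ledger.entries[0]["payload"]["agent_id"] = "robot-9"
tampered_detected = not ledger.verify()
```

Nothing here is fast, and nothing needs to be. The value is that every participant can recompute the same chain and arrive at the same answer about what was recorded.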

The second layer involves verifiable computation.

Robotic systems generate enormous amounts of data and make a constant stream of decisions. Fabric’s architecture attempts to introduce verification mechanisms so that computational outputs, particularly those produced by AI systems, can be validated independently.

In practice, this might involve techniques such as cryptographic proofs, distributed validation of machine outputs, or consensus-based verification of system behavior.

The goal is to reduce reliance on trust in a single computational authority.
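One of the techniques mentioned above, distributed validation of machine outputs, can be sketched as a simple quorum check. This is an illustrative assumption, not the protocol's documented mechanism: the function name `accepted_output`, the two-thirds threshold, and the idea that validators report directly comparable values are all hypothetical.

```python
from collections import Counter
from fractions import Fraction
from typing import Hashable, Optional, Sequence


def accepted_output(
    reports: Sequence[Hashable],
    threshold: Fraction = Fraction(2, 3),  # assumed quorum, not a protocol constant
) -> Optional[Hashable]:
    """Return the value that at least `threshold` of validators agree on, else None."""
    if not reports:
        return None
    value, votes = Counter(reports).most_common(1)[0]
    # Exact rational comparison avoids floating-point surprises at the boundary.
    if Fraction(votes, len(reports)) >= threshold:
        return value
    return None


# Three of four validators recomputed the same result digest: accepted.
agreed = accepted_output(["digest-a", "digest-a", "digest-a", "digest-b"])

# No value reaches the quorum: the output stays unverified.
disputed = accepted_output(["digest-a", "digest-b", "digest-c"])
```

Exact agreement is a strong assumption for probabilistic AI outputs. A real system would likely need tolerance bands or cryptographic proofs instead of equality on raw values.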

The third layer is modular infrastructure.

Instead of building a monolithic robotics platform, Fabric Protocol appears to encourage modular system components that can plug into the network. These modules might include data services, compute providers, regulatory frameworks, or agent coordination services.

Each component operates within shared verification and governance rules.

In theory, this allows different participants to contribute infrastructure while maintaining interoperability.

Finally, governance mechanisms sit on top of the system.

Because robotics interacts directly with the physical world, governance becomes unavoidable. Operational rules, safety constraints, and protocol upgrades must be managed through coordinated decision-making.

Fabric’s design appears to delegate this process to network governance structures rather than centralized organizations.

Whether this approach can scale effectively is still an open question.

Every distributed protocol ultimately depends on incentives.

Technical architecture matters.

But incentive design determines whether a network actually functions.

Fabric Protocol introduces multiple participant roles within its ecosystem.

There are infrastructure operators who provide computational resources or data services. There are developers building robotic agents and applications. There are governance participants who influence protocol evolution.

Each participant interacts with the network through economic incentives embedded in the protocol.

In theory, these incentives encourage honest participation.

Verification participants are rewarded for validating system outputs. Infrastructure providers earn compensation for contributing resources. Developers benefit from building applications on shared infrastructure rather than isolated systems.

But incentive systems always contain edge cases.

For example, verification networks rely on the assumption that participants behave honestly because dishonest behavior is economically penalized.

That assumption works well in purely digital systems.
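The assumption can be written down as simple expected-value arithmetic. The sketch below assumes a stake-and-penalty model in which a dishonest participant keeps some gain if undetected and loses a deposit if caught. The names `gain`, `stake`, and `detection_prob` are illustrative, and nothing here is taken from Fabric Protocol's actual token design.

```python
def cheating_payoff(gain: float, stake: float, detection_prob: float) -> float:
    """Expected payoff of one dishonest action under the assumed model."""
    return (1 - detection_prob) * gain - detection_prob * stake


def min_deterrent_stake(gain: float, detection_prob: float) -> float:
    """Stake at which cheating breaks even; anything larger makes it unprofitable."""
    if not 0 < detection_prob <= 1:
        raise ValueError("detection_prob must be in (0, 1]")
    return gain * (1 - detection_prob) / detection_prob


# With a 50% chance of being caught, a stake of 50 does not deter a gain of 100.
payoff_small_stake = cheating_payoff(gain=100, stake=50, detection_prob=0.5)

# The break-even stake for the same gain and detection rate.
break_even = min_deterrent_stake(gain=100, detection_prob=0.5)
```

The model only works if `gain` and the penalty live in the same currency and the harm is fully captured by the numbers, which is exactly what holds in purely digital systems.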

Robotics introduces additional complexity.

Physical-world interactions create consequences that cannot always be easily encoded into cryptographic incentives. A robot malfunctioning in a warehouse is not merely a network event—it is a real-world operational failure.

This raises difficult questions about liability and responsibility.

Who is accountable when decentralized infrastructure coordinates machines that operate in the physical world?

Distributed protocols often avoid these questions.

Robotics cannot.

Every infrastructure design looks coherent on paper.

Reality tends to expose its weak points.

One immediate challenge for Fabric Protocol is adoption.

Robotics ecosystems are heavily influenced by hardware manufacturers and large industrial integrators. These players already operate complex supply chains and software stacks.

Convincing them to integrate with a new open coordination layer will likely take time.

Possibly a long time.

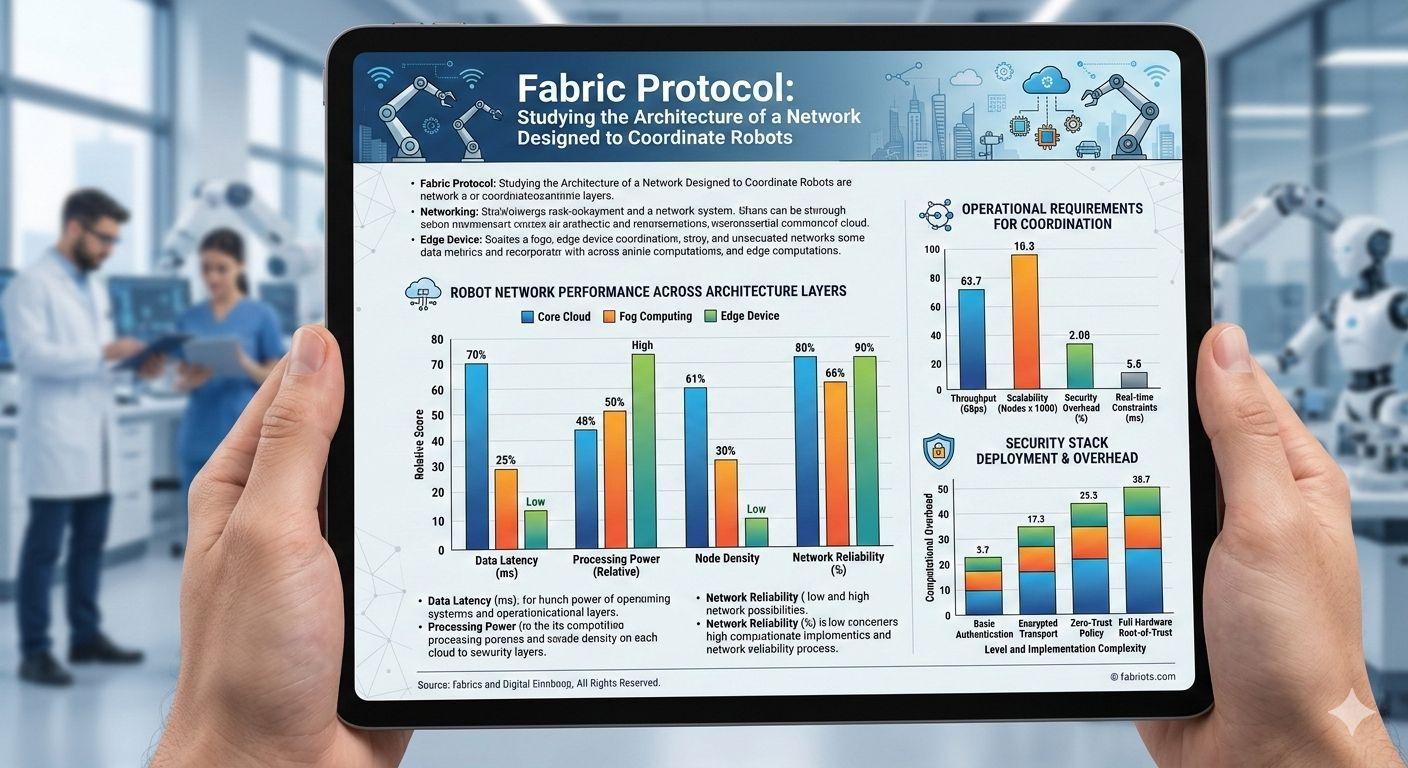

Another challenge involves performance constraints.

Distributed ledgers are not known for high-speed transaction throughput. Robotics systems often require real-time responsiveness. If coordination layers introduce latency, system designers may simply bypass them.

The protocol therefore faces a delicate balancing act.

It must provide verification and coordination benefits without slowing down critical machine operations.
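One common way to strike that balance is to keep the ledger off the control loop's critical path: record events locally in constant time and submit them in batches later. The sketch below assumes that pattern. `DeferredAuditLog` and the `submit_batch` callback are hypothetical names, and the source does not say whether Fabric Protocol works this way.

```python
from collections import deque
from typing import Callable, Deque, List


class DeferredAuditLog:
    """Buffers events locally so the control loop never waits on the network."""

    def __init__(self, submit_batch: Callable[[List[dict]], None], max_batch: int = 100):
        self._pending: Deque[dict] = deque()
        self._submit_batch = submit_batch  # stand-in for a slow ledger write
        self._max_batch = max_batch

    def record(self, event: dict) -> None:
        # Called from the real-time loop: an O(1) append with no I/O.
        self._pending.append(event)

    def flush(self) -> int:
        # Called from a background task: drains at most one batch per call.
        count = min(len(self._pending), self._max_batch)
        batch = [self._pending.popleft() for _ in range(count)]
        if batch:
            self._submit_batch(batch)
        return count


submitted: List[List[dict]] = []
log = DeferredAuditLog(submit_batch=submitted.append, max_batch=2)

for step in range(3):
    log.record({"step": step, "action": "move"})

first_flush = log.flush()   # drains two events
second_flush = log.flush()  # drains the remaining one
```

The trade-off is that verification becomes after-the-fact. The network can audit what a machine did, but it cannot veto an action before it happens.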

There are also governance risks.

Decentralized governance works best when participants share relatively aligned interests. Robotics ecosystems involve many competing stakeholders—manufacturers, regulators, operators, and developers.

Achieving stable governance across these groups could be difficult.

Finally, there is the question of regulatory interaction.

Autonomous machines operate under national safety regulations. A global coordination network must coexist with fragmented regulatory frameworks.

This is not merely a technical challenge.

It is a political one.

Despite the uncertainties, the underlying idea behind Fabric Protocol touches on something important.

Robotics is gradually evolving from isolated machines into networked systems.

And networked systems require coordination infrastructure.

Historically, coordination infrastructure tends to emerge slowly and quietly. The internet itself did not begin as a fully formed global network. It evolved through layered protocols that gradually aligned incentives across participants.

Robotics may be approaching a similar phase.

If autonomous systems become widespread, shared infrastructure for verification, coordination, and governance will eventually become necessary.

The open question is what form that infrastructure will take.

It could emerge through centralized platforms operated by large technology companies.

Or it could emerge through decentralized protocols.

Fabric Protocol appears to be exploring the latter path.

Whether it succeeds is less important than the direction it represents.

It reflects a growing recognition that robotics is not just an engineering problem.

It is a coordination problem.

When new technological systems appear, the early conversation usually focuses on what they promise to do.

Faster machines. Smarter models. Larger networks.

But infrastructure rarely proves itself through promises.

It proves itself through behavior under stress.

Distributed systems, in particular, reveal their strengths and weaknesses only after they interact with unpredictable human incentives, economic pressures, and real-world complexity.

Fabric Protocol presents an interesting architectural hypothesis.

That robots might one day coordinate through shared networks rather than isolated platforms.

It is a compelling idea.

But ideas are the easy part.

The real question is how such a system behaves once thousands—or millions—of autonomous agents begin interacting inside it.

Because at that point, the theory ends.

And the system becomes real.
