The current public fascination with AI is largely confined to a narrow rectangle—the chat interface. We have spent the last two years treating Large Language Models (LLMs) like digital librarians or creative assistants. However, in the institutional and decentralized finance sectors, the conversation has already pivoted toward something far more consequential: Autonomous On-Chain Agents. These are not tools that talk; they are systems that act. They manage complex portfolios, execute intricate cross-chain swaps, and participate in high-stakes DAO governance. But as these agents move from merely "suggesting" content to actively "executing" transactions, we face a terrifying realization: we are handing the keys to our global financial infrastructure to black-box models that are notoriously prone to hallucination and logic gaps.

This is where Mira Network shifts the paradigm of the AI-Web3 intersection. Mira is not building the agents themselves; it is building the decentralized judiciary that governs them. If an AI agent is a high-speed vehicle racing down the blockchain highway, Mira is the set of guardrails and the incorruptible traffic court that checks that every move is legal, verified, and backed by economic reality. Without a robust verification layer, an autonomous agent is a high-risk liability. With Mira's infrastructure, it can become a trusted fiduciary, able to manage institutional-grade assets with every action backed by a cryptographically verifiable record.

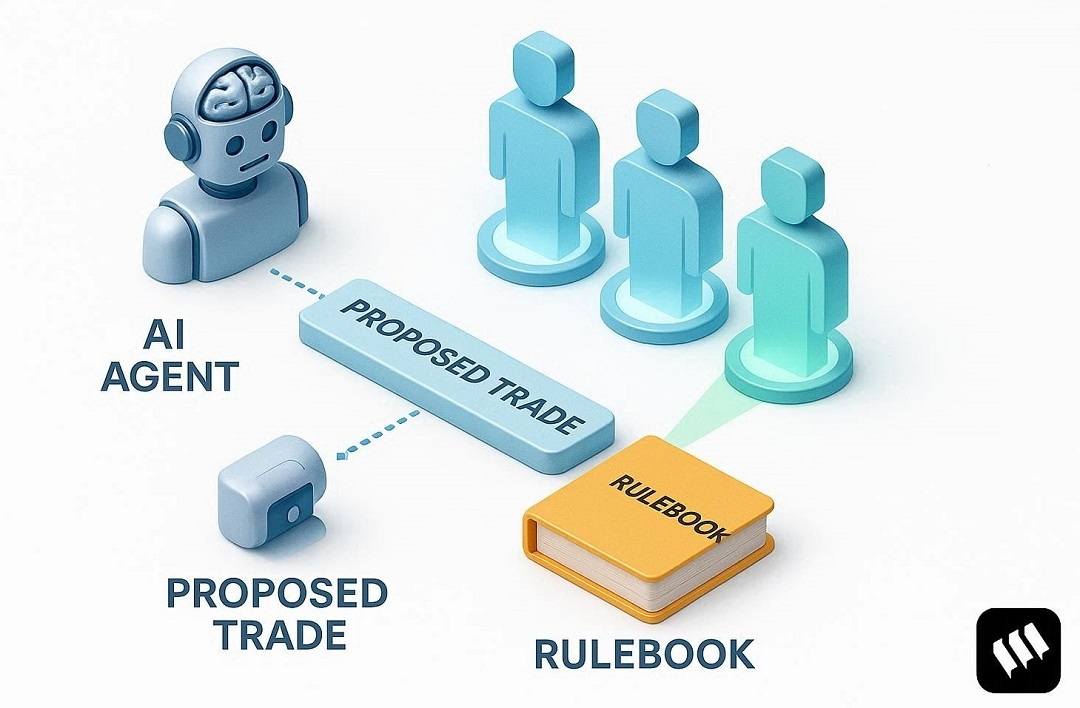

The transition to a truly agentic economy requires a shift from "optimistic execution" to "verifiable execution". In traditional Decentralized Finance (DeFi), we trust the code of a smart contract because it is static, transparent, and auditable. AI agents, by contrast, are dynamic, probabilistic, and hard to predict. Mira closes this trust gap by requiring agents to submit their proposed actions to the decentralized network for consensus before they are finalized. Using the Mira-20 blockchain's PoSA (Proof-of-Stake-Authority) consensus model, the network ensures that no single model or entity has the final word. Instead, a diverse, geographically distributed group of validators, incentivized by the $MIRA token, must agree that the agent's proposed output or transaction is valid, accurate, and consistent with the user's intended parameters.
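To make the "verify before you execute" idea concrete, here is a minimal sketch of a stake-weighted approval gate. It is an illustration only, not Mira's actual protocol or API: the `Vote` type, the two-thirds threshold, and the function names are all assumptions introduced for this example.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Sequence


@dataclass(frozen=True)
class Vote:
    """One validator's verdict on a proposed agent action (hypothetical type)."""
    validator: str
    stake: float   # assumed stake weight; the real weighting scheme is not specified here
    approve: bool


def reaches_quorum(votes: Sequence[Vote], threshold: float = 2 / 3) -> bool:
    """Return True when approving stake strictly exceeds `threshold` of total stake.

    The two-thirds default is an assumption chosen for illustration.
    """
    total = sum(v.stake for v in votes)
    if total <= 0:
        return False  # no stake voted, so nothing can be approved
    approving = sum(v.stake for v in votes if v.approve)
    return approving / total > threshold


def execute_if_verified(action: dict,
                        votes: Sequence[Vote],
                        execute: Callable[[dict], str]) -> Optional[str]:
    """Run `execute(action)` only if the validators approved it; otherwise do nothing."""
    if not reaches_quorum(votes):
        return None
    return execute(action)
```

The point of the design is the ordering: the agent's action is only a proposal until enough independent stake has signed off, so a single hallucinating model cannot move funds on its own.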

Consider the implications for DAO governance and corporate decision-making. Human voters are often overwhelmed by the sheer volume and technical complexity of governance proposals. An AI agent could analyze these proposals and vote on behalf of a community. But how do we know the agent wasn't "hallucinating" a benefit or, worse, being manipulated by a biased prompt? Mira's answer is the `cert_hash`: a cryptographic proof that the agent's decision survived a rigorous gauntlet of independent, decentralized verification. This certificate is the critical difference between an unchecked automated action and a legally and financially defensible transaction.
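How a certificate like this might bind a decision to its verification can be sketched in a few lines. The article does not describe how the cert_hash is actually derived, so everything below is an assumption for illustration: the SHA-256 choice, the JSON payload layout, and the function names are hypothetical.

```python
import hashlib
import hmac
import json
from typing import Sequence


def compute_cert_hash(action: dict, approvals: Sequence[str]) -> str:
    """Hash an action together with the validators that approved it.

    Assumed scheme: SHA-256 over canonical JSON. Keys and approver IDs are
    sorted so the same decision always yields the same digest.
    """
    payload = json.dumps(
        {"action": action, "approvals": sorted(approvals)},
        sort_keys=True,
        separators=(",", ":"),
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def verify_cert_hash(action: dict, approvals: Sequence[str], cert_hash: str) -> bool:
    """Recompute the digest and compare it to the recorded one in constant time."""
    return hmac.compare_digest(compute_cert_hash(action, approvals), cert_hash)
```

Under this sketch, anyone holding the recorded vote and the list of approving validators can recompute the digest; if the agent's decision were altered after verification, the hashes would no longer match.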

Beyond simple verification, the Mira ecosystem creates a continuous feedback loop of quality and accountability. Its dual-token system, which pairs the utility-driven $MIRA token with the stability-oriented Lumira token, is designed to keep the cost of verification manageable without sacrificing network security. This economic design allows high-frequency agent actions, such as automated arbitrage, real-time risk management, or liquidity optimization, to remain economically viable while being audited in real time. We are finally moving away from the era of simply "chatting" with our technology. We are building a world where intelligence acts autonomously on our behalf, but only under the watchful, decentralized eye of a judge that never sleeps. Mira aims to be the bridge between the vast potential of autonomous AI and the necessity of on-chain accountability and trust.