The discomfort of artificial intelligence

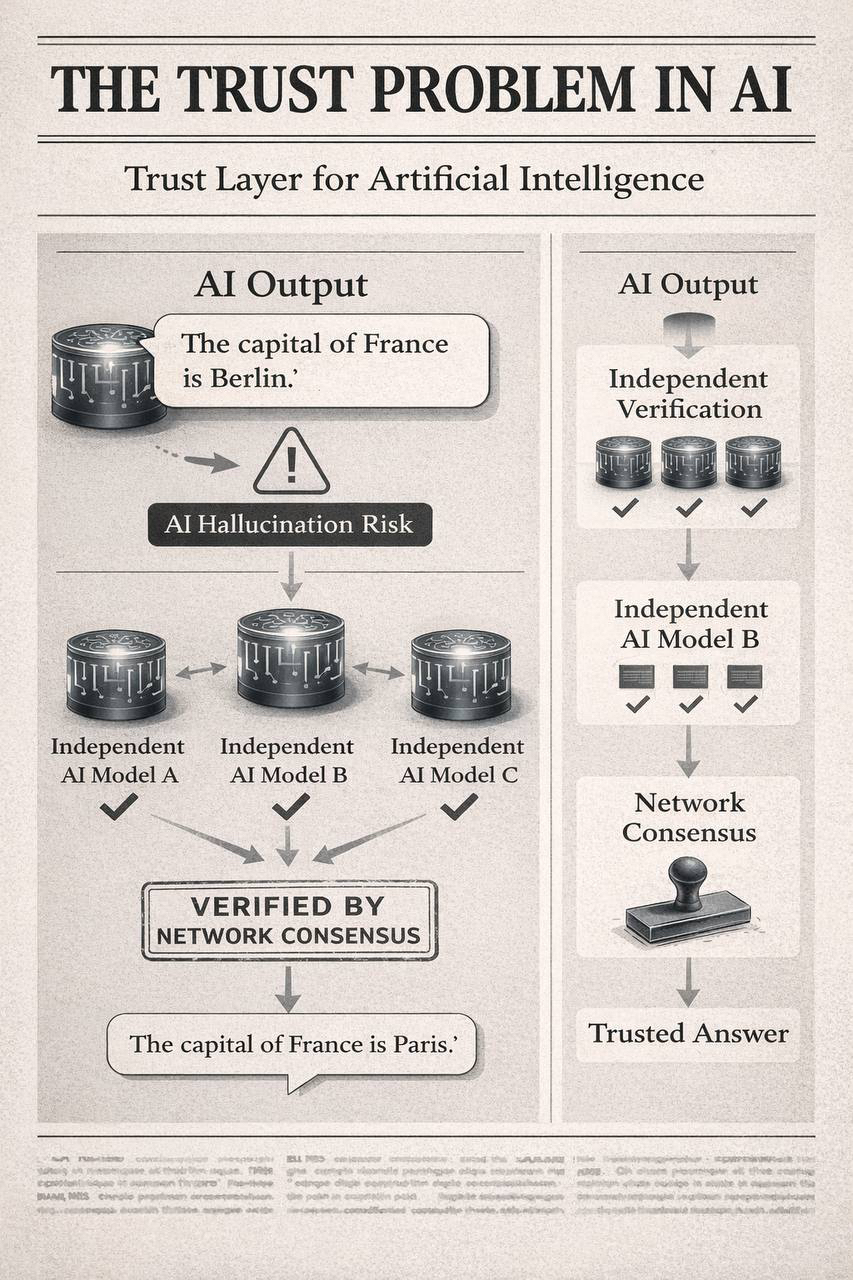

When I began looking at artificial intelligence more closely, one hard fact stood out: AI systems are often confident but not necessarily accurate. A language model can give a clear explanation, cite sources, and organize its arguments, yet still be wrong about simple facts. This problem is known as AI hallucination, and it is one of the main reasons AI is not yet trusted in many serious settings. Systems that sometimes make up information are a poor fit for hospitals, courts, financial markets, and schools. The technology can be powerful, but when it is not trustworthy it becomes risky. The more I read about this, the more I understood that it is not merely a matter of building better models. Even the best models are fallible, because they are probabilistic by nature. Instead of proving, they guess at what is most likely true. So although the error rate may decrease, it never reaches zero. And with a billion AI interactions a day, even a small error rate adds up to a big problem.
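To make the scale argument concrete, here is a small back-of-the-envelope sketch. The billion daily interactions comes from the paragraph above; the error rates are purely hypothetical values chosen for illustration, not measurements of any real model.

```python
def expected_daily_errors(interactions_per_day: int, error_rate: float) -> float:
    """Expected number of wrong answers per day at a given per-interaction error rate."""
    if not 0.0 <= error_rate <= 1.0:
        raise ValueError("error_rate must be between 0 and 1")
    return interactions_per_day * error_rate


DAILY_INTERACTIONS = 1_000_000_000  # "a billion AI interactions a day" from the text

# Hypothetical error rates, for illustration only.
for rate in (0.05, 0.01, 0.001):
    errors = expected_daily_errors(DAILY_INTERACTIONS, rate)
    print(f"{rate:.1%} error rate -> about {errors:,.0f} wrong answers per day")
```

Even the smallest of these assumed rates still leaves a very large absolute number of wrong answers, which is the point of the argument.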

This is the problem at the center of Mira's concept.

Turning AI responses into verifiable claims.

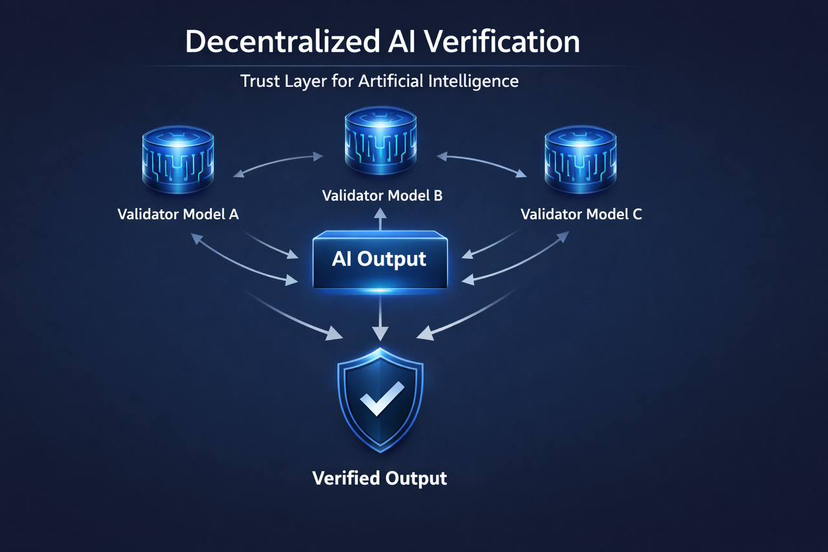

What I found most interesting about Mira is that it does not try to perfect a single AI model. Instead, it asks a different question: could AI outputs be verified the way blockchains verify transactions? Mira treats every AI output as a claim that should not be believed without verification. Rather than relying on a single model, the system breaks an answer down into smaller factual claims and sends them to a large number of different models, or validators, which check them independently. If enough independent verifiers confirm that a claim is correct, the system accepts it. If they disagree, the response is rejected or regenerated. This is comparable to how science works. Science does not accept a claim because one person made it; a claim is believed because others have tested it and confirmed the findings. Mira tries to apply the same principle to AI outputs. The intriguing part is that this verification is carried out by a decentralized network rather than by a central company.
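The flow described above (split an answer into claims, have independent validators check each one, accept only with enough agreement) can be sketched in a few lines. This is a toy illustration, not Mira's actual protocol: the sentence-based `split_into_claims`, the two-thirds `threshold`, and the lookup-table validators are all assumptions made up for the example.

```python
from dataclasses import dataclass
from fractions import Fraction
from typing import Callable, Sequence

# Hypothetical validator interface: returns True if it judges the claim correct.
Validator = Callable[[str], bool]


@dataclass
class Verdict:
    claim: str
    approvals: int
    total: int
    accepted: bool


def split_into_claims(answer: str) -> list[str]:
    """Naive stand-in for claim extraction: treat each sentence as one claim."""
    return [part.strip() for part in answer.split(".") if part.strip()]


def verify_claim(claim: str, validators: Sequence[Validator],
                 threshold: Fraction = Fraction(2, 3)) -> Verdict:
    """Accept a claim only if at least `threshold` of the validators approve it."""
    approvals = sum(1 for validate in validators if validate(claim))
    total = len(validators)
    accepted = total > 0 and Fraction(approvals, total) >= threshold
    return Verdict(claim, approvals, total, accepted)


def verify_answer(answer: str, validators: Sequence[Validator],
                  threshold: Fraction = Fraction(2, 3)) -> tuple[bool, list[Verdict]]:
    """An answer passes only if it contains claims and every claim is accepted."""
    verdicts = [verify_claim(c, validators, threshold) for c in split_into_claims(answer)]
    return bool(verdicts) and all(v.accepted for v in verdicts), verdicts


# Toy validators: two consult a small fact table, one approves everything.
KNOWN_FACTS = {"Paris is the capital of France"}


def careful(claim: str) -> bool:
    return claim in KNOWN_FACTS


def gullible(claim: str) -> bool:
    return True


ok, verdicts = verify_answer(
    "Paris is the capital of France. The Moon is made of cheese.",
    [careful, careful, gullible],
)
for v in verdicts:
    print(f"{v.claim!r}: {v.approvals}/{v.total} approvals, accepted={v.accepted}")
print("answer accepted:", ok)
```

In this toy run the single gullible validator cannot push the false claim through on its own, so the answer as a whole is rejected and would need to be regenerated.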

The importance of decentralization in AI verification.

When most people hear the word blockchain, they think of money. But blockchains are really about something deeper: distributed agreement. By letting many independent participants decide together what is true, they avoid having to rely on a single authority. Mira applies that idea to artificial intelligence. Instead of relying on a single AI model or the judgment of a single company, the network distributes verification across many participants and models. Each validator checks whether a claim is correct, and the network reaches consensus. What I find interesting about this design is that it changes the role of AI itself. In conventional setups, AI produces responses and users have to decide whether to believe them. In Mira's approach, AI is both the creator and the auditor: the work of one model is verified by others.

The economic layer behind verification.

Naturally, verification is not free. Mira proposes a token-based incentive scheme in which participants in the network stake tokens and are rewarded when they verify AI outputs honestly. Validators, on the other hand, may lose part of their stake if they provide incorrect verifications or behave maliciously. This economic system matters because it aligns incentives. Validators are rewarded for honest verification, not for producing answers quickly or cheaply. Ideally, this creates a market in which reliability is valued.
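A stake-and-slash scheme of this kind might look roughly like the sketch below. Everything specific here is an assumption for illustration: the `settle_round` function, the reward size, the 10% slash, and above all the rule that the majority vote counts as the "correct" verification. The text does not say how Mira actually detects incorrect or malicious validators.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class ValidatorAccount:
    name: str
    stake: int  # whole token units, to keep the arithmetic exact


def settle_round(accounts: dict[str, ValidatorAccount], votes: dict[str, bool],
                 reward: int = 1, slash_bps: int = 1_000) -> Optional[bool]:
    """Reward validators who voted with the majority and slash the others.

    ASSUMPTION: the majority vote is treated as the correct outcome.
    `slash_bps` is the slashed share of stake in basis points (1_000 = 10%).
    Returns the majority outcome, or None on a tie (nobody is paid or slashed).
    """
    yes_votes = sum(1 for vote in votes.values() if vote)
    no_votes = len(votes) - yes_votes
    if yes_votes == no_votes:
        return None
    outcome = yes_votes > no_votes
    for name, vote in votes.items():
        account = accounts[name]
        if vote == outcome:
            account.stake += reward
        else:
            account.stake -= account.stake * slash_bps // 10_000
    return outcome


accounts = {n: ValidatorAccount(n, 100) for n in ("alice", "bob", "carol")}
outcome = settle_round(accounts, {"alice": True, "bob": True, "carol": False})
for account in accounts.values():
    print(account.name, account.stake)
```

The design choice worth noticing is that the penalty scales with the stake while the reward is flat, so a validator with more at risk has more to lose from careless or dishonest votes.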

What stands out to me is that the work done on the network serves a purpose. Many proof-of-work systems, such as early blockchain mining, have computers solving puzzles that have no use in themselves. In Mira's case, the computational work goes into verifying the information produced by AI systems. In other words, the network's work directly improves the quality of digital knowledge.

A possible foundation for autonomous AI.

I began to see a bigger picture. If AI outputs can be trusted, AI systems can start working more independently. At the moment, most AI workflows are supervised by people, who check findings, make corrections, and review output. But if a decentralized verification layer could provide credible assurance about AI reasoning, the need for constant human supervision might shrink. That could allow AI to be used in areas that demand reliability. Verified outputs would be useful in financial analysis, legal research, academic writing, and medical support tools.

That is not to say that Mira solves the AI reliability problem. Verification systems can have flaws too. They depend on the quality of the validators, the economic incentives, and the system design. Still, the idea of a trust layer on top of AI is a powerful one. The most intriguing thing I eventually learned about Mira is that it treats AI reliability not as a purely technical issue but as a coordination problem. Rather than trying to build an ideal model, it builds a system in which a large number of flawed models can cross-check one another's results. The system assumes errors will occur, but it tries to catch them before they spread. Whether or not the network succeeds, the idea behind it raises an important question. In the future, the most important AI systems may not be the ones that produce answers. They could be the ones that verify them.