The more I explore the world of artificial intelligence, the more one question keeps coming back to me: Can we truly trust what AI tells us? AI tools today can write articles, analyze data, answer questions, and even assist in complex decision-making. But I’ve also noticed something interesting—and sometimes worrying. AI can sound very confident even when it’s wrong. These mistakes, often called hallucinations, show that intelligence alone isn’t enough. What we really need is reliability. That’s exactly why Mira Network immediately caught my attention.

When I first learned about Mira Network, it became clear to me that the project is focused on one of the biggest challenges facing AI today: trust. Mira Network is a decentralized verification protocol designed to make AI outputs more reliable. Instead of blindly accepting what an AI system produces, Mira creates a process where the information generated by AI can be checked, validated, and verified. In simple terms, it aims to turn AI-generated answers into information that people can actually trust.

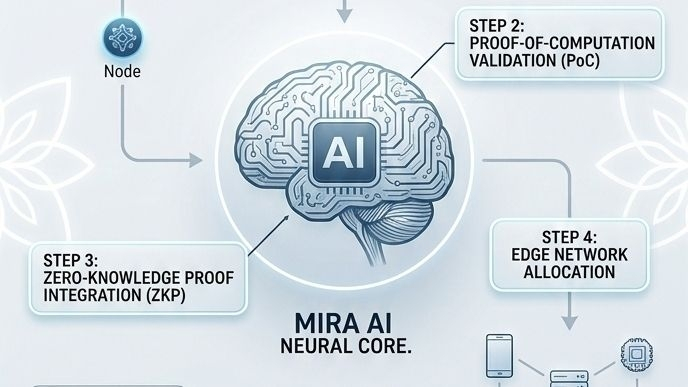

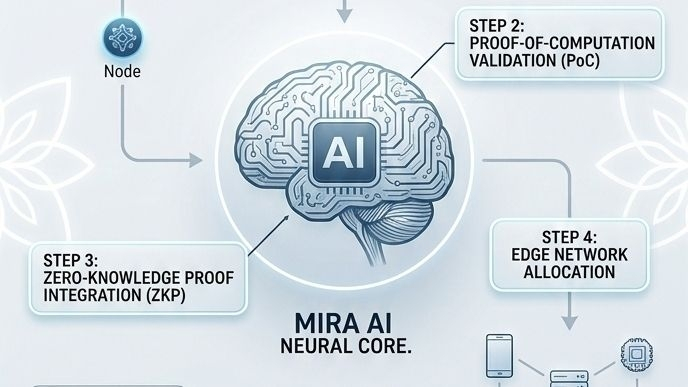

What makes Mira particularly interesting, from my perspective, is how it combines artificial intelligence with blockchain technology. Normally, AI systems operate in a centralized way: one model generates an answer and users simply rely on it. Mira takes a very different approach. Instead of trusting one model, it distributes verification across a network in which multiple AI systems independently evaluate the same information, so a single model’s mistake is less likely to go unnoticed.

As I looked deeper into how Mira works, one idea stood out to me: breaking complex information into smaller, verifiable pieces. AI-generated content often contains many different claims or statements. Mira analyzes those outputs and separates them into individual claims that can be checked independently. These claims are then reviewed by different AI models within the network. If several models agree that the claim is correct, the system can mark it as verified. If there are disagreements, the claim can be flagged for further review.
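To make that flow concrete for myself, I wrote a small Python sketch of the idea. It is only an illustration under my own assumptions, not Mira's actual implementation: the sentence-based `split_into_claims`, the two-thirds `threshold`, and the toy verifier functions are all hypothetical stand-ins. It splits a text into claims, asks several verifiers to vote on each one, and marks a claim as verified only when enough of them agree.

```python
import re
from dataclasses import dataclass
from typing import Callable, List

# A verifier is any function that takes a claim and returns True if it
# judges the claim correct. In a real network this would be an AI model;
# here it is a hypothetical stand-in.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    votes: List[bool]
    status: str  # "verified" or "flagged"


def split_into_claims(text: str) -> List[str]:
    """Naive claim extraction: treat each sentence as one claim (assumption)."""
    parts = re.split(r"(?<=[.!?])\s+", text.strip())
    return [p for p in parts if p]


def verify_claim(claim: str, verifiers: List[Verifier],
                 threshold: float = 2 / 3) -> ClaimResult:
    """Collect one vote per verifier and compare agreement to the threshold."""
    votes = [verifier(claim) for verifier in verifiers]
    share_agreeing = sum(votes) / len(votes)
    status = "verified" if share_agreeing >= threshold else "flagged"
    return ClaimResult(claim, votes, status)


def verify_text(text: str, verifiers: List[Verifier]) -> List[ClaimResult]:
    """Split the text into claims and verify each one independently."""
    return [verify_claim(c, verifiers) for c in split_into_claims(text)]


# Toy verifiers: two that only accept a known fact, one that accepts anything.
KNOWN = {"Paris is the capital of France."}
careful_a: Verifier = lambda claim: claim in KNOWN
careful_b: Verifier = lambda claim: claim in KNOWN
gullible: Verifier = lambda claim: True

results = verify_text(
    "Paris is the capital of France. The Moon is made of cheese.",
    [careful_a, careful_b, gullible],
)
for r in results:
    print(r.status, "|", r.claim)
```

In this toy run the first claim gets three agreeing votes and the second gets only one, so the second is flagged for further review instead of being passed along. Agreement between models is a signal, not proof, which is why the sketch flags disputed claims rather than rejecting them outright.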

To me, this feels a bit like how scientific research works. Scientists don’t rely on a single experiment or opinion. Instead, results are tested, reviewed, and confirmed by multiple researchers before they become accepted knowledge. Mira Network applies a similar principle to artificial intelligence by introducing a process of distributed validation.

Another thing that really caught my attention is the role of incentives in the system. Mira doesn’t rely solely on technology—it also uses economic incentives to encourage accurate verification. Participants in the network are rewarded for contributing to the validation process. This creates a system where honest and accurate verification is beneficial, while incorrect or dishonest behavior becomes less rewarding.
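Here is how I picture that incentive logic in its simplest possible form. This is a hypothetical sketch, not Mira's real reward mechanism: the `settle_rewards` function, the fixed `reward_pool`, and the rule that only verifiers who match the majority get paid are all assumptions I made for illustration.

```python
from typing import Dict


def settle_rewards(votes: Dict[str, bool], reward_pool: float) -> Dict[str, float]:
    """Split a reward pool equally among verifiers who voted with the majority.

    Hypothetical rule: verifiers who disagree with the majority earn nothing,
    and a tied vote pays nobody because no consensus was reached.
    """
    yes_votes = sum(votes.values())
    no_votes = len(votes) - yes_votes
    if yes_votes == no_votes:
        return {name: 0.0 for name in votes}

    consensus = yes_votes > no_votes
    winners = [name for name, vote in votes.items() if vote == consensus]
    share = reward_pool / len(winners)
    return {name: (share if vote == consensus else 0.0)
            for name, vote in votes.items()}


# Three hypothetical verifiers vote on one claim; two agree, one does not.
payouts = settle_rewards({"node_a": True, "node_b": True, "node_c": False},
                         reward_pool=9.0)
print(payouts)
```

Even in this simplified version, a verifier that votes carelessly ends up earning less over time than one that consistently matches the network's outcome. A real design would also have to deal with the case where the majority itself is wrong, which this sketch deliberately ignores.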

From what I can see, this incentive structure is important because it aligns the interests of everyone involved in the network. Instead of relying on trust in a central authority, Mira builds trust through transparent participation and economic motivation. It’s a clever way of combining technology with human and machine collaboration.

The importance of this idea becomes even clearer when we think about how AI is being used today. AI is rapidly moving into industries where accuracy really matters. Healthcare systems use AI to analyze medical data. Financial institutions rely on AI to assess risk and detect fraud. Researchers use AI to process massive datasets. In these situations, even small errors can have serious consequences.

This is where Mira Network could make a significant impact. By providing a verification layer for AI outputs, it creates an extra level of confidence for users. Instead of asking, “Is this AI correct?” people could rely on a transparent system that verifies the information through consensus. That shift—from assumption to verification—could be incredibly valuable.

Another area where I see strong potential is autonomous systems. As technology evolves, machines are becoming more independent. Robots, self-driving vehicles, and automated infrastructure systems all depend on accurate information to function properly. If these systems act on AI outputs that haven’t been verified, a single wrong output can turn directly into a wrong action. Mira’s approach could provide a safeguard by validating the information that guides these systems before they act on it.

While exploring the broader ecosystem around Mira, I also noticed that the project fits into a growing trend in the technology world: decentralized AI infrastructure. Over the past few years, developers have started experimenting with ways to combine blockchain networks and artificial intelligence. Some projects focus on decentralized computing, while others explore open data marketplaces. Mira’s focus on verification adds another important layer to this emerging ecosystem.

Recent developments suggest that interest in reliable AI systems is increasing across the entire industry. Governments, research organizations, and technology companies are all beginning to recognize that AI reliability is not just a technical issue—it’s a societal one. As AI becomes more integrated into everyday life, ensuring accuracy and accountability will become increasingly important.

From my perspective, Mira Network’s timing is interesting. The world is excited about AI’s potential, but there is also growing concern about misinformation, bias, and unreliable outputs. Solutions that address these challenges could play a major role in shaping how AI evolves over the next decade.

Another aspect that stands out to me is Mira’s philosophy. The project isn’t trying to replace existing AI models or compete directly with them. Instead, it acts as a verification layer that can work alongside different AI systems. This makes the concept more flexible and practical. Developers could continue using powerful AI models while relying on Mira to verify the accuracy of their outputs.

This approach could open the door for many new applications. Imagine educational platforms where AI-generated explanations are verified before being presented to students. Or research tools that automatically check claims before publishing summaries. Even news and information platforms could use verification networks to reduce the spread of inaccurate content.

Of course, building a decentralized verification network is not a simple task. It requires coordination between many participants, efficient verification processes, and strong technical infrastructure. Like many emerging technologies, Mira will likely face challenges as it grows. Scalability, adoption, and integration with existing systems will all play important roles in determining its success.

But despite those challenges, the core idea behind Mira Network feels powerful to me. In a world where AI-generated content is becoming more common every day, the ability to verify information could become just as important as generating it.

When I step back and think about the bigger picture, Mira represents something deeper than just another blockchain or AI project. It represents a shift toward responsible intelligence—technology that doesn’t just produce answers, but ensures those answers are trustworthy.

If Mira Network succeeds in building a reliable verification layer for AI systems, it could change the way we interact with artificial intelligence. Instead of questioning whether AI is right or wrong, we might one day rely on transparent verification networks that check the information before it reaches us and show how it was checked.

And from where I stand, that future—where powerful AI is matched with equally powerful verification—might be exactly what the next generation of technology needs.