Artificial intelligence is advancing faster than most people ever imagined. Today, AI can write articles, answer complicated questions, generate software code, analyze data, and even help doctors and lawyers with complex decisions. But despite all this progress, one major problem still stands in the way of fully trusting AI: reliability.

Many AI systems produce answers that sound extremely confident but contain mistakes, fabricated facts, or misleading information. These errors, often referred to as “hallucinations,” happen because AI models do not understand information the way humans do. Instead, they predict words and patterns based on the massive datasets they were trained on. This makes them powerful, but also unpredictable at times.

This is the exact challenge that Mira Network is trying to solve.

Rather than building yet another AI model, Mira takes a completely different approach. It focuses on creating a system that verifies AI outputs before people rely on them. In simple terms, Mira acts like a global fact-checking layer for artificial intelligence. Instead of trusting one AI model blindly, the network allows multiple independent systems to examine and confirm whether an answer is accurate.

The idea behind Mira Network is inspired by something that has worked in many other areas of society: collective verification. In science, discoveries are reviewed by other researchers before they are accepted. In journalism, editors verify information before publication. In blockchain networks, multiple nodes verify transactions before they are confirmed. Mira applies this same principle to artificial intelligence.

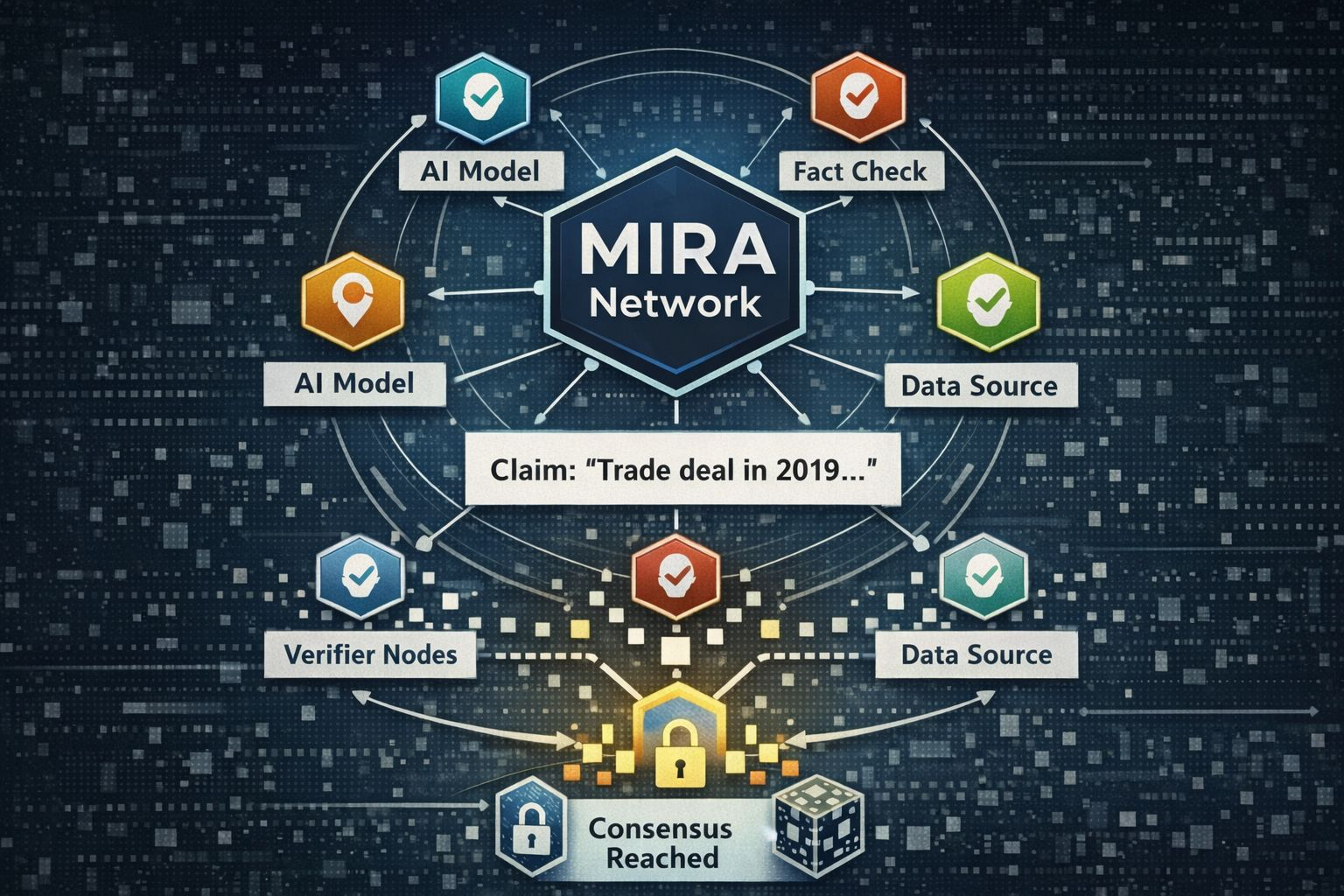

When an AI produces a response, Mira’s system breaks that response into smaller pieces of information, or claims. Each claim is then sent across a decentralized network where different AI models analyze it independently. These models evaluate whether the statement appears true, false, or uncertain based on available knowledge.

Once the verification process is complete, the results from all the models are combined. If a strong majority agrees that the claim is correct, the information is accepted as verified. If there is disagreement or uncertainty, the claim can be flagged or rejected. This process ensures that no single model has complete authority over the result.
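
To make the voting step concrete, here is a minimal Python sketch of how verdicts for a single claim might be combined. It is an illustration only, not Mira's actual implementation: the function name `aggregate`, the verdict labels, and the two-thirds threshold are all assumptions, since the description above only says "a strong majority."

```python
from collections import Counter


def aggregate(verdicts, num=2, den=3):
    """Combine independent verdicts ("true", "false", "uncertain") for one claim.

    num/den is a hypothetical supermajority threshold (2/3 by default).
    Returns "verified", "rejected", or "flagged".
    """
    if not verdicts:
        return "flagged"  # nothing to agree on
    counts = Counter(verdicts)
    total = len(verdicts)
    # Integer comparison avoids floating-point edge cases at the threshold.
    if counts["true"] * den >= num * total:
        return "verified"
    if counts["false"] * den >= num * total:
        return "rejected"
    return "flagged"  # disagreement or too much uncertainty


print(aggregate(["true", "true", "true", "false"]))
print(aggregate(["true", "false", "uncertain"]))
```

In this sketch a claim is rejected only when a strong majority calls it false, and everything in between is flagged. A real network could draw those lines differently.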

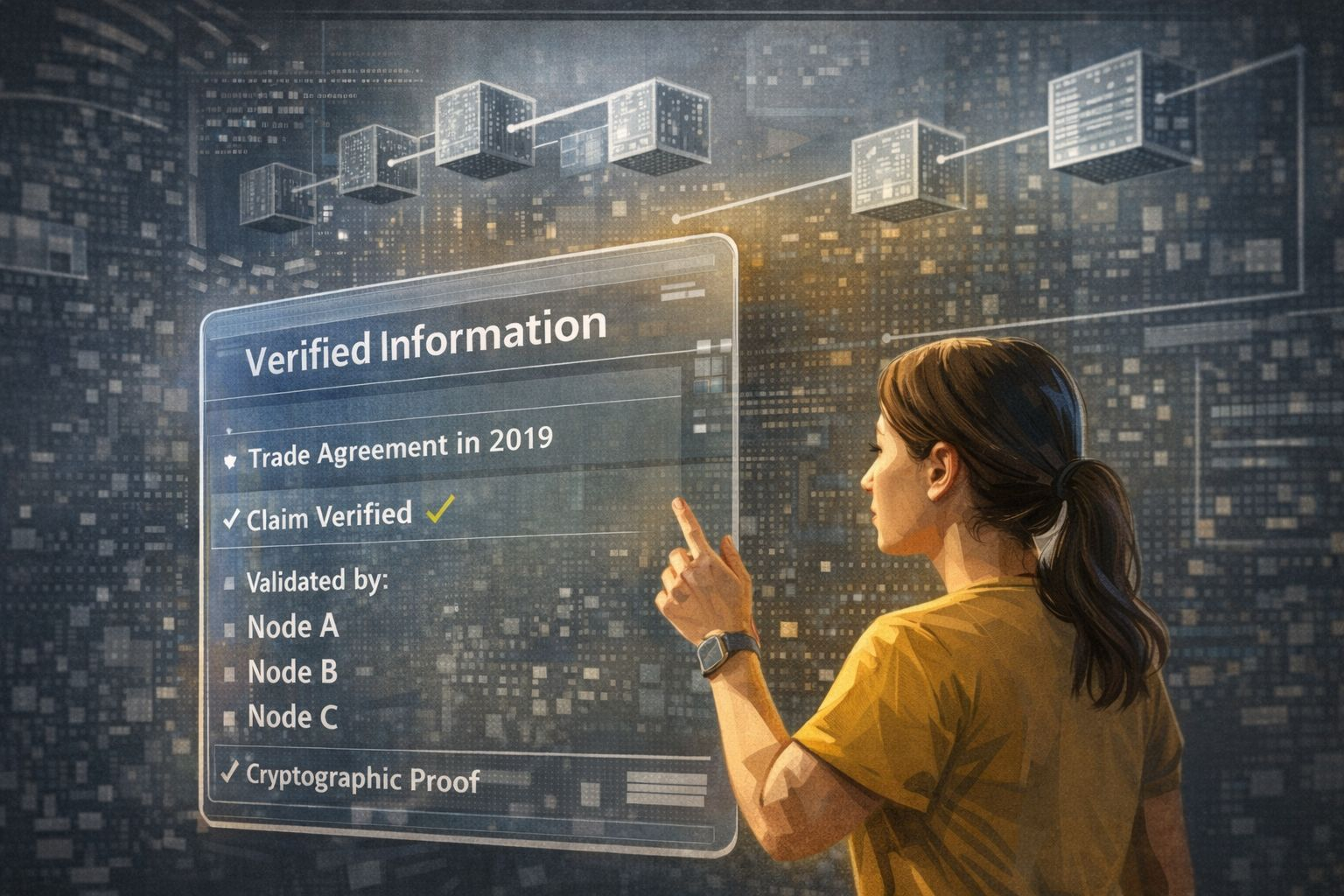

What makes this system even more interesting is the way it uses blockchain technology. After a claim is verified, the result is recorded on a public ledger along with cryptographic proof of the verification process. This means anyone can later check how the decision was made, which nodes participated, and what the final consensus was. The verification becomes transparent, traceable, and extremely difficult to manipulate.
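
The tamper-evidence idea can be sketched in a few lines of Python. This is a generic illustration using a SHA-256 digest over a canonical JSON record, not Mira's actual proof format or ledger schema. The field names (`claim`, `votes`, `outcome`) and the node names are hypothetical.

```python
import hashlib
import json


def record_digest(record: dict) -> str:
    """Return a SHA-256 hex digest of a verification record.

    Keys are sorted so the same record always serializes the same way.
    """
    payload = json.dumps(record, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


# Hypothetical record of one verified claim.
record = {
    "claim": "Water boils at 100 C at sea level",
    "votes": {"node-a": "true", "node-b": "true", "node-c": "uncertain"},
    "outcome": "verified",
}
digest = record_digest(record)

# Changing any field after the fact produces a different digest.
tampered = dict(record, outcome="rejected")
print(digest != record_digest(tampered))
```

If a digest like this is stored on a public ledger, anyone holding the original record can recompute it and confirm that nothing was altered afterward.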

Mira Network also introduces an economic system that encourages honest participation. Individuals or organizations that run verification nodes must stake tokens in order to take part in the network. If they consistently provide inaccurate evaluations or try to manipulate results, they risk losing part of their stake. On the other hand, nodes that contribute accurate and reliable verification are rewarded.

This creates a system where participants are financially motivated to verify information carefully. Instead of spending energy on arbitrary puzzles, as proof-of-work blockchains do, the network directs computational resources toward something far more useful: validating knowledge.
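
The incentive described above can be illustrated with a toy settlement rule in Python. Everything here is an assumption made for the sake of the example: the `Node` class, the `settle` function, the flat reward, and the 10% slash are hypothetical and not taken from Mira's protocol. The sketch also penalizes every disagreement with the consensus, whereas the description above speaks of nodes that are consistently inaccurate.

```python
from dataclasses import dataclass


@dataclass
class Node:
    name: str
    stake: float


def settle(nodes, verdicts, consensus, reward=1.0, slash_fraction=0.1):
    """Reward nodes that matched the consensus verdict; slash the rest.

    reward and slash_fraction are hypothetical parameters.
    """
    for node in nodes:
        if verdicts[node.name] == consensus:
            node.stake += reward
        else:
            node.stake -= node.stake * slash_fraction


nodes = [Node("node-a", 100.0), Node("node-b", 100.0)]
settle(nodes, {"node-a": "true", "node-b": "false"}, consensus="true")
for node in nodes:
    print(node.name, node.stake)
```

Even in this simplified form, the asymmetry is visible: careless or dishonest verdicts cost more than a single honest verdict earns, so sloppy participation loses money over time.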

Another interesting element of the ecosystem is its decentralized computing model. Running verification nodes requires GPU power, which means people with computing resources can contribute to the network and earn rewards. This opens the door to a new type of digital economy where idle hardware can help support the reliability of artificial intelligence.

The potential applications of such a system are enormous. In healthcare, AI-generated medical recommendations could be verified before reaching doctors or patients. In finance, automated trading systems could confirm market data before executing large transactions. In legal research, AI-generated arguments could be verified for accuracy before being presented in court.

Education could also benefit significantly. AI tutors are becoming more common, but students sometimes receive incorrect explanations. A verification layer like Mira’s could ensure that educational responses are checked before being delivered, helping learners receive more reliable guidance.

Of course, the idea is not without challenges. Verification requires additional computation, which could increase processing time or costs. There is also the possibility that different AI models might share similar biases if they were trained on similar data. Addressing these issues will require continuous improvement and diversity in the verification models participating in the network.

Even with these challenges, Mira Network reflects a deeper shift happening in the world of artificial intelligence. The first wave of AI development focused on building models capable of generating information. The next wave will likely focus on ensuring that this information can be trusted.

As AI systems begin to power autonomous agents, digital economies, research tools, and decision-making systems, reliability will become more important than ever. A future where machines assist with critical decisions cannot depend on answers that may or may not be correct.

Mira Network’s long-term vision is to create what could be described as a “trust layer” for AI. Just as blockchain technology introduced a new way to verify financial transactions without relying on centralized authorities, decentralized verification networks may offer a way to verify machine-generated knowledge.

If successful, this approach could fundamentally reshape how humans interact with artificial intelligence. Instead of questioning whether an AI answer might be wrong, people could rely on systems that show exactly how that answer was verified and confirmed.