I was trying to explain this to a colleague during a late shift in the operations room: when multiple robots work together, the real problem isn’t intelligence, it’s trust. Each robot has its own model, its own sensors, its own interpretation of the environment. When three machines see the same aisle differently, which one should the system believe? That question is what pushed us to experiment with @Fabric Foundation and the $ROBO trust layer.

Our setup isn’t huge, but it’s busy. A small fleet of warehouse robots handles inspection, pallet movement, and corridor monitoring. Each robot generates dozens of AI predictions per minute: obstacle alerts, pallet recognition, path confidence. Before we integrated Fabric Protocol, those predictions went straight into the coordination engine. If one robot said “aisle clear,” the scheduler simply accepted it.

Most of the time that worked fine. But occasionally we’d see strange behavior. A robot would reroute for an obstacle that didn’t exist, or two units would disagree about the same pallet location. The logs showed the models were confident, yet the system clearly had inconsistent views.

So we introduced a verification layer.

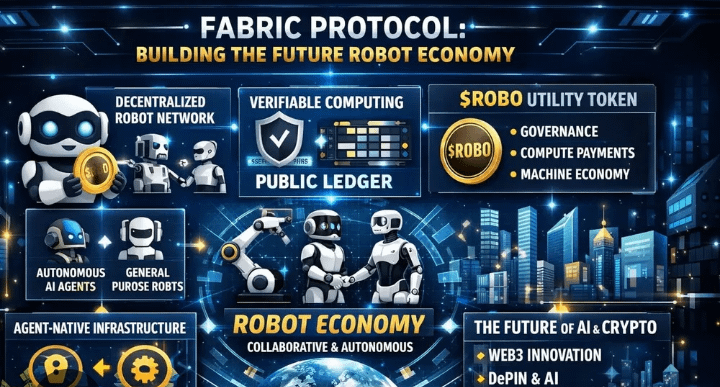

Instead of letting each robot’s AI output directly affect the fleet coordinator, we began treating every important signal as a claim. The robot might claim “pallet detected at sector B7” or “path confidence 94%.” Those claims are passed into the Fabric trust layer where decentralized validators review them before they influence the coordination engine.
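To make the "claim" idea concrete, here is a minimal Python sketch of how a coordinator could hold a claim until a verifier returns a verdict. This is not Fabric's API. `Claim`, `Verdict`, `Coordinator`, and the `verify` callback are hypothetical names I made up for illustration, and the verifier here is just a stand-in for whatever the trust layer actually returns.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Callable


class Verdict(Enum):
    ACCEPTED = "accepted"
    REJECTED = "rejected"


@dataclass(frozen=True)
class Claim:
    """One robot assertion, e.g. 'pallet detected at sector B7'."""
    robot_id: str
    kind: str      # hypothetical label such as "pallet_detected"
    sector: str    # e.g. "B7"
    value: float   # model confidence, e.g. 0.94


class Coordinator:
    """Applies a claim to shared state only after the verifier accepts it."""

    def __init__(self, verify: Callable[[Claim], Verdict]):
        self._verify = verify
        self.world_state: dict[tuple[str, str], float] = {}
        self.rejected: list[Claim] = []

    def submit(self, claim: Claim) -> Verdict:
        verdict = self._verify(claim)
        if verdict is Verdict.ACCEPTED:
            self.world_state[(claim.kind, claim.sector)] = claim.value
        else:
            # Rejected claims are kept for later inspection, never applied.
            self.rejected.append(claim)
        return verdict


if __name__ == "__main__":
    # Stand-in verifier (assumption): rejects everything from robot "r2".
    def stub_verify(claim: Claim) -> Verdict:
        return Verdict.REJECTED if claim.robot_id == "r2" else Verdict.ACCEPTED

    coordinator = Coordinator(stub_verify)
    coordinator.submit(Claim("r1", "pallet_detected", "B7", 0.94))
    coordinator.submit(Claim("r2", "aisle_clear", "B7", 0.99))
    print(coordinator.world_state)
    print(len(coordinator.rejected))
```

The point of the shape is that the robot's confidence score travels with the claim but never decides anything on its own. Only the verdict does.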

In simple terms, @Fabric Foundation sits right between AI output and operational trust.

During our first deployment cycle we processed around 27,000 robot-generated claims over ten days. Most were navigation confirmations or object detections. Consensus verification through $ROBO averaged about 2.6 seconds per claim. Occasionally we saw spikes near 3.4 seconds when multiple robots reported simultaneously, but since route planning runs in multi-second intervals anyway, the latency stayed manageable.

The interesting part wasn’t speed. It was disagreement.

Roughly 3.8% of claims failed consensus validation. That number caught my attention because the models themselves reported high confidence scores. Digging deeper into the rejected cases revealed patterns we hadn’t noticed before. Some failures came from lighting conditions affecting a specific robot’s camera. Others traced back to sensor drift on a unit whose calibration was slightly off.
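For scale, if that 3.8% applies to the roughly 27,000 claims from the first cycle, it works out to about 1,000 rejected claims (0.038 × 27,000 ≈ 1,026). The kind of digging I mean is mostly grouping rejections by robot and looking for units that stand out. Here is a rough sketch of that, assuming the consensus log can be reduced to `(robot_id, accepted)` pairs. The function names and the 1.5× threshold are my own illustrative choices, not something the protocol defines.

```python
from collections import Counter
from typing import Iterable


def rejection_rates(records: Iterable[tuple[str, bool]]) -> dict[str, float]:
    """Return the fraction of rejected claims per robot.

    `records` is an iterable of (robot_id, accepted) pairs; this log shape
    is an assumption made for the example.
    """
    totals: Counter = Counter()
    rejected: Counter = Counter()
    for robot_id, accepted in records:
        totals[robot_id] += 1
        if not accepted:
            rejected[robot_id] += 1
    return {robot: rejected[robot] / totals[robot] for robot in totals}


def flag_outliers(rates: dict[str, float], fleet_rate: float,
                  factor: float = 1.5) -> list[str]:
    """List robots whose rejection rate exceeds `factor` times the fleet rate."""
    return sorted(robot for robot, rate in rates.items()
                  if rate > factor * fleet_rate)
```

A robot that keeps showing up in the flagged list is a good candidate for a camera or calibration check before anyone touches the model.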

Before using $ROBO, those signals would have been accepted automatically.

One small test made the value clearer. We intentionally reduced camera resolution on two robots during an overnight cycle. The models still produced confident obstacle detections, but decentralized validators challenged nearly 40% of those claims because nearby robots reported contradictory observations. The system essentially forced the machines to cross-check each other.
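The cross-checking can be pictured as a simple quorum rule over what nearby robots report for the same spot. I don't know the exact rule the validators apply, so treat this as a toy model: the function name, the 60% quorum, and the two-peer minimum are all assumptions.

```python
def cross_check(claimed: bool, peer_observations: list[bool],
                quorum: float = 0.6, min_peers: int = 2) -> str:
    """Compare one robot's boolean claim against nearby robots' observations.

    Returns "unverified" when too few peers saw the location, "accepted"
    when at least `quorum` of the peers agree, and "challenged" otherwise.
    """
    if len(peer_observations) < min_peers:
        return "unverified"
    agreeing = sum(1 for seen in peer_observations if seen == claimed)
    if agreeing / len(peer_observations) >= quorum:
        return "accepted"
    return "challenged"


if __name__ == "__main__":
    # Robot claims an obstacle; two of three neighbours see nothing.
    print(cross_check(True, [False, False, True]))
```

Notice that the claiming robot's own confidence is not an input at all. A degraded camera can be as confident as it likes; what matters is whether its neighbours see the same thing.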

That experiment changed how I look at collaborative robotics.

Of course, there are tradeoffs. Decentralized verification adds complexity and overhead. Validators must remain active, and network health matters. When participation dipped briefly during a maintenance window, consensus time increased by almost a second. It didn’t break the system, but it reminded us that distributed trust layers have their own dependencies.

Another subtle effect appeared inside the engineering team. We stopped thinking of AI outputs as conclusions. Instead, they became proposals waiting for validation. That shift sounds philosophical, but operationally it matters. Engineers began checking consensus logs before adjusting models or sensors.

Fabric’s modular design helped too. Because the protocol works as middleware, we didn’t need to redesign the robots’ AI models. We simply standardized claim formats before sending them to the verification layer. Integration ended up taking days instead of weeks.

Still, I try not to exaggerate what this technology does. Decentralized validators can compare signals and enforce consensus rules, but they don’t magically know ground truth. If every robot misreads the same environment, agreement alone won’t fix it. Verification improves reliability; it doesn’t eliminate uncertainty.

After several months running this architecture, the biggest improvement isn’t fewer errors. It’s transparency. Every robot claim now carries a validation record tied to $ROBO consensus decisions. When something unexpected happens, we can trace exactly why the system trusted one machine over another.
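As a rough picture of what such a record buys you, here is a sketch of a per-claim validation record and a helper that turns it into a readable trace. The fields, the simple-majority rule, and the `explain` helper are hypothetical. The real record format is whatever the protocol emits.

```python
from dataclasses import dataclass, field


@dataclass
class ValidationRecord:
    claim_id: str
    robot_id: str
    statement: str
    # Validator id -> whether that validator agreed with the claim.
    votes: dict[str, bool] = field(default_factory=dict)

    @property
    def accepted(self) -> bool:
        # Simple majority is an assumption made for this example.
        return sum(self.votes.values()) * 2 > len(self.votes)


def explain(record: ValidationRecord) -> str:
    """Summarize who voted for and against a claim."""
    yes = sorted(v for v, agreed in record.votes.items() if agreed)
    no = sorted(v for v, agreed in record.votes.items() if not agreed)
    outcome = "accepted" if record.accepted else "rejected"
    return (f"{record.claim_id} from {record.robot_id} "
            f"({record.statement}) was {outcome}: "
            f"{len(yes)} for {yes}, {len(no)} against {no}")


if __name__ == "__main__":
    record = ValidationRecord(
        claim_id="c-001", robot_id="r1", statement="pallet detected at B7",
        votes={"v1": True, "v2": True, "v3": False},
    )
    print(explain(record))
```

Even something this small changes the debugging conversation from "why did the robot do that?" to "which validators agreed, and on what evidence?"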

Looking back, integrating @Fabric Foundation didn’t make our robots smarter. What it did was create a structured trust layer between machine perception and real-world action.

And honestly, that might be the missing piece in many AI systems today. Intelligence is advancing quickly, but trust mechanisms are still catching up.

For teams running autonomous systems, the real challenge isn’t teaching machines to see the world. It’s building systems that make sure those machines are telling the truth about what they see.