Most discussions around Robo agents focus on one thing only: speed. People talk about how fast agents can execute tasks, how automation can replace manual work, and how thousands of tasks can run without human involvement. That part is exciting, but while thinking about it I realized there is another question that rarely gets attention: what happens if Robo agents start failing quietly and nobody notices? Not a big system crash, not a visible error, just small tasks that fail somewhere inside the workflow while the system still looks normal from the outside.

When thousands of agents are running tasks continuously, it becomes very easy for small failures to hide inside the system. One agent may return incomplete data, another might stop in the middle of a process, and a third might produce a result that looks technically correct but is actually wrong. Dashboards may still show activity and the network may still appear healthy, but in reality some of the outputs are slowly becoming unreliable. This type of silent failure is often more dangerous than a visible breakdown: when a system crashes, people react immediately, but when failures are silent they can continue for a long time before anyone realizes something is wrong.

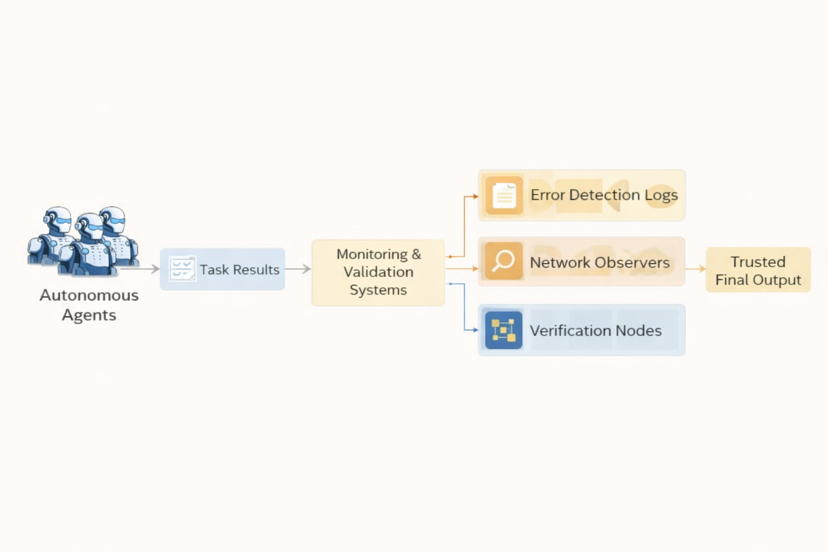

Reliable systems deal with this problem by building strong monitoring and validation layers around automation. Instead of trusting every task result automatically, the network constantly checks whether results match expected patterns. If something looks unusual, the system triggers an alert or sends the result for additional validation. Error reporting pipelines, validation mismatch signals, and network monitoring tools all work together to make sure silent failures do not spread across the system.
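To make the idea concrete, here is a minimal sketch of such a validation layer in Python. It is only an illustration under my own assumptions: `TaskResult`, `required_fields`, `in_range`, `route`, and the `symbol`/`price` fields are hypothetical names, not part of any real Robo agent API. Each result is checked against expected patterns, and anything unusual is logged as an alert and escalated instead of being accepted.

```python
import logging
from dataclasses import dataclass
from typing import Any, Callable, Dict, List, Optional

logger = logging.getLogger("agent_validation")


@dataclass
class TaskResult:
    """Hypothetical shape of one agent's task output."""
    agent_id: str
    task_id: str
    payload: Dict[str, Any]


# A check returns a description of the problem, or None if the result passes.
Check = Callable[[TaskResult], Optional[str]]


def required_fields(*names: str) -> Check:
    """Catch incomplete data: every named field must be present and not None."""
    def check(result: TaskResult) -> Optional[str]:
        missing = [n for n in names if result.payload.get(n) is None]
        return f"missing fields: {missing}" if missing else None
    return check


def in_range(name: str, low: float, high: float) -> Check:
    """Catch values that are well-formed but fall outside the expected range."""
    def check(result: TaskResult) -> Optional[str]:
        value = result.payload.get(name)
        if isinstance(value, (int, float)) and not (low <= value <= high):
            return f"{name}={value} outside [{low}, {high}]"
        return None
    return check


def validate(result: TaskResult, checks: List[Check]) -> List[str]:
    """Run every check and collect the problems that were found."""
    problems = []
    for check in checks:
        problem = check(result)
        if problem is not None:
            problems.append(problem)
    return problems


def route(result: TaskResult, checks: List[Check]) -> str:
    """Accept clean results; log an alert and escalate anything unusual."""
    problems = validate(result, checks)
    if not problems:
        return "accept"
    logger.warning("task %s from agent %s flagged: %s",
                   result.task_id, result.agent_id, "; ".join(problems))
    return "escalate"


# Assumed example checks for a task that should report a symbol and a price.
CHECKS = [required_fields("symbol", "price"), in_range("price", 0.0, 1_000_000.0)]

good = TaskResult("agent-1", "task-1", {"symbol": "ABC", "price": 12.5})
bad = TaskResult("agent-2", "task-2", {"symbol": None, "price": -5.0})
```

Calling `route(good, CHECKS)` accepts the result, while `route(bad, CHECKS)` logs a warning and escalates it. The point of the design is that a failed check produces a visible signal, so a quiet failure cannot pass through as if it were a normal result.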

Another important method is comparing outputs across multiple observers. If one agent produces a result but other validators see a mismatch, the system treats it as a warning signal instead of blindly accepting the output. This kind of cross-checking is what makes large automation networks reliable. Without it, even small errors can slowly propagate through many connected workflows and eventually affect the whole system.
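A rough sketch of this cross-checking in Python could look like the following. Again, this is an assumption-laden illustration: `cross_check`, `Verdict`, and the `min_agreement` threshold are hypothetical names I made up, and a real network would likely compare hashes or structured outputs rather than plain strings.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Verdict:
    status: str            # "accepted", "warning", or "unverified"
    agreement: float       # fraction of validators that matched the agent
    mismatched: List[str]  # ids of validators that saw a different output


def cross_check(agent_output: str,
                validator_outputs: Dict[str, str],
                min_agreement: float = 1.0) -> Verdict:
    """Compare one agent's output with what independent validators observed.

    `validator_outputs` maps a validator id to the output that validator saw.
    With the default threshold, a single mismatch is enough to raise a warning.
    """
    if not validator_outputs:
        # Nobody checked the result, so it must not be silently trusted.
        return Verdict("unverified", 0.0, [])

    mismatched = sorted(vid for vid, out in validator_outputs.items()
                        if out != agent_output)
    total = len(validator_outputs)
    agreement = (total - len(mismatched)) / total

    status = "accepted" if agreement >= min_agreement else "warning"
    return Verdict(status, agreement, mismatched)


# Assumed example: two validators agree with the agent, one does not.
verdict = cross_check("42", {"v1": "42", "v2": "42", "v3": "41"})
```

In this example `verdict.status` is `"warning"` and `verdict.mismatched` is `["v3"]`, so the output would be held for review rather than passed on to downstream workflows. Lowering `min_agreement` trades strictness for fewer false alarms, which is a judgment call for each system.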

So when we talk about Robo agents, the real challenge may not be how fast they execute tasks but how safely the system can monitor them. If agents start doing real work, then reliability monitoring, validation systems, and error detection infrastructure become just as important as the automation itself. Autonomous systems are powerful, but their biggest risk sometimes comes from errors that nobody sees because they happen quietly in the background.