What caught my attention was not the headline claim, but the deeper assumption. A lot of people look at Mira and go straight to the obvious surface story: more models, more reviewers, more verification. I understand why. That is the easy part to explain. It sounds intuitive. If one model can be wrong, ask several. If one verifier is weak, add more verifiers. But the part I am not convinced people are focusing on is simpler and more foundational than that.

@Mira - Trust Layer of AI $MIRA #Mira

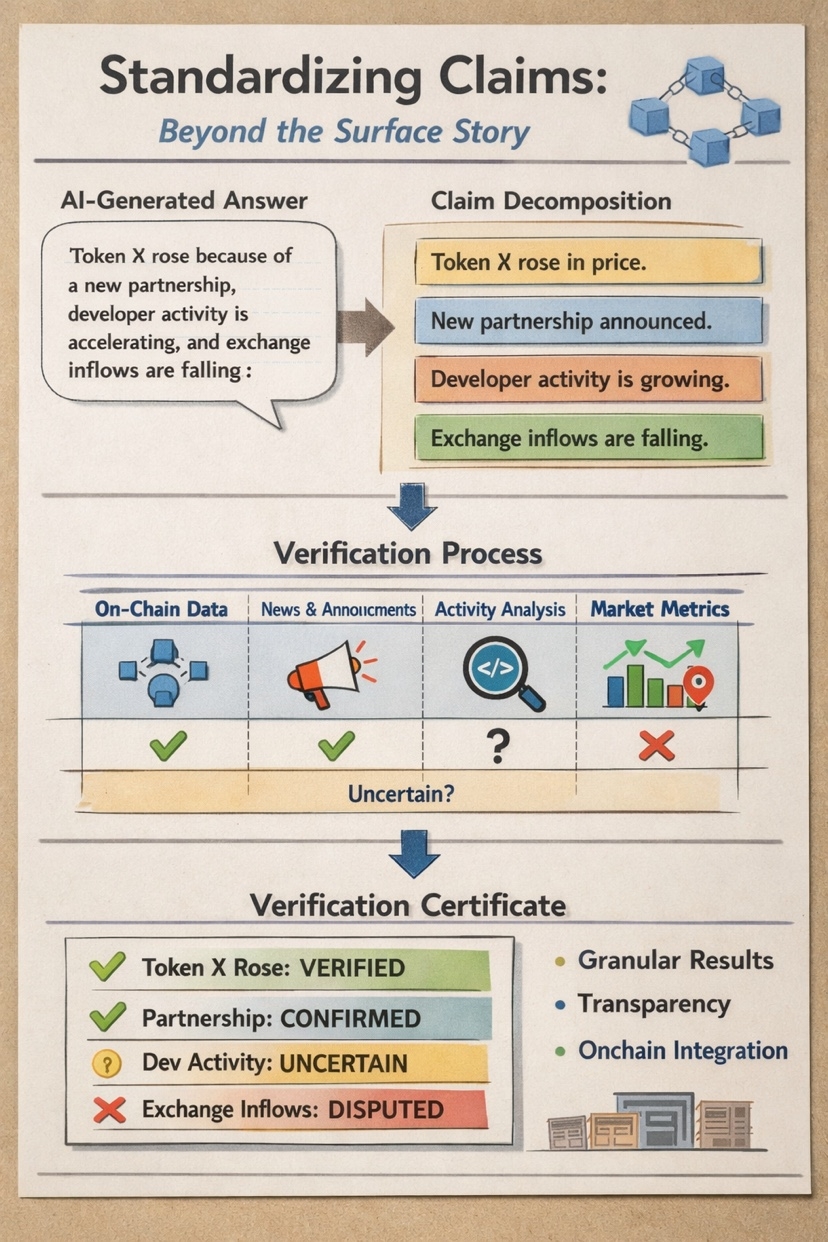

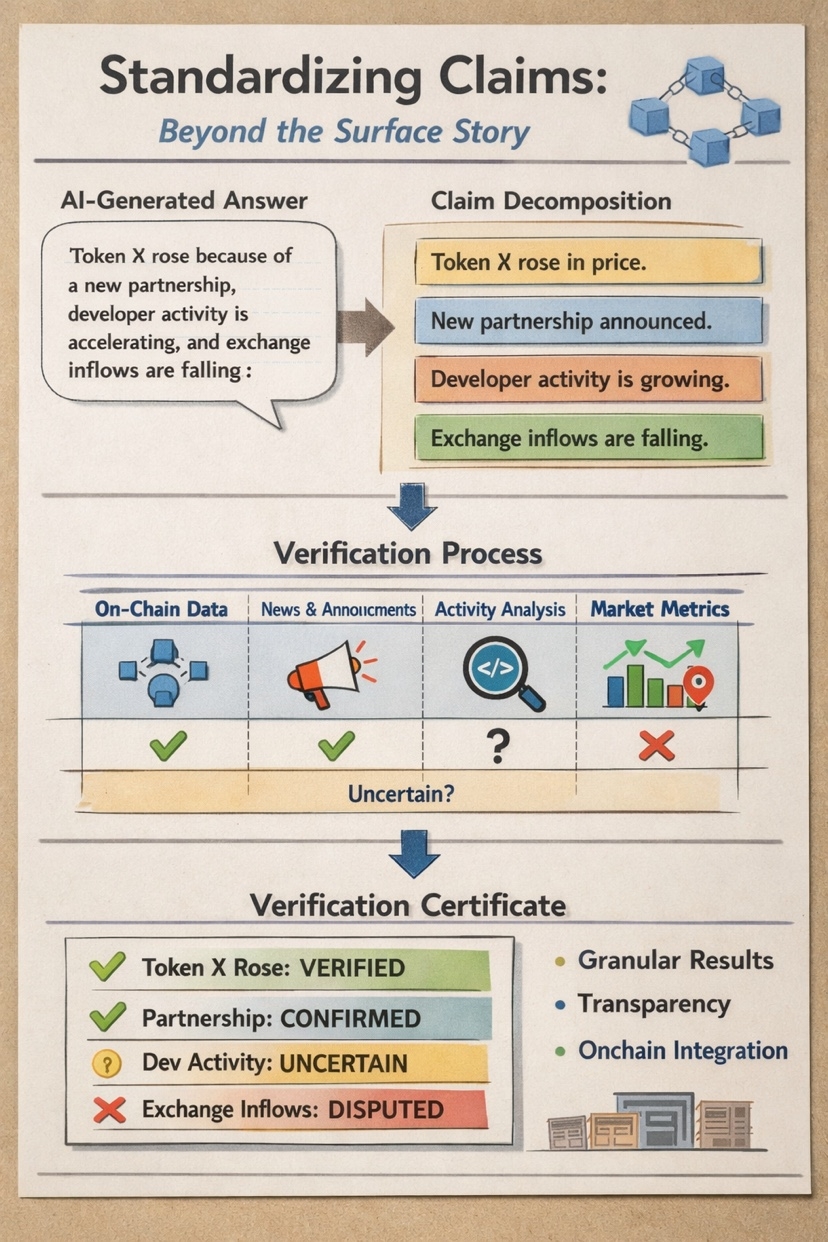

What exactly is being verified? I keep coming back to that question because trustless verification breaks down very quickly when the object of verification is still vague, messy, or inconsistently framed. Before a network can coordinate around truth, it has to coordinate around the unit of judgment. In my view, that may be Mira’s most important design choice: not the number of models, but the attempt to decompose outputs into standardized claims that can actually be checked.

That sounds technical, but the practical friction is easy to see. Take a long AI-generated answer. It may include facts, interpretations, causal links, probabilities, implied assumptions, and a few stylistic filler sentences that sound confident without really saying much. If you ask a verifier to judge the entire answer at once, you create a soft target. One verifier may focus on the main conclusion. Another may focus on one factual line. A third may think the answer is “mostly right” even if one critical sentence is false. Now the network has disagreement, but not necessarily meaningful disagreement. It is not comparing like with like.

That is why I think claim decomposition matters so much. The hard problem is not merely distributing verification work across many participants. The hard problem is transforming a fuzzy block of language into discrete objects that multiple verifiers can evaluate in a reasonably consistent way.

Mira’s strongest design choice may be claim standardization, because verification only becomes scalable and comparable once the network agrees on what a claim is before it argues about whether the claim is true.
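To make "comparing like with like" concrete, here is a minimal sketch of per-claim consensus. Everything in it is an assumption for illustration: the claim IDs, the verdict labels, the `claim_consensus` helper, and the two-thirds threshold are hypothetical and not taken from Mira's actual protocol.

```python
from collections import Counter
from typing import Dict, List


def claim_consensus(votes: List[str], threshold: float = 2 / 3) -> str:
    """Return the majority verdict if it reaches the threshold, else 'disputed'.

    The threshold is an illustrative assumption, not a documented parameter.
    """
    if not votes:
        return "unverified"
    verdict, count = Counter(votes).most_common(1)[0]
    return verdict if count / len(votes) >= threshold else "disputed"


# Hypothetical verdicts from verifiers, keyed by standardized claim ID.
# Because every verifier judges the same bounded statement, the votes
# are directly comparable.
votes_by_claim: Dict[str, List[str]] = {
    "claim-1": ["true", "true", "true"],
    "claim-2": ["false", "false", "true"],
    "claim-3": ["true", "false"],
}

results = {cid: claim_consensus(v) for cid, v in votes_by_claim.items()}
print(results)
```

The point of the sketch is that a vote tally only means something when all votes refer to the same unit. Applied to whole answers, the same function would be counting verdicts about different things.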

That distinction matters more than it first appears. Crypto systems do not just need intelligence; they need legibility. They need clear units the network can evaluate, pay for, dispute, reward, penalize, and log onchain. A verifier market cannot function well if each participant is effectively verifying a different thing. Standardizing the object of verification is what turns “review” into something closer to infrastructure.

The mechanism, at least conceptually, is powerful. An answer or document gets transformed into smaller claims. Those claims are then routed for assessment. Verifiers are not asked to score a vague cloud of meaning; they are asked to evaluate a bounded statement. The output can then be attached to some certificate layer showing which claims passed, which were disputed, and where uncertainty remains.

For builders, that is much more important than it sounds. Once claims are decomposed, you can begin to imagine cleaner interfaces for trust. A downstream product does not need to consume one giant “verified” label. It can consume structured confidence. It can know that three factual statements were supported, one causal claim was contested, and two claims lacked enough evidence. That is a very different product surface than the usual binary badge.

I think this is where verifier consistency becomes the real story. People often talk about model diversity as the main defense against hallucination. That helps, but only after the verification task has been normalized. If different verifiers are looking at different slices of meaning, diversity does not solve much. It may even hide the problem by producing the appearance of robust review while the network is actually misaligned on the task definition.

Imagine an AI-generated market post that says: “Token X rose because of a new partnership, developer activity is accelerating, and exchange inflows are falling.” That looks like one paragraph. In practice, it contains several distinct claims: Token X rose. A partnership was announced. The price move may have been driven by that news. Developer activity also seems to be picking up. Exchange inflows are falling. Each of those needs different evidence, different time windows, and maybe different standards of proof.
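The Token X post can be sketched as structured data. This is a hypothetical illustration only: the `Claim` fields, the `Status` values, the evidence labels, and the statuses assigned to each claim are assumptions made up for the example, not Mira's schema and not the result of any real verification.

```python
from collections import Counter
from dataclasses import dataclass
from enum import Enum
from typing import Dict, List


class Status(Enum):
    SUPPORTED = "supported"
    CONTESTED = "contested"
    INSUFFICIENT = "insufficient_evidence"


@dataclass(frozen=True)
class Claim:
    text: str
    kind: str       # "factual" or "causal" (illustrative categories)
    evidence: str   # hypothetical evidence source a verifier might consult
    status: Status  # placeholder outcome, invented for this example


claims: List[Claim] = [
    Claim("Token X rose.", "factual", "price data", Status.SUPPORTED),
    Claim("A partnership was announced.", "factual",
          "public announcement", Status.SUPPORTED),
    Claim("The price move was driven by the partnership news.", "causal",
          "no conclusive source", Status.CONTESTED),
    Claim("Developer activity is accelerating.", "factual",
          "repository activity", Status.INSUFFICIENT),
    Claim("Exchange inflows are falling.", "factual",
          "onchain data", Status.SUPPORTED),
]


def summarize(items: List[Claim]) -> Dict[str, int]:
    """Count claims per status: structured confidence instead of one badge."""
    counts = Counter(c.status for c in items)
    return {s.value: counts.get(s, 0) for s in Status}


certificate = summarize(claims)
print(certificate)
```

A downstream product could read this summary, or the per-claim records behind it, rather than a single "verified" flag. Note that the causal claim is kept as its own record, so it can stay contested without dragging down the factual claims around it.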

If Mira can reliably break that paragraph into verifiable pieces, the network becomes much more useful. One claim may be supported by onchain data. Another may be supported by a public announcement. The causal claim may remain uncertain. That is fine. In fact, that is better than fine. It is more honest. The certificate becomes a map of what was checked, not a theatrical stamp of certainty.

This is why I think claim transformation is not just a preprocessing trick. It is the coordination layer. It is what makes verifier outputs composable. It is what lets different actors in the network compare results, accumulate evidence, and attach economic consequences to specific judgments instead of vague impressions.

And this is where the crypto angle becomes more credible to me. Without decomposition, a verification network starts to look like a loose review marketplace with fancy language around it. With decomposition, it starts to resemble a system that can create structured trust objects. Those objects can be rewarded, challenged, aggregated, and maybe eventually used inside broader onchain workflows. Certificates become more useful when they point to granular claims rather than blessing an entire blob of generated text.

Standardizing claims can improve consistency, but it can also flatten nuance. Not every statement fits neatly into a clean atomic unit. Some truths are contextual. Some depend on framing. Some are partly factual and partly interpretive. If decomposition becomes too rigid, the network may become better at verifying narrow fragments while losing the meaning of the whole. Builders should care about that risk. It is possible to create a system that is extremely good at certifying small pieces and still weak at judging whether the broader synthesis is misleading.

There is another risk too: whoever defines the transformation rules may quietly shape the entire network. If the decomposition layer decides what counts as a claim, how claims are split, and which forms are easier to verify, it influences incentives upstream and downstream. That is not a minor implementation detail. That is governance by architecture.

So when I look at Mira, I do not think the deepest question is whether many models can verify each other. I think the harder and more interesting question is whether the network can standardize claims without oversimplifying reality. That is the place where the design either becomes infrastructure or stays a compelling demo.

What I want to see next is not just more verifier throughput or broader participation. I want to see whether the claim decomposition layer remains stable under messy, real-world inputs: market commentary, disputed facts, conditional statements, fast-changing information, mixed media. That is where this design choice either proves itself or starts leaking ambiguity back into the system.
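One rough way to think about "stable under messy inputs" is to decompose the same content twice (for example, the original and a paraphrase) and measure how much the resulting claim sets overlap. The sketch below uses Jaccard similarity over normalized claim strings. The metric, the `normalize` helper, and the sample claim lists are all assumptions for illustration; exact string matching is crude, and a real system would likely need semantic matching instead.

```python
from typing import Iterable


def normalize(claim: str) -> str:
    """Lowercase, trim surrounding spaces and periods, collapse whitespace."""
    return " ".join(claim.lower().strip(" .").split())


def decomposition_stability(run_a: Iterable[str], run_b: Iterable[str]) -> float:
    """Jaccard overlap between two claim sets: 1.0 is identical, 0.0 is disjoint."""
    a = {normalize(c) for c in run_a}
    b = {normalize(c) for c in run_b}
    if not a and not b:
        return 1.0  # two empty decompositions are trivially identical
    return len(a & b) / len(a | b)


# Hypothetical outputs of a decomposition step on two phrasings of one post.
run_a = [
    "Token X rose.",
    "A partnership was announced.",
    "Exchange inflows are falling.",
]
run_b = [
    "token x rose",
    "A partnership was announced.",
    "Token X rose because of the partnership.",
]

score = decomposition_stability(run_a, run_b)
print(score)
```

In this made-up example the second run dropped one factual claim and fused two claims into a causal one, which is exactly the kind of drift that would leak ambiguity back into verification.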

The architecture is interesting, but the operating details will matter more. If verification becomes a real coordination layer, then the quietest part of the stack may turn out to be the most important one: who defines the claim before everyone else decides whether to trust it.


Mira’s Hardest Problem Is Defining the Claim
