The AI race today is obsessed with one thing: capability. Every week a new model appears claiming better reasoning, faster responses, or more parameters. But while the world is chasing smarter machines, a far more uncomfortable problem is quietly growing in the background — can we actually trust what AI produces?

This is the gap where Mira Network begins to look increasingly important.

Right now the internet is being flooded with AI-generated content. Articles, research summaries, code, data analysis, even market insights are being produced by models at a speed humans simply cannot match. The productivity boost is undeniable. But so is the risk. AI models hallucinate, misinterpret data, and sometimes generate completely fabricated information that still looks convincing. The more AI becomes embedded into daily workflows, the more dangerous this becomes.

That’s why the conversation is slowly shifting from “how powerful is the model?” to “how reliable is the output?”

Mira Network is building around that exact question.

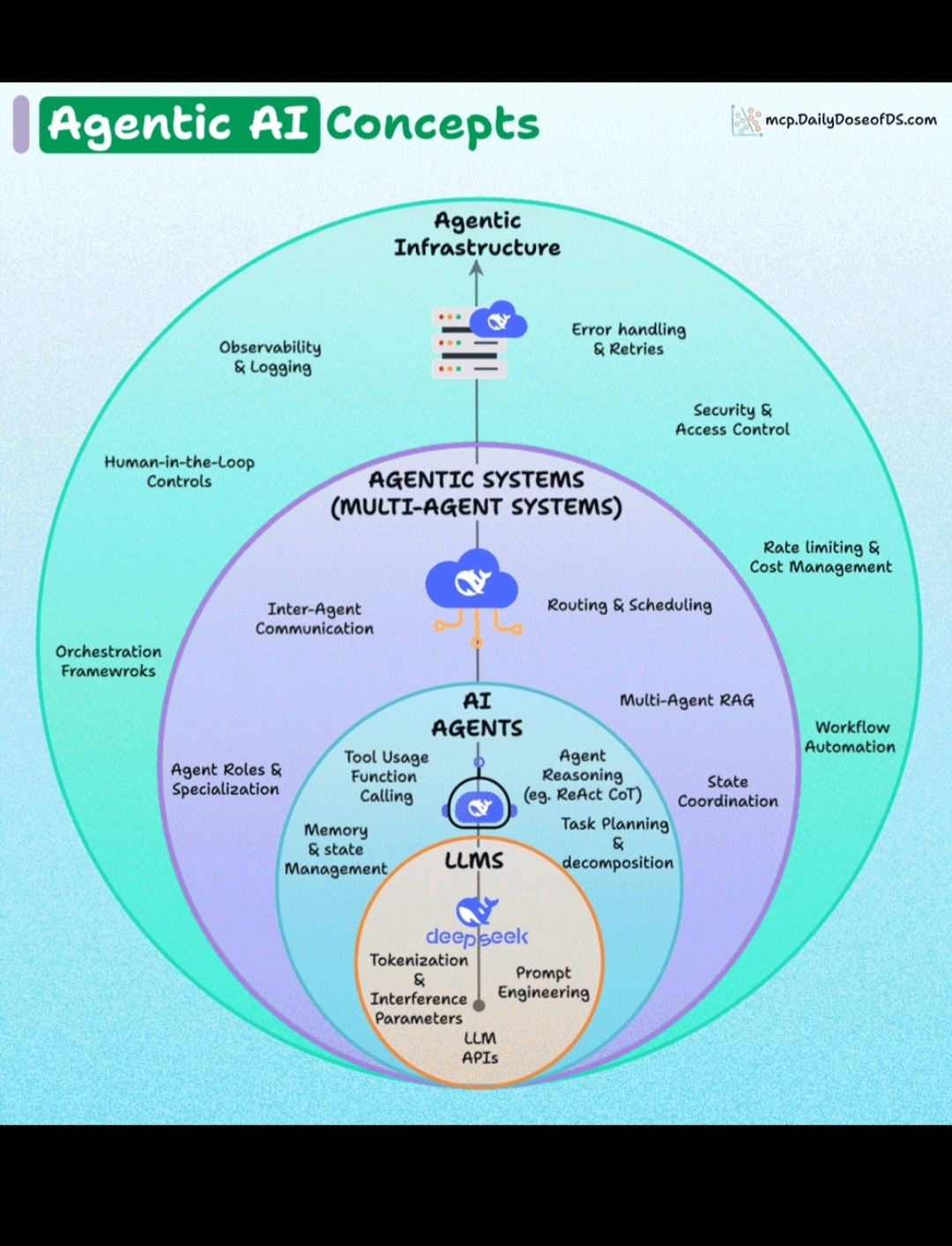

Instead of treating AI responses as something that must simply be accepted, Mira introduces the idea that AI outputs should be verifiable. The network focuses on creating a system where results produced by AI can be checked, validated, and confirmed through decentralized verification processes.
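To make the idea concrete, here is a minimal sketch of what "checking an output through several independent verifiers" could look like. It is not Mira's actual protocol. The `verify_output` function, the two-thirds quorum, and the toy verifiers are all hypothetical, chosen only to illustrate the general pattern of accepting a claim when enough independent checks agree.

```python
from fractions import Fraction
from typing import Callable, Sequence

# Hypothetical type: a verifier is any independent check that approves or rejects a claim.
Verifier = Callable[[str], bool]


def verify_output(claim: str, verifiers: Sequence[Verifier],
                  quorum: Fraction = Fraction(2, 3)) -> bool:
    """Return True only if at least `quorum` of the verifiers approve the claim."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    approvals = sum(1 for check in verifiers if check(claim))
    return Fraction(approvals, len(verifiers)) >= quorum


# Toy verifiers standing in for independent models or nodes (illustrative only).
KNOWN_FACTS = {"water boils at 100 C at sea level"}


def strict(claim: str) -> bool:
    # Approves only claims that match a known fact exactly.
    return claim in KNOWN_FACTS


def lenient(claim: str) -> bool:
    # Approves anything that mentions water, so it can be fooled.
    return "water" in claim


def always_reject(claim: str) -> bool:
    # A verifier that never approves, e.g. a faulty or adversarial node.
    return False


verifiers = [strict, lenient, always_reject]
accepted = verify_output("water boils at 100 C at sea level", verifiers)
rejected = verify_output("water boils at 50 C at sea level", verifiers)
```

In this sketch the first claim passes because two of three verifiers approve it, while the second fails because only the lenient verifier does. A real network would also need to decide who the verifiers are and how they are rewarded or penalized, which the sketch leaves out.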

In practical terms, that changes the dynamic of AI usage completely.

Imagine AI tools used in finance, healthcare research, legal analysis, or institutional decision-making. In these environments, accuracy is not optional. A wrong answer isn’t just inconvenient — it can be expensive, dangerous, or legally problematic. Systems that can verify AI outputs before they are trusted become extremely valuable infrastructure.

That is the narrative where Mira begins to make sense.

Mira's token acts as the coordination layer for the ecosystem, helping incentivize participants who contribute to verification processes and network security. In many ways, it turns trust into something economically secured rather than socially assumed, which is a powerful concept when dealing with machine-generated information.

My opinion is that the market may be focusing on the wrong part of the AI revolution.

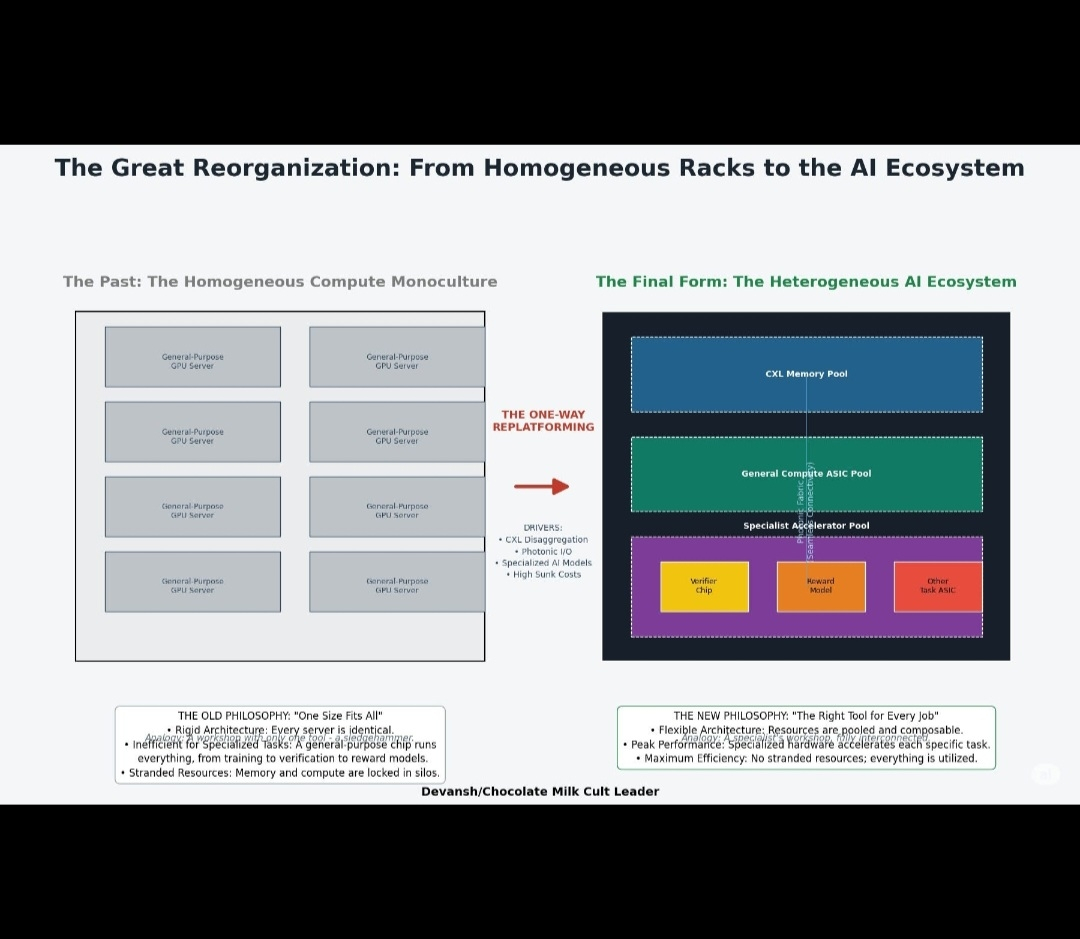

Most attention is currently directed toward model builders — the companies training massive neural networks. But technology history repeatedly shows that infrastructure layers often become just as important as the applications themselves. Cloud computing, payment rails, and data infrastructure quietly became the backbone of the modern internet.

AI will likely develop in a similar way.

If billions of AI-generated outputs are going to shape decisions, influence markets, and guide real-world actions, the systems verifying those outputs will become critical pieces of the digital economy. In that scenario, networks focused on verifiable AI are not just niche experiments — they are foundational infrastructure.

That’s why projects like Mira Network are worth paying attention to right now.

Not because they offer hype or a quick narrative, but because they are working on a problem the industry hasn’t solved yet.

AI may generate the answers, but sooner or later the world will demand proof that those answers are actually correct.

And if that moment arrives, the networks focused on trust, verification, and reliability could quietly become the most important layer of the entire AI ecosystem, which is exactly the space where MIRA is trying to build its foundation.