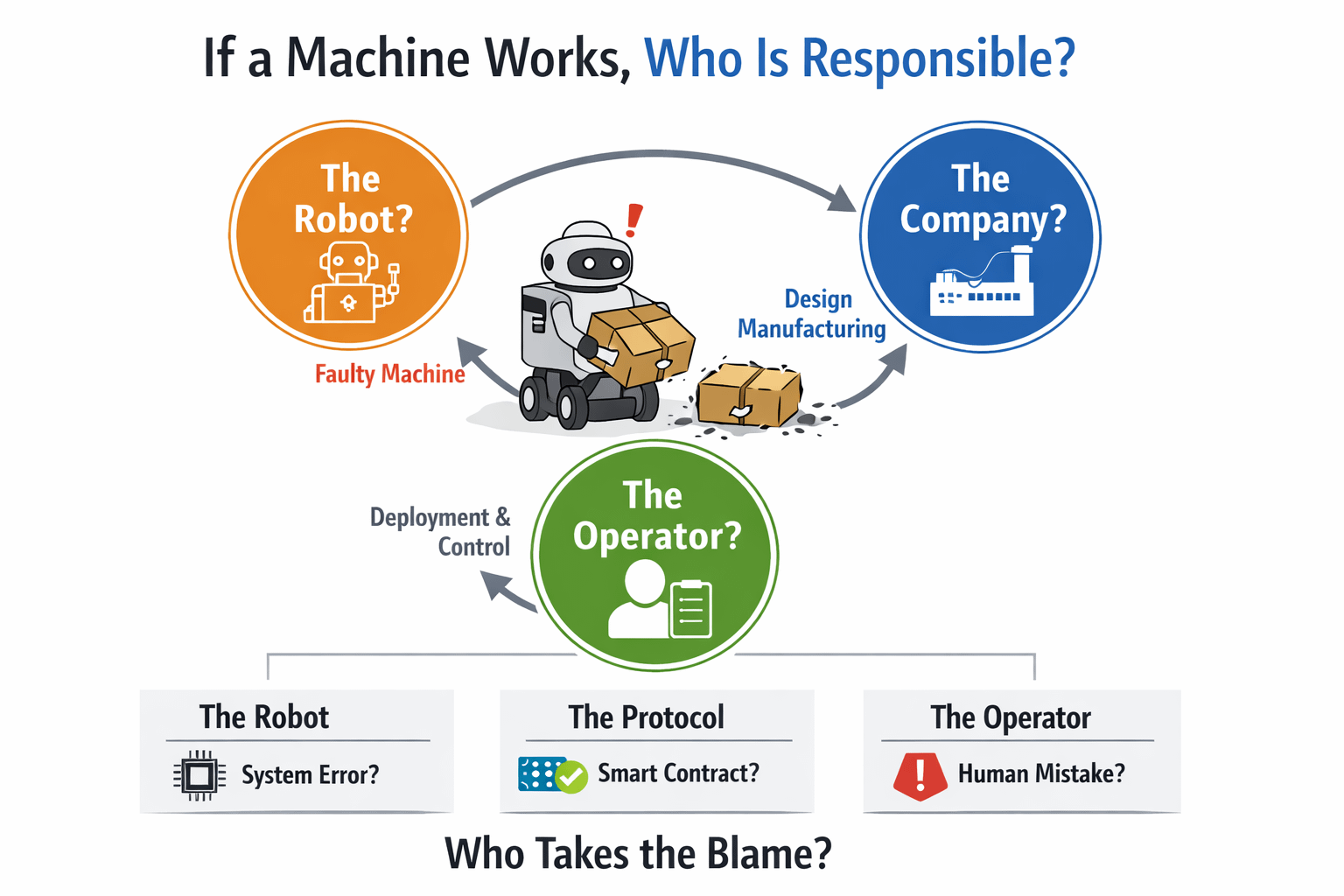

I remember the day I went to class and left a package delivery to be handled at home while I was away. I trusted the automated system to handle everything. Later I heard that the delivery robot had dropped the package. At that moment a simple question came to mind: who is at fault? The robot? The company that built the robot? Me, for trusting the robot?

This is not an isolated incident. As automation spreads rapidly around the world, an old question is taking on a new form: if a machine does the work instead of a human, who answers when something goes wrong? It is also a question at the heart of the Fabric Foundation's work with robotics and blockchain.

When a tool fails, the tool itself is not at fault; the one who chose the tool is. When a network like the Fabric Foundation brings robotics and blockchain together to create an automated ecosystem, this question is no longer just philosophical. It becomes a business reality. If a robot misses a delivery, if a smart contract executes at the wrong time, or if an automated decision harms a client, who will step forward and take responsibility? Who will say, "This mistake is mine," and take the blame for the machine?

Imagine a robot node on the Fabric network completing a logistics task. Because of the epoch cycle, the reward for that task is never triggered: the network's timing window had already closed. Now the question arises: is the robot responsible? The protocol? The operator who deployed the task? If responsibility across these three layers is not clear, the network's trustworthiness is at stake.

From a business perspective, if this question has no answer, investment will not come. No corporate client will trust a system where mistakes happen and no one takes responsibility for them. The biggest barrier to artificial intelligence and robotics today is not technical. It is the absence of accountability for machines.

If an automated system in your business makes a decision, who is listed in your contract as responsible for that machine? In a network like the Fabric Foundation, is it possible to define "responsibility" in code, or does it always come down to human judgment? If a robot does the work and a human receives the outcome, who owns the failure in between?

The Fabric Foundation is tackling this challenge. It is not merely connecting robots and blockchain; it is building a transparent framework for responsibility within that connection. The ROBO token reward system, epoch-based timing, and on-chain verification are all attempts to encode accountability for robots. But code alone is not enough. It also takes human judgment beyond the protocol, operator vigilance, and the ethical responsibility of system designers. Without these three layers, no smart contract can ever be fully safe.

No matter how intelligent a machine becomes, it cannot go beyond the rules humans write for it. Humans can, and that is the core of the problem. Machines do not make mistakes; we do, when we hand them the work.

Not a Conclusion, But a Question

Next time you rely on an automated system, pause and ask yourself: if this machine makes a mistake, am I ready to take responsibility for it?