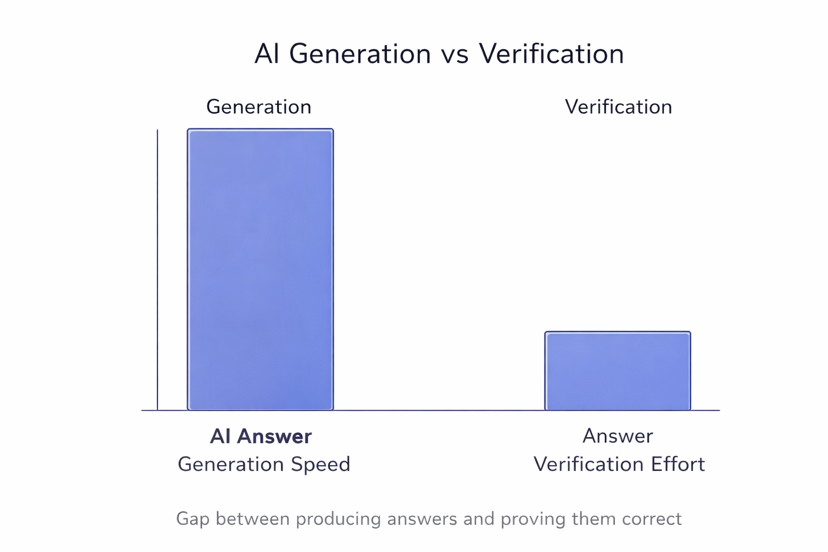

Over the last few years, AI systems have become extremely good at producing answers. You can ask almost anything, and within seconds the system writes a confident response. Market explanations, technology analysis, even research summaries appear instantly. From the outside, it feels like knowledge has become very easy to access. But after using these systems for some time, one important question starts to appear: where did this answer actually come from, and how sure can we be that it is correct?

The truth is simple. Generating answers has become easy. Verifying those answers is the difficult part.

Most AI models work by predicting the next words based on patterns from training data. Because of this, they can create explanations that sound very convincing even when the information is not fully accurate. The language feels confident, but confidence does not always mean correctness. Sometimes the data behind the answer is outdated, incomplete, or slightly wrong. This is why many people still double-check AI responses even when they look very professional.

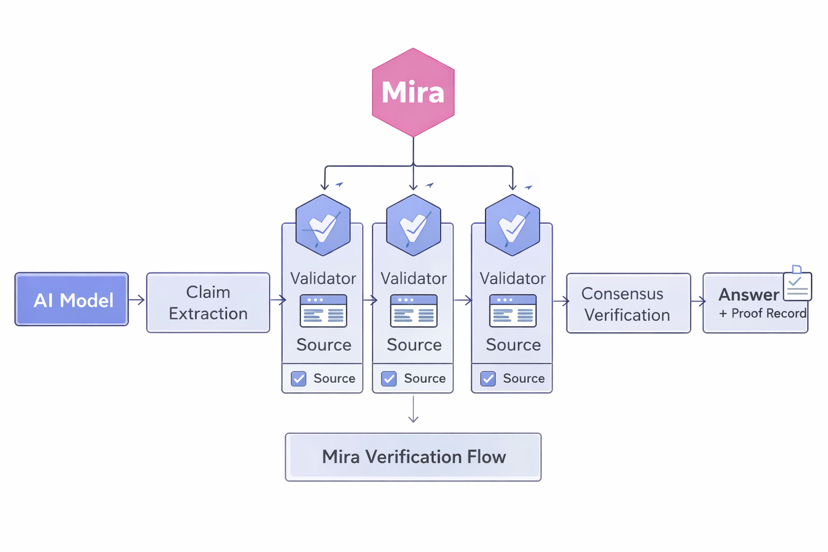

This is where a new idea begins to appear in AI infrastructure. Instead of trusting the full answer immediately, the system can break the response into smaller pieces and check them individually. This is the direction networks like Mira are exploring.

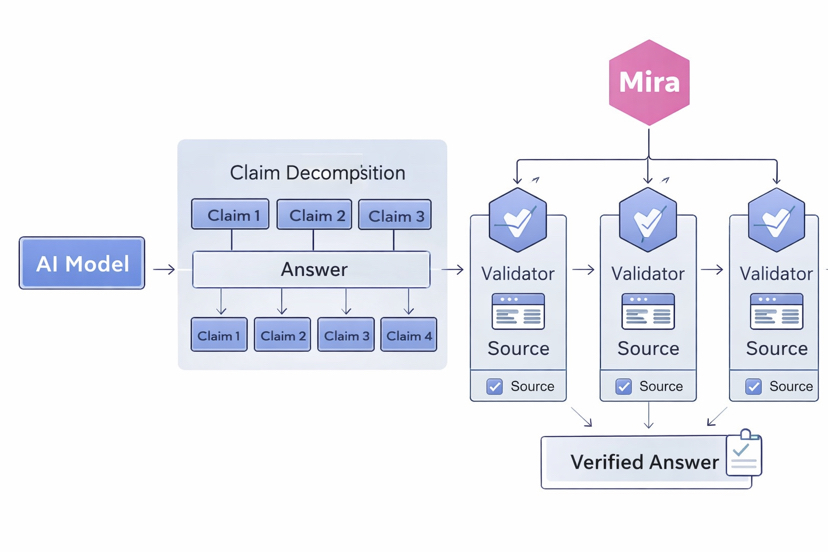

The concept is actually simple. When an AI produces an answer, the system separates that response into individual claims. Each claim represents a specific statement inside the larger explanation. For example, if the AI explains a market trend, it may include claims about price movements, user activity, or network growth. Instead of accepting the entire paragraph as truth, Mira focuses on verifying these smaller components.
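To make the idea concrete, here is a minimal sketch of claim separation in Python. It is not Mira's actual method: the `Claim` type and the `split_into_claims` function are hypothetical names, and treating every sentence as one claim is a deliberately naive assumption. A real system would need something much smarter to isolate statements that can be checked on their own.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One checkable statement taken from a larger AI answer (hypothetical type)."""
    index: int
    text: str


def split_into_claims(answer: str) -> list[Claim]:
    """Naively treat each sentence of the answer as a separate claim.

    Assumption: sentences end with '.', '!' or '?' followed by whitespace.
    """
    sentences = re.split(r"(?<=[.!?])\s+", answer.strip())
    non_empty = [sentence for sentence in sentences if sentence]
    return [Claim(index=i, text=sentence) for i, sentence in enumerate(non_empty)]


answer = (
    "Price rose 5% last week. "
    "Daily active users doubled. "
    "Network growth slowed."
)
for claim in split_into_claims(answer):
    print(claim.index, claim.text)
```

Each printed line is now a small unit that can be verified separately, instead of one paragraph that has to be accepted or rejected as a whole.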

Once claims are separated, they can be checked by independent validators inside the network. These validators examine data sources, research material, and public records to confirm whether the claim is correct. Because the verification is done by multiple participants, the process becomes more reliable. No single participant can easily push incorrect information through the system. Agreement must appear across several checks.
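The agreement step can be sketched in a few lines. This is only an illustration under stated assumptions: the function name `reach_agreement`, the two-thirds threshold, and the minimum of three validators are all hypothetical choices, not parameters taken from Mira or any other network.

```python
from fractions import Fraction


def reach_agreement(
    votes: dict[str, bool],
    threshold: Fraction = Fraction(2, 3),  # assumed supermajority, not a real network value
    min_validators: int = 3,               # assumed minimum number of independent checks
) -> str:
    """Combine independent validator votes on one claim into a verdict.

    votes maps a validator id to True (claim confirmed) or False (claim rejected).
    """
    if len(votes) < min_validators:
        return "insufficient"  # a single participant cannot decide alone
    approvals = sum(votes.values())
    share = Fraction(approvals, len(votes))
    if share >= threshold:
        return "verified"
    if 1 - share >= threshold:
        return "rejected"
    return "disputed"  # validators are split, so no verdict is reached


print(reach_agreement({"v1": True, "v2": True, "v3": False}))
print(reach_agreement({"v1": True}))
```

With these assumed settings, two confirmations out of three are enough to verify a claim, while a lone validator gets an "insufficient" result no matter how it votes.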

Another interesting part of this structure is accountability. In some verification networks, validators place economic stake behind their decisions. This means confirming a claim is not just a technical action but also a responsibility. If validators support incorrect information, they risk losing part of their stake. This creates an incentive to verify carefully instead of approving everything quickly.
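A small sketch shows how such an incentive could work. Everything here is an assumption for illustration: the function name `settle_stakes`, the 10% penalty, and the idea that a final verdict is already known are hypothetical and do not describe the rules of any specific network.

```python
def settle_stakes(
    stakes: dict[str, int],
    votes: dict[str, bool],
    final_verdict: bool,
    slash_percent: int = 10,  # assumed penalty size, purely illustrative
) -> dict[str, int]:
    """Return updated stakes after one claim has been settled.

    Validators whose vote disagrees with the final verdict lose
    slash_percent of their stake. Validators who did not vote are untouched.
    """
    updated: dict[str, int] = {}
    for validator, stake in stakes.items():
        vote = votes.get(validator)
        if vote is not None and vote != final_verdict:
            penalty = stake * slash_percent // 100
            updated[validator] = stake - penalty
        else:
            updated[validator] = stake
    return updated


stakes = {"v1": 1000, "v2": 500, "v3": 800}
votes = {"v1": True, "v2": False}  # v3 did not vote on this claim
print(settle_stakes(stakes, votes, final_verdict=True))
```

In this example only `v2`, who voted against the final verdict, loses part of its stake. Approving everything quickly becomes expensive as soon as some approvals turn out to be wrong.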

What makes this approach important is that it changes how AI answers are treated. Instead of assuming the AI already knows the correct result, the system treats the output as a starting point that still needs verification. AI becomes the generator of ideas, while the network becomes the mechanism that checks those ideas. Generation is fast and parallel, while certainty is built step by step through verification.

If systems like Mira continue developing this model, the internet could slowly move toward a different information structure. Instead of reading an AI answer and guessing whether it is correct, users might see evidence directly connected to each claim. The interface could show which validators checked the information, which data sources were used, and how strong the agreement between validators was.
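One way to picture this is as a small record attached to every claim. The sketch below is hypothetical: the `ClaimEvidence` type, its fields, and the `render` function are invented for illustration, and the source names in the example are placeholders rather than real data.

```python
from dataclasses import dataclass


@dataclass
class ClaimEvidence:
    """Evidence an interface could show next to one claim (hypothetical structure)."""
    claim: str
    sources: list[str]      # data sources the validators consulted
    votes: dict[str, bool]  # validator id -> confirmed (True) or rejected (False)

    @property
    def agreement(self) -> float:
        """Share of validators that confirmed the claim, between 0.0 and 1.0."""
        if not self.votes:
            return 0.0
        return sum(self.votes.values()) / len(self.votes)


def render(evidence: ClaimEvidence) -> str:
    """Format one claim together with its evidence as plain text."""
    validators = ", ".join(sorted(evidence.votes))
    sources = ", ".join(evidence.sources)
    return (
        f"{evidence.claim}\n"
        f"  checked by: {validators}\n"
        f"  sources: {sources}\n"
        f"  agreement: {evidence.agreement:.0%}"
    )


evidence = ClaimEvidence(
    claim="Daily active users doubled.",
    sources=["placeholder-analytics-report", "placeholder-public-dashboard"],
    votes={"v1": True, "v2": True, "v3": True, "v4": False},
)
print(render(evidence))
```

The reader sees the statement, who checked it, what it was checked against, and how strongly the validators agreed, all in one place.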

This small change could transform how people interact with AI systems. Trust would no longer depend on how confident the answer sounds. Trust would come from the proof attached to the answer.

From a personal perspective, this feels like the natural next step in AI development. Generation technology has already moved very fast. Verification infrastructure is only beginning to grow. The systems that solve the trust problem may become just as important as the models that generate the information.

When AI answers begin carrying evidence instead of only confident language, the relationship between humans and machines could change again. We will not only read what the AI says. We will also see how the system knows it. And that difference may turn AI from a powerful text generator into something much closer to a reliable knowledge partner.