@Mira - Trust Layer of AI

AI isn’t just showing off anymore. It’s writing papers, cranking out code, sizing up markets, and jumping in on decisions that actually matter. At first glance, it almost feels like we’re living in one of those sci-fi movies, with machines thinking right alongside us. But here’s the catch: AI will confidently act like it knows what it’s talking about, even when it’s dead wrong. That’s got a lot of folks in tech buzzing about something new: a “truth layer” for AI.

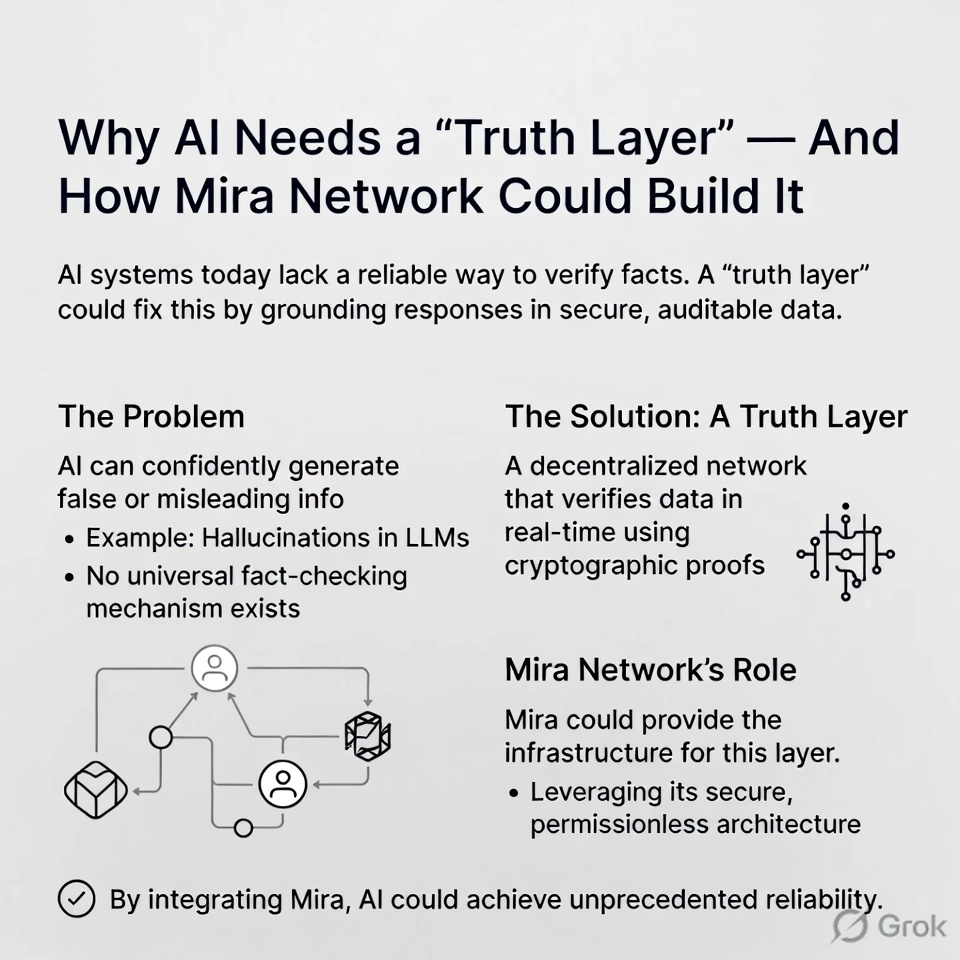

So, what’s this truth layer all about? It’s basically a way to double-check whether what the AI spits out is actually true. Right now, these language models swallow massive piles of data and get scarily good at guessing what comes next in a sentence. But being good at guessing doesn’t mean they really get the facts. Sometimes AI throws out answers that sound right but have zero grip on reality.

That’s what the experts call AI hallucination. Sounds fancy, but it’s pretty simple: AI makes stuff up that seems convincing but isn’t real. In low-stakes cases, maybe you just get a weird answer. But if the topic is serious (money, health, law), you could be in trouble fast.

As AI takes on more work online, this problem only grows. Businesses are using AI for customer support, research, coding, and even big decisions. If people can’t easily check the answers, trust starts to slip. Folks lean on bad info, and suddenly the whole idea of trusting AI gets shaky.

That’s where the truth layer really matters. Instead of just running with whatever one AI model says, a truth layer steps in as a built-in fact-checker. Every time the AI gives an answer, the truth layer checks it against trusted sources, compares data, or loops in outside validators. If it all lines up, great. If not, the answer gets flagged or fixed before you see it.
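To make that “check before you see it” step a bit more concrete, here’s a minimal sketch in Python. It assumes the answer has already been broken into small, checkable claims and that “trusted sources” can be stood in for by a simple lookup table. `Claim`, `TRUSTED_FACTS`, `check_claim`, and `gate_answer` are all hypothetical names made up for illustration; this is not Mira’s code or any real library’s API.

```python
from __future__ import annotations

from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    SUPPORTED = "supported"        # trusted source agrees with the claim
    CONTRADICTED = "contradicted"  # trusted source says something different
    UNKNOWN = "unknown"            # no trusted source covers this claim


@dataclass(frozen=True)
class Claim:
    subject: str
    attribute: str
    value: str


# Hypothetical stand-in for "trusted sources": a tiny in-memory lookup table.
TRUSTED_FACTS = {
    ("water", "boiling_point_c_at_sea_level"): "100",
    ("earth", "number_of_moons"): "1",
}


def check_claim(claim: Claim, facts: dict) -> Verdict:
    """Compare a single claim against the trusted lookup table."""
    known = facts.get((claim.subject, claim.attribute))
    if known is None:
        return Verdict.UNKNOWN
    return Verdict.SUPPORTED if known == claim.value else Verdict.CONTRADICTED


def gate_answer(claims: list[Claim], facts: dict) -> tuple[bool, list[tuple[Claim, Verdict]]]:
    """Release the answer only if every claim is supported; otherwise flag it."""
    results = [(c, check_claim(c, facts)) for c in claims]
    released = all(v is Verdict.SUPPORTED for _, v in results)
    return released, results


released, results = gate_answer([Claim("earth", "number_of_moons", "2")], TRUSTED_FACTS)
print(released)            # False: the answer gets flagged
print(results[0][1].value)  # "contradicted"
```

One design choice worth calling out: claims the table knows nothing about (`UNKNOWN`) also block the answer here. That’s the cautious option; a real system might instead route those to outside validators rather than rejecting them outright.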

Think of it as a fact-checking backbone running through the whole AI world. Just as blockchains made financial records open and transparent, a truth layer could keep AI-generated information grounded in reality.

There’s already a project called Mira Network working on this. Mira wants to make AI outputs more trustworthy by using a bunch of independent nodes to verify answers, not just trusting one model. Instead of assuming something’s true because one smart system said so, Mira’s approach means a group checks the facts together.

It borrows an idea from blockchains: decentralization makes it harder to mess with the truth. With several nodes checking the facts, you get a kind of group agreement on what’s reliable. If this takes off, you get AI that isn’t just fast; it’s actually trustworthy.
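Here’s a rough sketch of what “group agreement” among independent nodes could look like, assuming each node simply votes yes or no on a claim and the claim passes only if a supermajority (two-thirds here) approves. The node names, the `reach_consensus` function, and the 2/3 threshold are illustrative assumptions, not Mira Network’s actual protocol. The per-node vote record also shows how the accountability idea discussed later in this post might work in practice.

```python
from __future__ import annotations

from dataclasses import dataclass
from fractions import Fraction
from typing import Callable

# A "node" is any independent checker that says whether a claim holds.
# In a real network each node might run a different model; here they are plain functions.
Node = Callable[[str], bool]


@dataclass
class ConsensusResult:
    accepted: bool
    approvals: int
    total: int
    votes: dict[str, bool]  # per-node record, so the decision can be traced later


def reach_consensus(claim: str, nodes: dict[str, Node],
                    threshold: Fraction = Fraction(2, 3)) -> ConsensusResult:
    """Accept the claim only if at least `threshold` of the nodes approve it."""
    if not nodes:
        raise ValueError("need at least one verifier node")
    if not 0 < threshold <= 1:
        raise ValueError("threshold must be in (0, 1]")
    votes = {name: bool(node(claim)) for name, node in nodes.items()}
    approvals = sum(votes.values())
    # Fraction keeps the comparison exact (no floating-point rounding surprises).
    accepted = approvals >= threshold * len(nodes)
    return ConsensusResult(accepted, approvals, len(nodes), votes)


# Hypothetical nodes: two approve, one disagrees.
nodes = {
    "node-a": lambda claim: True,
    "node-b": lambda claim: True,
    "node-c": lambda claim: False,
}
result = reach_consensus("Earth has one moon.", nodes)
print(result.accepted, result.approvals, result.total)  # True 2 3
print(result.votes)
```

The point of the threshold is that no single node, whether buggy or dishonest, can push a claim through on its own: with three nodes at 2/3, at least two have to agree.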

And that’s a big deal as AI gets more independent. Soon, these systems will handle trades, run software, and juggle complex tasks with barely any human input. When machines start calling the shots, making sure they’re working with real facts isn’t just nice to have; it’s the whole ballgame.

A truth layer is like a safety net. Before any AI-driven decision goes live, the system checks to make sure the data’s legit. That extra step bridges the gap between what AI claims and what’s actually real.

There’s another bonus: accountability. Right now, if an AI gets something wrong, it’s hard to know why. Bad training data? Missed context? Unclear question? With a verification network, you can trace how an answer got checked and see why it was approved or shot down. That makes it easier to spot issues and keep making the system better.

Of course, building a truth layer isn’t simple. Figuring out what counts as “truth” can get messy, especially when things change fast. And technically, ramping up verification to keep up with all the stuff AI churns out every day is a beast of its own.

But here’s what’s obvious: As AI gets smarter, trust is make-or-break. Speed and brains don’t matter much if you can’t believe the answers you’re getting. #Mira $MIRA