The first time I started looking into Fabric Protocol, I realized that it approaches robotics from a direction most discussions ignore. When people talk about robotics, the conversation usually focuses on hardware capabilities or artificial intelligence models. Fabric is not really trying to compete in that space. Instead, it focuses on something more structural: the coordination layer that could allow robots, software agents, and human operators to interact through a shared network.

That idea immediately made me curious. Robotics systems today are usually closed environments. A single company controls the hardware, the software stack, and the environment where the robot operates. That works well inside factories or controlled spaces, but it becomes harder when machines need to interact across different systems or organizations. Fabric Protocol seems to be exploring what happens when that control layer becomes shared infrastructure rather than something owned by one company.

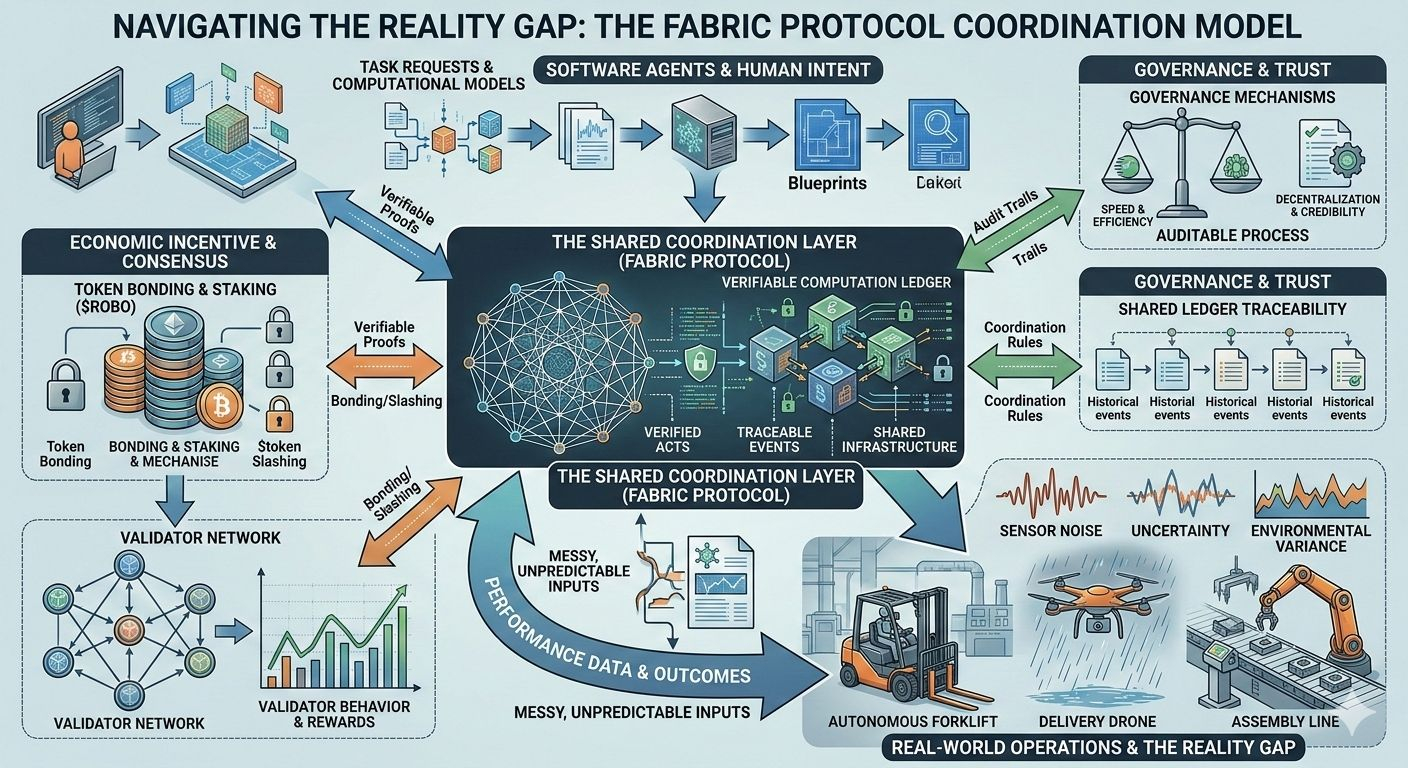

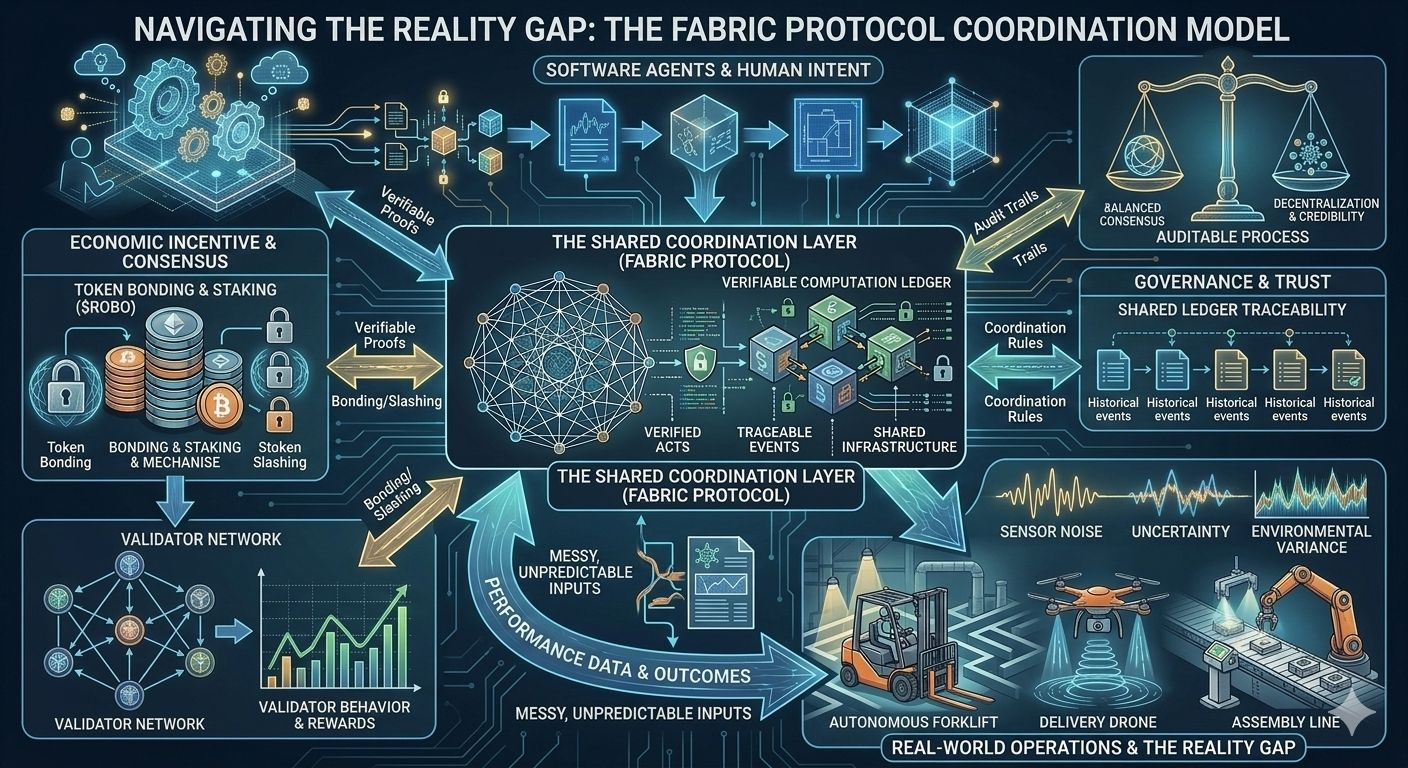

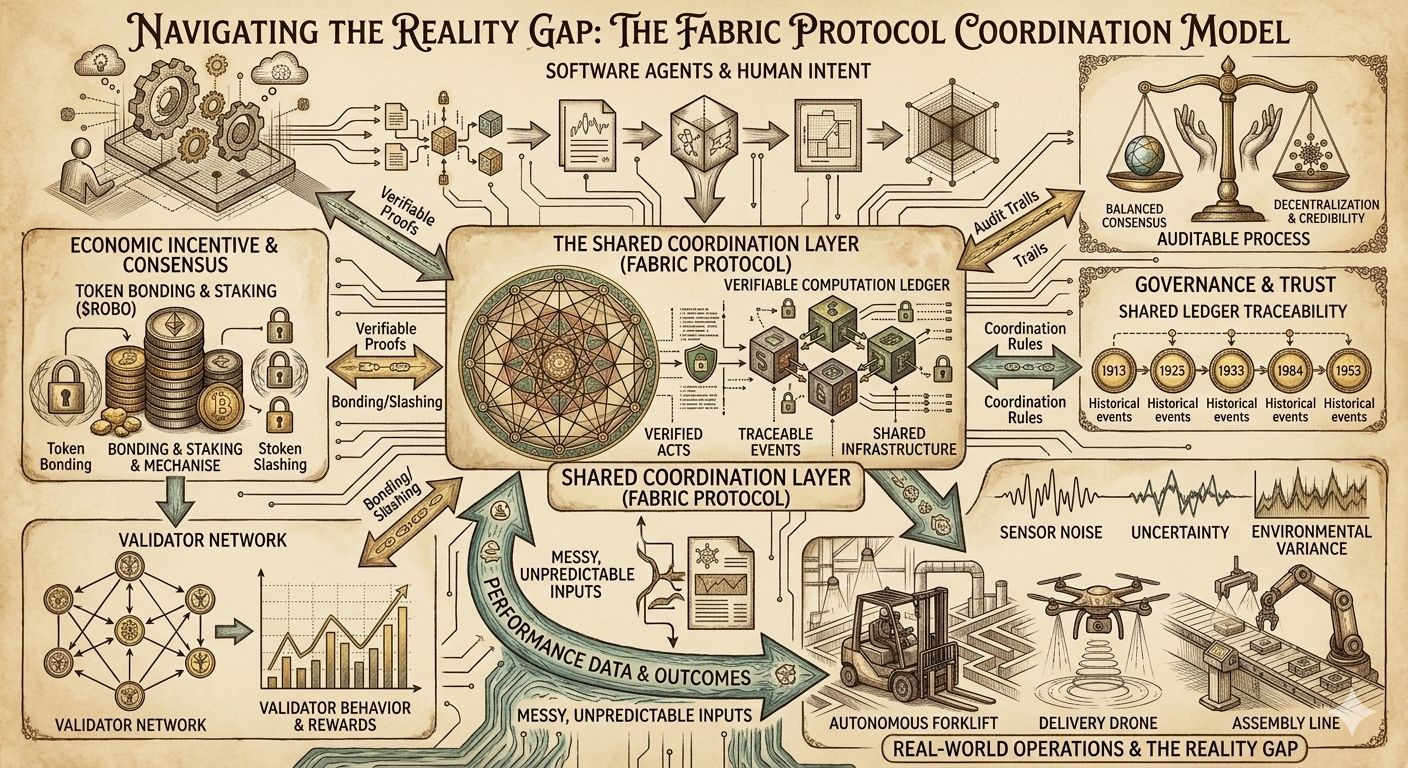

From what I could understand while studying the system, Fabric treats robots and software agents as participants inside a network rather than isolated machines. When an agent performs a task or generates data, that activity can be recorded and verified through the network’s computational layer. Instead of simply trusting the machine that produced the result, the protocol tries to introduce a process where outcomes can be verified by other participants.

This is where the idea of verifiable computing becomes important. In simple terms, the system attempts to make machine actions auditable. If an autonomous agent completes a task, the network can check whether the computation or result followed the expected rules. In theory, this creates a form of accountability that traditional robotics systems rarely have.
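One simple way to make a result auditable is re-execution: a verifier reruns the same deterministic task on the same inputs and compares digests instead of trusting the agent's report. The sketch below assumes exactly that. `TaskClaim`, `plan_route`, and `verify` are hypothetical names, and nothing here is taken from Fabric's actual verification design, which may rely on entirely different techniques.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass
class TaskClaim:
    """Hypothetical record an agent submits after finishing a task."""
    agent_id: str
    inputs: dict
    result_hash: str  # SHA-256 of the JSON-encoded result the agent reports


def digest(result) -> str:
    # Canonical JSON (sorted keys) so the same result always hashes the same way.
    return hashlib.sha256(json.dumps(result, sort_keys=True).encode()).hexdigest()


def plan_route(inputs: dict) -> dict:
    # Hypothetical stand-in for the "expected rules": a deterministic task
    # that any verifier can re-run on the same inputs.
    waypoints = sorted(inputs["waypoints"])
    return {"route": waypoints, "stops": len(waypoints)}


def verify(claim: TaskClaim) -> bool:
    # Re-execute the task independently and compare digests
    # rather than trusting the agent's own report.
    return digest(plan_route(claim.inputs)) == claim.result_hash


honest = TaskClaim("robot-7", {"waypoints": [3, 1, 2]},
                   digest({"route": [1, 2, 3], "stops": 3}))
dishonest = TaskClaim("robot-9", {"waypoints": [3, 1, 2]},
                      digest({"route": [1, 2], "stops": 2}))
print(verify(honest), verify(dishonest))  # True False
```

The check only works because `plan_route` is deterministic; a result derived from noisy sensor readings would not hash identically on every run.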

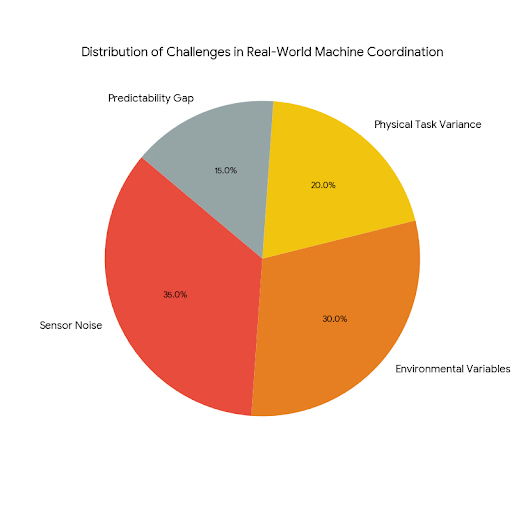

But the moment you start thinking about this in real-world conditions, things become more complicated. Purely digital networks mostly deal with well-defined inputs. Robotics doesn't. Sensors can misread environments, machines can behave slightly differently under similar conditions, and physical tasks often include variables that are difficult to measure precisely. So the natural question becomes: how does a verification system handle uncertainty when machines operate in messy, unpredictable environments?

Another part of Fabric Protocol that caught my attention is how it describes itself as an open network supported by the Fabric Foundation. Openness in decentralized systems sounds simple at first, but in practice it usually comes with hidden boundaries. Anyone may technically be able to join the network, but running reliable infrastructure requires computing power, stable uptime, and operational resources. Over time, those requirements tend to shape who actually participates in meaningful roles.

The protocol also attempts to coordinate data, computation, and regulation through a shared ledger. That design choice introduces something robotics networks often lack: traceability. If a robot or agent performs an action, the network could theoretically record the sequence of events that led to that outcome. That kind of transparency might become increasingly important as autonomous systems start interacting with public environments.
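The traceability idea can be illustrated with a hash-chained log, the basic building block behind most ledgers: each entry stores the hash of the previous one, so altering any past record becomes detectable. This is a minimal single-process sketch with hypothetical names (`EventLog`, `entry_hash`). It leaves out the replication and consensus a shared ledger would add, and it assumes nothing about how Fabric actually structures its records.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def entry_hash(body: dict) -> str:
    # Canonical JSON so identical entries always produce identical hashes.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


@dataclass
class EventLog:
    """Hypothetical append-only log where each entry commits to the previous one."""
    entries: list = field(default_factory=list)

    def append(self, agent_id: str, action: str) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"agent": agent_id, "action": action, "prev": prev}
        h = entry_hash(body)
        self.entries.append({**body, "hash": h})
        return h

    def is_intact(self) -> bool:
        # Recompute every hash and link; editing or reordering any entry breaks the chain.
        prev = GENESIS
        for e in self.entries:
            body = {"agent": e["agent"], "action": e["action"], "prev": e["prev"]}
            if e["prev"] != prev or e["hash"] != entry_hash(body):
                return False
            prev = e["hash"]
        return True


log = EventLog()
log.append("robot-7", "picked up parcel 42")
log.append("robot-7", "delivered parcel 42 to dock B")
print(log.is_intact())  # True
log.entries[0]["action"] = "picked up parcel 43"  # rewrite history
print(log.is_intact())  # False
```

Note what the chain does not prove: it shows a record was not changed after the fact, not that "delivered parcel 42" was true when it was written.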

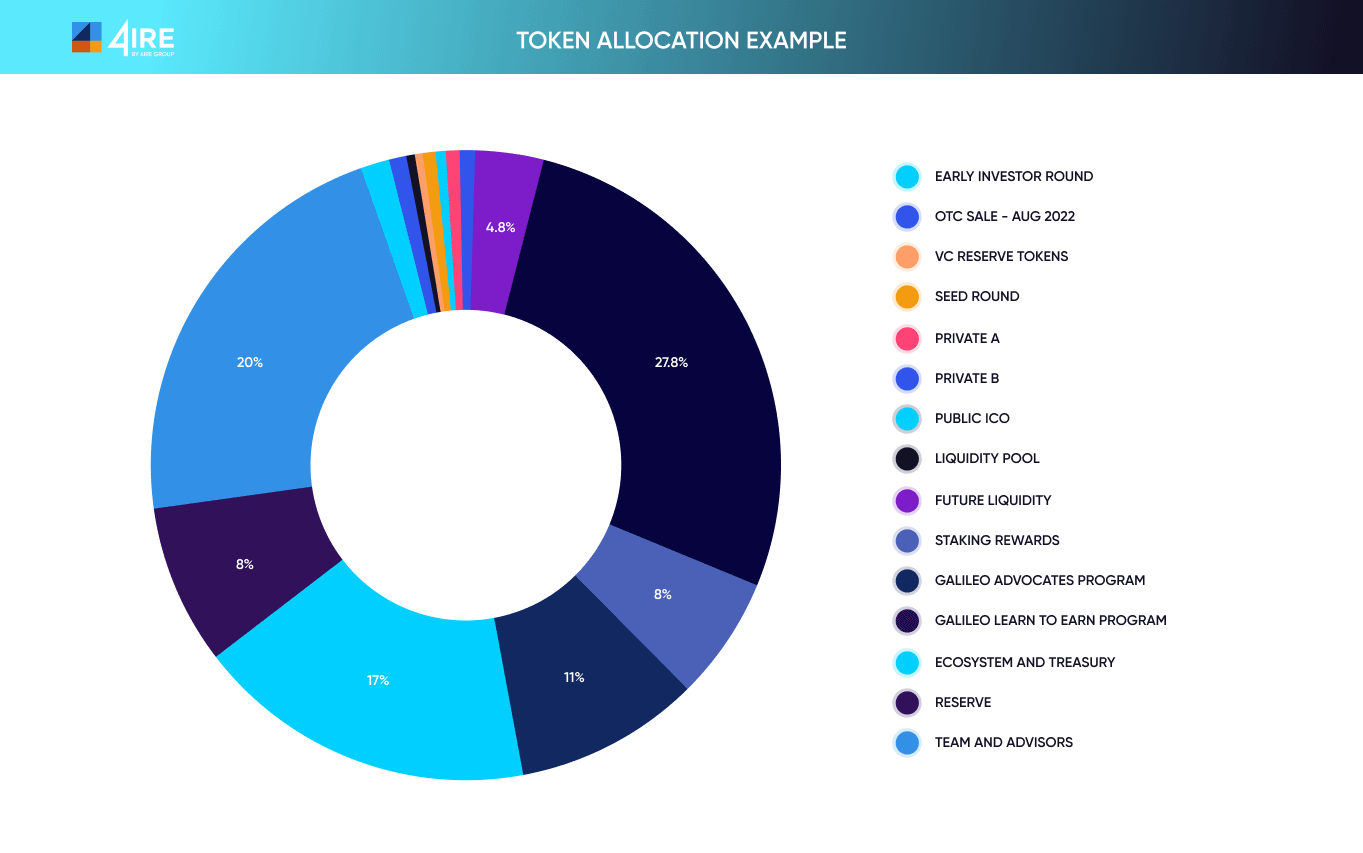

Still, transparency alone does not solve every problem. Recording activity on a ledger only works if the participants submitting information behave honestly. That is where the economic layer of the system comes in. Tokens within the network appear to function mainly as incentive tools rather than just tradable assets. Operators who run agents or validation infrastructure may need to stake or bond tokens to participate.

The idea behind bonding is fairly straightforward. If participants lock economic value in order to operate, they have something to lose if their actions produce incorrect or misleading results. This kind of mechanism has been used in many blockchain networks to align incentives. However, it also introduces another challenge: if the economic requirements become too high, participation may gradually concentrate among a smaller number of well-funded operators.
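Both sides of bonding, the penalty for bad results and the barrier to entry, fit in a few lines. `MIN_BOND`, `SLASH_FRACTION`, and the operator names are illustrative assumptions, not Fabric parameters; the sketch only shows the shape of the trade-off.

```python
from dataclasses import dataclass

MIN_BOND = 100.0      # hypothetical minimum stake required to operate
SLASH_FRACTION = 0.5  # hypothetical share of the bond lost per proven fault


@dataclass
class Operator:
    name: str
    bond: float  # tokens locked to participate (illustrative units)


def can_participate(op: Operator, min_bond: float = MIN_BOND) -> bool:
    return op.bond >= min_bond


def slash(op: Operator) -> float:
    # Remove part of the bond when the operator's result is shown to be incorrect.
    penalty = op.bond * SLASH_FRACTION
    op.bond -= penalty
    return penalty


operators = [Operator("small-lab", 120.0), Operator("large-fleet", 5000.0)]
print([op.name for op in operators if can_participate(op)])
# → ['small-lab', 'large-fleet']

# A higher entry requirement leaves only the well-funded operator.
print([op.name for op in operators if can_participate(op, min_bond=1000.0)])
# → ['large-fleet']

# A proven fault costs the small operator half its bond and its eligibility.
print(slash(operators[0]), can_participate(operators[0]))
# → 60.0 False
```

Raising `min_bond` makes misbehavior more expensive, but it also shrinks the set of operators who can join at all, which is the concentration risk described above.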

Liquidity and transaction flow also become important when a network coordinates machine activity. Robots and agents interacting through decentralized infrastructure could generate frequent micro-transactions related to computation, verification, or task execution. If those transactions become slow or expensive, the system may struggle to support real-time operations. Efficient routing and low execution friction will likely be necessary for the network to function smoothly.

Validator behavior is another piece of the puzzle. In most decentralized networks, validators respond to economic incentives. If verification rewards are structured poorly, participants might focus on maximizing rewards rather than maintaining accuracy. Designing a system where validators remain economically motivated while still prioritizing reliable verification is not always easy.
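The incentive problem can be made concrete with a toy expected-value model: a careful validator pays an effort cost on every check, while a lazy one approves everything and only loses money when a bad approval is caught. The model and all numbers are hypothetical assumptions for illustration, not a description of Fabric's reward scheme.

```python
def expected_payoff(reward: float, effort_cost: float, fault_rate: float,
                    detection_prob: float, penalty: float, careful: bool) -> float:
    """Expected payoff per verification task under a simple hypothetical model."""
    if careful:
        # A careful validator pays the cost of checking and (we assume) never errs.
        return reward - effort_cost
    # A lazy validator approves everything at no cost and is penalized only when it
    # approves a faulty result AND that mistake is later detected.
    return reward - fault_rate * detection_prob * penalty


def laziness_pays(detection_prob: float) -> bool:
    # Hypothetical parameters: 5% of submissions are faulty, checking costs 0.3.
    params = dict(reward=1.0, effort_cost=0.3, fault_rate=0.05, penalty=10.0)
    lazy = expected_payoff(detection_prob=detection_prob, careful=False, **params)
    careful = expected_payoff(detection_prob=detection_prob, careful=True, **params)
    return lazy > careful


print(laziness_pays(0.2))  # True: rarely caught, so skipping the work wins
print(laziness_pays(0.8))  # False: frequent detection makes careful checking worthwhile
```

With these numbers the break-even point is a detection probability of 0.6 (where 0.05 × 0.6 × 10 equals the 0.3 effort cost); below it, a purely reward-maximizing validator has no reason to check anything.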

Governance also enters the conversation once machines begin interacting with shared infrastructure. Autonomous agents may eventually face unexpected situations or edge cases that require human intervention. When that happens, the network must have a process for adjusting rules or resolving disputes. If governance becomes too centralized, it risks undermining the credibility of the system. If governance becomes too fragmented, decision-making may become slow and inefficient.

Another aspect that deserves attention is usability for developers and operators. Even if the technical architecture works well, adoption depends on how easily people can build on top of it. Integrating robotics systems with decentralized infrastructure could introduce complexity that developers may not be prepared to manage. The success of the ecosystem may depend partly on how well the protocol simplifies these interactions.

What I find interesting about Fabric Protocol is that it does not present itself as a finished answer to these challenges. Instead, it seems to be experimenting with a new coordination model where machines, software agents, and human operators interact through shared computational rules and economic incentives.

Whether that model works at scale is still an open question. Robotics systems operate in environments that are far less predictable than purely digital networks. Economic incentives can shape behavior, but they also introduce strategic decision-making among participants. Governance structures can evolve, but they must remain credible during moments of disagreement or stress.

For me, studying Fabric Protocol feels less like reviewing a completed product and more like watching the early development of a new kind of infrastructure. It attempts to connect robotics, decentralized computation, and economic incentives into a single system designed for coordination rather than control.

If those pieces eventually align in practice, the network could provide a framework for machines to collaborate across organizations and environments without relying entirely on centralized authorities. But that outcome will depend on whether verification mechanisms, economic incentives, and governance structures can remain balanced as the network grows.

The real test for Fabric Protocol will be whether its design can maintain transparency, fair participation, and reliable machine coordination once the complexity of real-world operations begins to interact with the economic and technical layers of the network.
