Most people already use systems that check whether something is true. For example, a weather app checks several sources before showing the forecast for tomorrow. A trading platform checks prices from several places before showing the value of an asset. The person using it only sees the number. Behind that number there is a process to make sure it is correct.

Something like this is happening with artificial intelligence. As language models generate more information, people start to wonder how we know that information is true. That is where networks like Mira Network come in. They do not look like machine learning systems. They look like something that helps us trust the information.

In blockchain systems there is something called an oracle. It is a service that brings information from outside the blockchain into the blockchain. The blockchain cannot see the outside world on its own. It cannot check stock prices or sports results. The oracle solves this by gathering information from several sources and comparing it. Then it publishes a version that the network can trust.
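The gather-and-compare step can be sketched in a few lines. This is only an illustration, not how any particular oracle network works: the function name, the 5% outlier threshold, and the sample prices are all assumptions made for the example.

```python
from statistics import median

def aggregate_reports(reports, max_deviation=0.05):
    """Combine several source reports into one published value.

    reports: list of floats, one per source (hypothetical data).
    max_deviation: relative distance from the median beyond which a
    report is treated as an outlier and dropped (assumed threshold).
    """
    if not reports:
        raise ValueError("at least one report is required")
    mid = median(reports)
    # Keep only the reports that sit close to the median.
    kept = [r for r in reports if abs(r - mid) <= max_deviation * abs(mid)]
    # If every report is far from the median, fall back to the median itself.
    return median(kept) if kept else mid

# Three sources roughly agree, one is far off and gets ignored.
price = aggregate_reports([100.2, 100.0, 99.9, 250.0])
```

A median is used here because one wrong source cannot drag it far, which is the property the oracle idea depends on.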

Artificial intelligence verification networks face a similar problem. Language models make statements all the time. They might say a company got funding or launched a product. These statements sound confident. That does not mean they are correct. The hard part is not generating the text. The hard part is checking if each statement is true.

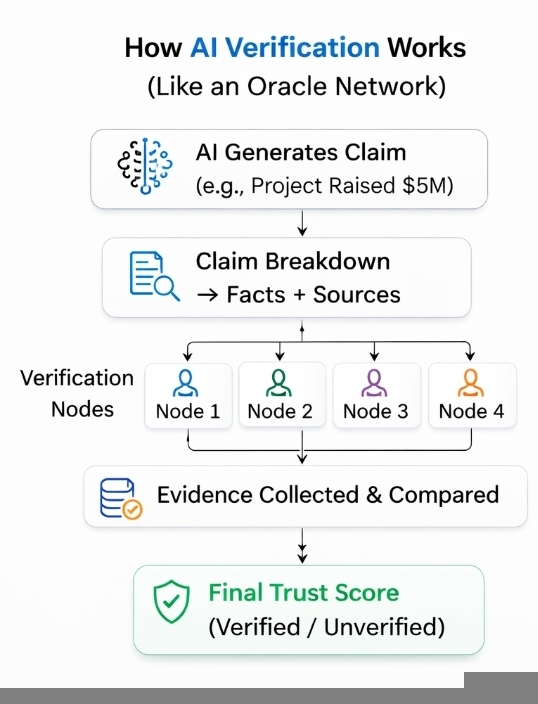

This problem is similar to the oracle model. Instead of asking a single neural network to figure out the truth, verification networks break a statement down into smaller claims. Each claim can be checked separately. One participant might check a funding number. Another might check a launch date. Someone else might compare the statement to records. Then the network combines these checks to see if the statement is reliable.
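A minimal sketch of that break-down-and-combine idea follows. The `Check` record, the two-thirds threshold, and the rule that every claim must pass are assumptions chosen for illustration. They are not taken from Mira Network's actual design.

```python
from dataclasses import dataclass

@dataclass
class Check:
    claim: str       # one small, checkable part of the statement
    verifier: str    # hypothetical verifier id
    supported: bool  # True if the verifier found supporting evidence

def claim_support(checks):
    """Return the fraction of checks that support each claim."""
    totals, yes = {}, {}
    for c in checks:
        totals[c.claim] = totals.get(c.claim, 0) + 1
        yes[c.claim] = yes.get(c.claim, 0) + (1 if c.supported else 0)
    return {claim: yes[claim] / totals[claim] for claim in totals}

def statement_passes(checks, threshold=2 / 3):
    """A statement passes only if every one of its claims meets the threshold."""
    support = claim_support(checks)
    return bool(support) and all(s >= threshold for s in support.values())

# Hypothetical checks for a statement with two claims.
checks = [
    Check("funding amount", "v1", True),
    Check("funding amount", "v2", True),
    Check("funding amount", "v3", True),
    Check("launch date", "v1", True),
    Check("launch date", "v2", False),
    Check("launch date", "v3", False),
]
result = statement_passes(checks)  # the launch date claim fails, so the statement fails
```

Requiring every claim to pass is a strict choice. A real network could just as well report per-claim scores and let the reader decide.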

In simple terms, the system is not like a model that knows things. It is like a network that gathers evidence.

Mira Network is trying to do this for artificial intelligence verification. Instead of trying to build a perfect model, it builds a system where many participants review and evaluate statements made by artificial intelligence systems. The goal is not to find absolute truth. It is to see whether a statement passes a verification process.

This idea is not new in the blockchain world. Oracle networks already show that verification can work when people have the right incentives. People gather information, compare it, and get rewards for being accurate. Over time, people who provide accurate information gain trust. People who provide inaccurate information lose trust.
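One simple way to picture trust being gained and lost over time is a moving average of past accuracy. The sketch below is an assumption for illustration only. The starting score of 0.5, the step size, and the function name are made up, and real networks typically tie reputation to staking and rewards as well.

```python
def update_reputation(reputation, verifier, was_accurate, step=0.1):
    """Nudge a verifier's score toward 1.0 when accurate, toward 0.0 otherwise.

    reputation: dict mapping verifier id to a score between 0 and 1.
    step: how strongly one result moves the score (assumed value).
    """
    current = reputation.get(verifier, 0.5)  # unknown verifiers start neutral
    target = 1.0 if was_accurate else 0.0
    reputation[verifier] = current + step * (target - current)
    return reputation[verifier]

reputation = {}
for outcome in [True, True, True]:
    update_reputation(reputation, "careful", outcome)
for outcome in [False, False, False]:
    update_reputation(reputation, "sloppy", outcome)
# "careful" now scores above 0.5 and "sloppy" below it.
```

Because each update only moves the score part of the way, a single lucky or unlucky result does not decide a verifier's standing.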

What is interesting is how similar this looks when applied to artificial intelligence outputs.

The difference is that artificial intelligence statements can be complex. A statement about a project's roadmap or funding history might require reading documents or checking records. The verification process is slower and more complicated than checking a price.

This has both good and bad sides. On the good side, decentralized verification means many people are responsible for checking the information. Instead of relying on one model or one company, many people can evaluate the same statement. When the incentives are good, people are rewarded for being accurate.

The downside is that the system also depends on how participants behave together. Oracle networks already have problems with people working together to submit incorrect information. Verification networks might have the same problems. If many people follow the same source without checking it, the system might look decentralized but still make the same mistake.
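The "looks decentralized but is not" risk can be shown with a small example that counts support once per distinct source instead of once per verifier. The vote format, the source labels, and the rule that a source only counts as supporting when all its citers agree are hypothetical choices for this sketch.

```python
from collections import defaultdict

def support_by_source(votes):
    """Count support once per distinct source rather than once per verifier.

    votes: list of (verifier, source, supported) tuples (hypothetical format).
    Returns (supporting_sources, total_sources).
    """
    by_source = defaultdict(list)
    for _verifier, source, supported in votes:
        by_source[source].append(supported)
    # A source counts as supporting only if every verifier citing it agrees.
    supporting = sum(1 for flags in by_source.values() if all(flags))
    return supporting, len(by_source)

votes = [
    ("v1", "press-release", True),
    ("v2", "press-release", True),
    ("v3", "press-release", True),
    ("v4", "registry-filing", False),
]
# Counting heads, three of four verifiers support the statement.
raw_support = sum(1 for _, _, ok in votes if ok) / len(votes)
# Counting sources, only one of two independent sources supports it.
supporting, total = support_by_source(votes)
```

The gap between the two numbers is the point: three verifiers repeating one source add far less evidence than the head count suggests.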

Another challenge appears when verification is visible to the public. Platforms use dashboards and metrics to decide which information to show. If a verification network produces credibility scores, those scores might influence how people read the information. A statement that is labeled as verified might get more attention.

This influence is powerful. It changes how people behave.

Writers might start writing in ways that maximize verification scores. Analysts might prefer statements that are easy to verify rather than complex arguments. Over time, the verification layer does more than just confirm information. It guides how information is made in the first place.

I wonder if this is the real role of these systems. Not just checking truth, but shaping how information is made.

In that sense, comparing artificial intelligence verification networks to oracle systems feels correct. Both are about coordinating trust. The model might make the first draft of reality, but the network decides what is believable.

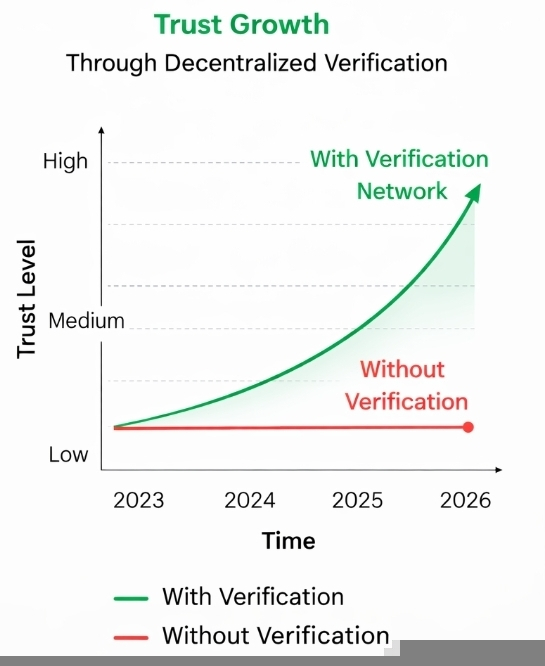

This also explains why verification networks might become more important as artificial intelligence improves. Better models will make more convincing statements, but not necessarily more accurate ones. The number of statements will increase faster than any single system can check.

At that point the problem is not about machine learning. It is about infrastructure. A network that organizes verification might be as important as the model that makes the information.

Whether Mira Network succeeds is still uncertain. Designing incentives for verification has always been hard. Still, the direction is interesting. It suggests that the future of artificial intelligence might depend less on perfect models and more on systems that compare, question, and confirm what those models say.

If that is true, artificial intelligence verification might feel less like artificial intelligence and more like the slow work of an oracle network.