I was experimenting with a few AI prompts recently while researching something, and one response looked perfect at first.

Clear explanation.

Confident tone.

Even a reference at the end.

Then I tried to open the source.

It didn’t exist.

Not completely fake… just slightly wrong. And that’s the strange thing about modern AI systems. They are extremely good at producing answers that sound reliable even when a detail inside the response is inaccurate.

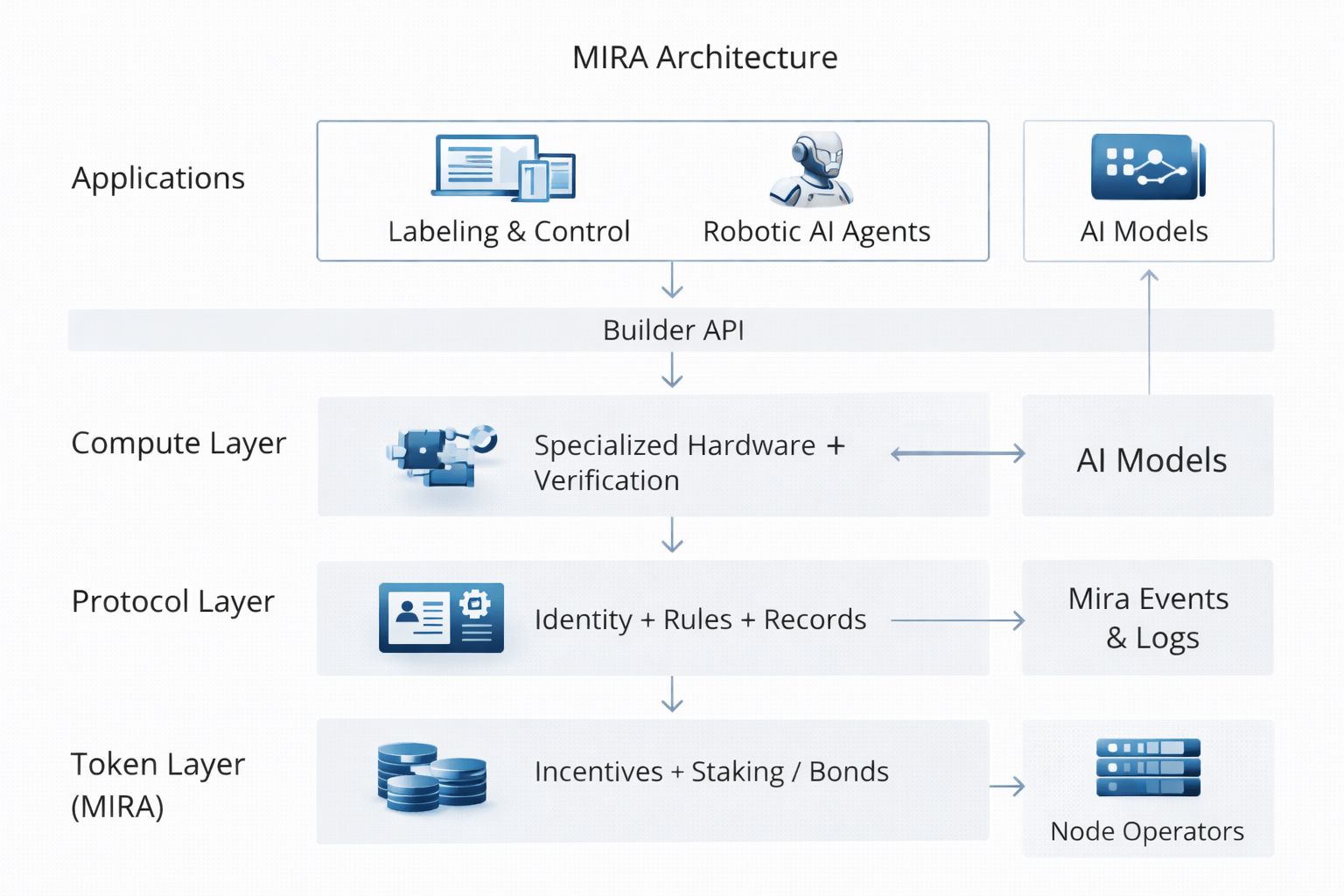

That’s the problem Mira Network is trying to address.

Most AI projects today focus on “making models smarter”. Bigger datasets, more parameters, faster inference. The assumption is that better intelligence will eventually solve reliability.

Mira takes a different approach.

Instead of assuming models will become perfect, the protocol focuses on “verifying the information those models generate”.

And honestly that feels like a more realistic way to think about AI.

The way Mira works is actually interesting once you understand the mechanics.

When an AI produces an output, the system doesn’t treat the response as one piece of information. Instead, the output can be broken into “individual claims”.

A statistic.

A statement.

A reference.

Each of those claims can be verified independently.
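To make that concrete, here is a rough Python sketch of what splitting a response into claims could look like. It is a toy and not Mira’s actual method: the `Claim` type, the `split_into_claims` function, and the keyword rules for telling a statistic from a reference are all my own assumptions for illustration.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    kind: str  # "statistic", "reference", or "statement" (hypothetical labels)
    text: str


def split_into_claims(response: str) -> list[Claim]:
    """Split a response into sentence-level claims and label each one.

    Assumption: one sentence equals one claim. A real system would need
    something far more careful than a regex.
    """
    # Split after ., ! or ? when followed by whitespace.
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", response) if s.strip()]
    claims = []
    for sentence in sentences:
        if re.search(r"https?://|\bSource:", sentence):
            kind = "reference"   # looks like a citation or link
        elif re.search(r"\d", sentence):
            kind = "statistic"   # contains a number
        else:
            kind = "statement"   # everything else
        claims.append(Claim(kind, sentence))
    return claims


# Placeholder response; the URL is a dummy, not a real source.
response = (
    "The sky appears blue. "
    "About 71% of Earth is covered by water. "
    "Source: https://example.com/oceans"
)
for claim in split_into_claims(response):
    print(claim.kind, "|", claim.text)
```

The point is only that each piece ends up as its own object, so it can be checked on its own.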

Those claims are then distributed across a network of validators. Some validators might be other AI systems while others could be specialized models designed to evaluate certain types of information.

Instead of trusting one model’s answer, the network looks for “agreement across multiple validators”.

If enough independent validators confirm the same result, the claim becomes verified and the outcome is recorded through blockchain consensus.
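The agreement step can be sketched in a few lines of Python. Everything here is an assumption on my part: the `verify_claim` name, modelling validators as plain functions that return `True` or `False`, and especially the two-thirds threshold, since I don’t know how much agreement counts as “enough” in Mira. The on-chain recording is left out entirely.

```python
from fractions import Fraction
from typing import Callable, Sequence

# Hypothetical validator interface: takes a claim, returns True if it confirms it.
Validator = Callable[[str], bool]


def verify_claim(
    claim: str,
    validators: Sequence[Validator],
    threshold: Fraction = Fraction(2, 3),  # assumed quorum, not Mira's real value
) -> bool:
    """Return True when the share of confirming validators meets the threshold."""
    if not validators:
        raise ValueError("at least one validator is required")
    confirmations = sum(1 for validate in validators if validate(claim))
    # Fractions keep the comparison exact (no floating-point rounding).
    return Fraction(confirmations, len(validators)) >= threshold


# Three stand-in validators: two confirm, one rejects.
validators = [lambda c: True, lambda c: True, lambda c: False]
print(verify_claim("About 71% of Earth is covered by water.", validators))
```

With two of three validators confirming, the claim just meets the assumed two-thirds bar. Flip one more vote and it fails.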

That small change actually shifts the trust model around AI quite a bit.

Right now, when we read an AI response, we act as the verification layer ourselves. We open extra tabs, check sources, compare answers between models, and try to decide what’s correct.

Mira moves that verification process “into the protocol itself”.

Validators are incentivized to review claims carefully because accurate verification can earn rewards, while incorrect validation can lead to penalties.
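Here is one way that incentive could work, as a small Python sketch. The assumptions are mine: validators hold a stake, “accurate” means voting with the final outcome, and the `settle` function with its reward and penalty amounts is made up for illustration. Mira’s real reward and penalty rules may look nothing like this.

```python
def settle(
    stakes: dict[str, float],
    votes: dict[str, bool],
    outcome: bool,
    reward: float = 1.0,    # assumed payout for matching the outcome
    penalty: float = 2.0,   # assumed deduction for voting against it
) -> dict[str, float]:
    """Return updated stakes after one claim has been settled.

    Validators who voted with the outcome gain `reward`; the rest lose
    `penalty`, with stakes floored at zero.
    """
    updated = dict(stakes)  # leave the caller's dict untouched
    for validator, vote in votes.items():
        if vote == outcome:
            updated[validator] += reward
        else:
            updated[validator] = max(0.0, updated[validator] - penalty)
    return updated


stakes = {"a": 10.0, "b": 10.0, "c": 1.0}
votes = {"a": True, "b": False, "c": False}
print(settle(stakes, votes, outcome=True))
```

Making the penalty larger than the reward is a design choice in this sketch: it makes careless confirmation more expensive than careful review is profitable.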

Over time, the system turns AI outputs into something closer to “verifiable information instead of confident guesses”.

What makes this idea interesting to me is where AI seems to be heading.

Today, AI mostly works like an assistant. You read the answer and decide what to do with it. But new AI agents are already starting to automate tasks across finance, research, and digital infrastructure.

In those environments, even small mistakes can matter a lot more.

That’s why verification might end up being just as important as intelligence itself.

Mira’s core idea is simple but powerful.

AI systems will keep generating information. But a decentralized network should determine “whether that information can actually be trusted”.

And after seeing another confident but slightly wrong AI answer recently… that idea suddenly feels a lot more necessary.