I’ll explain it the same way I described it to a colleague while reviewing our system logs: AI models are great at producing answers, but they are surprisingly bad at proving those answers should be trusted. That realization is the reason we started experimenting with @Mira - Trust Layer of AI as a verification layer in our pipeline.

Our team runs an internal analytics tool where large language models generate short reports about on-chain activity patterns. The outputs look convincing most of the time. Too convincing, actually. Early audits showed roughly 86% of generated claims were accurate, but the rest contained subtle errors: wrong correlations, exaggerated trends, or statements that sounded confident without solid data behind them. That’s where the idea of testing the $MIRA verification layer came in.

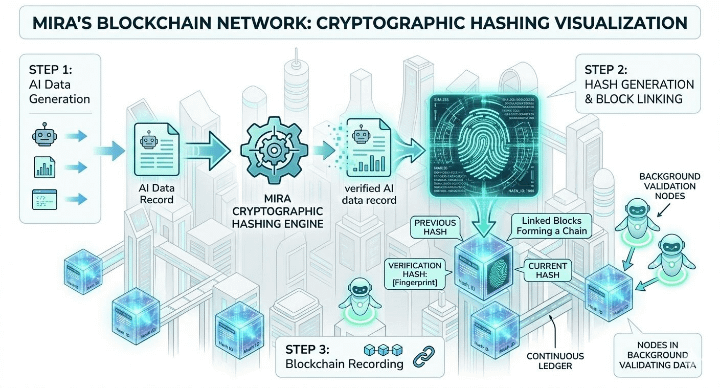

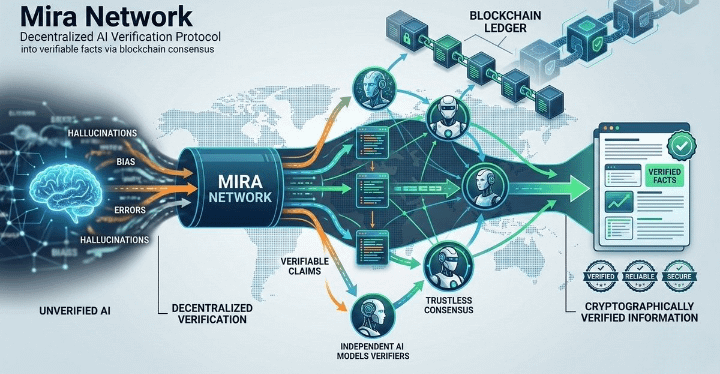

Instead of sending AI outputs directly to our dashboards, we placed Mira between generation and consumption. Architecturally, the model produces structured claims first. Each claim is hashed and submitted to the Mira Dynamic Validator Network. Independent validators analyze the claim using different evaluation strategies, and a decentralized consensus score is returned before the claim moves further in the pipeline.
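Here is a minimal sketch of what the hashing step could look like. The claim fields, the canonical-JSON serialization, and the choice of SHA-256 are assumptions for illustration only. The actual claim schema and submission format used by the network are not shown here.

```python
import hashlib
import json


def claim_digest(claim: dict) -> str:
    """Return a deterministic SHA-256 hex digest for a structured claim.

    Assumption: claims are plain JSON-serializable dicts. The field names
    used below are hypothetical and not part of any real schema.
    """
    # Sorting keys and fixing the separators gives a canonical serialization,
    # so logically equal claims hash identically regardless of key order.
    canonical = json.dumps(
        claim, sort_keys=True, separators=(",", ":"), ensure_ascii=False
    )
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Two claims with the same content but different key order.
claim_a = {"subject": "wallet_cluster_17", "metric": "tx_volume", "trend": "up"}
claim_b = {"trend": "up", "metric": "tx_volume", "subject": "wallet_cluster_17"}

digest_a = claim_digest(claim_a)
digest_b = claim_digest(claim_b)
```

Hashing a canonical form matters because the digest doubles as a stable identifier: the same claim produced twice maps to the same key, which makes deduplication and caching straightforward later in the pipeline.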

The first thing I noticed was how the validator distribution works in practice. The network doesn’t rely on a single verification node. Validators are dynamically selected, which reduces the risk of a single biased evaluator dominating the result. In our early test runs we observed consensus forming from roughly 6–10 validators per claim. That diversity mattered more than I initially expected.

Latency was the first operational concern. During the first week our average verification time was around 470 milliseconds per claim. That added noticeable overhead because a single report can contain multiple independent claims. After optimizing the request batching and caching validator responses, we reduced that to about 390 milliseconds on average. Not instant, but acceptable for our use case.
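The batching and caching idea can be sketched roughly as follows. This is an illustration under stated assumptions, not the network's client: `verify_batch` stands in for whatever call submits a group of claim digests and returns one consensus score per digest, and `CachedVerifier`, `batch_size`, and `fake_verify_batch` are hypothetical names.

```python
from typing import Callable, Dict, Iterable, List


def batched(items: List[str], size: int) -> Iterable[List[str]]:
    """Yield consecutive chunks of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]


class CachedVerifier:
    """Wrap a batch verification call with an in-memory cache.

    Assumption: `verify_batch` returns a score for every digest it is given.
    """

    def __init__(
        self,
        verify_batch: Callable[[List[str]], Dict[str, float]],
        batch_size: int = 8,
    ) -> None:
        self._verify_batch = verify_batch
        self._batch_size = batch_size
        self._cache: Dict[str, float] = {}
        self.remote_calls = 0  # counts round trips, useful for measuring savings

    def verify(self, digests: List[str]) -> Dict[str, float]:
        # Deduplicate while preserving order, then skip anything already cached.
        missing = [d for d in dict.fromkeys(digests) if d not in self._cache]
        for chunk in batched(missing, self._batch_size):
            self.remote_calls += 1
            self._cache.update(self._verify_batch(chunk))
        return {d: self._cache[d] for d in digests}


def fake_verify_batch(chunk: List[str]) -> Dict[str, float]:
    """Stand-in for the remote call; returns a fixed score per digest."""
    return {digest: 0.9 for digest in chunk}


verifier = CachedVerifier(fake_verify_batch, batch_size=2)
first = verifier.verify(["a", "b", "c", "a"])  # three unique digests, two batches
calls_after_first = verifier.remote_calls
second = verifier.verify(["a", "c"])           # served entirely from the cache
calls_after_second = verifier.remote_calls
```

A real deployment would also need cache expiry, since a cached score can go stale when the underlying data changes. That part is left out of the sketch.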

What made the experiment interesting was the disagreement between AI confidence and validator consensus. Roughly 12% of claims that our model labeled “high confidence” received only moderate consensus scores from the Mira network. When we manually reviewed those cases, most involved inference leaps: the model had connected two data points that were statistically related but not shown to be causally linked. Our internal rule checks didn’t catch that nuance.
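A check for this kind of mismatch can be sketched in a few lines. The `ScoredClaim` type, the 0 to 1 score scale, and both cutoffs are assumptions chosen for illustration; the post does not state the actual scale or thresholds.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class ScoredClaim:
    text: str
    model_confidence: float  # model's self-reported confidence, assumed 0..1
    consensus_score: float   # validator consensus score, assumed 0..1


def is_overconfident(
    claim: ScoredClaim,
    high_confidence: float = 0.9,   # hypothetical cutoff for "high confidence"
    strong_consensus: float = 0.8,  # hypothetical cutoff for strong consensus
) -> bool:
    """True when the model is confident but validators are only lukewarm."""
    return (
        claim.model_confidence >= high_confidence
        and claim.consensus_score < strong_consensus
    )


def overconfident_share(claims: List[ScoredClaim]) -> float:
    """Fraction of high-confidence claims that lack strong consensus."""
    confident = [c for c in claims if c.model_confidence >= 0.9]
    if not confident:
        return 0.0
    return sum(is_overconfident(c) for c in confident) / len(confident)


sample = [
    ScoredClaim("Volume rose week over week", 0.95, 0.92),
    ScoredClaim("Whale inflows caused the price move", 0.93, 0.61),
    ScoredClaim("Activity may be seasonal", 0.55, 0.58),
]
flagged = [c.text for c in sample if is_overconfident(c)]
share = overconfident_share(sample)
```

In this made-up sample only the causal claim is flagged, which mirrors the pattern described above: the statement sounds certain, but the validators do not back it to the same degree.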

Another experiment we ran involved comparing three workflows: AI-only verification, centralized rule validation, and AI combined with the decentralized validation layer from @Mira - Trust Layer of AI. Over a two-week window we processed about 18,000 individual claims. The decentralized approach reduced correction events by around 17% compared with the AI-only pipeline. Centralized validation performed reasonably well too, but it lacked transparency about how decisions were reached.

Of course, the system isn’t perfect. Validators sometimes disagree widely when a claim contains ambiguous language or incomplete evidence. When consensus variance exceeded our threshold, we routed those claims into a manual review queue. This happened in roughly 4% of cases. It’s manageable, but it highlights something important: decentralized consensus measures agreement, not absolute truth.
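The routing rule can be sketched as below, assuming each claim comes back with a list of per-validator scores on a 0 to 1 scale. The threshold value, the route labels, and the use of population variance are illustrative assumptions, not the configuration described above.

```python
from statistics import mean, pvariance
from typing import Dict, List, Union


def route_claim(
    validator_scores: List[float],
    variance_threshold: float = 0.04,  # hypothetical threshold
) -> Dict[str, Union[str, float]]:
    """Decide whether a claim proceeds automatically or goes to manual review."""
    # With fewer than two scores there is no meaningful measure of agreement.
    if len(validator_scores) < 2:
        return {"route": "manual_review", "mean": 0.0, "variance": 0.0}

    spread = pvariance(validator_scores)
    average = mean(validator_scores)
    # High variance means validators disagree, so a human should take a look.
    route = "manual_review" if spread > variance_threshold else "auto"
    return {"route": route, "mean": average, "variance": spread}


agreeing = route_claim([0.80, 0.82, 0.79, 0.81, 0.80, 0.83])
split = route_claim([0.10, 0.90, 0.20, 0.95, 0.50, 0.60])
```

Note that a low-variance result only says the validators agree with each other. A tightly clustered low score is still routed as `auto` here, so downstream code would need to read the mean as well before treating a claim as trustworthy.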

One architectural tradeoff we debated was validator diversity versus response speed. Increasing the number of validators improved confidence in the consensus score but also increased latency slightly. In the end we settled on a mid-range configuration because reliability mattered more than shaving a few milliseconds from the pipeline.

Another subtle benefit appeared over time. Because every verification result includes a confidence gradient rather than a simple pass/fail outcome, our team started interpreting AI outputs differently. Instead of blindly trusting high-confidence statements, engineers began looking at the distribution of validator scores. That shift in mindset turned out to be valuable.

After running the system for a while, my perspective on AI reliability changed a bit. The Dynamic Validator Network from @Mira doesn’t magically eliminate mistakes, and it doesn’t replace human oversight. What it does provide is a structured way to challenge AI claims before they quietly propagate through automated systems.

Working with $MIRA reminded me of something engineers often forget: the problem with AI isn’t just generating information; it’s knowing when that information deserves trust. Decentralized verification doesn’t solve the entire problem, but it introduces accountability into a process that used to rely mostly on assumptions.

And in complex AI systems, that small shift from assumption to measurable consensus can make a bigger difference than it first appears.