Most people don’t think about machine accountability until something unusual happens. As machines quietly move deeper into everyday life, an important question is slowly coming into focus: who verifies what machines actually do? 🤖

A delivery robot bumps into a curb, an AI assistant produces a confusing answer, or an automated system records the wrong data. In those moments, the discussion quickly shifts from the mistake itself to a deeper concern — who is responsible, and how can anyone verify what really happened?

This is where the idea behind Fabric Foundation and its $ROBO ecosystem begins to stand out. The project is exploring a new type of infrastructure focused on machine governance. Instead of relying solely on internal logs controlled by a single company, Fabric proposes a system where machine actions can be recorded, verified, and confirmed by multiple independent participants on a network.

In traditional systems, a company builds the machine, runs the software, and stores the data logs. If something goes wrong, people must trust that company’s records. But as machines become more autonomous — from delivery robots to AI-driven tools — that centralized trust model becomes harder to maintain.

Fabric’s approach introduces a different concept: verifiable machine activity.

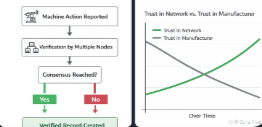

In simple terms, when a machine performs an action, it reports what it did to the network. Independent nodes — computers operated by different participants — check whether the claim is valid. If enough of these nodes confirm the information, the event becomes part of a shared, verifiable record.
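To make the flow concrete, here is a minimal Python sketch of the report, verify, and confirm steps. It is only an illustration under stated assumptions, not Fabric's actual protocol: the names `ActionClaim`, `confirm_claim`, `record_if_confirmed`, and the `min_approvals` threshold are all hypothetical, and each "node" is reduced to a simple function that returns true or false.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass(frozen=True)
class ActionClaim:
    """A machine's report of something it did (hypothetical structure)."""
    machine_id: str
    action: str
    details: Dict[str, float] = field(default_factory=dict)


# A verifier stands in for one independent node: it inspects a claim
# and returns True if the claim looks valid to that node.
Verifier = Callable[[ActionClaim], bool]


def confirm_claim(claim: ActionClaim, verifiers: List[Verifier],
                  min_approvals: int) -> bool:
    """Return True if at least `min_approvals` nodes accept the claim."""
    approvals = sum(1 for verify in verifiers if verify(claim))
    return approvals >= min_approvals


def record_if_confirmed(ledger: List[ActionClaim], claim: ActionClaim,
                        verifiers: List[Verifier], min_approvals: int) -> bool:
    """Append the claim to the shared record only when enough nodes agree."""
    if confirm_claim(claim, verifiers, min_approvals):
        ledger.append(claim)
        return True
    return False


# Example: a robot reports a delivery and three nodes check it.
claim = ActionClaim("robot-7", "deliver_package", {"distance_km": 1.2})
verifiers: List[Verifier] = [
    lambda c: c.action == "deliver_package",
    lambda c: c.details.get("distance_km", 0.0) < 5.0,
    lambda c: c.machine_id.startswith("drone-"),  # this node rejects the claim
]
ledger: List[ActionClaim] = []
confirmed = record_if_confirmed(ledger, claim, verifiers, min_approvals=2)
```

In this sketch two of the three nodes accept the claim, so it meets the threshold of two and lands in the shared record. A real network would also need signed reports, node identities, and a tamper-resistant ledger, none of which are modeled here.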

This turns the record of machine activity into something closer to public infrastructure than private data.

The idea is somewhat similar to how cloud computing changed access to digital infrastructure years ago. Platforms like Amazon Web Services made computing power widely accessible through shared systems. Fabric is attempting something conceptually similar, but focused on governance and verification for machines.

Instead of asking users to blindly trust a device manufacturer or software provider, the system moves toward network-based accountability.

A robot reports its action.

Multiple nodes verify the claim.

The network confirms the result.

If successful, this model could quietly reshape how trust works in automated environments.

But technology alone is not enough. Systems like this depend heavily on incentives, transparency, and participation. Verification networks only work when participants are motivated to check and confirm information accurately.

Interestingly, similar behavioral dynamics already exist on platforms like Binance Square, where visibility, reputation signals, and community feedback influence how information spreads and how credibility is built. When verification affects reputation, participants naturally adjust their behavior.

Fabric appears to be applying that same principle to machines themselves.

If the system evolves successfully, the change might not be dramatic or highly visible at first. There may be no headlines or obvious breakthroughs. Instead, Fabric could become a quiet verification layer — a background network where machine actions are checked, validated, and recorded before disputes even begin.

In a future filled with autonomous devices, that invisible layer of accountability may become more important than people realize.

Because as automation expands, one question will always remain essential:

When a machine makes a decision, who verifies the truth behind it?

And if machines begin interacting with each other at scale, should their actions be trusted automatically — or should every important action be verified by a network first?