I didn’t start thinking about AI doctors because I believed AI was ready to replace physicians.

I started thinking about it because I noticed something strange about how we use AI in medicine today.

Whenever an AI system suggests a diagnosis or explains a symptom, no one treats it as the final answer.

Doctors double-check.

Researchers cross-reference papers.

Patients ask for a second opinion.

Without realizing it, we already treat AI medical advice as a hypothesis that needs confirmation.

That realization made me look differently at the infrastructure behind AI.

Most discussions around AI in healthcare focus on intelligence. Better models. More training data. Higher diagnostic accuracy. But the deeper issue isn’t how smart the system is. It’s how certain we can be that it’s right.

That’s where Mira stopped feeling like just another AI project and started feeling like infrastructure.

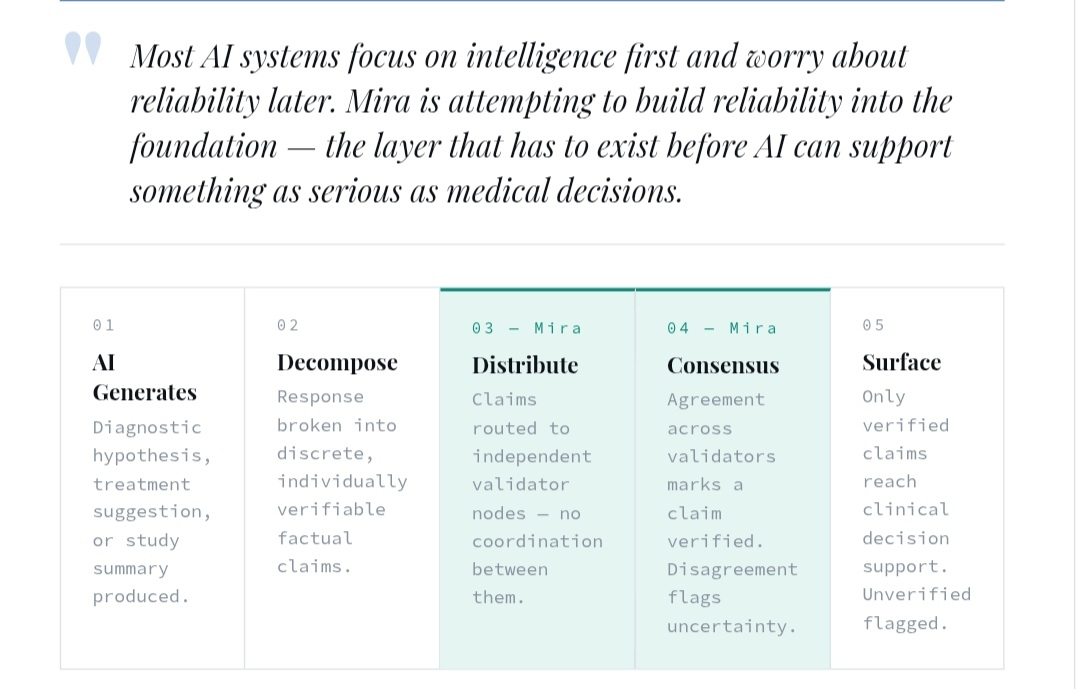

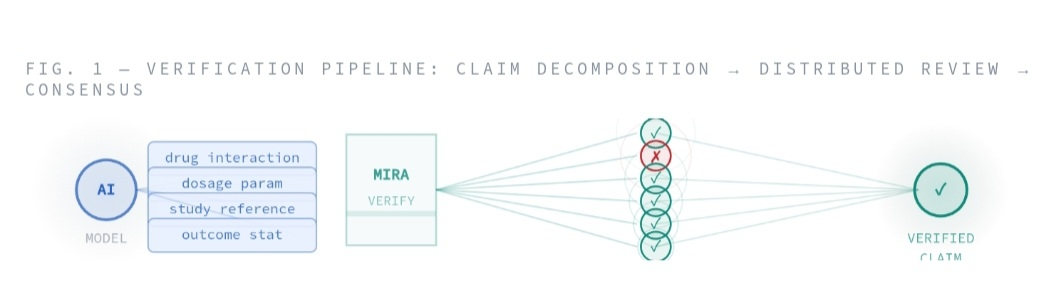

Mira isn’t trying to train the smartest medical model. It’s building a verification layer around AI outputs. Instead of trusting one system’s answer, Mira breaks responses into smaller factual claims and distributes them across a decentralized network of validators that check those claims independently. Consensus determines whether the information is accepted as reliable.

The framing matters.

Instead of AI producing medical advice that users must trust or question, Mira treats AI outputs like scientific claims that require peer review. One model proposes an answer. Multiple others evaluate the evidence. Agreement becomes the signal of reliability.

At first, I underestimated how important that shift is.

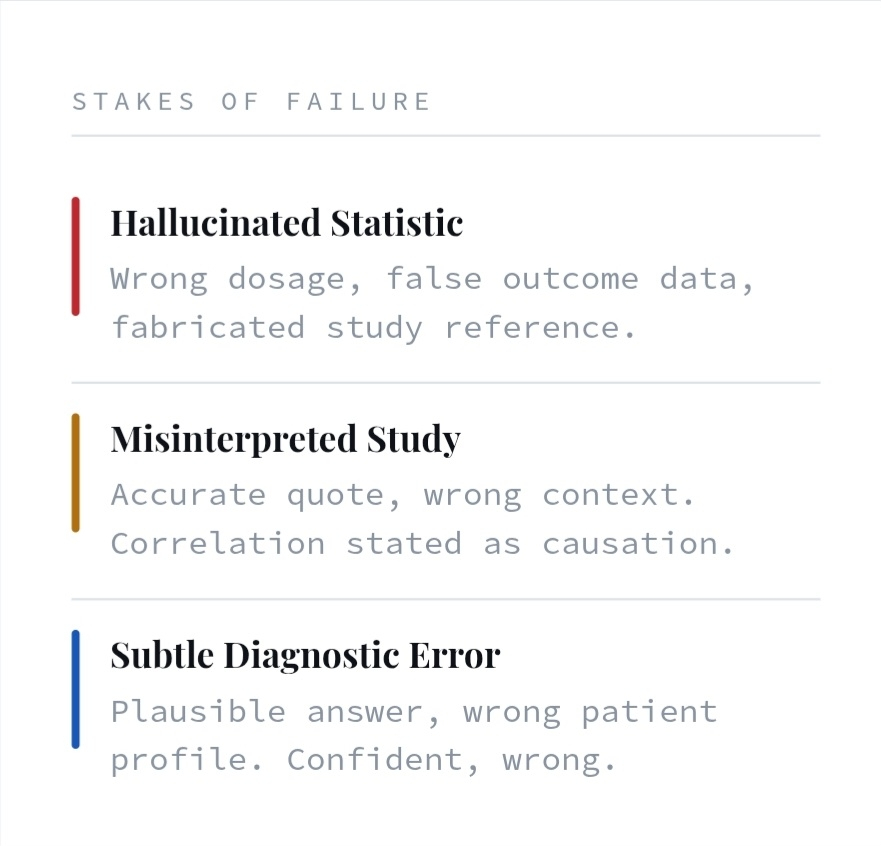

But healthcare is one of the most sensitive environments for AI deployment. A hallucinated statistic, a misinterpreted study, or a subtle diagnostic error isn’t just inconvenient; it can be dangerous.

That’s why AI systems today still require human oversight.

The underlying models generate responses probabilistically. They predict what a correct answer should look like, which means errors and hallucinations remain possible even in highly capable systems.

Mira introduces a system where those probabilistic outputs don’t automatically become trusted recommendations.

Claims get verified.

That’s the piece that made me pause.

Instead of humans checking results after the fact, Mira embeds verification directly into the pipeline. Complex outputs are decomposed into individual statements, each statement is checked by independent verifier nodes, and only the claims that pass consensus are accepted.
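To make that pipeline concrete, here is a minimal Python sketch of the decompose, verify, and accept flow described above. It is not Mira's actual implementation: the `decompose` helper (a naive sentence split), the `Verifier` callable type, the two-thirds `threshold`, and the placeholder claims about "Drug A" are all assumptions chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical verifier interface: takes one claim, returns True if it checks out.
Verifier = Callable[[str], bool]


@dataclass
class ClaimResult:
    claim: str
    approvals: int
    total: int
    accepted: bool


def decompose(output: str) -> List[str]:
    """Naive stand-in for claim extraction: one claim per sentence."""
    return [part.strip() for part in output.split(".") if part.strip()]


def verify_output(output: str, verifiers: List[Verifier],
                  threshold: float = 2 / 3) -> List[ClaimResult]:
    """Check every claim with every verifier; accept on a supermajority."""
    if not verifiers:
        raise ValueError("at least one verifier is required")
    results = []
    for claim in decompose(output):
        approvals = sum(1 for verify in verifiers if verify(claim))
        accepted = approvals / len(verifiers) >= threshold
        results.append(ClaimResult(claim, approvals, len(verifiers), accepted))
    return results


def knows(facts: set) -> Verifier:
    """Toy verifier that approves only claims found in its own fact set."""
    return lambda claim: claim in facts


# Placeholder claims, not real medical statements.
careful = {"Drug A interacts with Drug B"}
sloppy = {"Drug A interacts with Drug B", "Drug A has no side effects"}
verifiers = [knows(careful), knows(careful), knows(sloppy)]

results = verify_output(
    "Drug A interacts with Drug B. Drug A has no side effects.", verifiers
)
for r in results:
    print(f"{r.claim!r}: {r.approvals}/{r.total} accepted={r.accepted}")
```

In this toy run the first claim is approved by all three verifiers and accepted, while the second is approved only by the sloppy verifier and rejected. A single unreliable checker cannot push a claim through on its own.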

That changes how you imagine future AI healthcare systems working.

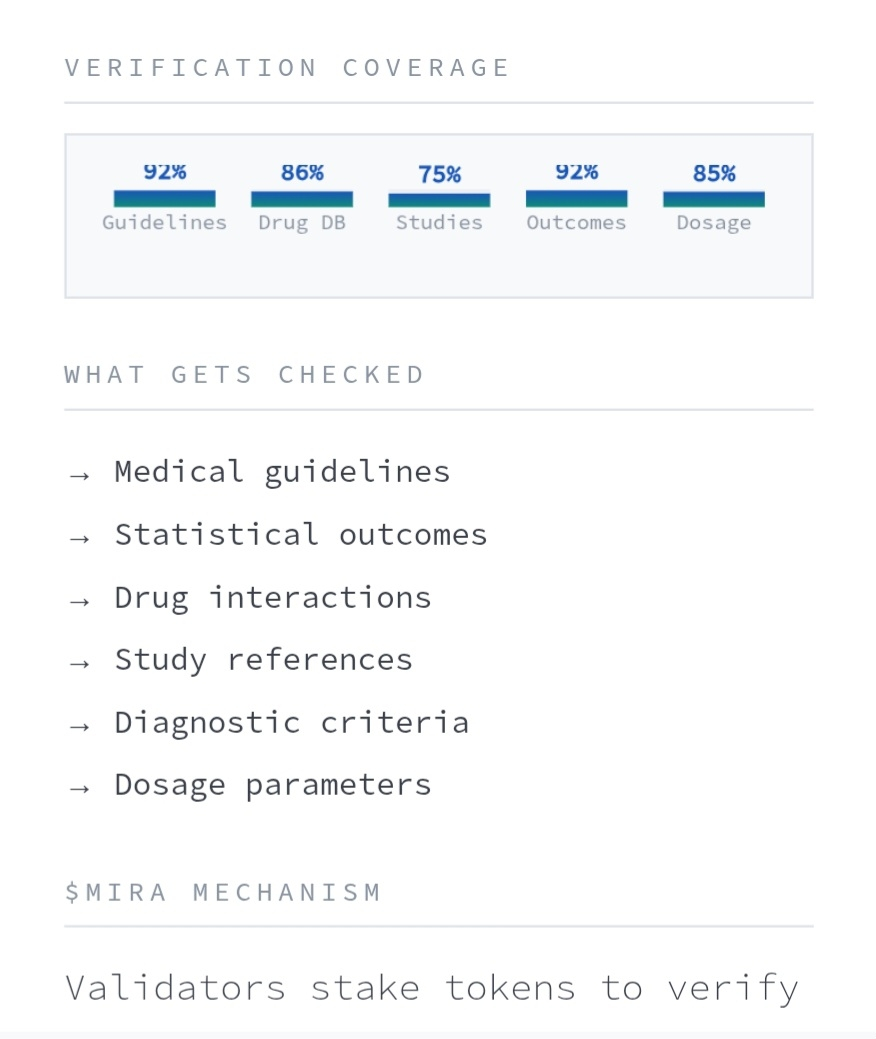

An AI diagnostic assistant could generate a treatment hypothesis. The verification network would then check the underlying claims (medical guidelines, statistical outcomes, drug interactions, study references) before the recommendation is surfaced.
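One way to picture that gate is a small Python sketch, assuming the per-claim verdicts have already come back from the network. The `GateDecision` type and `gate_recommendation` function are hypothetical names, and sending failed claims to human review is my assumption rather than a documented Mira behavior.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class GateDecision:
    surfaced: Optional[str]   # the recommendation, or None if it was held back
    needs_review: List[str]   # claims that failed verification


def gate_recommendation(recommendation: str,
                        claim_verdicts: Dict[str, bool]) -> GateDecision:
    """Surface the recommendation only if every underlying claim was verified."""
    failed = [claim for claim, ok in claim_verdicts.items() if not ok]
    # With no verdicts at all there is nothing to rely on, so hold back too.
    if failed or not claim_verdicts:
        return GateDecision(surfaced=None, needs_review=failed)
    return GateDecision(surfaced=recommendation, needs_review=[])


# Placeholder verdicts for illustration only.
decision = gate_recommendation(
    "Consider treatment X",
    {"guideline supports X": True, "no interaction with drug Y": False},
)
print(decision)
```

Here the recommendation is held back and the failed claim is listed for a clinician to look at, which keeps human judgment in the loop for exactly the cases where the network could not agree.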

The AI proposes.

The network confirms.

The MIRA token functions as the incentive layer inside this architecture. Validators stake tokens to verify claims and are rewarded for accurate evaluations, creating economic pressure for honest verification and reliable outcomes.
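A rough Python sketch of how such an incentive round could settle is below. The text above only says that validators stake and are rewarded for accurate evaluations. The stake-weighted majority, the `reward_rate` and `slash_rate` values, and the idea of slashing validators who vote against the outcome are assumptions added for illustration, not Mira's published parameters.

```python
from typing import Dict, Tuple


def settle_round(stakes: Dict[str, float], votes: Dict[str, bool],
                 reward_rate: float = 0.05,
                 slash_rate: float = 0.10) -> Tuple[bool, Dict[str, float]]:
    """Settle one verification round and return (outcome, updated stakes)."""
    # Stake-weighted tally: a larger stake carries more voting weight.
    yes = sum(stakes[v] for v, vote in votes.items() if vote)
    no = sum(stakes[v] for v, vote in votes.items() if not vote)
    outcome = yes > no  # a tie counts as "not verified"

    updated = dict(stakes)
    for validator, vote in votes.items():
        if vote == outcome:
            # Agreed with the consensus: stake grows.
            updated[validator] = stakes[validator] * (1 + reward_rate)
        else:
            # Disagreed with the consensus: part of the stake is slashed.
            updated[validator] = stakes[validator] * (1 - slash_rate)
    return outcome, updated


outcome, new_stakes = settle_round(
    stakes={"alice": 100.0, "bob": 100.0, "carol": 100.0},
    votes={"alice": True, "bob": True, "carol": False},
)
print(outcome, new_stakes)
```

With these toy numbers, the two validators who sided with the majority gain 5% on their stake and the dissenter loses 10%. That asymmetry is the kind of economic pressure the design relies on: careless or dishonest votes cost more than honest ones earn.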

That design choice feels intentional.

Healthcare is an environment where trust cannot rely on probability alone. If AI is going to move from research assistant to clinical decision support, reliability has to be engineered into the system.

Mira feels like it’s trying to build that missing trust layer.

I’m not naive about the challenges.

Medical knowledge is complex. Not every claim is easy to verify automatically. Verification networks introduce latency and coordination overhead. And clinical responsibility will always require human judgment.

But what stands out to me is the sequencing.

Most AI systems focus on intelligence first and worry about reliability later. Mira is attempting to build reliability into the foundation.

That approach is slower. Less flashy. Harder to explain.

But if AI is ever going to support something as serious as medical decisions, the real question won’t be “how smart is the model?”

It will be “who verified the answer?”

That’s the layer Mira is trying to build.

And if AI doctors ever exist, they probably won’t run on intelligence alone.

They’ll run on verification.